Next-Gen App & Browser Testing Cloud

Trusted by 2 Mn+ QAs & Devs to accelerate their release cycles

On This Page

- What Is AI Roadmap

- Strengthen Testing Foundation

- Programming and Scripting

- Test Automation Mastery

- AI and Machine Learning

- Prompt Engineering

- AI-Powered Testing Tools

- Testing AI Agents

- Autonomous Testing and Agentic AI

- MLOps, Observability, and Quality

- Enterprise-Grade AI Testing Skills

- Hands-On Portfolio Projects

- 12-Month AI Learning Plan

- Best Resources

- Home

- /

- Learning Hub

- /

- Complete AI Roadmap for Software Testers [2026]

Complete AI Roadmap for Software Testers [2026]

A phase-by-phase AI roadmap for software testers in 2026. Learn Python, test automation, ML fundamentals, prompt engineering, AI tools, and AI agent testing.

Salman Khan

March 8, 2026

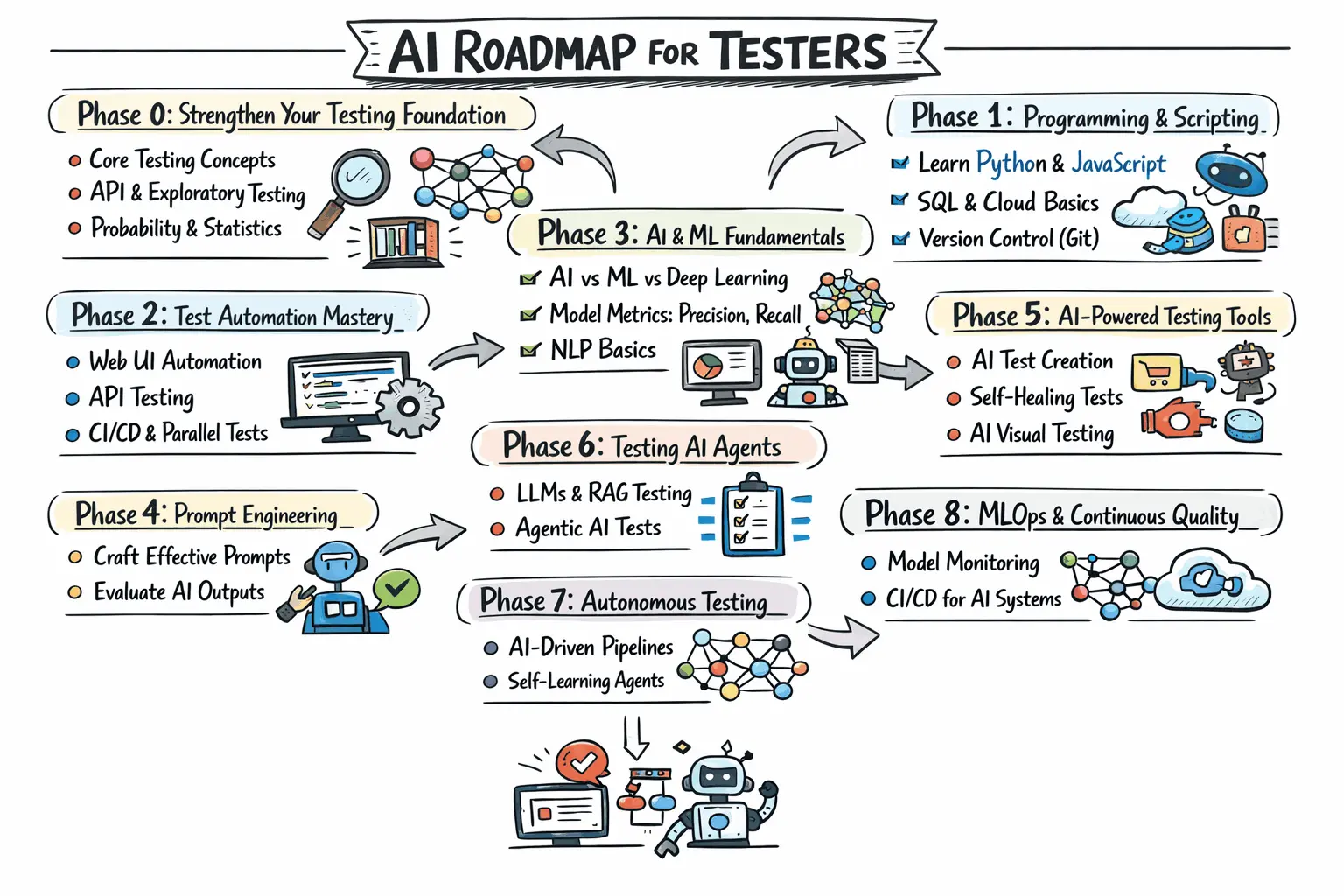

An AI roadmap for software testers lays out a clear, phase-by-phase path from testing fundamentals to Python and automation frameworks.

It then progresses into prompt engineering, LLM validation, AI-powered tools, and autonomous quality engineering, with defined timelines and hands-on projects.

Overview

What Is an AI Roadmap for Testers

A structured progression that guides QA professionals from core testing knowledge through advanced AI capabilities, organizing essential skills, tools, and methodologies into sequential stages that build on each other.

What Does the 2026 AI Testing Roadmap Cover?

The 2026 roadmap spans nine progressive phases that together form a comprehensive learning path from foundational testing through advanced AI quality engineering.

- Phase 0 - Testing Foundation: Solidify core test design techniques, testing types, API fundamentals, exploratory skills, and basic statistics needed for interpreting AI outputs.

- Phase 1 - Programming and Scripting: Gain practical proficiency in Python, JavaScript, SQL, cloud basics, and Git for writing test scripts and handling AI-generated code.

- Phase 2 - Test Automation Mastery: Build expertise in web UI frameworks, Page Object Model patterns, CI/CD integration, parallel execution, API automation, and performance testing.

- Phase 3 - AI and ML Fundamentals: Understand supervised versus unsupervised learning, evaluation metrics like BLEU and ROUGE, NLP basics, fine-tuning, and model assessment.

- Phase 4 - Prompt Engineering: Master role-based, chain-of-thought, few-shot, and constraint-based prompting techniques tailored for test generation and analysis.

- Phase 5 - AI-Powered Testing Tools: Get hands-on with AI-native test creation, self-healing locators, visual regression platforms, and predictive test analytics.

- Phase 6 - Testing AI Agents: Shift from using AI to validating it by testing LLM features, RAG pipelines, agentic AI, bias, adversarial inputs, and datasets.

- Phase 7 - Autonomous Testing: Implement AI-driven test selection in CI/CD, self-healing suites, exploratory agents, and multi-agent testing architectures.

- Phase 8 - MLOps and Observability: Set up model versioning, drift detection, evaluation pipelines, quality gates, canary rollouts, and production monitoring.

What Is an AI Roadmap for Software Testers

An AI roadmap for testers is a structured learning path mapping specific skills, tools, and concepts QA professionals need. It builds on existing testing expertise and progressively adds applied AI competencies.

The roadmap is divided into two distinct tracks that converge:

- Track A (Using AI for Testing): Leverage AI tools to write tests faster, generate data, and self-heal broken locators.

- Track B (Testing AI Agents): Validate applications like AI chatbots, recommendation engines, and LLM-based features for reliability.

Modern QA engineers need both tracks. As companies ship more AI features, the demand for testers who validate these systems grows exponentially.

Phase 0: Strengthen Your Testing Foundation

AI amplifies your existing testing skills. If those skills have gaps, AI will amplify the gaps too. Audit what you know and fill any holes.

Core Testing Concepts to Master

If you have 2+ years of testing experience, use this as a checklist rather than a study plan.

- Test Design Techniques: Equivalence partitioning, boundary value analysis, decision tables, and pairwise testing are essential foundations.

- Testing Types and Levels: Understand where unit, integration, system, and acceptance testing fit in the test pyramid.

- API Testing Fundamentals: REST APIs, HTTP methods, status codes, request/response validation, and authentication flows are backbone skills.

- Exploratory Testing: Your ability to think creatively and navigate uncharted paths is a competitive advantage AI cannot replicate.

- Bug Reporting and Root Cause Analysis: AI detects anomalies but a human tester identifies business impact and root cause.

Math Fundamentals (the Ones That Actually Matter for Testers)

You do not need calculus or linear algebra. You need basic statistics because AI outputs are probabilistic.

- Probability Basics: Understand confidence intervals, p-values, and what "95% accuracy" actually means in AI tool reports.

- Descriptive Statistics: Mean, median, standard deviation are essential for analyzing test results and AI evaluation scores.

- Confusion Matrix: True/false positives and negatives are how you evaluate any AI model's predictions effectively.

Milestone: You can design test cases using multiple techniques, explain the test pyramid, and interpret basic statistical measures.

Validate AI agents at scale with TestMu AI Agent to Agent testing. Try TestMu AI Now!

Phase 1: Programming and Scripting for Testers

You do not need to become a software engineer. You need enough coding comfort to write test scripts, manipulate data, and understand AI-generated code.

Python: Your Primary AI Language

Python is the lingua franca of AI/ML. Every major AI library and tool has Python support first. Learn in this order:

- Core Python (2 Weeks): Variables, control flow, functions, file I/O, and exception handling with testing-specific exercises.

- Data Structures (1 Week): Lists, dictionaries, sets, and tuples. JSON maps directly to Python dictionaries.

- Libraries for Testers (2 Weeks):

- requests: HTTP client for API testing

- pytest: Test framework with fixtures and parameterization. See the Selenium pytest tutorial

- pandas: Data manipulation for test data preparation and analysis

- json/os/pathlib: Parsing payloads and handling test artifacts

- OOP Basics (1 Week): Classes, objects, inheritance, encapsulation for Page Object Model patterns.

Dig deeper in the Python automation tutorial and Python for DevOps guide.

JavaScript/TypeScript: Your Secondary Language

If your team uses Playwright or Cypress, JavaScript is your automation language.

- ES6+ Syntax: Arrow functions, destructuring, async/await, and template literals for modern test scripts.

- Node.js Basics: npm, package.json, and the module system for framework configuration.

- Fetch/Axios and TypeScript: API interactions and type-safe test framework fundamentals.

SQL Fundamentals for Test Data and Validation

You will use SQL daily to validate backend data, prepare test datasets, and verify AI model predictions are stored correctly.

- SELECT Queries: JOINs, GROUP BY, and subqueries for verifying test data across tables.

- Data Manipulation: INSERT, UPDATE, DELETE statements for setting up and tearing down test state efficiently.

- Schema Understanding: Validate data integrity after AI processing pipeline execution.

- Pipeline Validation Queries: Extract training data quality metrics and validate AI pipeline outputs.

Cloud Basics (AWS, GCP, Azure)

AI testing runs on cloud infrastructure. You do not need certifications, but understand the basics impacting your testing work.

- Compute and Containers: EC2/GCE instances, Docker containers, and Kubernetes basics for running test pipelines.

- Storage and Databases: S3/GCS for test artifacts and RDS/Cloud SQL for managing test databases.

- AI/ML Services: AWS SageMaker, Google Vertex AI, Azure AI Studio where models are deployed.

- CI/CD in the Cloud: GitHub Actions, AWS CodePipeline, Google Cloud Build for running automated tests.

Version Control With Git

Non-negotiable. Learn branching, merging, pull requests, and resolving conflicts for managing test code and CI/CD pipelines.

Milestone: You can write a Python script that sends API requests, validates responses, queries a database, and runs in a cloud CI/CD pipeline.

Phase 2: Test Automation Mastery

AI-powered testing sits on top of automation. Without a solid automation foundation, AI tools will not magically give you one.

Web UI Automation

Choose one primary framework and learn it deeply. Here is how the major options compare for AI readiness:

- Selenium 4: Most widely used framework. AI-powered self-healing capabilities reduce test maintenance significantly.

- Playwright: Modern, fast, and reliable. Playwright AI integration is maturing rapidly for testers.

- Cypress: Developer-friendly with real-time reloading and time-travel debugging for JavaScript-heavy teams.

Key Automation Patterns to Learn

Master these patterns because every AI testing tool builds on them regardless of your chosen framework:

- Page Object Model (POM): Separates test logic from page structure. See Playwright POM and Cypress POM guides.

- Data-Driven Testing: Parameterize tests with external data sources. AI excels at generating the data itself.

- CI/CD Integration: Set up pipelines with GitHub Actions, Jenkins, or GitLab CI for automated test runs.

- Parallel Execution: Run tests across multiple browsers and devices simultaneously using local or cloud grid.

- Visual Regression Testing: AI-powered visual testing detects bugs that pixel-by-pixel comparison misses entirely.

API Test Automation

API testing is where AI shines brightest. An AI model can read an OpenAPI spec and generate hundreds of test cases in seconds.

- REST API Testing: Postman, RestAssured (Java), requests (Python), or Playwright API testing.

- Request Chaining: Dynamic data extraction and schema validation using JSON Schema standards.

- Contract and GraphQL Testing: Microservice contracts and GraphQL API testing for AI-powered applications.

Performance Testing Fundamentals

LLM API calls are slower than traditional APIs. AI features introduce unpredictable latency that demands performance testing.

- k6: Modern load testing tool with JavaScript scripting, ideal for testing AI API endpoints.

- JMeter: Industry standard for load, stress, and endurance testing of applications with AI components.

- Baseline Profiles: Establish response time baselines for LLM calls, embedding generation, and vector search endpoints.

Milestone: You have a framework with POM, data-driven tests, CI/CD via GitHub Actions, parallel execution, and basic k6 performance tests.

Phase 3: AI and Machine Learning Fundamentals

The goal is not to make you a data scientist but to give you enough understanding to use AI tools effectively and know their limitations.

AI vs. ML vs. Deep Learning vs. Generative AI

These terms are used interchangeably but mean different things. Getting the terminology right matters:

- Artificial Intelligence (AI): The broadest term covering any system that mimics human intelligence including rule-based systems.

- Machine Learning (ML): AI subset where systems learn from data. This powers self-healing tests and predictive analytics.

- Deep Learning: ML subset using neural networks. Powers computer vision for visual testing and NLP features.

- Generative AI: Models like GPT-4, Claude, Gemini that create content. Powers AI test case generation.

ML Concepts Testers Must Understand

These concepts directly impact how you use and evaluate AI testing tools at a practical level:

- Supervised vs. Unsupervised Learning: Supervised uses labeled data for predictions. Unsupervised finds patterns without labels.

- Classification vs. Regression: Classification predicts categories (pass/fail). Regression predicts numbers (execution time).

- Training, Validation, and Test Sets: How ML models are calibrated and why they sometimes make wrong predictions.

- Overfitting and Underfitting: An overfitted model works on staging but fails in production. Recognizing this is critical.

- Precision, Recall, and F1 Score: When a tool claims 95% accuracy, ask whether that is precision or recall.

Natural Language Processing (NLP) Basics

NLP powers natural language test creation, requirement analysis, and chatbot testing. Understand these key concepts:

- Tokenization: How text is broken into tokens for processing, affecting how LLMs understand your prompts.

- Embeddings and Vector Search: Text converted to numbers for similarity comparisons powering semantic test search.

- Large Language Models (LLMs): GPT-4, Claude, Gemini, Llama and their transformer architecture, context windows, and limitations.

Fine-Tuning Basics

You will test fine-tuned models and evaluate their quality. Understanding the process is valuable for effective validation.

- What Fine-Tuning Does: Adapts a pre-trained model to a specific domain using a smaller, specialized dataset.

- Why Testers Care: Fine-tuned models can regress on general capabilities while improving on the target task.

- Training Data Quality: Validate the dataset for completeness, label accuracy, bias, and format correctness before fine-tuning.

AI Evaluation Metrics Every Tester Should Know

Beyond precision and recall, these metrics appear in model evaluation reports and AI tool dashboards:

- BLEU: Measures how closely generated text matches reference text based on overlapping word sequences.

- ROUGE: Measures how much reference text is captured in generated text. Essential for testing summarization.

- BERTScore: Uses embeddings to measure semantic similarity even when wording differs between texts completely.

- Hallucination Rate: Percentage of generated responses containing factual inaccuracies. The most critical RAG metric.

- Perplexity: Measures output fluency. Lower perplexity means more natural output for model version comparisons.

Hands-On Project for This Phase

Build a test failure classifier using Python and scikit-learn. Train a model on historical test results to predict which tests will fail next.

Milestone: You can explain supervised vs. unsupervised learning, evaluate accuracy using precision/recall/BLEU/ROUGE, and build a simple ML classifier.

Phase 4: Prompt Engineering for Software Testing

Prompt engineering is the highest-ROI AI skill for testers in 2026. A well-crafted prompt generates more test cases in less time than spending hours of manual writing. Explore this guide on AI prompt engineering.

Prompt Engineering Techniques for Test Generation

These prompting patterns produce the best results for software testing tasks:

- Role-Based Prompting: Tell the AI to act as a specific tester type for more expert-level, specific output.

- Chain-of-Thought Prompting: Ask the AI to think step by step to catch edge cases single-shot prompting misses.

- Few-Shot Prompting: Provide 2-3 example test cases in your team's format for consistent output matching.

- Constraint-Based Prompting: Set boundaries like exact count, format, and exclusion criteria to prevent generic output.

Testing-Specific Prompt Templates

Here are battle-tested prompt templates for common testing tasks:

- Test Case Generation: Paste a user story and request positive, negative, edge case, and security test scenarios.

- Test Data Generation: Request realistic data rows with valid, invalid, boundary, and special character combinations.

- Bug Root Cause Analysis: Paste failure logs and request pattern analysis with specific fix actions.

- Code Review for Testability: Request mock identification, test hooks, and specific unit test case recommendations.

- Automation Script Generation: Request Playwright or Selenium tests with POM pattern targeting local or cloud grid execution.

Evaluating AI-Generated Test Output

Never blindly trust AI-generated test cases. Always evaluate them against these criteria:

- Completeness: Verify all functional areas are covered and edge cases are genuinely included in the output.

- Accuracy: Confirm expected results match the actual requirements and are not hallucinated by the model.

- Executability: Ensure test cases can actually be executed as written without vague or ambiguous steps.

- Uniqueness: Check that test cases are genuinely different and not just variations of the same scenario.

- Business Relevance: Confirm test cases focus on scenarios that matter to users and real business impact.

Milestone: You can generate 50+ quality test cases from a user story in under 10 minutes and evaluate AI output critically.

Phase 5: AI-Powered Testing Tools

Time to get hands-on with tools reshaping testing. Understanding categories helps you choose the right tool for the right problem. Learn more about AI automation.

Category 1: AI-Native Test Creation Platforms

These platforms let you create tests using natural language instead of code, representing the biggest shift in test authoring.

- KaneAI by TestMu AI: Create, debug, and evolve tests using natural language with cross-browser cloud execution built in.

Category 2: Self-Healing and Maintenance-Reduction Tools

These tools use ML to keep your existing tests working even when the application UI changes unexpectedly.

- KaneAI Self-Healing: Automatically adapts locators when UI elements change, reducing test maintenance effort significantly.

To get started, check out this guide to run tests with TestMu AI KaneAI.

Category 3: AI-Powered Visual Testing

Computer vision AI detects visually significant changes while ignoring irrelevant pixel-level differences across browsers.

- TestMu AI SmartUI: AI visual regression testing across browsers and devices, integrating with Selenium, Playwright, and Cypress.

To get started, check out this guide to run visual tests with TestMu AI SmartUI.

Category 4: AI-Driven Test Analytics and Intelligence

These tools analyze test results, predict failures, and optimize test execution using machine learning models.

- Predictive Test Selection: ML analyzes code changes to predict affected tests, reducing CI/CD pipeline execution times.

- Flaky Test Detection: AI identifies inconsistent tests, classifies root causes, and suggests targeted fixes for stability.

- Root Cause Analysis: AI clusters similar failures and traces them to specific code changes or infrastructure issues.

Milestone: You have hands-on experience with AI-native test creation, self-healing tests, visual regression testing, and flaky test analysis.

Phase 6: Testing AI Agents (LLMs, RAG, and Agentic AI)

This phase shifts from "using AI for testing" to "testing AI itself." This separates AI-literate testers from AI testing specialists. See the AI agent testing guide.

Testing LLM-Powered Features

LLM outputs are non-deterministic. The same prompt produces different outputs each time, fundamentally changing how you test.

- Hallucination Testing: Verify LLM responses against ground truth. Measure factual, numerical, and entity-based hallucination rates.

- Consistency Testing: Ask the same question with rephrasing and measure semantic similarity between responses using embeddings.

- Boundary Testing for LLMs: Test with max context length, empty inputs, adversarial inputs, and multilingual edge cases.

- Latency and Throughput: Measure p50, p95, and p99 latencies under load. Test time-to-first-token for streaming.

- Cost Testing: Verify your application handles token limits correctly without generating unexpectedly expensive API calls.

Testing RAG (Retrieval-Augmented Generation) Pipelines

RAG combines retrieval and generation. Testing requires validating both components and their interaction end to end.

- Indexing and Embedding Verification: Verify documents are indexed correctly and embeddings produce meaningful similarity representations.

- Retrieval Quality Testing: Measure retrieval precision and recall with queries of varying complexity and ambiguity.

- Chunking Strategy Testing: Test chunk sizes and overlaps to verify important information is not split across chunks.

- Grounding Verification: Confirm generated answers use retrieved documents rather than LLM-fabricated information entirely.

- Freshness Testing: Verify the indexing pipeline reflects document updates with properly chunked and embedded content.

- End-to-End Pipeline Validation: Test full flow from query to response, measuring combined retrieval and generation latency.

Testing AI Agents

AI agents take autonomous actions like querying databases or modifying files. Their emergent behavior makes testing fundamentally different from traditional software testing.

- Tool Use Testing: Verify the agent selects correct tools, passes correct parameters, and handles tool failures gracefully.

- Multi-Step Reasoning: Test complex tasks requiring multiple steps. Verify action sequences are logical and efficient.

- Safety and Guardrail Testing: Test whether agents can be tricked into unauthorized actions with adversarial inputs.

- Regression Testing for Agents: Create evaluation datasets capturing expected behavior. Run them after every model change.

Bias and Fairness Testing

AI agents can amplify biases in training data. As a tester, verify these critical fairness dimensions:

- Equal Output Quality: Verify the system produces equal quality outputs across different demographic groups without discrimination.

- Screening Bias: Test whether AI screening tools favor certain names, backgrounds, or cultural contexts unfairly.

- Filter Bubble Detection: Check that content moderation and recommendation engines do not create harmful filter bubbles.

Adversarial and Toxicity Testing

AI agents must be resilient against deliberately hostile inputs. This is increasingly important for production systems.

- Adversarial Input Testing: Craft inputs using typos, homoglyphs, Unicode tricks, and misleading phrasing to exploit weaknesses.

- Toxicity Detection: Verify AI never generates offensive or harmful content regardless of creative prompting attempts.

- Jailbreak Resistance: Test resistance to indirect injection, role-playing attacks, and encoding bypass tricks.

Dataset Validation

AI output quality ties directly to training data quality. Dataset Validation is an overlooked testing responsibility.

- Data Quality Checks: Check for duplicate, corrupted, or mislabeled data points in training and evaluation sets.

- Distribution Matching: Verify dataset distribution matches expected production distribution for representative testing coverage.

- PII Anonymization: Validate that sensitive PII data has been properly anonymized before any model training begins.

- Held-Out Validation: Ensure evaluation datasets are genuinely held-out and not accidentally leaked into training data.

Evaluation Tools and Frameworks

Master these frameworks to automate AI system testing at scale across your organization:

- DeepEval: Open-source LLM evaluation with hallucination, relevancy, faithfulness, and toxicity metrics via pytest.

- Ragas: Purpose-built for RAG pipeline evaluation measuring context precision, recall, and answer faithfulness.

- Arize AI: Production-grade observability with drift detection, monitoring, and automated evaluation for ML/LLM apps.

- TruLens: Measures groundedness, relevance, and sentiment for evaluating RAG context grounding quality.

- LangChain Evaluation: Built-in evaluators for string comparison, embedding distance, and LLM-as-judge in chain pipelines.

Milestone: You can design test plans for LLMs, RAG, and AI agents using DeepEval and Ragas. You understand adversarial and dataset validation.

Phase 7: Autonomous Testing and Agentic AI

Autonomous testing systems analyze applications, generate tests, execute them, and adapt with minimal human intervention. In 2026, this is production-ready.

The Spectrum of Test Automation Autonomy

Think of autonomous testing as a spectrum rather than a binary state with six distinct levels:

- Level 0, Manual Testing: Humans design, execute, and analyze every test without any automation assistance.

- Level 1, Scripted Automation: Humans write scripts, machines execute them. Traditional Selenium/Playwright automation.

- Level 2, AI-Assisted Automation: AI helps write scripts but humans review and maintain them. Most teams here.

- Level 3, AI-Driven Automation: AI generates tests from requirements, self-heals broken tests, and selects tests to run.

- Level 4, Autonomous Testing: AI agents explore applications independently, discovering and testing untested paths automatically.

- Level 5, Full Autonomy: AI manages the entire quality process end-to-end. Remains theoretical in 2026.

Building Autonomous Test Pipelines

Here is how you can build toward autonomous testing in your organization step by step:

- AI-Driven Test Selection in CI/CD: Integrate ML-based test selection analyzing code diffs. Research from Meta and Google shows this can cut pipeline times by 50-80% by running only failure-likely tests.

- Add Self-Healing to Existing Suites: Add AI-powered self-healing to your Selenium/Playwright framework with healed locator logging.

- AI-Powered Exploratory Testing: Use tools that autonomously navigate your application and flag visual or functional anomalies.

- Create Feedback Loops: Feed test results back into AI agents so predictions improve from historical data.

Agentic AI for Testing

Agentic AI autonomously plans and executes testing tasks. TestMu AI's Agent to Agent testing approach enables AI agents to validate other AI agents at scale.

- Objective-Based Testing: Tell the agent "verify a new user can complete a purchase" and it figures out the steps.

- Multi-Agent Architectures: Different agents specialize in UI, API, and performance testing coordinating via orchestrator.

- Continuous Learning: Agentic test systems learn from each execution, increasing focus on problem areas automatically.

Read this guide on test authoring with AI agents for deeper understanding.

Milestone: You have AI-driven test selection in CI/CD, self-healing for existing suites, and can articulate autonomy level tradeoffs.

Phase 8: MLOps, AI Observability, and Continuous Quality

AI model behavior can degrade over time without any code changes. This phase addresses post-deployment quality. See the AI observability guide.

MLOps Concepts for Testers

MLOps is DevOps for ML systems. You need to understand these pipelines to test effectively within them:

- Model Versioning: Track which model version is in production and run regression tests against specific versions.

- Versioning Beyond Models: Track dataset, prompt, and agent configuration versions with tools like PromptLayer and Humanloop.

- Data Drift Detection: Monitor when production data diverges from training data, triggering re-evaluation of model performance.

- Model Performance Monitoring: Track accuracy, latency, and error rates in production with threshold-based alerting enabled.

- A/B Testing for Models: Compare new model versions against current production using your A/B testing QA skills.

- Canary Rollout Validation: Route 5% traffic to new versions, monitor metrics, and give go/no-go signals for rollout.

AI Observability in Practice

AI observability means understanding why a system behaves as it does, not just detecting that something went wrong.

- Trace Logging: Log every prompt, response, token count, and latency using LangSmith or Arize AI.

- Evaluation Pipelines: Run curated test prompts on a schedule and compare scores over time for degradation.

- User Feedback Integration: Connect thumbs up/down and error reports to evaluation pipelines for continuous improvement.

- Cost and Resource Monitoring: Track API costs and token consumption. Alert on spikes indicating bugs or attacks.

Quality Gates for AI Agents

Define quality gates AI features must pass before deployment, analogous to traditional test gates:

- Accuracy Gate: Model accuracy on the evaluation dataset must exceed a clearly defined threshold value.

- Hallucination Gate: Hallucination rate must stay below threshold, for example less than 2% of responses.

- Latency Gate: P95 response time must stay within SLA limits under normal production traffic loads.

- Bias Gate: Performance across demographic groups must not vary beyond defined acceptable limits for fairness.

- Cost Gate: Per-request cost must stay within budget to prevent runaway API spending in production.

- Safety Gate: Must pass the adversarial test suite without generating harmful or off-topic content output.

Milestone: You can set up evaluation pipelines, define quality gates, and monitor deployed AI agents for drift and degradation.

Enterprise-Grade AI Testing Skills

Enterprise AI testing covers security, privacy, compliance, and safety dimensions that are non-negotiable when deploying AI to real users at scale.

Prompt Injection Testing

Prompt injection is the SQL injection of the AI era. Systematically verify that your AI features resist these attacks:

- Direct Injection: Test with "Ignore all previous instructions" inputs to verify the model maintains intended behavior.

- Indirect Injection: Plant malicious instructions in documents the AI processes. Verify they are not followed.

- Encoding Bypass Attempts: Test with base64-encoded instructions, Unicode tricks, and multilingual bypass attempts.

- Context Manipulation: Craft conversation histories that gradually shift model behavior toward producing unintended outputs.

Data Leakage and Privacy Testing

AI agents processing sensitive data introduce unique privacy risks that traditional testing does not address:

- Training Data Memorization: Probe the model with prompts designed to extract PII like emails or phone numbers.

- Cross-User Data Leakage: In multi-tenant apps, verify one user's data never bleeds into another user's responses.

- Context Window Leakage: Test that conversation history from one session does not carry over to another session.

- PII Handling: Verify the system redacts or refuses to process sensitive information per data handling policies.

Content Policy and Compliance Testing

Major AI providers publish responsible AI policies. Your application must comply. Here is what to test:

- Provider Safety Guidelines: OpenAI, Google, Anthropic, and Microsoft policies. Violations can revoke API access.

- Industry-Specific Compliance: Healthcare needs HIPAA, finance needs SOC 2, education needs COPPA and FERPA compliance.

- Content Safety Tiers: Define what content gets blocked, warned, or allowed. Build test suites for each tier.

- Audit Trail Verification: Verify AI interaction logs capture input, output, model version, and timestamp for audits.

Milestone: You can execute prompt injection test suites, identify data leakage risks, and verify content policy compliance for AI applications.

Hands-On Portfolio Projects for AI Testers

These five projects give you tangible, portfolio-worthy experience demonstrating real AI testing skills. Each combines multiple roadmap phases.

- Project 1: AI-Powered Chatbot Test Suite

Build a comprehensive test suite for a RAG-based chatbot. Test correctness, hallucinations, latency, adversarial inputs, and guardrail compliance. Use DeepEval for evaluation and Playwright for UI tests in GitHub Actions.. - Project 2: Semantic Search Quality Evaluator

Build an evaluation framework for AI search. Create a golden dataset, measure precision@k, recall@k, and MRR. Verify embedding index updates correctly. Use Ragas for evaluation metrics. - Project 3: LLM Agent Safety Tester

Test an open-source AI agent (LangGraph or CrewAI) for tool selection accuracy, multi-step task completion, guardrail enforcement, and prompt injection resistance. Create 100+ evaluation scenarios. - Project 4: Visual Regression + Model Comparison Dashboard

Compare AI model versions side by side. Use AI-powered visual regression testing combined with backend evaluation of accuracy, latency, and cost across versions. - Project 5: AI Recommendation System Tester

Test a recommendation engine for relevancy, diversity, fairness across demographics, cold-start scenarios, and performance under load using k6. Validate A/B test statistical significance.

Each project should live in a public GitHub repository with clear documentation and instructions for running the test suite.

12-Month AI Learning Plan for Testers

Month-by-month breakdown assuming 8-10 hours per week. Adjust the timeline based on your current skill level.

Quarter 1: Foundation (Months 1-3)

- Month 1: Audit testing fundamentals (Phase 0). Start Python basics with VS Code, Python, and Git setup.

- Month 2: Complete Python for testers (Phase 1). Start your chosen automation framework with an API project.

- Month 3: Deep dive into automation (Phase 2). Set up POM, CI/CD, and run tests.

Quarter 2: AI Foundations (Months 4-6)

- Month 4: AI/ML fundamentals (Phase 3). Take Andrew Ng's ML course. Build a test failure prediction model.

- Month 5: Start prompt engineering (Phase 4). Experiment daily with LLMs for test case generation and analysis.

- Month 6: Master prompt engineering. Create a prompt template library for your team. Explore AI testing tools.

Quarter 3: Applied AI Testing (Months 7-9)

- Month 7: Hands-on with AI-powered testing tools, self-healing, and visual regression (Phase 5). Start Portfolio Project 1.

- Month 8: Begin testing AI agents (Phase 6). Learn DeepEval, Ragas, PromptFoo. Start Portfolio Project 2.

- Month 9: RAG testing and AI agent testing. Practice adversarial testing and dataset validation workflows.

Quarter 4: Advanced and Integration (Months 10-12)

- Month 10: Autonomous testing (Phase 7). Enterprise testing: prompt injection, data leakage. Start Project 3.

- Month 11: MLOps and observability (Phase 8). Set up evaluation pipelines, quality gates, canary rollout validation.

- Month 12: Complete portfolio projects. Contribute to open-source. Explore the AI engineer career path.

Best Resources to Learn AI for Software Testing

Curated resources selected for relevance to testers, not generic AI learners. Organized by phase of the roadmap.

Courses

- Python for Everybody (University of Michigan, Coursera): Best starting point for testers learning Python practically.

- Machine Learning Specialization (Andrew Ng, Coursera): Gold standard for ML fundamentals. Focus on weeks 1-4.

- ChatGPT Prompt Engineering for Developers (DeepLearning.AI): Free course directly applicable to test generation use cases.

- Generative AI for Software Testing: This AI testing career guide provides a structured QA-focused learning path.

Books

- "AI-Powered Testing" by Tariq King: Definitive book covering both using AI for testing and testing AI agents.

- "Hands-On ML with Scikit-Learn, Keras, and TensorFlow" by Geron: Most practical ML book for Phase 3 projects.

- "Prompt Engineering for Generative AI" by Phoenix and Taylor: Comprehensive guide with practical prompt examples.

Communities and Podcasts

Stay connected with the AI testing community for shared experiences and rapidly evolving tool updates.

- Ministry of Testing: Active community with AI testing discussions, webinars, and hands-on workshops regularly.

- TestGuild Automation Podcast: Weekly coverage of AI testing tools, practices, and industry thought leadership. Check out the curated list of AI software testing podcasts.

Open-Source Tools to Practice With

- DeepEval: Open-source LLM evaluation framework. Practice hallucination testing, consistency, and bias detection.

- PromptFoo: Open-source prompt testing and evaluation tool. Great for building prompt test suites quickly.

- LangSmith: LLM observability platform. Practice tracing and evaluating AI system behavior in production scenarios.

Recommended Reads

These guides follow the roadmap progression, from AI fundamentals through automation, tools, testing specializations, and DevOps integration.

- AI in Software Testing

- Artificial Intelligence in Software Engineering

- AI Platforms

- Intelligent Test Automation

- Automatic Test Case Generation

- AI Unit Test Generation

- Self-Healing Test Automation

- ChatGPT Prompts for Software Testing

- Generative AI Testing

- NLP Testing

- AI Testing Tools

- AI Tools for Developers

- Visual AI

- AI in Regression Testing

- AI Performance Testing

- AI Mobile Testing

- AI and Accessibility

- Predictive Analytics in Software Testing

- Anomaly Report in Software Testing

- AI Data Integration

- Autonomous Testing

- Vibe Testing

- MLOps vs DevOps

- AI in DevOps

- Benefits of AIOps

Summing Up

This roadmap is not about becoming an AI engineer. It is about becoming a more effective tester by integrating AI into your existing skill set phase by phase.

Pick the phase matching your current level, dedicate consistent weekly time, apply learnings to real projects, and build skills progressively.

Citations

- Smart Test Selection in CI/CD: Optimizing Pipeline Efficiency (ResearchGate, 2025): https://www.researchgate.net/publication/391749041

- Targeted Test Selection Approach in Continuous Integration (arXiv, 2025): https://arxiv.org/html/2509.10279

- Practical Pipeline-Aware Regression Test Optimization for Continuous Integration (arXiv, 2025): https://arxiv.org/html/2501.11550

- CI/CD Pipeline Optimization Using AI: A Systematic Mapping Study (MDPI, 2025): https://www.mdpi.com/2673-4591/112/1/32

Frequently asked questions

Did you find this page helpful?

More Related Hubs

TestMu AI forEnterprise

Get access to solutions built on Enterprise

grade security, privacy, & compliance

- Advanced access controls

- Advanced data retention rules

- Advanced Local Testing

- Premium Support options

- Early access to beta features

- Private Slack Channel

- Unlimited Manual Accessibility DevTools Tests