AI in Quality Assurance: How Teams Are Using It in 2026

AI in quality assurance automates test creation, self-heals broken locators, and cuts maintenance time. See how QA teams use AI in testing, with tools and examples.

Salman Khan

April 19, 2026

AI in quality assurance uses machine learning to automate test creation, detect flaky scripts, and prioritize what to test first. From AI-assisted test authoring to fully autonomous test execution, the range of what is possible has expanded significantly in 2026.

For teams validating the ML models that power those systems, AI/ML testing addresses accuracy, bias, and drift as first-class quality concerns.

According to the ThinkSys QA Trends Report 2026, 77.7% of organizations now use or plan to use AI in their QA processes, making it one of the fastest-adopted shifts in modern software delivery.

Overview

What Is AI in Quality Assurance

AI in QA uses machine learning and smart algorithms to improve software testing. It reviews past test results to find high-risk areas, prioritize regression tests, and improve coverage.

How to Use AI in Quality Assurance

Implementing AI in QA requires careful planning, from identifying opportunities to selecting, training, and validating models. Here is the structured approach:

- Identify Test Scope: Determine where AI can add value in QA. Define objectives such as improving coverage, automating repetitive tasks, or prioritizing high-risk tests.

- Select AI Models: Choose models that fit project needs. For test generation, NLP-based AI tools or agents can convert prompts into automated test scripts.

- Train AI Models: Gather, curate, and label high-quality data. Proper annotation helps models recognize patterns, execute tests accurately, and predict potential defects reliably.

- Validate AI Models: Test and evaluate models on held-out subsets of data. Platforms like TestMu AI Agent to Agent Testing simulate real-world interactions with AI agents built on these models to verify their performance and reliability.

- Integrate AI Models: Deploy trained models into the QA workflow to automate test creation, execution, and analysis, ensuring improved coverage, defect detection, and efficiency.

What Is AI in QA

AI in QA leverages machine learning and intelligent algorithms to enhance the software testing process. It analyzes historical test results to identify high-risk areas, prioritize regression tests, flag brittle scripts, and detect visual inconsistencies.

AI in software quality assurance goes beyond test execution. It spans planning, risk analysis, defect prediction, and continuous improvement across the full quality lifecycle, making it relevant at every stage of delivery.

Why Use AI in Quality Assurance

Using AI in QA automates repetitive tests, detects flaky tests, predicts defects, and adapts test scripts automatically. It also optimizes regression coverage, ensures UI consistency, prioritizes high-risk tests, and accelerates releases.

- AI-Enhanced Test Execution: Improves execution efficiency by identifying stable tests, recommending parallel runs, and automating repetitive validations. This reduces execution time and frees testers to focus on exploratory and critical functional testing.

- Intelligent Test Selection: Evaluates code changes, historical defects, and execution data to select the most relevant test cases. This avoids redundant runs, saves time, and ensures focus on high-risk areas.

- Predictive Defect Analysis: Analyzes past test results and change histories to highlight modules most likely to fail. This software defect prediction helps teams improve coverage and prevent critical production defects.

- Flaky Test Identification: Tracks test stability, detects intermittent failures, and highlights unreliable scripts before they block a release or generate false confidence in build quality.

- Enhanced Defect Accuracy: Correlates multiple data sources, such as logs, test results, and past defect trends, to detect issues and reduce false positives. This increases confidence in test results and defect reporting.

- Optimized Regression Coverage: Prioritizes regression runs based on recent code commits, risk level, and defect history. It ensures critical functionality is validated first within limited test execution windows.
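The flaky-test identification described above can be approximated without any ML at all. The sketch below is a minimal, hypothetical example: it assumes you can export pass/fail outcomes per test from recent CI runs, oldest first, and the `history` format, `min_runs`, and `min_flip_rate` values are assumptions rather than any tool's API. It flags tests that both pass and fail and whose outcome flips often; a test that turned red once and stayed red has a low flip rate and looks more like a genuine regression.

```python
from dataclasses import dataclass


@dataclass
class FlakinessReport:
    test_name: str
    runs: int
    failures: int
    flips: int  # number of pass->fail or fail->pass transitions

    @property
    def flip_rate(self) -> float:
        # Fraction of consecutive run pairs whose outcome changed.
        return self.flips / (self.runs - 1) if self.runs > 1 else 0.0


def analyze(history: dict[str, list[bool]]) -> list[FlakinessReport]:
    """history maps a test name to its recent outcomes, oldest first (True = pass)."""
    reports = []
    for name, outcomes in history.items():
        flips = sum(1 for a, b in zip(outcomes, outcomes[1:]) if a != b)
        reports.append(FlakinessReport(name, len(outcomes), outcomes.count(False), flips))
    return reports


def flag_flaky(history, min_runs=5, min_flip_rate=0.2):
    """Return names of tests that both pass and fail and change outcome often."""
    return sorted(
        r.test_name
        for r in analyze(history)
        if r.runs >= min_runs and 0 < r.failures < r.runs and r.flip_rate >= min_flip_rate
    )


if __name__ == "__main__":
    history = {
        "test_login": [True] * 8,                                              # always green
        "test_checkout": [True, False, True, True, False, True, False, True],  # intermittent
        "test_search": [True] * 4 + [False] * 4,                               # broke once, stayed broken
    }
    print(flag_flaky(history))
```

Here only `test_checkout` is flagged; `test_search` fails consistently after one change, which points to a real defect rather than flakiness.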

Key Takeaway: AI in QA directly reduces the two biggest drains on team velocity: flaky tests and redundant regression runs. Starting with intelligent test selection and predictive defect analysis gives teams a measurable return before they invest in broader AI tooling.
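Intelligent test selection, the starting point recommended above, can be prototyped as a weighted score before any model is trained. This is a hypothetical sketch: it assumes you already have per-test coverage (which files each test exercises) and a recent failure count, and the `CaseHistory` structure and the 0.7/0.3 weights are illustrative placeholders you would tune on your own data.

```python
from dataclasses import dataclass


@dataclass
class CaseHistory:
    name: str
    covered_files: set[str]  # files this test exercises (e.g., from coverage reports)
    recent_runs: int         # runs in the lookback window
    recent_failures: int     # failures in the same window


def risk_score(case, changed_files, change_weight=0.7, history_weight=0.3):
    # Share of the changed files this test touches (0..1).
    overlap = len(case.covered_files & changed_files) / len(changed_files) if changed_files else 0.0
    # Recent failure rate (0..1); no history counts as 0.
    failure_rate = case.recent_failures / case.recent_runs if case.recent_runs else 0.0
    return change_weight * overlap + history_weight * failure_rate


def select_tests(cases, changed_files, budget):
    """Return up to `budget` test names, riskiest first, skipping zero-risk tests."""
    scored = [(risk_score(c, changed_files), c.name) for c in cases]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: (-s[0], s[1]))
    return [name for _, name in ranked[:budget]]


if __name__ == "__main__":
    cases = [
        CaseHistory("test_cart_total", {"cart.py", "pricing.py"}, 20, 2),
        CaseHistory("test_login", {"auth.py"}, 20, 0),
        CaseHistory("test_pricing_rounding", {"pricing.py"}, 20, 6),
        CaseHistory("test_profile", {"profile.py"}, 20, 1),
    ]
    # A commit that touched only pricing.py, with room for two tests in the fast lane.
    print(select_tests(cases, {"pricing.py"}, budget=2))
```

With this data the two pricing-related tests are picked first, while `test_login` is skipped entirely because it neither touches the change nor has a failure history.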

These benefits extend directly to the backend through AI API testing, which applies AI-driven test generation, semantic validation for non-deterministic LLM responses, and self-healing across schema changes to keep API suites stable with minimal manual upkeep.

For QA leads weighing how much of the workflow to delegate to AI, this guide to AI-augmented software testing outlines the realistic middle ground: AI accelerates authoring, maintenance, and triage, while engineers stay accountable for coverage strategy, risk calls, and release readiness.


What Are Examples of AI in Quality Assurance

Examples of AI in QA include generating test data, creating test scripts, and prioritizing high-risk tests. AI can also manage test scheduling, self-heal broken scripts, and provide actionable analytics.

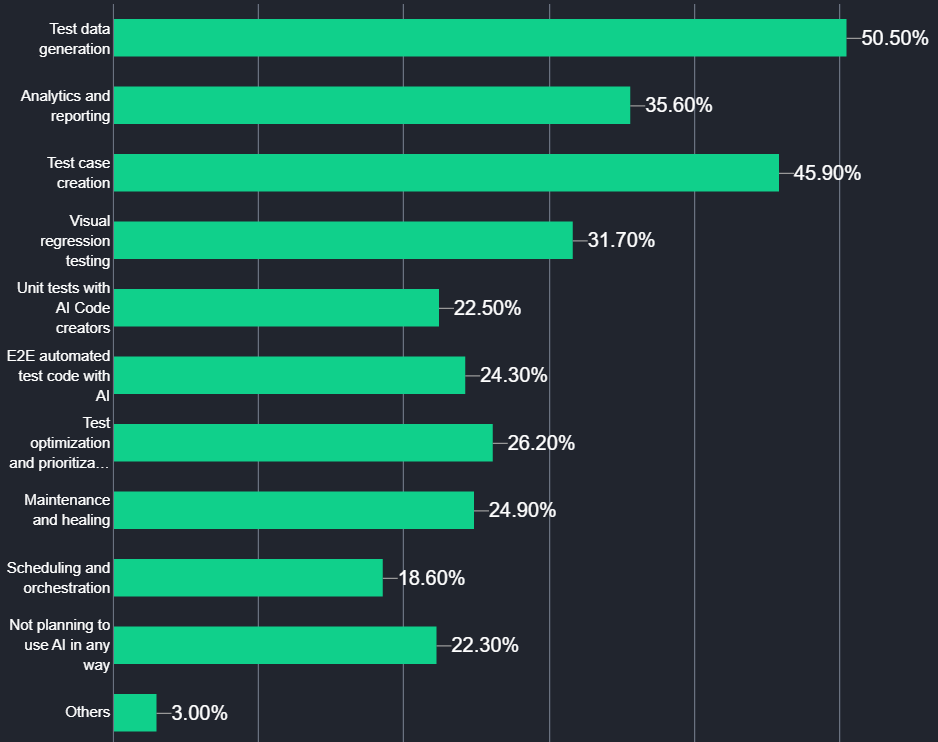

According to the Future of Quality Assurance Report, several key examples highlight how AI is used in testing processes.

- Test Data Generation: Generates diverse and realistic test datasets that simulate user behavior and edge cases. It reduces the need for manual data preparation and ensures comprehensive coverage for functional, integration, and performance tests.

- AI-Driven End-to-End Testing: Creates E2E test scripts that simulate real user interactions across workflows. These scripts are executed within automation frameworks to validate integrations, APIs, and UI components under varying conditions.

- Generative AI in Quality Assurance: Generative AI enables teams to produce test cases, test data, and even full test plans from natural language prompts or requirement documents, which can cut authoring time from hours to minutes.

- Unit Test Generation: Analyzes source code to automatically generate unit tests that cover standard scenarios and edge cases. AI unit test generation can increase coverage and help verify that individual components behave as expected.

- Test Optimization and Prioritization: Evaluates historical test results, code changes, and defect patterns to prioritize test execution. High-risk areas are tested first, redundant runs are minimized, and regression cycles become more efficient without compromising coverage.

- Scheduling and Orchestration: Manages test execution across environments, allocating resources dynamically and scheduling tests to avoid conflicts. It ensures efficient utilization of test infrastructure and timely completion of automated test suites.

- Visual Regression Testing: Compares UI snapshots across builds to detect layout shifts, misaligned elements, or missing components. TestMu AI SmartUI offers smart visual testing that automatically highlights visual deviations, enabling rapid correction before end users are impacted.

- Self-Healing Test Scripts: Detects changes in UI elements or workflows and automatically updates affected test scripts. Self-healing test automation minimizes manual maintenance, keeps regression tests functional, and allows QA teams to focus on validation and analysis.

- Analytics and Reporting: Analyzes test execution data, defect trends, and coverage gaps to predict potential failures. Predictive analytics can generate actionable insights and reports that help QA teams optimize testing, reduce risk, and improve release confidence.

- AI-Powered Test Intelligence: TestMu AI Test Intelligence helps teams understand execution patterns and coverage gaps. It uses AI to detect unstable tests, adapt scripts, and highlight UI issues, guiding smarter prioritization and execution decisions.
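The Scheduling and Orchestration item in the list above can be illustrated with a classic heuristic. The sketch below is an assumption-laden example, not how any particular platform schedules work: it uses longest-processing-time-first (LPT) greedy assignment to spread tests across parallel workers, using expected durations that you might estimate from past runs. The test names and durations are hypothetical.

```python
import heapq


def schedule(durations: dict[str, float], workers: int) -> list[list[str]]:
    """Assign tests to workers with the longest-processing-time-first heuristic.

    durations maps test name -> expected seconds (e.g., median of past runs).
    Returns one list of test names per worker.
    """
    if workers < 1:
        raise ValueError("workers must be >= 1")
    # Min-heap of (current load in seconds, worker index).
    heap = [(0.0, i) for i in range(workers)]
    assignments: list[list[str]] = [[] for _ in range(workers)]
    # Place the longest tests first, each on the currently least-loaded worker.
    for name, secs in sorted(durations.items(), key=lambda kv: (-kv[1], kv[0])):
        load, idx = heapq.heappop(heap)
        assignments[idx].append(name)
        heapq.heappush(heap, (load + secs, idx))
    return assignments


def makespan(durations, assignments):
    """Wall-clock time of the slowest worker."""
    return max(sum(durations[n] for n in group) for group in assignments)


if __name__ == "__main__":
    durations = {"e2e_checkout": 120, "e2e_signup": 90, "api_orders": 60,
                 "api_users": 45, "unit_suite": 30}
    plan = schedule(durations, workers=2)
    print(plan, makespan(durations, plan))
```

LPT is not guaranteed to be optimal, but it is simple and provably stays within a factor of 4/3 of the best possible makespan, which makes it a reasonable baseline before trying anything smarter.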

Key Takeaway: Self-healing scripts and AI-powered test intelligence deliver the most immediate impact because they cut maintenance time without requiring a complete re-architecture of existing test suites. Visual regression testing and generative AI for test authoring are strong second priorities for teams that already run stable automation.
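Self-healing, named above as one of the highest-impact capabilities, often comes down to falling back on other attributes recorded when the test last passed. The sketch below is hypothetical: pages are modeled as plain lists of attribute dictionaries rather than a real browser DOM, and `HealingLocator` and its `min_matches` threshold are illustrative names, not a library API. Real tools may use richer signals, but the idea of scoring candidates against a stored fingerprint and logging every heal for human review carries over.

```python
from dataclasses import dataclass, field
from typing import Optional

Element = dict[str, str]  # simplified DOM node: attribute name -> value


@dataclass
class HealingLocator:
    primary: tuple[str, str]   # (attribute, value), e.g. ("id", "submit-btn")
    fingerprint: Element       # attributes recorded on the last passing run
    min_matches: int = 2       # fingerprint attributes that must still match
    healed: list[str] = field(default_factory=list)  # audit log for human review

    def _score(self, el: Element) -> int:
        # Count fingerprint attributes the candidate element still shares.
        return sum(1 for k, v in self.fingerprint.items() if el.get(k) == v)

    def find(self, page: list[Element]) -> Optional[Element]:
        attr, value = self.primary
        for el in page:
            if el.get(attr) == value:
                return el  # primary locator still works
        # Primary locator broke: pick the element most similar to the fingerprint.
        best = max(page, key=self._score, default=None)
        if best is None or self._score(best) < self.min_matches:
            return None  # not confident enough; let the test fail loudly
        new_value = best.get(attr)
        self.healed.append(f"{attr}={value!r} -> {attr}={new_value!r}")
        if new_value is not None:
            self.primary = (attr, new_value)  # reuse the healed locator next run
        return best


if __name__ == "__main__":
    locator = HealingLocator(
        primary=("id", "submit-btn"),
        fingerprint={"id": "submit-btn", "tag": "button", "text": "Place order", "class": "btn primary"},
    )
    # A redesign renamed the button's id, but its tag, text, and class survived.
    page = [
        {"id": "search", "tag": "input", "class": "field"},
        {"id": "place-order", "tag": "button", "text": "Place order", "class": "btn primary"},
    ]
    print(locator.find(page), locator.healed)
```

Returning `None` below the threshold is a deliberate choice: a confident heal keeps the suite green, while a weak match should surface as a failure instead of silently clicking the wrong element.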

At the frontier of visual testing automation is the visual testing AI agent, which combines intelligent screenshot comparison, baseline management, and CI/CD integration to autonomously validate UI consistency across every build.
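Screenshot comparison against a baseline, the core of the visual testing described above, can be sketched in a few lines. This hypothetical example represents screenshots as nested lists of RGB tuples instead of loading image files, and the `tolerance` and `max_ratio` values are assumptions; AI-based visual tools typically layer smarter filtering on top of this kind of raw pixel diff.

```python
Pixel = tuple[int, int, int]   # RGB, 0-255
Image = list[list[Pixel]]      # rows of pixels


def diff_region(baseline: Image, candidate: Image, tolerance: int = 16):
    """Return (changed_ratio, bounding_box); bounding_box is (top, left, bottom, right) or None."""
    if len(baseline) != len(candidate) or any(len(a) != len(b) for a, b in zip(baseline, candidate)):
        raise ValueError("screenshots must have identical dimensions")
    changed = []
    for y, (row_a, row_b) in enumerate(zip(baseline, candidate)):
        for x, (pa, pb) in enumerate(zip(row_a, row_b)):
            # Ignore tiny per-channel differences (anti-aliasing, compression noise).
            if max(abs(ca - cb) for ca, cb in zip(pa, pb)) > tolerance:
                changed.append((y, x))
    total = sum(len(row) for row in baseline)
    ratio = len(changed) / total if total else 0.0
    if not changed:
        return ratio, None
    ys = [y for y, _ in changed]
    xs = [x for _, x in changed]
    return ratio, (min(ys), min(xs), max(ys), max(xs))


def is_visual_regression(baseline, candidate, tolerance=16, max_ratio=0.001):
    """Flag the build when more than max_ratio of pixels changed beyond tolerance."""
    ratio, _ = diff_region(baseline, candidate, tolerance)
    return ratio > max_ratio


if __name__ == "__main__":
    WHITE, RED = (255, 255, 255), (255, 0, 0)
    baseline = [[WHITE] * 10 for _ in range(10)]
    # Candidate build: a 2x3 block of the UI turned red.
    candidate = [[RED if y in (2, 3) and x in (4, 5, 6) else WHITE for x in range(10)]
                 for y in range(10)]
    print(diff_region(baseline, candidate), is_visual_regression(baseline, candidate))
```

The bounding box is what lets a reviewer jump straight to the changed area, and the tolerance keeps near-identical pixels from triggering false alarms.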

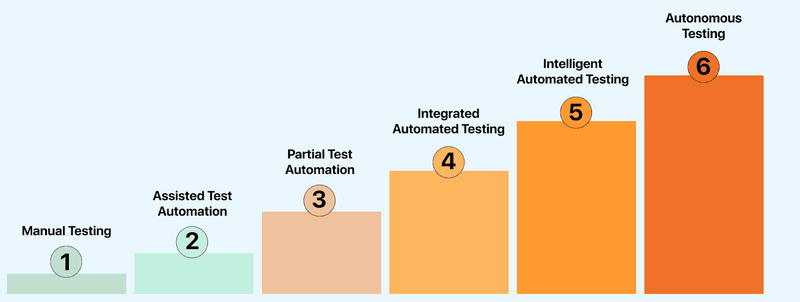

What Are the Six Levels of AI in QA Testing

The six levels of AI in QA testing represent a spectrum of automation, from manual testing to fully AI-based testing. As AI capabilities evolve, they gradually reduce the dependency on manual intervention while improving testing efficiency and accuracy.

- Manual Testing: Human testers perform all tasks, including writing, executing, and analyzing test cases, identifying defects, and reporting issues. Testing is conducted entirely without automation support.

- Assisted Test Automation: Automated tools assist testers with test execution. Humans continue to create and maintain scripts, manage workflows, and handle analysis and validation manually.

- Partial Test Automation: Testing is shared between humans and automation. Testers manage execution, data handling, and result analysis, while automation handles repetitive tasks under human supervision.

- Integrated Automated Testing: AI provides recommendations within automated tools. Testers review and apply these suggestions to refine test cases and adjust test suites as needed.

- Intelligent Automated Testing: AI can generate test cases, execute tests, and report results. In intelligent test automation, human involvement is optional and limited to handling specific scenarios or exceptions.

- Autonomous Testing: In autonomous testing, AI manages test creation, execution, and evaluation without human involvement. It monitors code changes, runs tests, and identifies defects autonomously.

Key Takeaway: Most QA teams currently operate at levels 2 or 3, using automation tools but still writing and maintaining scripts manually. Moving to level 4 or 5 requires structured AI integration into the CI/CD workflow, not just adding a new tool on top of an unchanged process.

How to Integrate AI in QA Testing

Understanding how to use AI in quality assurance starts with identifying where it adds the most value in your pipeline. The structured approach below covers selection, training, validation, and deployment of AI models into your QA workflow.

- Identify Test Scope: Define the scope and objectives of implementing AI in QA, including the specific areas where AI should help, such as improving test coverage or automating repetitive tasks.

- Select AI Models: Select the AI models that best fit your software project requirements. For example, if you want to automate the test generation process, you can choose an NLP-based AI model or tool to generate tests.

GenAI-native test agents like TestMu AI KaneAI help you generate tests from natural language prompts. KaneAI lets you create tests quickly without manually writing test scripts, speeding up test creation and improving coverage.

- Train AI Models: High-quality data is essential for training AI models. Collect, curate, and label the data the model needs, and use an appropriate data annotation method so that the model can recognize patterns, execute accurate tests, and predict defects.

- Validate AI Models: Once the AI model is trained, test and validate it. Evaluate the model on a subset of the annotated data that was held out from training, using metrics such as precision and recall. The goal is to verify that the model performs as expected in real-world scenarios by producing accurate and consistent results.

- Integrate AI Models Into Your Workflow: Once the AI model is tested and validated, integrate it into your testing infrastructure. This can involve automating aspects of the testing process, like generating test cases or analyzing test results.
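The train and validate steps above can be illustrated end to end with a deliberately tiny stand-in for a real model. In this hypothetical sketch, the "model" is a single churn threshold for predicting defect-prone modules; the `ModuleSample` fields, the 30% hold-out, the seed, and the synthetic data are all assumptions. The point is the workflow: fit only on the training split, then measure precision and recall on data the model has not seen.

```python
import random
from dataclasses import dataclass


@dataclass
class ModuleSample:
    name: str
    churn: int        # lines changed in the module last release (hypothetical feature)
    had_defect: bool  # label: a defect was later traced to this module


def split(samples, holdout=0.3, seed=42):
    """Shuffle deterministically, then hold out a fraction for validation."""
    shuffled = samples[:]
    random.Random(seed).shuffle(shuffled)
    n_val = round(len(shuffled) * holdout)
    return shuffled[n_val:], shuffled[:n_val]  # (train, validation)


def precision_recall(samples, threshold):
    """Treat churn >= threshold as a 'defect-prone' prediction."""
    tp = sum(1 for s in samples if s.churn >= threshold and s.had_defect)
    fp = sum(1 for s in samples if s.churn >= threshold and not s.had_defect)
    fn = sum(1 for s in samples if s.churn < threshold and s.had_defect)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def f1(p, r):
    return 2 * p * r / (p + r) if p + r else 0.0


def fit_threshold(train_set):
    """'Training' here means picking the churn threshold with the best F1 on the training split."""
    candidates = sorted({s.churn for s in train_set})
    return max(candidates, key=lambda t: f1(*precision_recall(train_set, t)))


if __name__ == "__main__":
    # Synthetic data: modules with churn >= 200 lines later had defects.
    data = [ModuleSample(f"module_{i}", churn=i * 20, had_defect=i * 20 >= 200) for i in range(20)]
    train_set, val_set = split(data)
    threshold = fit_threshold(train_set)
    p, r = precision_recall(val_set, threshold)
    print(f"threshold={threshold} held-out precision={p:.2f} recall={r:.2f}")
```

Before integrating a model like this into the pipeline, a team might agree on minimum held-out precision and recall and block deployment until both are met, which is exactly the pilot-first discipline the next takeaway recommends.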

To validate the behavior of AI agents that operate using these models, consider using TestMu AI Agent to Agent Testing. It simulates real-world interactions to evaluate how agents perform, respond, and adapt in dynamic scenarios. To get started, check out how to test your first AI agent.

Key Takeaway: The most common integration failure is skipping validation: deploying an AI model without confirming it produces reliable results on real project data. Running a controlled pilot on a single test suite before expanding ensures the model earns team trust before wider adoption.

Which AI Tools Are Best for QA Testing

The choice of an AI tool for QA testing depends on your project requirements. For example, Generative AI testing tools like TestMu AI KaneAI let you plan, organize, and author tests using natural language prompts.

1. TestMu AI (Formerly LambdaTest) KaneAI

TestMu AI KaneAI is a GenAI testing agent designed to support fast-moving AI QA teams. It lets you create, debug, and enhance tests using natural language, making test automation quicker and easier without needing deep technical expertise.

Features:

- Intelligent Test Generation: Automates the creation and evolution of test cases through NLP-driven instructions.

- Smart Test Planning: Converts high-level objectives into detailed, automated test plans.

- Multi-Language Code Export: Generates tests compatible with various programming languages and frameworks.

- Show-Me Mode: Simplifies debugging by converting user actions into natural language instructions for improved reliability.

- API Testing Support: Easily include backend tests to improve overall coverage.

- Wide Device Coverage: Run your tests across 10,000+ real devices and 3,000+ browser-OS combinations.

To get started, refer to this guide on TestMu AI KaneAI.

2. Aqua Cloud

Aqua Cloud provides intelligent test management solutions, leveraging AI for test planning and test optimization. It centralizes testing workflows and offers predictive analytics to enhance decision-making.

Features:

- Test Management Automation: Reduces manual overhead with AI-driven workflows.

- Collaboration Tools: Supports cross-functional QA and development team collaboration.

- Scalability: Handles extensive testing needs across large software ecosystems.

- Analytics and Reporting: Provides actionable insights through predictive data analysis.

3. Virtuoso

Virtuoso is an AI-powered test automation platform for creating and maintaining functional tests. It uses natural language processing and self-healing capabilities to speed up testing without requiring deep coding knowledge.

Features:

- Live Authoring With AI Suggestions: It suggests test steps in real time as you author a test, making it quicker to build reliable test cases without starting from scratch.

- Cross Browser Testing in the Cloud: It executes your tests on different browsers and operating systems in the cloud, so you don't need to configure anything manually.

- Self-Healing With Real-Time Updates: It identifies changes in the app's UI and automatically updates your test scripts, so you don't have to rewrite them each time something changes.

Key Takeaway: The right AI testing tool depends on where your team's biggest bottleneck is: KaneAI for natural language test authoring, Aqua Cloud for test management and analytics, or Virtuoso for self-healing and maintenance reduction. Many high-performing teams pair a GenAI-native agent with an infrastructure platform to cover both authoring speed and execution scale.

What Is the Role of an AI Agent in the QA Life Cycle

AI QA agents design tests from requirements, automate scripts, and prioritize high-risk tests. They detect defects, generate actionable insights, and guide coverage and execution efficiently.

- Test Design: Reads requirements, user stories, or design documents and generates test cases automatically. It focuses on key workflows, edge cases, and validation points, eliminating repetitive manual scripting.

- Test Automation: Converts designed test cases into executable test scripts across multiple languages and frameworks. It identifies UI elements, adjusts scripts when changes occur, and generates test data automatically to keep validations stable.

- Test Execution: AI agents analyze code changes, historical results, and user behavior to prioritize tests, ensuring high-risk areas are addressed first. They also integrate with existing CI/CD tools to enhance the overall automation workflow.

- Reporting and Insights: The agent processes all execution data into actionable insights. Patterns, recurring failures, and high-risk areas are highlighted in clear reports, allowing testers to make decisions faster.

- Defect Detection and Analysis: During runs, the agent tracks failures, unstable tests, and patterns of repeated errors. It highlights the most likely root causes, helping testers quickly pinpoint and fix issues rather than spending hours combing through logs.
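The root-cause highlighting described in the Defect Detection and Analysis item often starts by grouping failures that share the same underlying error. The sketch below is a hypothetical, rule-based version: it strips volatile fragments (numbers, hex addresses, UUIDs) from the first line of each error message and counts how many tests hit each resulting signature. An AI agent might rely on embeddings or wider log context instead; the regex patterns here are assumptions you would extend for your own logs.

```python
import re
from collections import Counter, defaultdict

# Volatile fragments that differ between runs but not between root causes.
_VOLATILE = [
    (re.compile(r"0x[0-9a-fA-F]+"), "<addr>"),
    (re.compile(r"\b[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}\b"), "<uuid>"),
    (re.compile(r"\d+"), "<n>"),
]


def signature(message: str) -> str:
    """Normalize a failure message so recurring errors collapse to one key."""
    stripped = message.strip()
    first_line = stripped.splitlines()[0] if stripped else ""
    for pattern, token in _VOLATILE:
        first_line = pattern.sub(token, first_line)
    return first_line


def cluster_failures(failures: list[tuple[str, str]]):
    """failures: (test_name, error_message) pairs. Returns clusters, most frequent first."""
    groups = defaultdict(list)
    for test, message in failures:
        groups[signature(message)].append(test)
    counts = Counter({sig: len(tests) for sig, tests in groups.items()})
    return [(sig, count, sorted(set(groups[sig]))) for sig, count in counts.most_common()]


if __name__ == "__main__":
    failures = [
        ("test_checkout", "TimeoutError: element #pay not visible after 30000 ms"),
        ("test_refund", "TimeoutError: element #pay not visible after 15000 ms"),
        ("test_profile", "AssertionError: expected 200, got 503"),
        ("test_checkout_guest", "TimeoutError: element #pay not visible after 30000 ms"),
    ]
    for sig, count, tests in cluster_failures(failures):
        print(count, sig, tests)
```

Three of the four failures collapse into one timeout signature, which tells a tester to investigate the payment element once instead of reading three separate logs.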

These QA-specific applications are part of a much broader set of AI agent use cases reshaping how organizations deliver quality at speed.

Key Takeaway: AI agents are most effective when they have access to historical test data and requirement documents, since that context enables accurate test case generation and meaningful failure pattern detection. Teams that give AI agents read access to their defect history and sprint backlogs see faster onboarding and more precise prioritization from day one.

Where Does AI Fit in the Future of QA

AI in QA is evolving to plan, execute, and adapt tests autonomously while collaborating with humans. It identifies high-risk areas, integrates with DevOps pipelines, improves visual and accessibility testing, and leverages cloud platforms for scalable testing.

Key future trends include:

- Agentic AI in Test Automation: Emerging AI agents are designed to autonomously plan, execute, and adapt test strategies in real time. These agents collaborate with human testers, providing insights and recommendations.

- Predictive Risk-Based Testing: AI models are increasingly used to analyze historical data and identify potential risk areas within applications. By focusing testing efforts on high-risk components, teams can optimize resource allocation and improve the effectiveness of their testing efforts.

- Integration With DevOps and Continuous Delivery: AI is becoming integral to DevOps pipelines, facilitating continuous testing and integration. This integration ensures that testing is embedded into the development process, leading to faster delivery cycles and more reliable software releases.

- Enhanced Visual and Accessibility Testing: AI-powered tools are advancing in detecting visual anomalies and accessibility issues across various platforms. These tools ensure that user interfaces are consistent and accessible, improving the overall user experience. Learn more about visual AI in software testing and the role of AI and accessibility.

- Cloud-Based and Scalable Testing Solutions: The adoption of cloud technologies allows for scalable and flexible testing environments. AI-driven cloud testing platforms enable teams to perform GenAI testing across multiple configurations without the constraints of on-premises infrastructure.

Modern AI-driven testing systems often rely on architectures explained in MCP and AI Agents, where intelligent agents coordinate tools, maintain testing context, and orchestrate workflows.

Upskilling in AI testing pays off quickly. The KaneAI Certification is a solid way to validate your hands-on AI testing skills and stand out as a future-ready QA professional.

Key Takeaway: AI in QA is evolving toward agentic, continuous systems that operate across the full SDLC, not as a bolt-on to existing pipelines, but as an active participant in planning, execution, and reporting. Teams that build AI integration skills now will be positioned to lead as autonomous testing becomes the standard.

Key Takeaways

- AI in quality assurance is now mainstream. According to the ThinkSys QA Trends Report 2026, 77.7% of organizations use or plan to use AI in their QA processes, making adoption a baseline expectation, not an advantage.

- Self-healing and predictive defect analysis deliver the fastest ROI. Teams that start with these two capabilities see the sharpest reduction in maintenance effort and escaped defects before committing to a broader AI stack.

- Generative AI unlocks test authoring at scale. KaneAI lets teams write, debug, and evolve test cases using natural language, removing the bottleneck of manual scripting for teams under sprint pressure.

- Flaky tests are a signal, not an inconvenience. Test Intelligence surfaces unstable scripts before they block releases, so teams fix root causes instead of rerunning pipelines and hoping for green.

- Adoption follows a six-level maturity curve. Most teams sit at levels 2 or 3 (assisted and partial automation). Moving to level 4 or 5 requires structured integration, not just adding AI tools to an existing pipeline.

- Get started with the KaneAI getting-started docs to run your first AI-generated test in minutes, or review the full AI testing tools roundup to benchmark options before committing to a stack.
