AI API Testing Guide: How to Test APIs Smarter and Faster

Learn AI API testing to automate test generation, improve coverage, and optimize performance with modern tools, best practices, and real-world use cases.

Piyusha Podutwar

April 19, 2026

The rise of microservices and API-centered architectures has made reliable system testing significantly more complex. Traditional methods like manual testing and scripted automation are becoming less effective in terms of speed, coverage, and scalability.

To bridge this gap, artificial intelligence and machine learning are increasingly being integrated throughout the API testing process. This article discusses key developments in this space: NLP-driven test case generation, predictive bug detection through anomaly detection models, defect-informed test suite optimization, and adaptive testing powered by reinforcement learning.

Overview

How Is AI Used in API Testing?

AI in API testing brings smart capabilities that go beyond traditional scripting. It automatically generates test cases from API structures, self-heals tests when endpoints change, simulates realistic traffic for performance checks, strengthens security by learning from evolving threats, prioritizes execution based on risk, and expands coverage by uncovering edge cases that manual testing often misses.

What Role Does AI Play in API Automation Testing?

- Autonomous Test Case Generation: Produces test cases from API schemas, documentation, and real traffic patterns, cutting down the manual effort needed to build suites.

- Test Case Execution: Runs tests automatically and performs exploratory checks through fuzzing and edge-case generation to improve speed, reliability, and security.

- Anomaly Detection: Identifies behavioral anomalies, regressions, and unusual responses while ranking tests by risk and business impact.

- Test Optimization and Coverage: Pinpoints gaps, recommends new test cases, and applies prioritization and impact analysis to remove redundancy and improve efficiency.

- Smart Test Maintenance: Detects and resolves schema mismatches automatically so tests continue running as APIs evolve.

How Do You Use AI Agents for API Testing?

- Organisation and Configuration: Define what quality means for your API, mirror production environments, enable detailed logging, and prepare real-world and edge-case data.

- Quality and Functional Testing: Validate status codes, schemas, authentication, and rate limits, then apply semantic checks to evaluate response coherence and correctness.

- Security, Safety, and Equality: Test for bias across varied inputs, simulate adversarial prompts and jailbreaks, and confirm restricted content is handled according to safety policies.

- Continuous Testing and Performance: Monitor SLAs across response-time percentiles, run load tests, and integrate regression suites into CI/CD pipelines.

- Tools and Analysis: Generate reports on functionality, quality, bias, and performance while combining tools like Postman, KaneAI, and Akto for deeper investigation.

What Role Does KaneAI Play in Scaling API Testing?

- API Testing: Validates request payloads, response behavior, and status codes with detailed logs for tracing condition-specific failures.

- End-to-End Validation: Confirms that changes at the API layer do not break connected web or mobile interfaces.

- Data Validation: Verifies backend data consistency to catch cases where APIs return success but fail to persist or process data correctly.

- Self-Healing Tests: Adapts automatically to changes in API responses, schemas, and flows, reducing maintenance as APIs evolve.

- PR-Based Validation: Triggers test runs on every pull request to catch regressions early and deliver feedback inside the development workflow.

- Integrated Issue Tracking: Logs failures instantly, keeping debugging and resolution within a single connected workflow.

What is AI-Driven API Testing?

AI-driven API testing uses machine learning and natural language processing to automatically generate, execute, and optimize API tests, detect anomalies, and expand coverage with minimal effort.

AI-driven API testing is the use of Artificial Intelligence (AI) techniques such as machine learning and natural language processing to automatically generate, execute, and optimize API test cases, detect anomalies, and improve test coverage with minimal human intervention. At its core, AI API testing shifts quality assurance from a reactive, manual discipline to a proactive, data-driven one.

What is the use of AI in API Testing?

AI in API testing automates test generation, self-heals broken scripts, detects anomalies, expands coverage, and prioritizes execution to deliver faster, more reliable software releases.

APIs are an essential component of modern software, whether it be two microservices exchanging data or a mobile app sending data to the cloud. Understanding what AI API testing brings to this environment is the foundation for adopting it effectively.

For a deeper understanding of API testing in software development and testing, refer to this detailed API testing tutorial.

Let us understand the basics before discussing its key features.

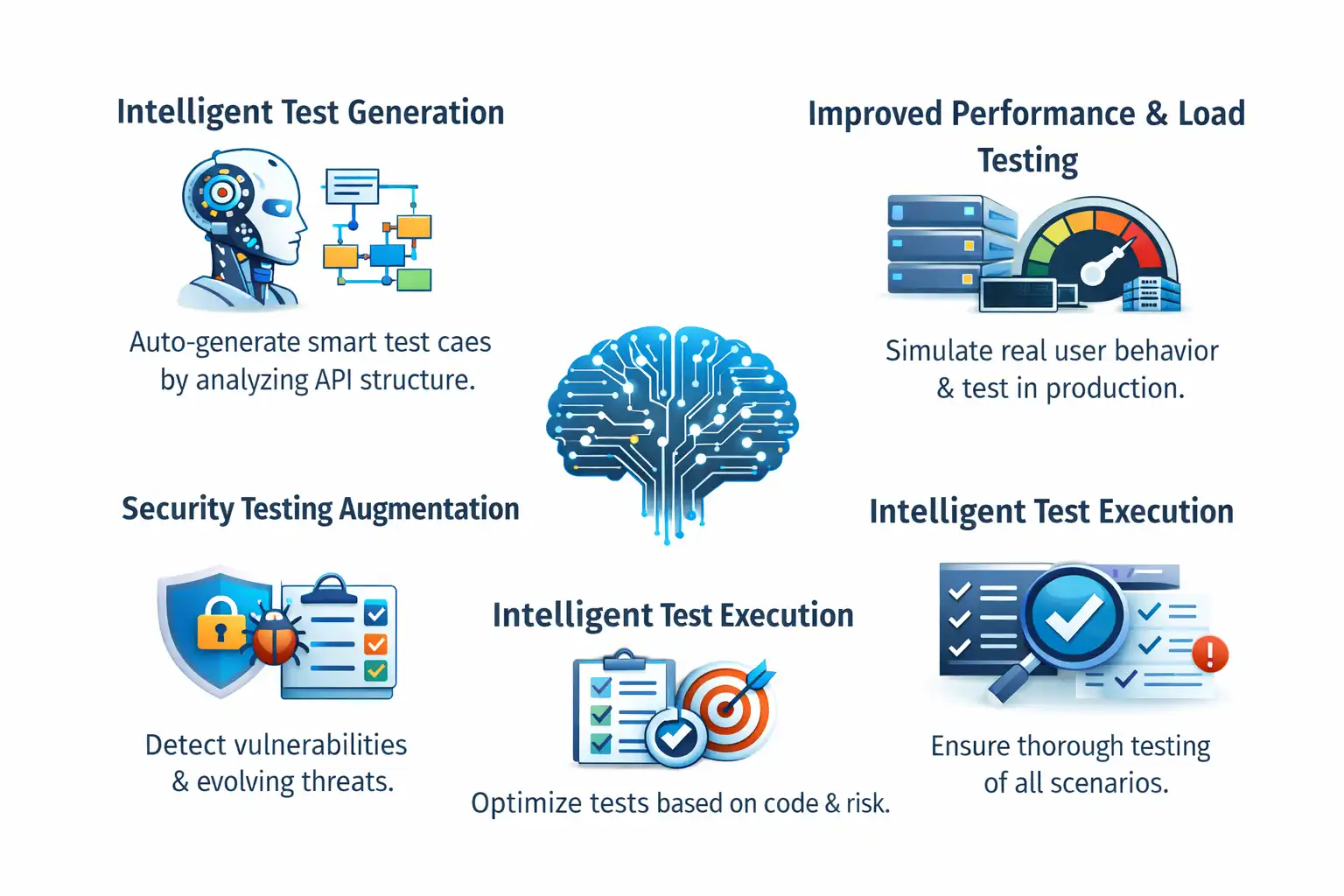

Key Features of AI API Testing

The following are key features of AI API Testing.

- Intelligent Test Generation: A key advantage of AI API testing is its ability to automatically generate test cases by analyzing the API's structure. Unlike traditional methods that rely on manual effort, AI creates smart, system-wide test scenarios, making the setup faster and more efficient.

- Self-Healing Test Maintenance: Maintaining tests can be complex, but AI simplifies it by detecting changes in API endpoints and automatically updating affected test scripts. This reduces fragility and minimizes ongoing maintenance for QA teams.

- Improved Performance and Load Testing: Traditional tests rely on static load patterns that don't reflect real usage. AI enhances performance testing by simulating realistic user behavior, dynamically adjusting load, and identifying bottlenecks before they impact production, while also supporting safe testing in production scenarios.

- Security Testing Augmentation: By learning from evolving threat behaviors, AI-driven security testing goes beyond rule-based scanners and continuously analyzes APIs for vulnerabilities, including injection attacks, broken authentication, and data exposure.

- Intelligent Test Execution: AI prioritizes test execution based on code changes, risk assessment, and historical failure patterns, optimizing test runs for quick feedback loops while tracking functional coverage.

- Increased Test Coverage: Finally, AI identifies untested scenarios, recommends additional test cases for risk areas, and broadens coverage of edge cases, boundary conditions, positive scenarios, and negative scenarios that manual testers may overlook.

AI empowers QA engineers rather than replacing them. AI-driven API testing helps teams deliver faster and more securely by automating the repetitive and surfacing the critical.
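To make intelligent test generation more concrete, here is a minimal sketch that derives valid, boundary, and invalid payloads from a field schema. It uses fixed rules rather than a trained model, and the `SCHEMA` layout and `generate_cases` helper are hypothetical names invented for this illustration, not part of any tool mentioned in this guide.

```python
from typing import Any, Dict, List

# Hypothetical, simplified schema for a request body with one numeric field.
SCHEMA: Dict[str, Dict[str, Any]] = {
    "quantity": {"type": int, "minimum": 1, "maximum": 1000},
}

def generate_cases(schema: Dict[str, Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Derive valid, boundary, and invalid payloads from a field schema."""
    cases: List[Dict[str, Any]] = []
    for field, rules in schema.items():
        low, high = rules["minimum"], rules["maximum"]
        # Values inside the allowed range, including both boundaries.
        for value in (low, (low + high) // 2, high):
            cases.append({"payload": {field: value}, "expect_valid": True})
        # Values just outside the range, a wrong type, and a null.
        for value in (low - 1, high + 1, "not-a-number", None):
            cases.append({"payload": {field: value}, "expect_valid": False})
        # A payload with the field missing entirely.
        cases.append({"payload": {}, "expect_valid": False})
    return cases

cases = generate_cases(SCHEMA)
```

An AI-based generator would typically go further, for example by learning likely values from real traffic, but the output has the same shape: a list of payloads paired with the expected outcome.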

What are the different types of tests in AI API testing?

The main types of AI API testing are functional, security, integration, contract, and performance testing, each targeting risks unique to AI systems and non-deterministic outputs.

Testing AI APIs is different from traditional APIs because responses are not always fixed. Even valid requests can produce varied outputs, making accuracy harder to evaluate.

Because of this, AI API testing focuses more on consistency and overall response behavior rather than exact matches.

Each test type is designed to address specific risk areas that are unique to AI systems.

To effectively test AI APIs, you need a comprehensive approach that goes beyond traditional methods. The key test categories to consider are:

- Functional Testing: Functional tests verify API responses and behavior against expected outcomes, such as accurate predictions from an AI model. This includes validating request and response formats, error handling, and business logic, like ensuring a text-generation API produces logical output.

- Security Testing: API security testing identifies and mitigates vulnerabilities and potential risks. It focuses on content filtering, data privacy, authentication, and authorization.

- Integration Testing: Integration testing ensures the API works seamlessly with other components like databases, messaging queues, or external systems. Unlike functional testing, which isolates endpoints, integration testing validates interactions across dependencies.

- Contract Testing: Contract testing validates the provider-consumer agreement. It helps prevent breaking changes, reduces ambiguity in API behavior, and ensures consistency when data structures evolve.

- Performance Testing: Performance tests evaluate how an API handles high load, large data volumes, and concurrent users. The goal is to ensure optimal response time and resource usage through scenarios like stress and load testing.
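Of the test types above, contract testing is the easiest to sketch in a few lines. The example below checks a decoded JSON response against an expected set of fields and types. The `PRODUCT_CONTRACT` mapping and the `contract_violations` helper are hypothetical, and real contract-testing tools usually work from a shared specification rather than a hand-written dictionary.

```python
from typing import Any, Dict, List

# Hypothetical contract: field name -> allowed Python types after JSON decoding.
PRODUCT_CONTRACT: Dict[str, tuple] = {
    "id": (int,),
    "name": (str,),
    "stock": (int,),
    "price": (int, float),
}

def contract_violations(response: Dict[str, Any], contract: Dict[str, tuple]) -> List[str]:
    """Return human-readable violations; an empty list means the contract holds."""
    problems: List[str] = []
    for field, allowed in contract.items():
        if field not in response:
            problems.append(f"missing field: {field}")
        # bool is a subclass of int in Python, so reject it explicitly.
        elif isinstance(response[field], bool) or not isinstance(response[field], allowed):
            problems.append(f"wrong type for {field}: {type(response[field]).__name__}")
    return problems

good = {"id": 1, "name": "Wireless Mouse", "stock": 120, "price": 29.99}
bad = {"id": 1, "name": "Wireless Mouse", "stock": "120"}
```

Because the check looks only at structure and types, it stays useful even when the content of an AI-generated field varies from call to call.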

What is the role of AI in API automation testing?

AI in API automation testing applies machine learning to design, execute, manage, and optimize end-to-end API tests, improving speed, coverage, and reliability over traditional methods.

AI in API automation testing refers to the application of machine learning algorithms and intelligent automation techniques to design, execute, manage, and continuously optimize the end-to-end testing of application programming interfaces.

Each role described below directly contributes to what makes AI API testing more capable than traditional API testing.

- Autonomous Test Case Generation: AI generates test cases from API schemas, documentation, and traffic patterns, reducing manual effort.

- Test Case Execution: AI automates execution and performs exploratory testing using fuzzing and edge-case generation, improving speed, reliability, and security.

- Anomaly Detection: AI detects behavioral anomalies, regressions, and response issues, while prioritizing tests based on risk and business impact.

- Test Optimization and Coverage: AI identifies testing gaps, suggests additional test cases, and uses techniques like test case prioritization and test impact analysis to reduce redundancy and improve efficiency.

- Smart Test Maintenance: AI handles schema changes by automatically detecting and updating mismatches, ensuring tests continue running without interruption.
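The anomaly detection role can be illustrated with a simple statistical stand-in. The sketch below flags response times whose z-score against a baseline exceeds a threshold. Production tools generally use learned models instead of a fixed z-score, and the `find_anomalies` helper and the sample numbers are assumptions made up for this example.

```python
from statistics import mean, stdev
from typing import List

def find_anomalies(baseline: List[float], observed: List[float],
                   threshold: float = 3.0) -> List[float]:
    """Flag observed values whose z-score against the baseline exceeds the threshold."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A flat baseline has no spread, so treat any deviation as anomalous.
        return [x for x in observed if x != mu]
    return [x for x in observed if abs(x - mu) / sigma > threshold]

# Hypothetical response times in milliseconds.
baseline_ms = [102.0, 98.0, 105.0, 99.0, 101.0, 97.0, 103.0, 100.0]
observed_ms = [101.0, 99.0, 480.0, 104.0]
anomalies = find_anomalies(baseline_ms, observed_ms)
```

Here only the 480 ms response stands out from the baseline, which is the kind of signal that would then be ranked by risk and business impact.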

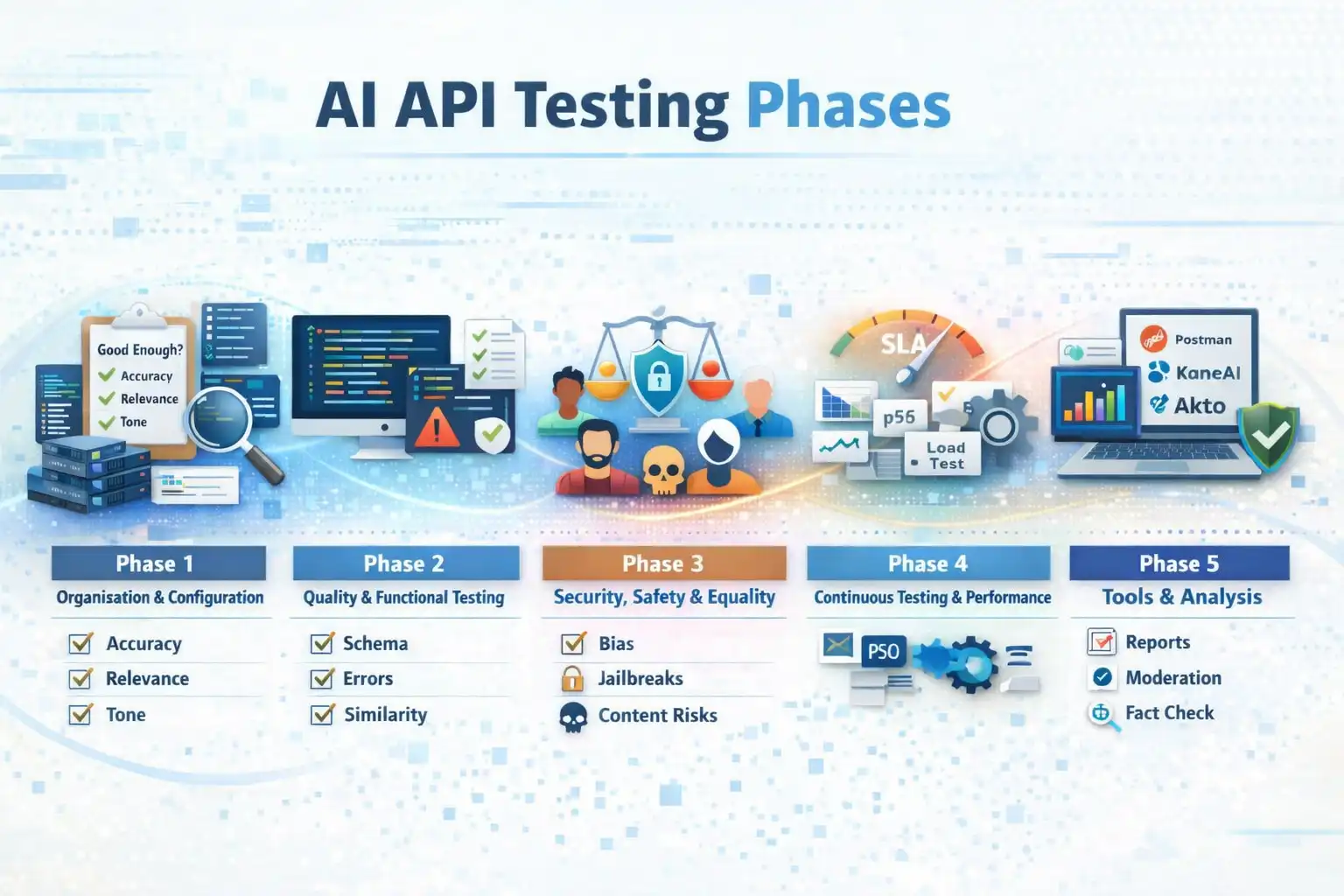

How to Perform AI API Testing

Perform AI API testing in five phases: setup and configuration, quality and functional testing, security and bias checks, continuous performance testing, and reporting with analysis tools.

Traditional APIs return the same result for the same request, but AI APIs can produce varied outputs.

AI API testing follows a structured approach from setup to continuous monitoring, creating a repeatable framework as APIs evolve.

Standard assertion-based testing is often ineffective due to variability in responses. Instead, the focus is on whether the output is relevant, accurate, unbiased, and meets safety standards.

Phase 1: Organisation and Configuration: Start by defining what "good enough" means for your API, including accuracy, relevance, and tone. Set up environments that closely reflect production, including authentication and rate limits. Enable detailed logging to capture requests, responses, timing, and quality signals. Include real-world, edge, and conflicting scenarios in your test data.

Phase 2: Quality and Functional Testing: Validate the basics first: status codes, schema alignment, authentication, and rate limits. Test how the API handles invalid inputs such as missing fields, large payloads, and incorrect data types, ensuring error messages are clear. For quality, use semantic similarity methods like Sentence-BERT to compare responses when wording varies. Evaluate outputs for coherence, usefulness, and factual correctness using supporting validation tools where needed.
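For the Phase 2 quality check, the idea of comparing responses by meaning rather than exact wording can be sketched as follows. Note the substitution: this example uses a plain bag-of-words cosine similarity from the standard library, not Sentence-BERT, so it only captures word overlap. In practice you would replace `cosine_similarity` with a comparison of embedding vectors. The function names and the 0.6 threshold are assumptions.

```python
import math
import re
from collections import Counter

def cosine_similarity(text_a: str, text_b: str) -> float:
    """Cosine similarity between the word-count vectors of two texts (0.0 to 1.0)."""
    words_a = Counter(re.findall(r"[a-z0-9]+", text_a.lower()))
    words_b = Counter(re.findall(r"[a-z0-9]+", text_b.lower()))
    dot = sum(words_a[w] * words_b[w] for w in words_a.keys() & words_b.keys())
    norm_a = math.sqrt(sum(c * c for c in words_a.values()))
    norm_b = math.sqrt(sum(c * c for c in words_b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def is_similar(expected: str, actual: str, threshold: float = 0.6) -> bool:
    """Pass when the two responses overlap enough, even if the wording differs."""
    return cosine_similarity(expected, actual) >= threshold

reference = "The wireless mouse is in stock with 120 units available."
reworded = "There are 120 units of the wireless mouse available in stock."
unrelated = "Your password has been reset successfully."
```

With these samples, `is_similar(reference, reworded)` passes and `is_similar(reference, unrelated)` fails, which is the behavior an exact-match assertion cannot give you.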

Phase 3: Security, Safety, and Equality: Test for bias by varying inputs such as gender, age, or race and checking if responses remain fair and consistent. For security, simulate jailbreaks, prompt injections, and adversarial inputs. Verify that harmful or restricted content is properly detected and handled according to safety policies.
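A bias check from Phase 3 can be approximated by sending the same prompt with only one attribute changed and comparing the results. In this sketch, `fake_score` is a hypothetical stand-in for a real AI API call and is deliberately biased so the probe has something to detect. The prompt template and the 0.05 tolerance are also assumptions.

```python
from typing import Callable, Dict, List, Tuple

def fake_score(prompt: str) -> float:
    """Hypothetical stand-in for an AI API call; returns a score between 0 and 1."""
    # Deliberately biased against one age group so the probe has something to find.
    return 0.55 if "65-year-old" in prompt else 0.80

def bias_gap(template: str, variants: List[str],
             score: Callable[[str], float]) -> Tuple[Dict[str, float], float]:
    """Score one prompt with only the attribute changed; return scores and their spread."""
    scores = {v: score(template.format(attribute=v)) for v in variants}
    return scores, max(scores.values()) - min(scores.values())

template = "Rate this support request from a {attribute} customer for priority."
scores, gap = bias_gap(template, ["25-year-old", "45-year-old", "65-year-old"], fake_score)
is_fair = gap <= 0.05  # hypothetical tolerance; pick one that suits your domain
```

A single gap value is a coarse signal. It tells you where to look, not why the outputs differ, so flagged cases still need human review.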

Phase 4: Continuous Testing and Performance: Define SLAs and monitor response times across key percentiles (p50, p95, p99). Run load tests to identify limits and optimize cost versus performance. Build regression test suites and integrate them into CI/CD pipelines to ensure consistent validation with every change.
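The percentile monitoring in Phase 4 comes down to a small calculation. The sketch below uses the nearest-rank method to compute p50, p95, and p99 and compares them with SLA limits. The latency samples and the limits are hypothetical, and other percentile definitions (for example, interpolated ones) will give slightly different values.

```python
import math
from typing import Dict, List, Tuple

def percentile(samples: List[float], pct: float) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]

def check_sla(samples: List[float], limits: Dict[str, Tuple[float, float]]) -> Dict[str, bool]:
    """Compare each percentile against its limit; True means that target is met."""
    return {name: percentile(samples, pct) <= limit
            for name, (pct, limit) in limits.items()}

# Hypothetical latencies (ms) and SLA limits as (percentile, max milliseconds).
latencies_ms = [120, 135, 110, 980, 140, 125, 130, 115, 150, 145]
sla = {"p50": (50, 200), "p95": (95, 500), "p99": (99, 1000)}
report = check_sla(latencies_ms, sla)
```

In this sample the median looks healthy, but one slow request is enough to break the p95 target, which is why averages alone are not a reliable SLA signal.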

Phase 5: Tools and Analysis: Generate reports covering functionality, quality, bias, and performance. When issues occur, identify patterns and root causes. Use tools like Postman for API workflows, KaneAI for natural language testing, and Akto for security validation. Combine these with moderation, fact-checking, and sentiment analysis tools, and build custom evaluations for domain-specific needs.

How to Use AI Agents for AI API Testing

Use AI agents in API testing to automate test generation, adapt to API changes, integrate with workflows, and continuously validate API behavior across evolving systems. These are practical AI agent use cases in modern testing environments.

The manual workflow below covers one developer, one bug, one fix. That is the entry point. But modern systems evolve constantly, and every new endpoint, schema update, or deployment introduces potential risks.

This section shows how AI agents can support API testing by moving from manual debugging to continuous, intelligent validation without requiring extensive scripting or rework.

The API: A FastAPI Product Inventory Service

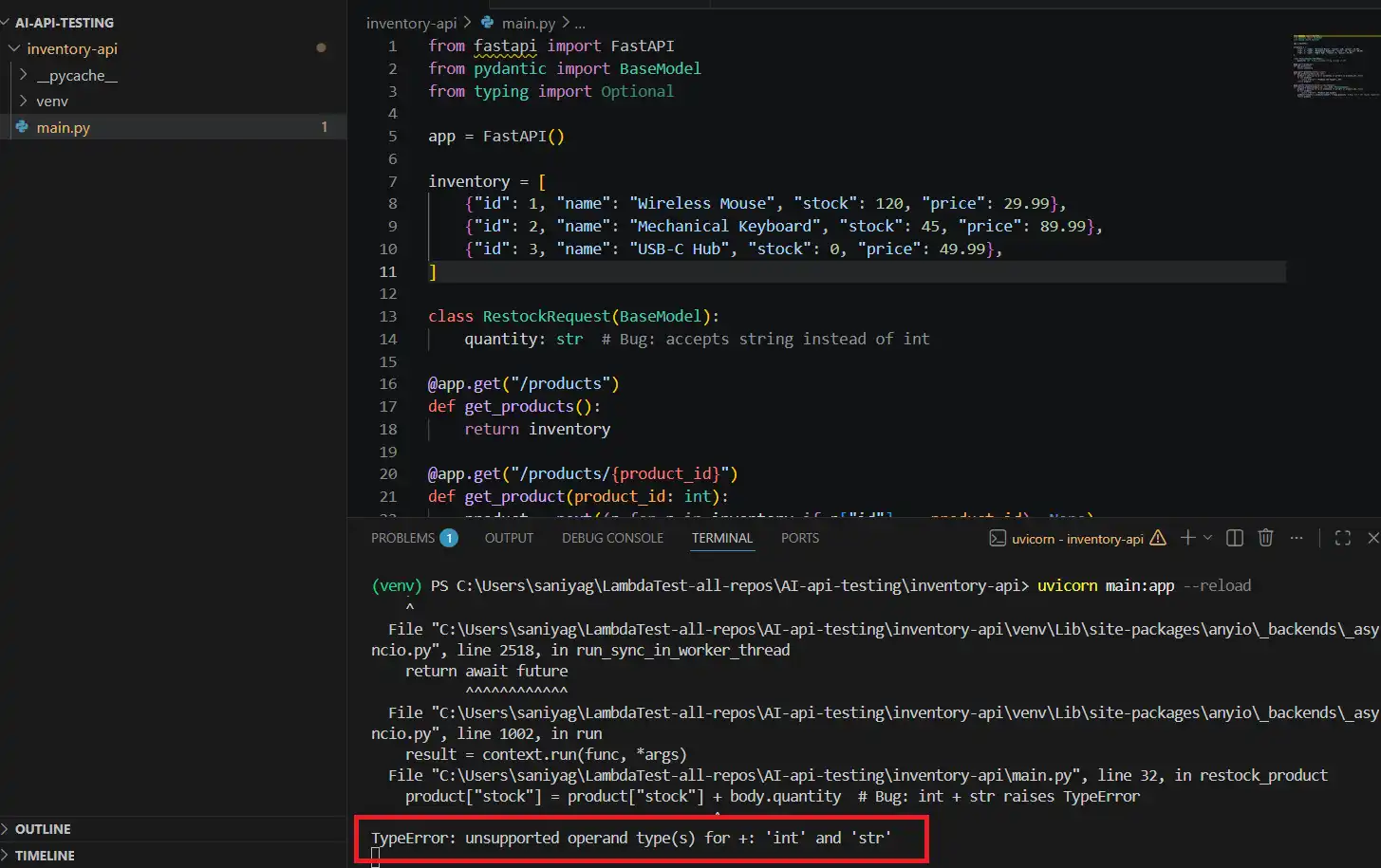

This is the service you will use throughout both tracks. It is a simple Python-based inventory API with an intentional bug built in. The bug passes basic smoke tests but fails under real-world usage.

Working with a deliberately broken API helps you understand what AI API testing is actually solving.

Prerequisites

- Python: 3.9 or above, download from python.org

- Postman: Desktop app installed, downloaded from postman.com

- Terminal: Any terminal to run the server

- KaneAI account: Free account, needed for the KaneAI track

The first (manual) track walks you through running the API locally, triggering the bug, and using an AI tool to identify the root cause and fix it. It represents the starting point of any AI API testing workflow, where AI assistance meets hands-on developer debugging.

- Project Setup: Open your terminal and run the following commands.

    mkdir inventory-api
    cd inventory-api
    python -m venv venv
    source venv/bin/activate  # On Windows: venv\Scripts\activate
    pip install fastapi uvicorn

- Create the API File: Inside the inventory-api folder, create a new file called main.py and paste this code exactly.

    from fastapi import FastAPI
    from pydantic import BaseModel
    from typing import Optional

    app = FastAPI()

    inventory = [
        {"id": 1, "name": "Wireless Mouse", "stock": 120, "price": 29.99},
        {"id": 2, "name": "Mechanical Keyboard", "stock": 45, "price": 89.99},
        {"id": 3, "name": "USB-C Hub", "stock": 0, "price": 49.99},
    ]

    class RestockRequest(BaseModel):
        quantity: str  # Bug: accepts string instead of int

    @app.get("/products")
    def get_products():
        return inventory

    @app.get("/products/{product_id}")
    def get_product(product_id: int):
        product = next((p for p in inventory if p["id"] == product_id), None)
        if not product:
            return {"error": "Product not found"}, 404
        return product

    @app.patch("/products/{product_id}/restock")
    def restock_product(product_id: int, body: RestockRequest):
        product = next((p for p in inventory if p["id"] == product_id), None)
        if not product:
            return {"error": "Product not found"}
        product["stock"] = product["stock"] + body.quantity  # Bug: int + str raises TypeError
        return product

- Run the Server: Start the API from the same folder.

    uvicorn main:app --reload

You should see:

INFO: Uvicorn running on http://127.0.0.1:8000

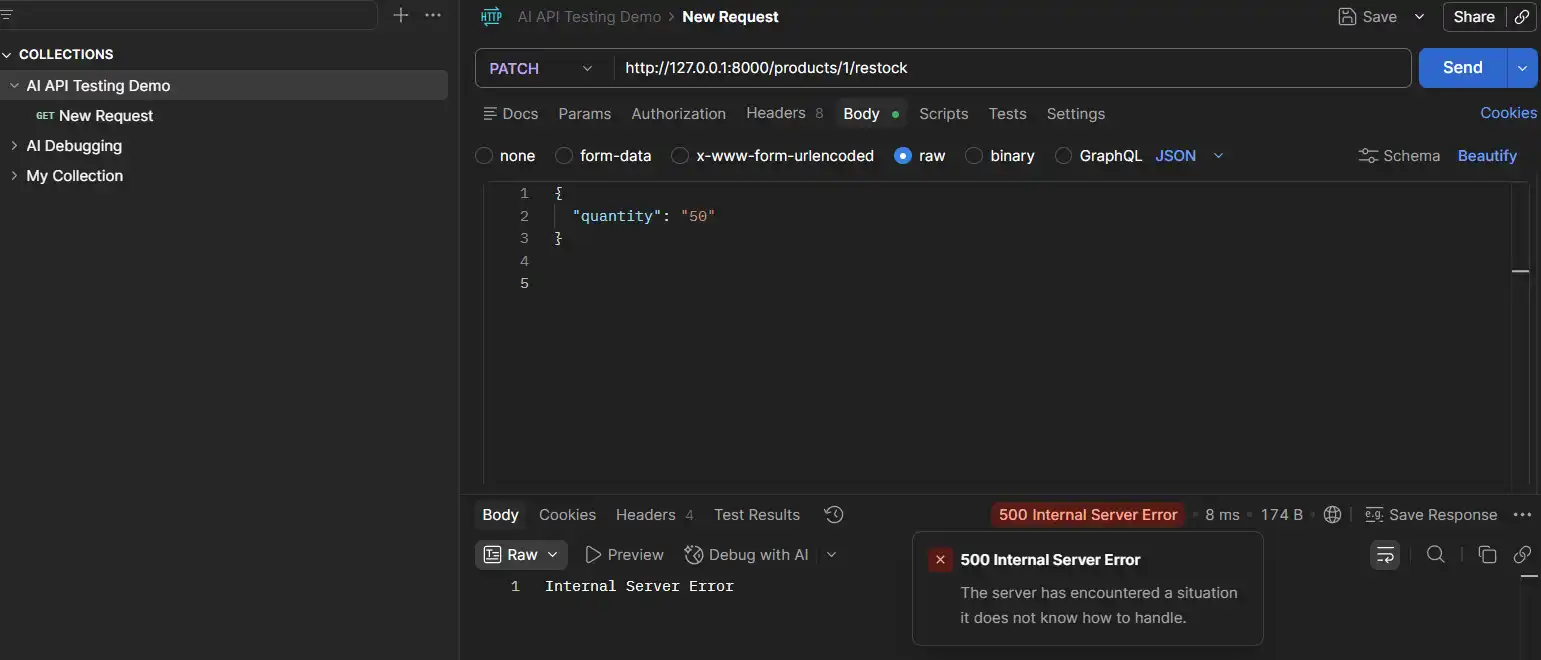

Scenario: PATCH /products/{product_id}/restock returns 500

The bug lives in the restock endpoint. The RestockRequest model accepts quantity as a str instead of int. Everything looks fine until you actually send a restock request.

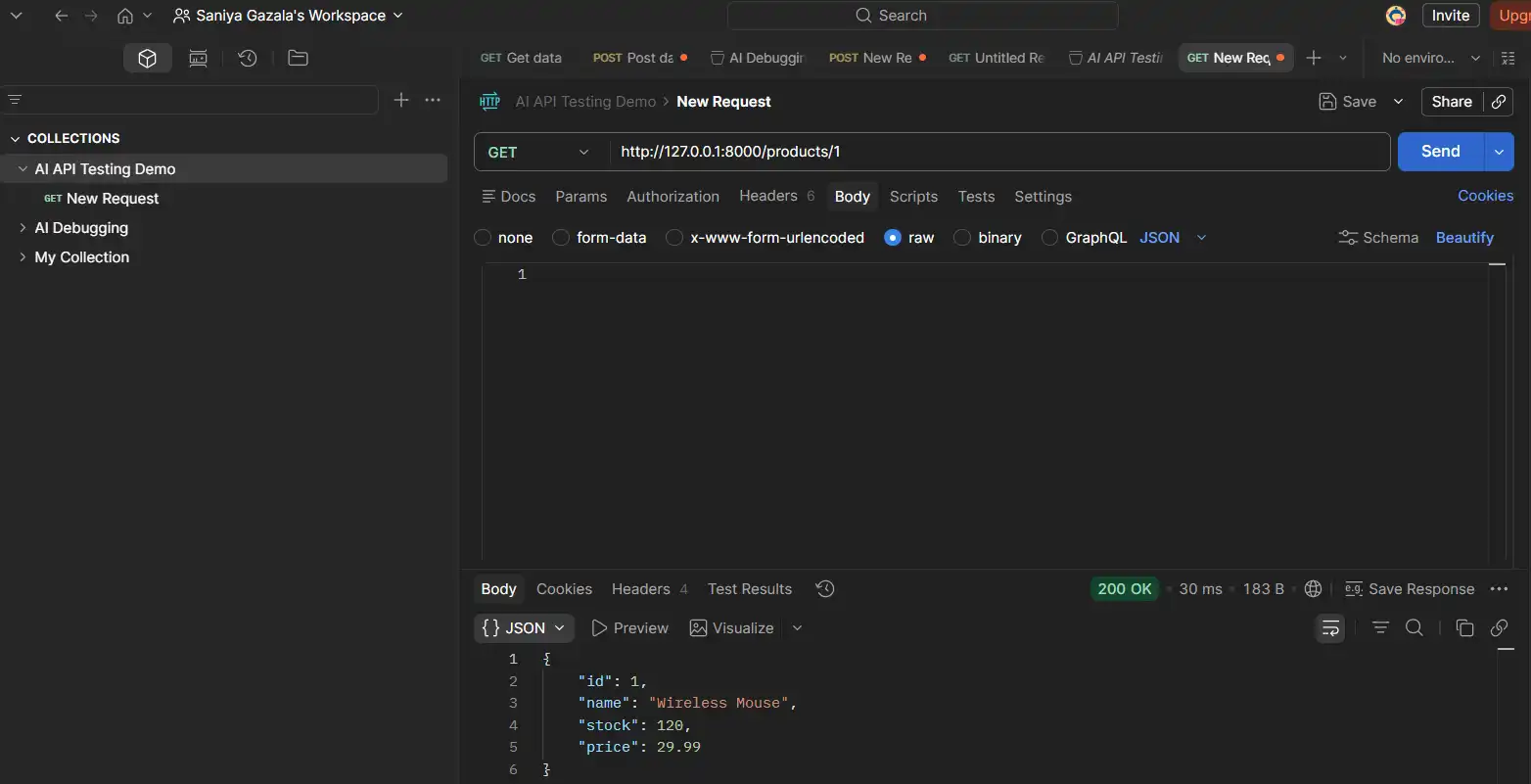

Step 1: Confirm the GET works

Open Postman and create a new request with these parameters.

- Method: GET

- URL: http://127.0.0.1:8000/products/1

- Click Send

Result: You get a clean 200 with the Wireless Mouse record. Nothing looks broken yet.

Step 2: Trigger the bug

Create a new request with these parameters.

- Method: PATCH

- URL: http://127.0.0.1:8000/products/1/restock

- Body: Select raw and JSON, then paste:

    {
        "quantity": "50"
    }

- Click Send

Result: The server returns 500, and the terminal shows this error:

    TypeError: unsupported operand type(s) for +: 'int' and 'str'

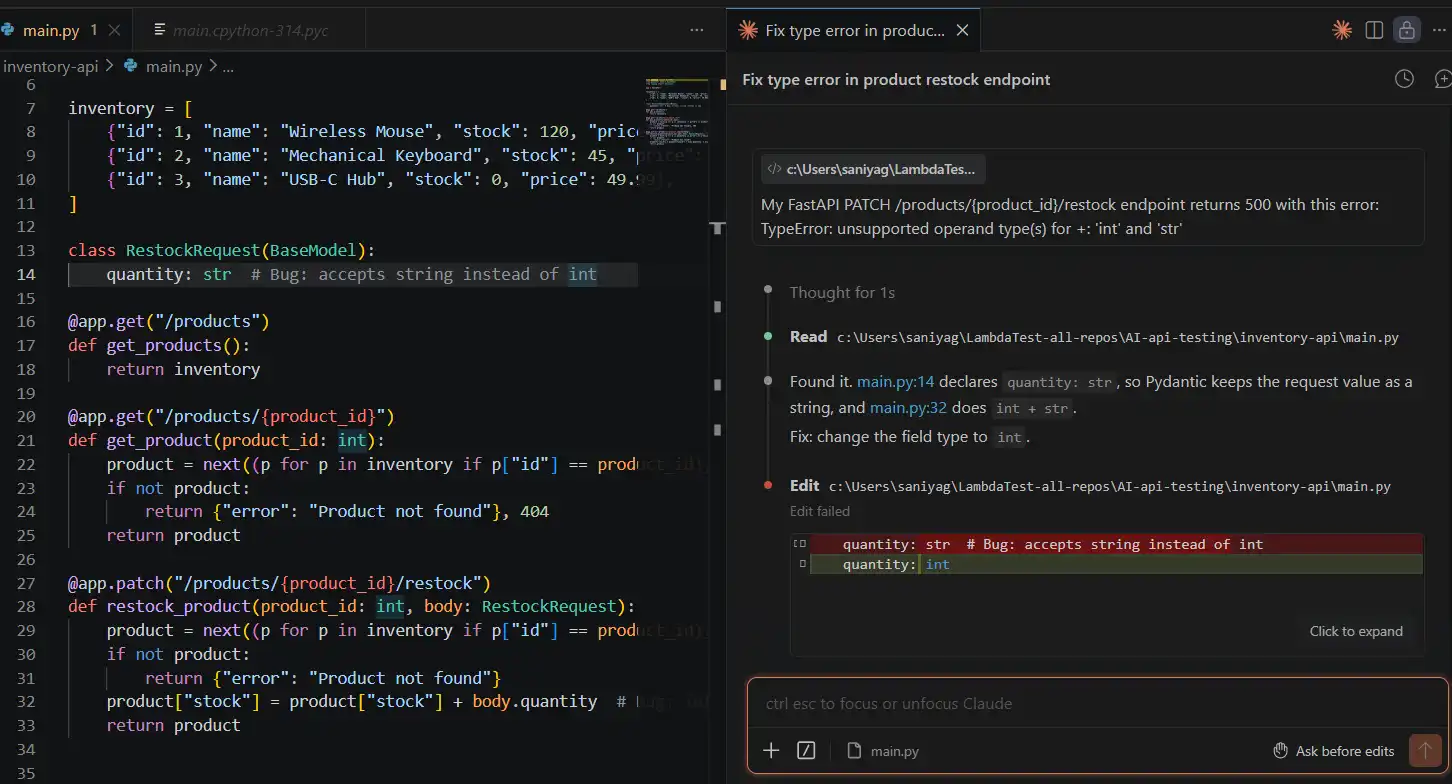

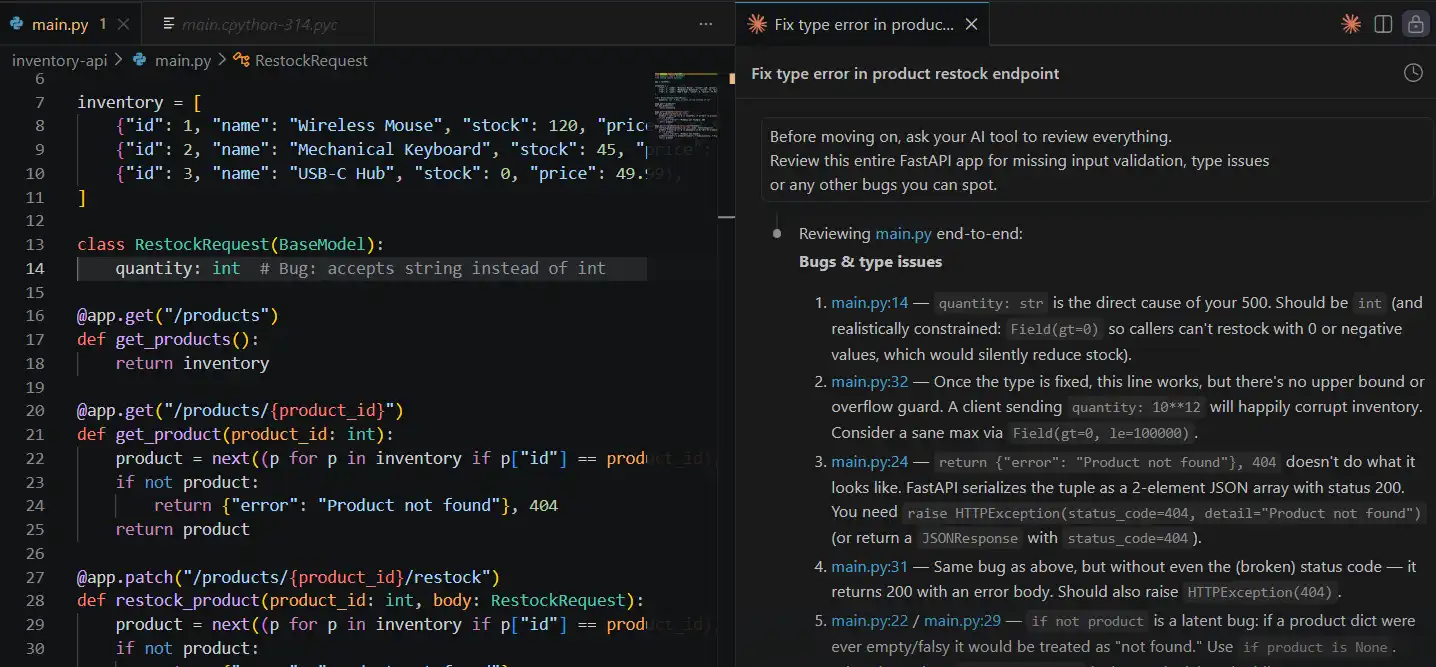
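If you prefer a script to Postman, the same request can be built with Python's standard library. This is a minimal sketch: `build_restock_request` and `send` are hypothetical helper names, and `send` only works while the local server from the setup step is running.

```python
import json
import urllib.error
import urllib.request

BASE_URL = "http://127.0.0.1:8000"  # local Uvicorn address from the setup step

def build_restock_request(product_id: int, quantity) -> urllib.request.Request:
    """Build the same PATCH request that Step 2 sends from Postman."""
    body = json.dumps({"quantity": quantity}).encode("utf-8")
    return urllib.request.Request(
        url=f"{BASE_URL}/products/{product_id}/restock",
        data=body,
        method="PATCH",
        headers={"Content-Type": "application/json"},
    )

def send(request: urllib.request.Request) -> int:
    """Send the request and return the HTTP status code (needs the server running)."""
    try:
        with urllib.request.urlopen(request, timeout=5) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code  # 4xx and 5xx responses arrive as exceptions

request = build_restock_request(1, "50")
```

With the buggy server running, calling `send(request)` should return the same 500 status that Postman shows.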

Step 3: AI Debugging in Action

This is a practical example of how AI debugging helps identify root causes faster by analyzing errors and suggesting fixes in real time.

Copy the error from your terminal. Open your AI tool (for example Claude, Copilot Chat, or ChatGPT) and paste this prompt exactly.

My FastAPI PATCH /products/{product_id}/restock endpoint returns 500 with this error:

TypeError: unsupported operand type(s) for +: 'int' and 'str'

Here is the relevant code that is causing the error.

    class RestockRequest(BaseModel):
        quantity: str  # Bug: accepts string instead of int

    @app.patch("/products/{product_id}/restock")
    def restock_product(product_id: int, body: RestockRequest):
        product = next((p for p in inventory if p["id"] == product_id), None)
        if not product:
            return {"error": "Product not found"}
        product["stock"] = product["stock"] + body.quantity
        return product

The request body sends quantity as "50" (a JSON string). What is the root cause, and how do I fix it?

What AI identifies: The RestockRequest model declares quantity: str, so Pydantic accepts string values without complaint. When the route tries to add product["stock"] (an int) to body.quantity (a str), Python raises a TypeError. The fix is to change the type annotation to int.

With that change, Pydantic coerces valid numeric strings such as "50" to integers and rejects non-numeric input, which FastAPI reports as a 422 validation error before the request reaches your logic.

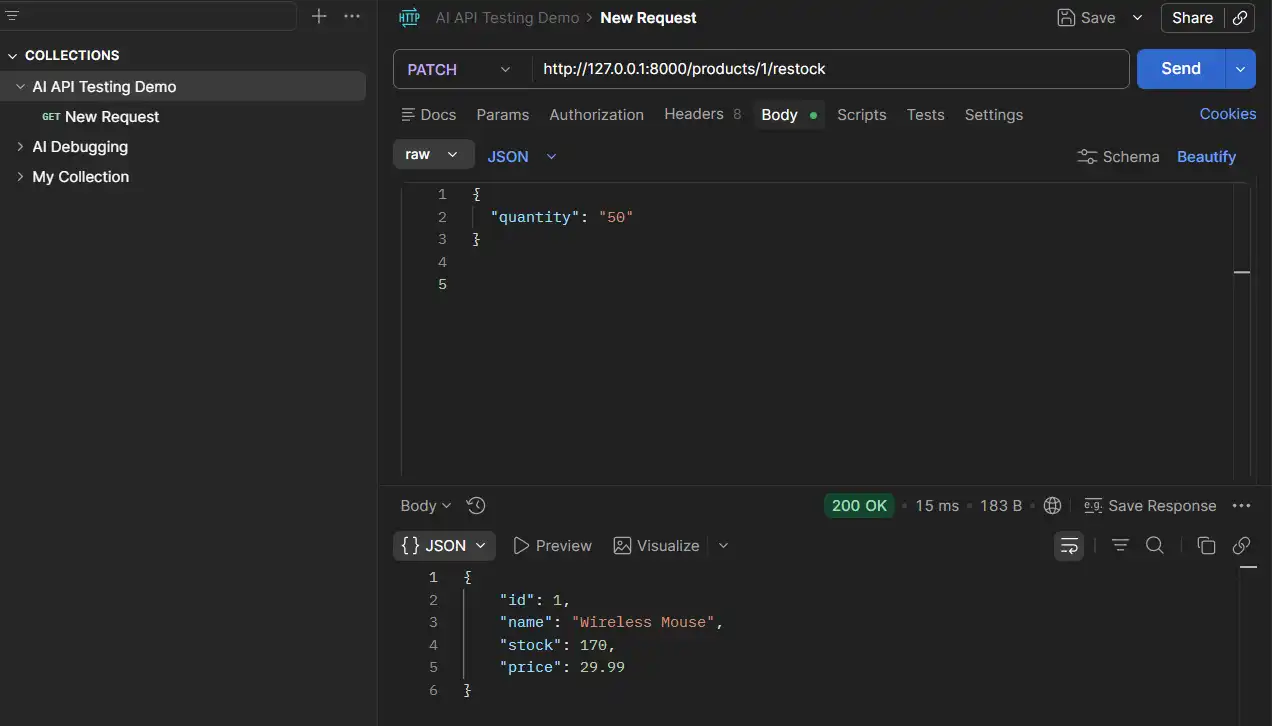

Step 4: Apply the Fix

Change the RestockRequest model in main.py.

    class RestockRequest(BaseModel):
        quantity: int  # Fixed: Pydantic now validates and coerces correctly

Save the file. Uvicorn reloads automatically. Resend the PATCH request in Postman.

Result: The bug is fixed and the stock is updated correctly.

Step 5: Ask AI to audit the whole file

Before moving on, ask your AI tool to review everything.

Review this entire FastAPI app for missing input validation, type issues, or any other bugs you can spot.

What AI surfaces: The get_product route returns a tuple ({"error": "Product not found"}, 404) instead of raising an HTTPException or returning a JSONResponse with a 404 status. FastAPI silently serializes the tuple as a list, so clients receive a 200 with a malformed body instead of a real 404. This is the kind of bug that often surfaces only in production.
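You can see why the tuple turns into a list with plain JSON encoding. The snippet below uses the standard library's `json` module as a stand-in for FastAPI's own serialization, so treat it as an approximation of what happens inside the framework.

```python
import json

# What the buggy get_product route returns: a (body, status) tuple.
returned = ({"error": "Product not found"}, 404)

# JSON has no tuple type, so the tuple is encoded as an array.
# The 404 ends up inside the body instead of becoming the status code.
body = json.dumps(returned)
decoded = json.loads(body)
```

Here `body` is the string `[{"error": "Product not found"}, 404]`. A common FastAPI fix is to replace the return statement with `raise HTTPException(status_code=404, detail="Product not found")`, after importing `HTTPException` from `fastapi`.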

That is the manual track. One developer, one endpoint, two bugs, found and fixed in under 30 minutes.

But this workflow does not scale. The moment a second developer touches this API, or a new endpoint is added, or this service connects to a frontend, you need something that validates every layer, on every code change, automatically.

That is where API testing AI tools built for scale make the real difference, and where AI API testing moves from reactive to preventive.

To scale API testing, you need a cloud-based platform that can automatically validate APIs across environments, teams, and continuous code changes.

This is where tools like KaneAI by TestMu AI (formerly LambdaTest) come in. They enable teams to move beyond manual testing by using natural language to create, execute, and maintain tests at scale.

With AI-driven automation, test coverage expands, execution becomes faster, and issues are caught earlier, making API testing more proactive and reliable.

Where Does KaneAI Fit in Scaling API Testing?

KaneAI fits into scaling API testing as a GenAI-native agent that lets teams plan, author, and execute tests in natural language, simplifying validation of complex AI-driven APIs at scale.

KaneAI by TestMu AI is a GenAI-native testing agent designed to simplify AI test automation for modern API-driven systems. It enables teams to plan, author, and execute tests using natural language, reducing the effort required to validate complex AI APIs.

For AI API testing, KaneAI brings multiple layers of validation into a single workflow:

- API Testing: Validate request payloads, response behavior, and status codes, with detailed logs for tracing issues that appear under specific API conditions.

- End-to-End Validation: Ensure that changes at the API layer do not break downstream systems like web or mobile interfaces.

- Data Validation: Verify backend data consistency to catch cases where APIs return success responses but fail to persist or process data correctly.

- Self-Healing Tests: Automatically adapts to changes in API responses, schemas, or flows, reducing maintenance as APIs evolve.

- PR-Based Validation: On every code change, tests can be triggered to validate API behavior, helping catch regressions early and providing clear feedback within the development workflow.

- Integrated Issue Tracking: Failures are captured and logged instantly, keeping debugging and resolution within a single, connected workflow.

Instead of validating APIs in isolation, KaneAI helps teams continuously test behavior, reliability, and edge cases across the entire system, making AI API testing more scalable and consistent.

What Changed Between the Two Tracks

This comparison shows exactly where manual API testing with AI assistance ends and where automated, scalable AI API testing tools take over. The shift is not just about speed; it is about coverage and confidence on every single code change.

| Aspect | Local (Manual) | KaneAI (Scaled) |
|---|---|---|
| Test authoring | Postman, by hand | Plain English in KaneAI |
| Bug detection | After it surfaces in testing | On every pull request |
| Negative test coverage | Written manually if remembered | Generated alongside the happy path |
| Regression protection | Re-run manually after each fix | Runs automatically on every change |
| Team visibility | Lives on one developer's machine | Shared, reportable, CI/CD integrated |

You can explore KaneAI's full API Testing and Network Assertions capability, which lets you validate both frontend behavior and backend API responses in a single test flow.

What tools support AI-driven API testing?

Tools that support AI-driven API testing include KaneAI, Postman (Postbot), Akto, Parasoft SOAtest, and Tricentis Tosca, which together cover AI-specific validation, security, automation, and performance testing.

AI API testing is evolving quickly to meet modern testing needs. New tools are being developed to handle the unique challenges of testing both AI-powered and traditional APIs.

Choosing the right API testing tool is a critical decision for any AI API testing strategy. The tools below show how AI assistance for developers is changing the way API tests are written, run, and maintained.

KaneAI by TestMu AI (formerly LambdaTest)

KaneAI is an AI-powered test agent within TestMu AI that enables test creation and execution using natural language across web, mobile, and APIs.

Key AI Features: Natural language test creation, smart execution, auto-healing, AI test generation, analytics, and visual regression testing.

Suitable For: Teams looking for AI-driven, cloud-based testing with automation and orchestration capabilities.

Postman (Postbot)

Postbot is Postman's AI assistant that enhances API testing, documentation, and collaboration.

Key AI Features: AI test generation, intelligent documentation, smart autocomplete, anomaly detection, and test optimization.

Suitable For: Development and QA teams using Postman who want AI-assisted workflows across the SDLC.

Akto

Akto is an open-source, AI-native API security testing platform designed for modern DevSecOps teams. It automatically discovers APIs, tests for vulnerabilities, and enforces security during development and runtime.

Key AI Features: Autonomous API discovery, AI-driven vulnerability testing, GenAI security testing, sensitive data detection, automated red teaming, and CI/CD integration.

Suitable For: Application security and DevSecOps teams needing continuous, automated API security coverage.

Parasoft SOAtest

Parasoft SOAtest is an enterprise-grade, AI-augmented API testing platform covering functional, security, and performance testing across complex systems.

Key AI Features: Natural language test generation, smart parameterization, ML-driven test impact analysis, schema-change automation, non-deterministic validation, and service virtualization.

Suitable For: Enterprise and regulated environments managing complex or legacy API systems.

Tricentis Tosca

Tricentis Tosca is an enterprise AI-powered test automation platform that uses a model-based approach to generate test cases without manual scripting.

Key AI Features: Vision AI for codeless automation, model-based test generation, risk-based testing, test impact analysis, and CI/CD integration.

Suitable For: Large enterprises with complex systems such as SAP, legacy environments, and regulated industries.

Case Studies: AI API Testing in Action

Real-world case studies show AI API testing helping Netflix validate recommendation quality across frequent deployments and helping Capital One shorten security assessment cycles in a regulated industry.

Examining real implementations across many industries makes the theoretical benefits associated with AI-powered API testing tangible.

These case studies highlight AI in action, showing how enterprises have successfully implemented AI API testing strategies, the challenges they encountered, and the quantifiable results achieved.

Netflix: APIs for Customization and Suggestions

With 700+ microservices and frequent deployments, Netflix relies heavily on AI-driven APIs for recommendations and personalization. While traditional API tests ensure endpoint stability and schema accuracy, AI-specific testing focuses on recommendation quality, model drift after retraining, and performance at scale.

This approach reflects key lessons from designing Netflix's experimentation platform, where continuous validation of model outputs and automated regression checks help detect shifts in user relevance before they impact viewer engagement.

Capital One: DevOps and API Security Testing

Following its cyber incident, Capital One introduced AI-driven continuous security testing to address the gaps left by conventional, infrequent penetration testing. The company recognized that annual security reviews were insufficient for rapidly evolving API ecosystems.

AI models now continuously monitor APIs for vulnerabilities, detect unusual behavior patterns, and integrate security validation directly into CI/CD pipelines through automated adversarial testing.

This shift showed that AI-powered testing can work effectively in highly regulated financial environments without compromising compliance, reducing security assessment cycles from months to days and significantly improving vulnerability detection.

Challenges and Limitations in AI API Testing

Challenges in AI API testing include limited interpretability, non-deterministic outputs, data quality issues, model drift, CI/CD integration hurdles, and cost, privacy, and compliance risks.

Understanding limitations is just as important as understanding capabilities. Even though AI and ML are increasingly used in API testing, a number of intrinsic challenges still limit their use in real-world applications. Teams that go into AI API testing with a clear view of these limitations tend to adopt it more effectively than those who treat it as a magic solution.

Challenges in Debugging and Limited Interpretability of the Model

One of the biggest challenges with AI-powered API testing arises in regulated industries, where you need to trace every decision and justify every outcome. Even the most advanced models, especially LLMs, work like black boxes, which makes root cause analysis difficult.

Subjective Validation and Non-Deterministic Outcomes

Testing AI APIs is fundamentally different from traditional testing because you can't rely on exact output matching. The same input might generate several relevant but differently worded responses, which conflicts with traditional pass/fail logic.
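One way to turn varied outputs back into a pass/fail signal is to check properties over several runs instead of matching a single string. The sketch below is an assumption-laden illustration: `fake_summary` is a hypothetical stand-in for a non-deterministic AI API, and the property being checked is invented for the example.

```python
import random
from typing import Callable

def pass_rate(generate: Callable[[], str], is_acceptable: Callable[[str], bool],
              runs: int = 20) -> float:
    """Call the generator several times and return the fraction of acceptable outputs."""
    passed = sum(1 for _ in range(runs) if is_acceptable(generate()))
    return passed / runs

# Hypothetical stand-in for a non-deterministic AI API.
rng = random.Random(7)

def fake_summary() -> str:
    return rng.choice([
        "Stock for Wireless Mouse is now 170 units.",
        "Wireless Mouse restocked: 170 units available.",
        "The Wireless Mouse inventory was updated to 170.",
    ])

def mentions_product_and_total(text: str) -> bool:
    """Property check: wording may vary, but these facts must be present."""
    return "Wireless Mouse" in text and "170" in text

rate = pass_rate(fake_summary, mentions_product_and_total)
```

A test can then assert that `rate` stays above an agreed threshold, which tolerates rewording while still failing when required facts go missing.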

Data Quality Constraints and Model Drift Over Time

AI-based testing tools learn from historical test data, so if that data is incomplete or poorly labeled, the results will reflect that. In many real projects, test records are inconsistent, lack context, carry weak labels, or contain gaps that were never filled in. This directly affects how accurately the AI generates tests and predicts failures. The models also drift: as the API and its traffic change, patterns learned from older data become less reliable unless the model is refreshed.

Scalability and CI/CD Integration Challenges

CI/CD pipelines and AI-driven testing don't always get along well. Most pipelines expect tests to behave the same way every time. AI models are not built like that. Throw in a large number of microservices with frequent releases, and the processing demands of keeping AI models running, handling feedback, and pushing updates start to pile up.

Performance, Cost, Security, and Privacy Risks

Running AI-powered tests continuously is not cheap. Compute and token costs add up faster than most teams expect. Teams in regulated industries have it even harder, since data privacy and compliance requirements around how models are used and managed aren't something you can figure out on the fly.
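A quick back-of-the-envelope calculation shows how token costs accumulate. Every figure in this sketch is hypothetical, including the price per thousand tokens, so substitute your own suite size and your provider's actual pricing.

```python
def suite_cost_usd(tests: int, runs_per_day: int, tokens_per_test: int,
                   usd_per_1k_tokens: float, days: int = 30) -> float:
    """Estimate token spend for running an AI-evaluated suite over a period."""
    total_tokens = tests * runs_per_day * tokens_per_test * days
    return round(total_tokens / 1000 * usd_per_1k_tokens, 2)

# All figures are hypothetical; substitute your provider's actual pricing.
monthly = suite_cost_usd(tests=400, runs_per_day=25, tokens_per_test=1500,
                         usd_per_1k_tokens=0.002)
```

With these assumed numbers the suite consumes 450 million tokens over 30 days. Halving the number of runs per day, for example by running the full suite only on merge, halves the estimate.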

Understanding these limitations helps teams adopt AI API testing strategically, improve reliability, and set realistic expectations for scaling AI-driven testing.

For those looking to strengthen their fundamentals, exploring common API testing interview questions can also help reinforce key concepts and real-world problem-solving approaches.

When to Use AI API Testing vs Traditional Methods

Use AI API testing for complex, frequently changing, or AI-driven APIs that benefit from adaptive testing, and stick with traditional methods for stable, deterministic, and simple APIs.

Knowing when to use each approach helps teams make smarter decisions about where to invest their testing effort. Not every API needs AI API testing, and knowing where the line falls saves time and budget.

API testing has traditionally relied on labor-intensive and deterministic scripts, but as modern technologies have become more sophisticated and dynamic, AI-driven testing methods have become increasingly common.

| Aspect | Traditional API Testing | AI-Driven API Testing |
|---|---|---|
| Test Creation | Manually scripted by QA engineers; time-consuming for complex APIs. | Automatically generated from API specs, schemas, and traffic patterns. |
| Setup and Maintenance | Time-consuming setup; frequent manual updates when endpoints change. | Faster initial setup; self-healing tests detect and repair broken scripts automatically. |
| Test Coverage | Limited by tester time and expertise. | Broader coverage with automated edge-case and boundary-condition detection. |
| Handling Changes | Low adaptability; tests break when APIs change. | High adaptability; AI adjusts to evolving APIs and schema drifts. |
| Execution and Speed | Sequential or basic parallel execution. | Intelligent prioritization and optimized execution via Test Impact Analysis (TIA). |
| Resource Usage | High human effort, low compute usage. | Lower human effort, higher compute requirements. |
| Skills Needed | Strong API testing and scripting skills. | Testing basics plus AI/tool configuration skills. |
| Cost Profile | Lower upfront cost, higher ongoing labor cost. | Higher initial cost, lower long-term maintenance cost. |
| Best Fit | Simple, stable, deterministic APIs. | Complex, data-driven, frequently changing APIs. |
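Test Impact Analysis, mentioned in the table above, can be reduced to a simple idea: run only the tests that touch what changed. The sketch below shows that core selection step under stated assumptions. The `COVERAGE` map, the file names, and the `select_tests` helper are hypothetical, and real tools build the map from coverage data and may add ML-based ranking on top.

```python
from typing import Dict, List, Set

# Hypothetical coverage map: test name -> source files it exercises.
COVERAGE: Dict[str, Set[str]] = {
    "test_list_products": {"main.py", "inventory.py"},
    "test_get_product": {"main.py", "inventory.py"},
    "test_restock": {"main.py", "restock.py"},
    "test_pricing": {"pricing.py"},
}

def select_tests(changed_files: Set[str], coverage: Dict[str, Set[str]]) -> List[str]:
    """Return only the tests whose covered files overlap the changed files."""
    return sorted(name for name, files in coverage.items() if files & changed_files)

selected = select_tests({"restock.py"}, COVERAGE)
```

A change to `restock.py` selects a single test here, while a change to a widely shared file such as `main.py` selects most of the suite.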

Best Practices for AI API Testing

Best practices for AI API testing include using realistic test data, validating model outputs, monitoring production behavior, building feedback loops, and tracking performance, latency, and cost.

Following best practices in AI API testing helps teams catch issues early instead of discovering them in production. These practices apply across tools and focus on functionality, reliability, performance, and security.

- Start with Realistic Test Data: AI systems behave differently with clean versus real-world data. Use inputs that reflect actual user behavior, anomalies, and inconsistencies to uncover issues that ideal conditions would miss.

- Validate Model Outputs, Not Just API Responses: A 200 OK does not guarantee correctness. Outputs can still be wrong, biased, or irrelevant. Check if responses are meaningful and aligned with the input, and ensure confidence levels are reasonable.

- Continuously Monitor AI Behavior in Production: Testing does not stop after release. Data drift, user behavior changes, and model updates can impact performance. Monitor response quality, accuracy, latency, and error patterns in real time to detect issues early.

- Build Feedback Loops into Testing: Incorporate real-world signals like user feedback, flagged outputs, and low-confidence predictions into test design. This improves coverage and keeps testing aligned with how the system evolves.

- Monitor Performance, Latency, and Cost: Beyond accuracy, track latency, throughput, and cost drivers like token usage. Sudden spikes often indicate issues in configuration or traffic. Continuous monitoring and AI performance testing help optimize performance and scalability.

Conclusion

AI is no longer a future consideration for API testing, but a present necessity. Testing methods have to evolve in tandem with the complexity, scale, and growing sophistication of machine learning models in APIs. Automatically adapting to changes, validating non-deterministic outputs, identifying bias and security flaws, and extending coverage without expanding personnel are just a few of the capabilities that AI-driven approaches offer and traditional methods cannot match.

Beyond efficiency, organizations that build AI API testing into their processes see better system confidence, quicker release cycles, and a competitive advantage in creating reliable, high-quality applications. The transformation has already begun; the question is how quickly your team will embrace it.
