AI in Software Testing: Benefits & Use Cases [2026]

Explore AI in software testing with benefits, tools, use cases, and types. Understand how it differs from manual testing, its shortcomings and future trends.

Salman Khan

March 16, 2026

AI in software testing does more than automate repetitive tasks. It learns from past failures, adapts test strategies on its own, and keeps scripts running without constant manual fixes.

According to the Capgemini World Quality Report 2024-25, 68% of organizations are either actively using Generative AI in quality engineering or have it on their roadmap.

Overview

What Is AI Software Testing

AI in software testing involves automating different aspects of the testing lifecycle, such as test creation and execution. It predicts defects, optimizes test runs, self-heals scripts, reduces human effort, and improves software quality.

How AI Is Used in Software Testing

The following steps integrate AI into your testing workflow to improve efficiency, accuracy, and continuous software quality assurance.

- Define Objectives: Identify tasks for AI such as generating tests, predicting defects, or exploring critical application workflows efficiently.

- Select AI Tools or Agents: Choose tools to enhance existing automation or deploy autonomous AI agents capable of adapting to software changes seamlessly.

- Integrate With CI/CD Pipelines: Connect AI tools or agents with your testing frameworks, pipelines, and applications, providing necessary inputs like stories, logs, or scripts.

- Execute and Monitor Tests: Run tests using AI, generate cases, prioritize risks, repair broken scripts, detect anomalies, and track results for accuracy and coverage.

- Analyze Results and Iterate: Review AI testing outcomes, expand coverage, adjust configurations, and repeat continuously to maintain reliable, comprehensive, and evolving software quality.

Which AI Tools Are Best for Software Testing

Here are some of the best AI software testing tools for automating test creation, improving accuracy, and reducing manual QA effort:

- TestMu KaneAI: A Generative AI testing agent designed for fast QA, automating test case creation, debugging, management, and accelerating natural language test authoring.

- ACCELQ: Cloud-based AI platform enabling enterprise-level codeless test automation across web, mobile, desktop, and API, ensuring reliable long-term execution and reduced maintenance.

- Testim: Simplifies automated testing with minimal coding, using machine learning to stabilize tests, adapt to changes, and reduce maintenance overhead for frequent software updates.

- TestComplete: Offers dynamic AI-driven testing with checkpoints for tables, images, and settings, enabling functional tests across web, desktop, and mobile platforms efficiently.

What Is AI in Software Testing

AI in software testing applies machine learning, NLP, and deep learning to automate test creation, find defects early, and fix broken test scripts without manual effort.

Unlike traditional automation that breaks when the UI changes, AI agents learn from application behavior, adapt to changes, and flag high-risk areas before testers even start scripting.

What Are the Benefits of AI in Software Testing

The key benefits of AI in software testing include improved accuracy, faster execution, expanded coverage, reduced flakiness, and lower maintenance cost for automation teams.

Here are the key benefits of AI testing and what they mean in practice:

- Improved Test Accuracy: AI uses predictive analytics in software testing to reduce false positives. Fewer false alerts rebuild developer trust in test results, making CI pipelines effective quality gates.

- Faster Test Execution: AI-based prioritization runs failure-prone tests first, which can cut feedback time by 40-60%. The approach shifts from "run everything" to "run what matters."

- Enhanced Test Coverage: AI analyzes code paths and defect history to find coverage gaps. The problem it solves is "we need the right tests," not "we need more tests."

- Reduced Test Flakiness: AI self-healing repairs broken locators using smart locator strategies that track how elements appear on the page. It fixes the broken locator, but teams should also fix underlying naming conventions.

- Better Test Maintenance and Stability: AI adapts scripts automatically when UI elements change, cutting the maintenance cost that kills automation ROI for teams shipping frequently.
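To make the self-healing idea above more concrete, here is a minimal sketch of a fallback locator. It is not how any particular tool implements healing: the `Element` class, the `snapshot` of remembered properties, and the `threshold` value are all hypothetical stand-ins chosen for illustration. The idea is simply to try the original id first and, if it is gone, pick the element that still matches most of what was recorded when the test last passed.

```python
from dataclasses import dataclass, field


@dataclass
class Element:
    """Simplified stand-in for a DOM element (hypothetical, not a real driver type)."""
    tag: str
    attrs: dict = field(default_factory=dict)


def similarity(snapshot: dict, candidate: Element) -> float:
    """Fraction of the remembered properties that the candidate still matches."""
    if not snapshot:
        return 0.0
    current = {**candidate.attrs, "tag": candidate.tag}
    matches = sum(1 for key, value in snapshot.items() if current.get(key) == value)
    return matches / len(snapshot)


def find_with_healing(page, element_id, snapshot, threshold=0.6):
    """Return (element, healed). Try the original id first, then fall back to
    the closest match against the snapshot taken when the test last passed."""
    for element in page:
        if element.attrs.get("id") == element_id:
            return element, False
    best = max(page, key=lambda el: similarity(snapshot, el), default=None)
    if best is not None and similarity(snapshot, best) >= threshold:
        return best, True
    raise LookupError(f"No element close enough to '{element_id}'")


# Properties recorded the last time the locator worked (assumed data).
snapshot = {"tag": "button", "name": "submit", "text": "Submit", "class": "btn primary"}

# The page after a redesign: the id and class changed, the rest did not.
page = [
    Element("button", {"id": "cancel-btn", "name": "cancel", "text": "Cancel", "class": "btn"}),
    Element("button", {"id": "submit-btn-v2", "name": "submit", "text": "Submit", "class": "btn btn-primary"}),
]

element, healed = find_with_healing(page, "submit-btn", snapshot)
print(element.attrs["id"], healed)  # prints: submit-btn-v2 True
```

In a sketch like this, the `healed` flag is the important part: a healed lookup is worth logging so the team can update the locator and the naming convention behind it, rather than relying on the fallback indefinitely.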


How to Use AI in Software Testing

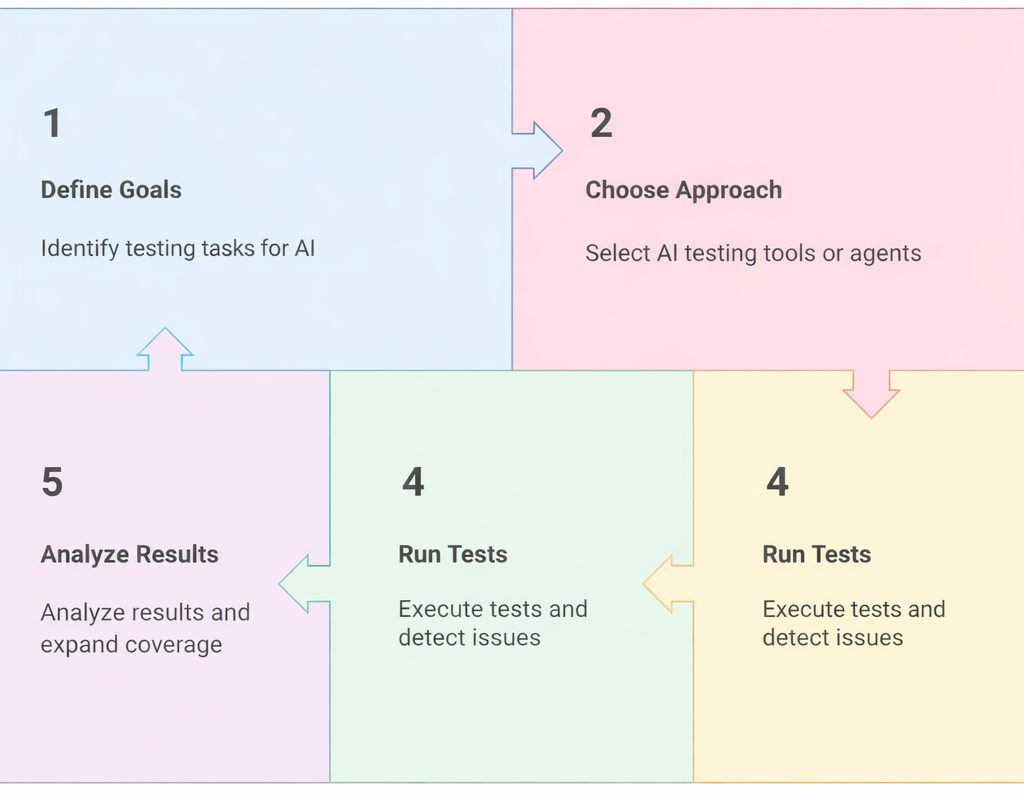

To use AI in software testing, define your testing goals, choose the right AI tools or agents, integrate them into your CI/CD pipeline, run tests, and analyze results.

Start with a specific bottleneck, not a technology decision. Identify whether maintenance, flakiness, or speed is your core problem first.

Here is how to structure your AI testing adoption:

- Define Goals: Pick one specific problem like flaky tests, slow regressions, or coverage gaps. Vague goals like "use AI for testing" lead to failed pilots.

- Choose Approach: Decide between adding AI to your existing framework or deploying standalone AI agents. Adding AI is lower risk; autonomous agents need more setup.

- Integrate with Tools or Agents: Connect AI testing tools or agents to your CI/CD pipeline with quality inputs like user stories and defect data. Poor data produces generic tests.

- Run Tests: Let the AI generate cases, prioritize high-risk modules, and repair scripts. Monitor early cycles closely to check that tests are catching real bugs.

- Analyze Results: Review AI outputs critically. Expand coverage where gaps exist, but remove duplicate or trivial tests. The goal is a leaner suite, not a bigger one. Track how many bugs reach production to measure real impact.
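The last step above suggests tracking how many bugs reach production. One common way to express that is a defect escape rate: the share of all known defects that were found only after release. The sketch below assumes you can count defects per release from your own tracker; the function name and the sample numbers are illustrative, not measurements.

```python
def defect_escape_rate(found_before_release: int, found_in_production: int) -> float:
    """Share of all known defects that were only discovered in production."""
    total = found_before_release + found_in_production
    if total == 0:
        return 0.0  # no defects recorded, so nothing escaped
    return found_in_production / total


# Hypothetical counts for the release before and after an AI testing pilot.
before_pilot = defect_escape_rate(found_before_release=40, found_in_production=10)
after_pilot = defect_escape_rate(found_before_release=45, found_in_production=5)

print(f"before: {before_pilot:.0%}, after: {after_pilot:.0%}")  # prints: before: 20%, after: 10%
```

Comparing this rate across releases says more about real impact than the raw number of generated tests, which is why a leaner suite with a lower escape rate is the better outcome.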

What Are the Real-World AI Use Cases in Software Testing

Real-world AI use cases in software testing include test case generation, self-healing automation, defect prediction, visual testing, and smart test execution in CI/CD pipelines.

According to Grand View Research, the global AI-enabled testing market is projected to reach $1.63 billion by 2030, growing at a CAGR of 18.4%. Here is where AI delivers measurable impact:

- Test Case Generation: AI analyzes requirements and UI flows for automatic test case generation, producing functional, edge, and regression cases. The real value is in edge cases humans overlook, but testers must still review for business-critical scenarios.

- Self-Healing Automation: With self-healing test automation, AI repairs broken locators by tracking page structure. Teams running nightly regressions against frequently updated UIs see the highest ROI, as a single sprint can break dozens of locators.

- Smarter Test Execution: AI evaluates code changes, failure history, and risk scores to run relevant tests first and skip low-value cases. Risk-based prioritization often cuts pipeline time in half while catching the same defects.

- Defect Prediction: AI in software defect prediction looks at past bugs, code change patterns, and code complexity to flag high-risk modules before testing begins. This shifts QA from reactive to proactive, but accuracy depends on clean defect data.

- Visual and UI Testing: Visual AI catches layout shifts, broken elements, and responsiveness issues that pixel-by-pixel comparisons miss. It understands page layout, flagging a misaligned button without alerting on minor pixel differences.

- Continuous Testing: AI embedded in CI/CD pipelines learns from build and failure data to adapt test selection per commit. It avoids running 2,000 tests when a one-line CSS change only needs 30, making continuous delivery pipelines viable.

- Natural Language Testing: In NLP testing, AI converts plain language into executable test steps. This lets product managers contribute scenarios directly, removing the delay between writing requirements and creating test scripts.

- AI Agents for Autonomous Testing: In autonomous testing, AI agents explore applications independently, creating tests without predefined scripts. They discover paths scripted tests miss, but need proper monitoring to ensure agents test meaningful scenarios.
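The smarter execution and continuous testing items above both come down to choosing a subset of tests per change. Here is a rough sketch of that selection logic under two assumptions: that a map from each test to the source files it exercises is available (for example from coverage data), and that pass/fail history is stored per test. The `CaseHistory` class, file names, and counts are made up for illustration; production tools typically use richer signals than a plain failure rate.

```python
from dataclasses import dataclass


@dataclass
class CaseHistory:
    """What we assume is known about one test: its coverage and its track record."""
    name: str
    covers: set
    runs: int
    failures: int

    @property
    def failure_rate(self) -> float:
        # A test with no history is treated as maximally risky.
        return self.failures / self.runs if self.runs else 1.0


def select_and_rank(history, changed_files):
    """Keep only tests that touch a changed file, most failure-prone first."""
    changed = set(changed_files)
    impacted = [case for case in history if case.covers & changed]
    return sorted(impacted, key=lambda case: case.failure_rate, reverse=True)


history = [
    CaseHistory("test_login", {"auth.py"}, runs=200, failures=2),
    CaseHistory("test_payment_refund", {"payment.py"}, runs=100, failures=5),
    CaseHistory("test_checkout_flow", {"cart.py", "payment.py"}, runs=200, failures=30),
    CaseHistory("test_cart_badge_style", {"styles/cart.css"}, runs=200, failures=1),
]

for case in select_and_rank(history, ["payment.py"]):
    print(case.name, case.failure_rate)
# prints:
# test_checkout_flow 0.15
# test_payment_refund 0.05
```

A change to `styles/cart.css` alone would select only `test_cart_badge_style`, which is the behavior the CSS example above describes. A periodic full run is still a sensible safety net, since a coverage map can be incomplete.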

Many modern AI testing platforms rely on architectures explained in MCP and AI Agents, where intelligent agents maintain context while interacting with testing tools, frameworks, and automation pipelines.

What Are the Types of AI Testing

The main types of AI testing include functional, regression, performance, security, bias, explainability, data, adversarial, model drift, compliance, and autonomous testing.

Here are the primary types of AI software testing and what each demands in practice:

- Functional Testing: AI generates functional tests by learning expected behavior from user interactions. The biggest benefit is for complex, branching workflows where manually scripting every path is impractical.

- Regression Testing: AI identifies features most at risk after code changes and prioritizes those tests. This transforms regression from "run everything" to "run what matters," making high-frequency deployment sustainable.

- Performance Testing: AI adapts load scenarios in real time based on system behavior, finding bottlenecks that fixed load profiles miss. Adjusting load dynamically mirrors real traffic patterns and exposes issues that static scripts cannot.

- Security Testing: AI identifies vulnerabilities by analyzing code patterns and simulating attacks at scale. It catches injection flaws and misconfigured permissions but does not replace expert review for business logic vulnerabilities.

- Bias and Fairness Testing: AI audits model outputs across demographic segments to detect discriminatory patterns. This is essential for systems making decisions about people. Teams must define their fairness criteria before testing, not after.

- Explainability Testing: Tests whether AI model decisions can be clearly explained using techniques like SHAP or LIME. This is critical in regulated industries where explainability and accuracy often trade off against each other.

- Data Testing: AI validates datasets for errors, drift, and inconsistencies that hurt model accuracy. This is the most overlooked area in ML pipelines. Automated data testing catches issues before they become mysterious accuracy drops in production.

- Adversarial Testing: AI generates deceptive inputs to test model stability. Production ML systems face adversarial inputs daily, from modified images breaking classifiers to crafted text getting past content filters. This must be standard validation, not an afterthought.

- Model Drift Testing: AI monitors model performance over time and alerts teams when accuracy degrades due to changing data patterns. Every model drifts. Continuous monitoring with automated alerting is the basic production requirement.

- Ethical and Compliance Testing: AI verifies systems meet regulatory requirements like GDPR, HIPAA, and emerging AI governance frameworks. Automated compliance checks catch obvious violations like PII in training data before they become audit findings.

- Autonomous Testing: AI generates and executes test cases without human intervention across web, mobile, desktop, and API platforms. Teams getting value here set up proper monitoring first, because autonomous tests need clear pass/fail signals.
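As one illustration of the model drift item above, the sketch below keeps a rolling window of prediction outcomes and raises a flag when accuracy in that window falls below a baseline by more than a tolerance. The class name, window size, and tolerance are assumptions for the example; real monitoring setups often track input data distributions as well, because ground-truth labels can arrive late.

```python
from collections import deque


class AccuracyDriftMonitor:
    """Flags drift when rolling accuracy drops below baseline minus tolerance."""

    def __init__(self, baseline_accuracy: float, window: int = 100, tolerance: float = 0.05):
        self.baseline = baseline_accuracy
        self.tolerance = tolerance
        self.outcomes = deque(maxlen=window)  # True for a correct prediction

    def record(self, predicted, actual) -> bool:
        """Store one labelled prediction and report whether drift is now detected."""
        self.outcomes.append(predicted == actual)
        return self.drifted()

    def rolling_accuracy(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def drifted(self) -> bool:
        # Wait for a full window so a few early mistakes do not trigger an alert.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.rolling_accuracy() < self.baseline - self.tolerance


monitor = AccuracyDriftMonitor(baseline_accuracy=0.9, window=10, tolerance=0.05)

for _ in range(10):
    monitor.record(predicted="spam", actual="spam")  # ten correct predictions
print(monitor.drifted())  # prints: False

monitor.record(predicted="spam", actual="ham")
alert = monitor.record(predicted="spam", actual="ham")  # window is now 8 of 10 correct
print(alert)  # prints: True
```

In practice the `True` result would feed an alerting channel rather than a print statement, which is the "continuous monitoring with automated alerting" requirement described above.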

Pro-tip: You can also use platforms like TestMu AI Agent to Agent Testing to check how AI agents behave. It simulates real-world interactions, letting you see how agents respond, adapt, and perform in dynamic situations.

You can measure accuracy, reliability, bias, and safety. This helps teams spot weak points and improve agent performance.

To get started, check out this TestMu AI Agent to Agent Testing guide.
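Independent of any particular platform, the bias measurement mentioned above can be sketched with a simple demographic parity check: compare the rate of favorable outcomes across segments and look at the largest gap. The function names, the group labels, and the sample data below are hypothetical, and parity gap is only one of several fairness criteria a team might choose.

```python
from collections import defaultdict


def positive_rate_by_group(records):
    """records: iterable of (group, outcome), where outcome is True for a favorable decision."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        positives[group] += int(bool(outcome))
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(records) -> float:
    """Difference between the highest and lowest favorable-outcome rate across groups."""
    rates = positive_rate_by_group(records)
    if not rates:
        return 0.0
    return max(rates.values()) - min(rates.values())


# Made-up decisions from a system under test: 8 of 10 approved for A, 5 of 10 for B.
records = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)

print(positive_rate_by_group(records))  # prints: {'A': 0.8, 'B': 0.5}
print(round(parity_gap(records), 2))    # prints: 0.3
```

Whether a gap of this size is acceptable is exactly the kind of fairness criterion that has to be agreed on before testing, not after.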

What Are Some of the Best AI Software Testing Tools

The best AI testing tools include TestMu KaneAI, ACCELQ, Testim, and TestComplete. These tools automate test creation, improve accuracy, and reduce manual QA effort using AI and ML.

According to Gartner, by 2027, 80% of enterprises will have integrated AI-augmented testing tools into their software engineering toolchains, up from approximately 15% in early 2023.

- TestMu KaneAI: A Generative AI testing agent for high-speed QA teams that automates test case authoring, debugging, and management with natural language-driven test creation capabilities.

  KaneAI lets teams create complex test cases using natural language, accelerating test automation and making the process more intuitive for testers at every skill level.

  Features:

  - Test Creation: Enables the development and refinement of tests through natural language instructions, making test automation approachable and accessible for users of all skill levels.

  - Intelligent Test Planner: Generates and executes test steps based on high-level objectives, streamlining the test creation process and reducing manual planning effort for QA teams.

  - Multi-Language Code Export: Transforms tests into various major programming languages and frameworks, giving QA teams flexibility in automation and seamless integration across diverse technology stacks.

  - 2-Way Test Editing: Syncs natural language edits with code, allowing modifications from either interface so testers and developers can collaborate effectively on test script updates.

  - Integrated Collaboration: Supports tagging KaneAI in tools like Slack, Jira, or GitHub to initiate automation workflows directly, enhancing teamwork, efficiency, and cross-team communication across projects.

- ACCELQ: A popular cloud-based platform for codeless AI-powered test automation across desktop, API, mobile, and web, automating the entire enterprise stack with reliable long-term execution.

- Testim: An AI platform that simplifies automated test creation with minimal coding, using machine learning to adapt tests, stabilize execution, and reduce ongoing maintenance overhead.

- TestComplete: Offers AI-driven testing with checkpoints for validating tables, images, and settings, enabling testers to create and run functional tests across web, mobile, and desktop.

AI Software Testing vs Manual Software Testing

AI testing automates and adapts to changes while manual testing relies on human execution and exploratory checks. The real question is which combination gives your team the fastest feedback for your release cadence.

| Aspect | AI Software Testing | Manual Software Testing |
|---|---|---|
| Approach | AI-driven automation that analyzes, predicts defects, and optimizes test processes for faster, more reliable outcomes. | Testers execute predefined scripts and exploratory tests. |
| Test Case Generation | Automatically generated using AI insights, historical data, and patterns to improve coverage and effectiveness. | Written and maintained manually by QA engineers. |
| Execution Speed | Fast, parallel execution across multiple environments using AI optimization. | Slower, limited by human pace. |
| Accuracy | High accuracy via predictive analytics and pattern recognition, reducing human error. | Prone to human error and oversight. |
| Test Coverage | Broad and deep; AI identifies and prioritizes high-risk areas efficiently. | Often limited; time-consuming to expand. |
| Maintenance | Self-healing scripts adapt automatically to UI and code changes, reducing manual updates. | Manual updates required for every change. |
| Defect Detection | Proactive; predicts likely failure points before execution using AI insights. | Reactive; defects found during test runs. |
| Feedback Loop | Continuous testing with rapid feedback for faster iterations. | Slower feedback cycles. |
| Flakiness Management | AI stabilizes tests using dynamic locators, diagnostics, and self-healing capabilities. | Troublesome; flaky tests require manual investigation. |
| Resource Utilization | Optimized resource use; focuses human effort on complex scenarios. | High manual effort and staffing. |
| Time to Market | Shorter release cycles with faster, targeted testing enabled by AI. | Longer due to manual cycles. |
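For the flakiness row in the table, the diagnostic step can start from something simple. The sketch below flags a test as flaky when it has both passed and failed on the same commit, since the code did not change between those runs. It assumes run history is available as `(test_name, commit_sha, passed)` tuples; the function name and the sample history are illustrative only.

```python
from collections import defaultdict


def find_flaky_tests(runs):
    """runs: iterable of (test_name, commit_sha, passed).
    A test is flagged when the same commit produced both a pass and a fail."""
    outcomes = defaultdict(set)
    for test, commit, passed in runs:
        outcomes[(test, commit)].add(bool(passed))
    flaky = {test for (test, _commit), seen in outcomes.items() if len(seen) == 2}
    return sorted(flaky)


runs = [
    ("test_login", "abc123", True),
    ("test_login", "abc123", False),   # same commit, different result: flaky
    ("test_cart", "abc123", False),
    ("test_cart", "abc123", False),    # fails consistently: likely a real defect
    ("test_search", "abc123", True),
    ("test_search", "def456", False),  # result changed along with the code
]

print(find_flaky_tests(runs))  # prints: ['test_login']
```

Separating flaky tests from consistent failures this way keeps retries and quarantine focused on the unstable tests, while consistent failures go straight to investigation.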

Shortcomings of AI in Software Testing

AI testing has real limitations vendors understate. Deploy AI where it works and keep human expertise where it is irreplaceable. Generative AI in software testing accelerates repetitive tasks but fails predictably here:

- Testing for Complex Scenarios: AI cannot reason about multi-step workflows across multiple modules and integrations. A domain-expert tester catches how a discount code interacts with loyalty tiers. For complex integration scenarios, human test design remains essential.

- UX Testing: AI catches broken layouts and accessibility violations but cannot evaluate whether a workflow feels intuitive. AI tells you a button exists, not whether users understand what it does. UX still requires human judgment.

- Documentation Review: AI flags inconsistencies in test documentation but cannot evaluate whether a requirement is ambiguous or incomplete from a business perspective. Requirements review depends on human judgment that AI cannot reliably supply.

- Test Reporting and Analysis: AI generates metrics and dashboards but struggles to provide useful context. Knowing 12 tests failed is useless without understanding which failures block the release versus which are environmental. Human interpretation remains critical.
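The reporting point above distinguishes environmental failures from ones that may block a release. A first-pass sort can be automated with plain pattern matching, as in the sketch below, leaving interpretation to a person. The patterns, labels, and sample messages are assumptions for illustration and would need tuning against a team's real logs.

```python
import re

# Error fragments that usually point at infrastructure rather than the product (assumed list).
ENVIRONMENT_PATTERNS = [
    r"connection (refused|reset)",
    r"timed? ?out",
    r"\b50[234]\b",
    r"no space left on device",
]


def classify_failure(message: str) -> str:
    """Label a failure message as 'environmental' or 'needs-review'."""
    text = message.lower()
    if any(re.search(pattern, text) for pattern in ENVIRONMENT_PATTERNS):
        return "environmental"
    return "needs-review"


def summarize(failures):
    """failures: iterable of (test_name, error_message). Groups test names by label."""
    summary = {"environmental": [], "needs-review": []}
    for test, message in failures:
        summary[classify_failure(message)].append(test)
    return summary


failures = [
    ("test_checkout", "AssertionError: expected total 90.00, got 100.00"),
    ("test_login", "ConnectionError: Connection refused by host"),
    ("test_search", "TimeoutError: request timed out after 30s"),
]

print(summarize(failures))
# prints: {'environmental': ['test_login', 'test_search'], 'needs-review': ['test_checkout']}
```

The "needs-review" bucket is deliberately not called "release-blocking": deciding that still requires someone who understands the business impact of the failing scenario.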

What Is the Future of Software Testing With AI

The future of AI in software testing points toward autonomous quality systems, smarter element handling, lower manual effort, and AI-driven predictive testing across delivery pipelines.

The World Quality Report 2025-26 found that organizations report an average productivity boost of 19% from AI integration, with Generative AI shifting from analyzing outputs to shaping inputs like test case design and requirements refinement. As AI tools become central to testing workflows, understanding prompting techniques for testers will be essential for getting accurate and useful results from these systems.

- Growing Role of AI: AI is becoming infrastructure, not tooling. Within the next few years, test frameworks without AI capabilities will feel as outdated as manual deployment scripts feel today. Teams should evaluate AI support as a key factor when selecting any new testing tool.

- Smarter Element Handling: AI in test automation is evolving beyond locator repair to contextual understanding of UI elements. Future agents will understand what an element does rather than relying on HTML attributes, making tests stable through redesigns.

- Expanded Automation: AI will handle tasks currently requiring human judgment, like distinguishing bugs from design updates. The scope of "requires a human tester" will shrink, leaving higher-skill work such as strategy and domain reasoning.

- Reduced Human Dependency: Human testers will shift from execution to oversight, defining quality standards and reviewing AI decisions. Teams that reskill QA engineers for this shift will outperform those that simply reduce headcount.

- Quantum Computing Impact: Quantum computing remains speculative for testing but holds potential for large-scale test combination problems that take too long with regular computing. Watch this trend but focus current resources on proven AI capabilities.

- Predictive Capabilities: AI will evolve from predicting which tests to run to predicting which code changes cause production incidents. This connects testing and production monitoring into one quality view across the delivery pipeline.

- Lower Maintenance Effort: Intelligent test automation will push test maintenance costs toward zero. When tests are cheap to maintain, teams can afford broader coverage without having to decide which tests are worth keeping.

Conclusion

AI is transforming testing now. Teams using AI-driven testing ship faster with fewer production defects. The question is no longer whether to adopt AI in testing but where to start and how to scale responsibly.

Start with the pain point that costs your team the most time: flaky tests, slow pipelines, or maintenance effort. Prove value there, then expand. The teams that try to adopt AI everywhere at once end up adopting it nowhere effectively.

For QA teams looking to start their AI journey, this AI roadmap for software testers provides a structured path from programming basics to autonomous testing.

With AI reshaping testing workflows, the testers who thrive are the ones who understand both testing fundamentals and AI capabilities. The KaneAI Certification validates hands-on AI testing skills and positions you as a QA professional ready for the next generation of quality engineering.

Citations

- Capgemini World Quality Report 2024-25: https://www.capgemini.com/us-en/news/press-releases/world-quality-report-2024/

- Gartner Market Guide for AI-Augmented Software-Testing Tools: https://www.gartner.com/en/documents/5194063

- Grand View Research - AI-Enabled Testing Market Size Report, 2030: https://www.grandviewresearch.com/industry-analysis/ai-enabled-testing-market-report
