Test Reports in Software Testing: Types, Examples & Best Practices

Learn how to create effective test reports in software testing. Covers test report types, key components, real-world examples, metrics, AI dashboards, and best practices for QA teams.

Irshad Ahamed

April 24, 2026

According to McKinsey's State of AI 2025 survey, 88% of organizations use AI in at least one business function, yet most QA teams still track activity metrics (tests executed, bugs found) rather than outcomes (defect escape rate, release quality). The disconnect is clear: teams generate test data but struggle to turn it into decisions. Test reports close that gap.

A test report is a structured document that records what was tested, how it was tested, what passed, what failed, and what defects were found. It feeds into release decisions throughout the Software Development Life Cycle (SDLC) and serves as the evidence trail for quality assurance compliance.

Overview

What Are Test Reports?

Test reports are structured documents that summarize the results, status, and quality of testing activities. They help teams understand what has been tested, what issues were found, and whether the software meets quality standards.

What are the Types of Test Reports?

- Test Summary Report: High-level overview of test execution and results.

- Detailed Test Report: In-depth breakdown of executed test cases and defects.

- Defect Report: List of identified bugs with severity and status.

- Test Coverage Report: Mapping between requirements and test cases.

- Automation Test Report: Tool-generated results from automated test runs.

- Progress/Status Report: Regular updates during active testing cycles.

What are the Benefits of Software Test Reports?

- Measure testing progress and coverage.

- Track defect trends and quality metrics.

- Support go/no-go release decisions.

- Improve communication between QA, developers, and stakeholders.

- Maintain compliance and audit documentation.

- Identify recurring issues and improve future test cycles.

What are the Challenges in Creating Test Reports?

- Manual data consolidation: Test results from multiple tools require time-consuming aggregation.

- Inconsistent report formats: Lack of standardized templates leads to confusion.

- Lack of standardized KPIs: Difficulty measuring quality trends consistently.

- Limited visibility across tools: Disconnected systems reduce transparency.

- Delayed reporting cycles: Manual processes cause outdated insights.

Automation and AI-driven reporting tools help centralize data, standardize metrics, and deliver real-time, actionable insights.

What are Test Reports?

A test report captures every artifact from a test cycle: which test cases were executed, pass/fail results per module, defects logged with severity, environment configurations, and a release readiness recommendation. It answers the stakeholder question: "Can we ship this?"

A well-structured test report includes these core components:

- Test execution summary: Total test cases planned vs. executed vs. passed vs. failed vs. blocked, with pass rate percentage.

- Defect summary: Open vs. closed defects, grouped by severity (critical, major, minor) and module, with resolution status.

- Coverage mapping: Which requirements have test cases, which were tested, and which have gaps. Links to test coverage metrics.

- Environment details: OS, browser, device, build version, database, and API endpoints used during testing.

- Risk assessment: Untested areas, known open defects, and their potential impact on release.
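As a rough illustration, the core components above can be modeled as a small data structure. This is a minimal sketch, not a format prescribed by any tool: the class names (`ExecutionSummary`, `CycleReport`), the field names, and the choice to compute pass rate over executed cases are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class ExecutionSummary:
    """Counts for one test cycle (hypothetical field names)."""
    planned: int
    executed: int
    passed: int
    failed: int
    blocked: int

    @property
    def pass_rate(self) -> float:
        # Assumption: pass rate is measured against executed cases, not planned ones.
        return 100.0 * self.passed / self.executed if self.executed else 0.0


@dataclass
class CycleReport:
    """Bundles the core components of a report for one build."""
    build_version: str
    execution: ExecutionSummary
    open_defects_by_severity: dict[str, int] = field(default_factory=dict)
    untested_requirements: list[str] = field(default_factory=list)
    environment: dict[str, str] = field(default_factory=dict)

    def release_risks(self) -> list[str]:
        """List the items a reader should weigh before a release decision."""
        risks = []
        critical = self.open_defects_by_severity.get("critical", 0)
        if critical:
            risks.append(f"{critical} critical defect(s) still open")
        if self.untested_requirements:
            risks.append("untested requirements: " + ", ".join(self.untested_requirements))
        return risks
```

Keeping the risk assessment as a method derived from the other fields means it cannot drift out of sync with the defect and coverage data it summarizes.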


What are the Types of Test Reports?

Different types of test reports provide different levels of detail, depending on the testing stage and purpose:

- Daily Test Report: Summarizes testing activities, results, and defects identified during a single day’s work.

- Test Cycle Report: Covers the results of a complete testing cycle or sprint, showing progress, pass/fail rates, and defect trends.

- Test Summary Report: High-level overview prepared at the end of a testing phase or project, often used for release decisions.

- Defect Report: Focuses specifically on bugs found, including severity, priority, status, and resolution progress.

- Performance Test Report: Details how the application behaves under various load and stress conditions, highlighting performance bottlenecks.

- Compliance/Regulatory Test Report: Documents testing evidence required to meet industry standards, regulations, or contractual obligations.

What are the Benefits of Software Test Reports?

Most QA teams measure activity (tests executed, bugs found) rather than outcomes. With 62% of enterprises actively experimenting with AI agents, many still lack structured reporting to translate AI-generated insights into release decisions. Well-structured test reports bridge that gap in four specific ways:

- Go/no-go release decisions: A test summary report with pass rates, open critical defects, and untested areas gives product managers the data to decide whether to ship or hold. Without it, the decision is based on gut feel.

- Defect root cause analysis: Grouping defects by module, severity, and origin stage (requirements vs. code vs. environment) reveals where quality breaks down. Teams that track defect origins in reports can reduce defect leakage by focusing prevention efforts on the highest-defect modules.

- Compliance and audit evidence: Regulated industries (healthcare, finance, automotive) require documented proof that software was tested. Test reports provide the audit trail mapping requirements to test cases to results, essential for HIPAA, PCI-DSS, SOX, and ISO 27001 compliance.

- Sprint-over-sprint trend analysis: Comparing pass rates, defect counts, and automation coverage across sprints reveals whether quality is improving or degrading. This is how QA leads justify headcount and tooling investments to management.
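To make the sprint-over-sprint comparison concrete, here is a minimal sketch that computes the pass rate per sprint and its change from the previous sprint. The function name `pass_rate_trend` and the input shape (sprint name, passed cases, executed cases) are assumptions for illustration only.

```python
from typing import Optional


def pass_rate_trend(
    sprints: list[tuple[str, int, int]],
) -> list[tuple[str, float, Optional[float]]]:
    """Return (sprint, pass rate %, change vs. previous sprint) for each sprint.

    Each input tuple is (sprint name, passed cases, executed cases).
    The first sprint has no predecessor, so its change is None.
    """
    trend = []
    previous = None
    for name, passed, executed in sprints:
        rate = 100.0 * passed / executed if executed else 0.0
        delta = None if previous is None else rate - previous
        trend.append((name, rate, delta))
        previous = rate
    return trend
```

A negative change flags a sprint where quality degraded and is worth calling out in the report.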

When To Create a Test Report?

A test report should be created at key stages of the testing lifecycle to provide timely insights, with timing based on the development approach, testing type, and release plan.

- End of a Test Cycle or Sprint: In Agile or iterative development, summarize results at the end of each sprint or cycle to evaluate progress and readiness for the next phase.

- After Major Milestones: Generate a report once a significant feature or module is completed to confirm that it meets its acceptance criteria.

- Before a Release or Deployment: Prepare a final test summary report to validate release readiness, including a go/no-go recommendation.

- Post-Release Monitoring: Create reports after deployment to track performance, detect early defects, and maintain compliance records.

- Continuous Testing in CI/CD: Automate report generation after every build to detect regressions quickly and maintain code quality.
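For the CI/CD case, one common starting point is the JUnit-style XML file that many test runners can emit, where `<testcase>` elements carry optional `<failure>`, `<error>`, or `<skipped>` children. The sketch below summarizes such a file using only the Python standard library. The function name `summarize_junit` is hypothetical, and the sketch assumes the XML follows that common layout.

```python
import xml.etree.ElementTree as ET


def summarize_junit(xml_text: str) -> dict[str, int]:
    """Count passed, failed, and skipped test cases in JUnit-style XML."""
    root = ET.fromstring(xml_text)
    summary = {"total": 0, "passed": 0, "failed": 0, "skipped": 0}
    # iter() walks the whole tree, so this works whether the root element
    # is <testsuites> or a single <testsuite>.
    for case in root.iter("testcase"):
        summary["total"] += 1
        if case.find("failure") is not None or case.find("error") is not None:
            summary["failed"] += 1
        elif case.find("skipped") is not None:
            summary["skipped"] += 1
        else:
            summary["passed"] += 1
    return summary
```

A pipeline step could call this after each build and publish the resulting counts alongside the build number.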

How to Write a Good Test Summary Report?

A Test Summary Report provides a concise assessment of all testing activities completed in a phase or cycle. It highlights key results, test coverage, product quality, identified defects, and overall release readiness, giving stakeholders a clear snapshot for informed decision-making.

To see how a solid test summary report comes together, consider an example: AB is an online travel agency, and an organization is developing the ABC application for it. While preparing the report, the testing team documents all activities performed during testing and provides an overview of the application.

The ABC application offers services such as bus and railway ticket bookings, hotel reservations, domestic and international holiday packages, and flight bookings. These functionalities are divided into modules like Registration, Booking, and Payment, all of which are included in the report.

Here are the steps to create a test summary report for an online travel agency.

Step 1: Create a Testing Scope

The team lists the modules or areas that are in scope, out of scope, or untested because of dependencies or constraints.

- In-scope: We completed the functional testing of the following modules:

  - User registration

  - Registration confirmation

  - Ticket booking

  - Hotel package booking

  - Payment

- Out of scope:

  - Multi-tenant user testing

  - Concurrency

- Untested modules:

  - The User Registration page with field values in mixed case

Step 2: Test Metrics

Test metrics include the following:

- The count of planned test cases

- The count of executed test cases

- The count of passed test cases

- The count of failed test cases

Test metrics are used to analyze test execution results, the status of the test cases, and the status of the defects, among other things. The testing team can also generate charts or graphs that show the distribution of defects by function, severity, or module.
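The counts and distributions described in this step are simple to compute once results are available as data. The sketch below is illustrative only: the function names, the status strings (`"passed"`, `"failed"`), and the defect dictionary keys are assumptions, not part of any specific tool.

```python
from collections import Counter


def execution_metrics(planned: int, statuses: list[str]) -> dict[str, float]:
    """Derive the Step 2 counts from a list of per-test-case statuses."""
    counts = Counter(statuses)
    executed = counts["passed"] + counts["failed"]
    pass_rate = round(100 * counts["passed"] / executed, 1) if executed else 0.0
    return {
        "planned": planned,
        "executed": executed,
        "passed": counts["passed"],
        "failed": counts["failed"],
        "pass_rate_pct": pass_rate,
    }


def defect_distribution(defects: list[dict[str, str]], key: str) -> dict[str, int]:
    """Count defects by one attribute, for example 'module' or 'severity'."""
    return dict(Counter(defect[key] for defect in defects))
```

The output of `defect_distribution` maps directly onto a bar or pie chart of defects per module or per severity.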

Step 3: Implemented Testing Type

The team lists every type of testing it performed on the ABC application, so that readers can see how thoroughly the application was tested.

- Smoke testing: When the QA team receives a build, it runs smoke tests to confirm that the crucial functionalities work as expected. Only after the build passes smoke testing does the team accept it and continue with the next type of testing.

- Regression testing: The team tests the entire software application, not just a particular feature or defect fix, on a build that contains defect fixes and new enhancements. This testing confirms that existing functionality still works after those changes. The team also adds and executes new test cases for the new features.

- System Integration testing: The team performs system integration testing to ensure that the application's modules work together, and with the systems they depend on, as per the requirements.

Step 4: Test Environment and Tools

The team notes all the details of the test environment used for the testing activities (such as Application URL, Database version, and the tools used).

The team can record these details in a table, with one row per item.
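One possible layout for the environment details is a two-column table. The sketch below renders a dictionary as a markdown table; the function name `environment_table`, the column headings, and every value in the usage example are placeholders, not details from the ABC application.

```python
def environment_table(details: dict[str, str]) -> str:
    """Render environment details as a two-column markdown table."""
    lines = ["| Item | Value |", "|---|---|"]
    lines += [f"| {name} | {value} |" for name, value in details.items()]
    return "\n".join(lines)


# Placeholder values for illustration only.
print(environment_table({
    "Application URL": "https://staging.example.com",
    "Database version": "(fill in)",
    "Tools": "(fill in)",
}))
```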

Step 5: Learnings during the Testing Process

The team includes information such as the critical issues they faced while testing the application and the solutions devised to overcome these issues. The intention of documenting this information is for the team to leverage it in future testing activities.

The team can represent this information as a table that pairs each issue with the solution devised for it.

Step 6: Suggestions or Recommendations

The team notes suggestions or recommendations while keeping the pertinent stakeholders in mind. These suggestions and recommendations serve as guidance during the next testing cycle.

Step 7: Exit Criteria

Exit criteria define the conditions under which testing is considered complete, such as the following:

- The team has successfully executed all its planned test cases.

- The team has closed all the critical issues.

- The team has planned the actions for all open issues, which it will address in the next release cycle.
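The three example conditions above can be checked mechanically. The following is a minimal sketch under stated assumptions: the function name and parameters are hypothetical, and a real team would substitute its own criteria and thresholds.

```python
def evaluate_exit_criteria(
    planned: int,
    executed: int,
    open_critical: int,
    open_without_plan: int,
) -> tuple[bool, list[str]]:
    """Return (criteria met?, list of concerns to highlight at sign-off)."""
    concerns = []
    if executed < planned:
        concerns.append(f"{planned - executed} planned test case(s) not executed")
    if open_critical:
        concerns.append(f"{open_critical} critical issue(s) still open")
    if open_without_plan:
        concerns.append(f"{open_without_plan} open issue(s) without a planned action")
    # The criteria are met only when no concern was recorded.
    return (not concerns, concerns)
```

Returning the concerns along with the verdict gives the report something specific to list when the criteria are not met.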

Step 8: Sign-off

If the team has fulfilled the exit criteria, the team can provide the go-ahead for the application to ‘go live.’ If the team has not fulfilled the exit criteria, the team should highlight the specific areas of concern. Further, the team should leave the decision about the application going live with the senior management and other top-level stakeholders.

Note: We have provided a free and easy-to-use Test Report Template. Check it out now!

Who Needs Test Reporting?

Test reports serve as a critical communication tool for various stakeholders involved in the software development lifecycle:

- Product Managers & Business Analysts: Evaluate release readiness, track progress, and ensure alignment with business objectives.

- Developers: Gain visibility into defect patterns, root causes, and areas requiring corrective action.

- Testers & QA Leads: Analyze execution outcomes, identify gaps, and refine the overall testing strategy.

- Senior Management: Use clear quality metrics to make informed go/no-go decisions for product releases.

Each audience needs a different view of the same data. Modern test management tools solve this with role-based dashboards rather than one-size-fits-all PDF reports.

AI Dashboards for Smart Test Reporting

Static test reports no longer meet the needs of Agile and DevOps teams. With only 39% of organizations reporting measurable EBIT impact from AI, the gap between AI adoption and AI-driven decision-making is clear. AI-powered dashboards are replacing static PDF reports with real-time, interactive quality views that close this gap.

- Automated analysis: AI scans through massive test data, identifying defect patterns and root causes faster than manual reviews.

- Predictive insights: Machine learning models can forecast areas likely to fail, helping teams focus testing where risk is highest.

- Noise reduction: AI filters out flaky or irrelevant test results, ensuring stakeholders see only actionable data.

- Real-time dashboards: Interactive, visual reports allow stakeholders to track test health continuously and make quicker release decisions.

- Business Impact: AI dashboards align with Agile and DevOps by offering instant, reliable insights. This reduces delays, cuts down defect leakage, and enables faster, higher-quality releases.
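As a point of reference for the noise-reduction idea, flakiness can be approximated even without machine learning. The sketch below uses a deliberately simple heuristic: a test is flagged when it both passed and failed against the same commit. This is an assumption-laden illustration, not a description of how any AI dashboard detects flaky tests, and the function name and input shape are hypothetical.

```python
from collections import defaultdict


def find_flaky_tests(runs: list[tuple[str, str, str]]) -> list[str]:
    """Flag tests with mixed outcomes on identical code.

    Each run is (test name, commit id, status), where status is
    'passed' or 'failed'.
    """
    outcomes: dict[tuple[str, str], set[str]] = defaultdict(set)
    for test, commit, status in runs:
        outcomes[(test, commit)].add(status)
    flaky = {
        test
        for (test, _commit), statuses in outcomes.items()
        if {"passed", "failed"} <= statuses
    }
    return sorted(flaky)
```

A test that fails consistently on one commit is not flagged, because that pattern points to a real regression, not to noise.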

Create & Analyze Effective Test Reports with TestMu AI Native Analytics

TestMu AI provides two powerful, native solutions to help teams create, analyze, and share test reports effectively:

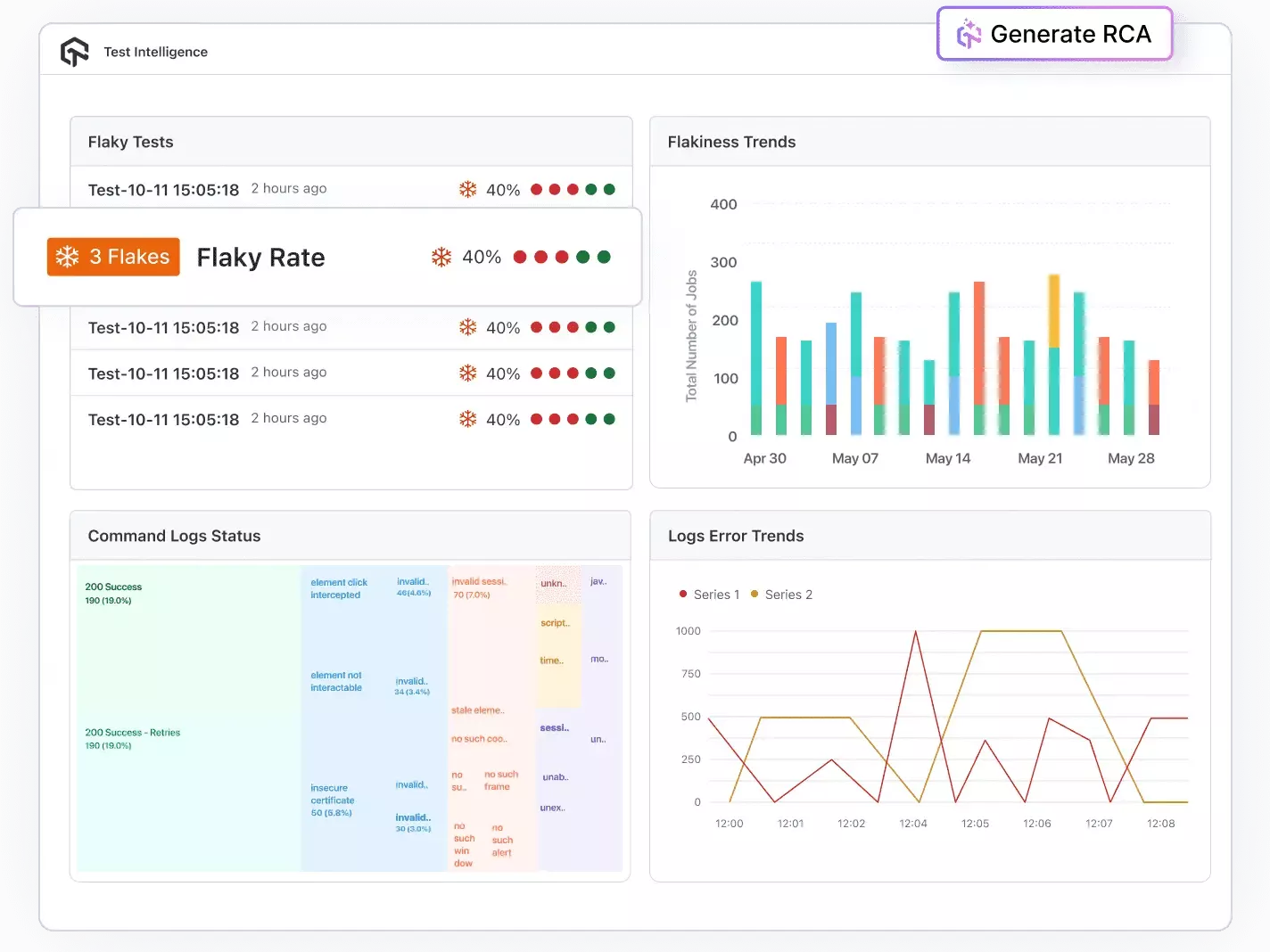

Test Intelligence: Test Intelligence uses data analytics, automation, and AI-driven insights to enhance testing accuracy, speed, and effectiveness. It helps teams detect issues early, identify patterns, optimize test suites, and make informed quality decisions using real-time and historical data.

- Detect Flakiness: Detects flaky tests and anomalies automatically.

- Perform RCA: Perform root cause analysis with AI-driven log and error classification.

- Predict Trends: Forecast error trends based on historical data.

- Customize Insights: Use customizable filters and insights to simplify automation and organize test cases efficiently.

- Spot Irregularities: Identify anomalies across test executions and environments before they impact releases.

- Profile Performance: Analyze CPU, memory, and battery usage during mobile app tests to uncover performance bottlenecks.

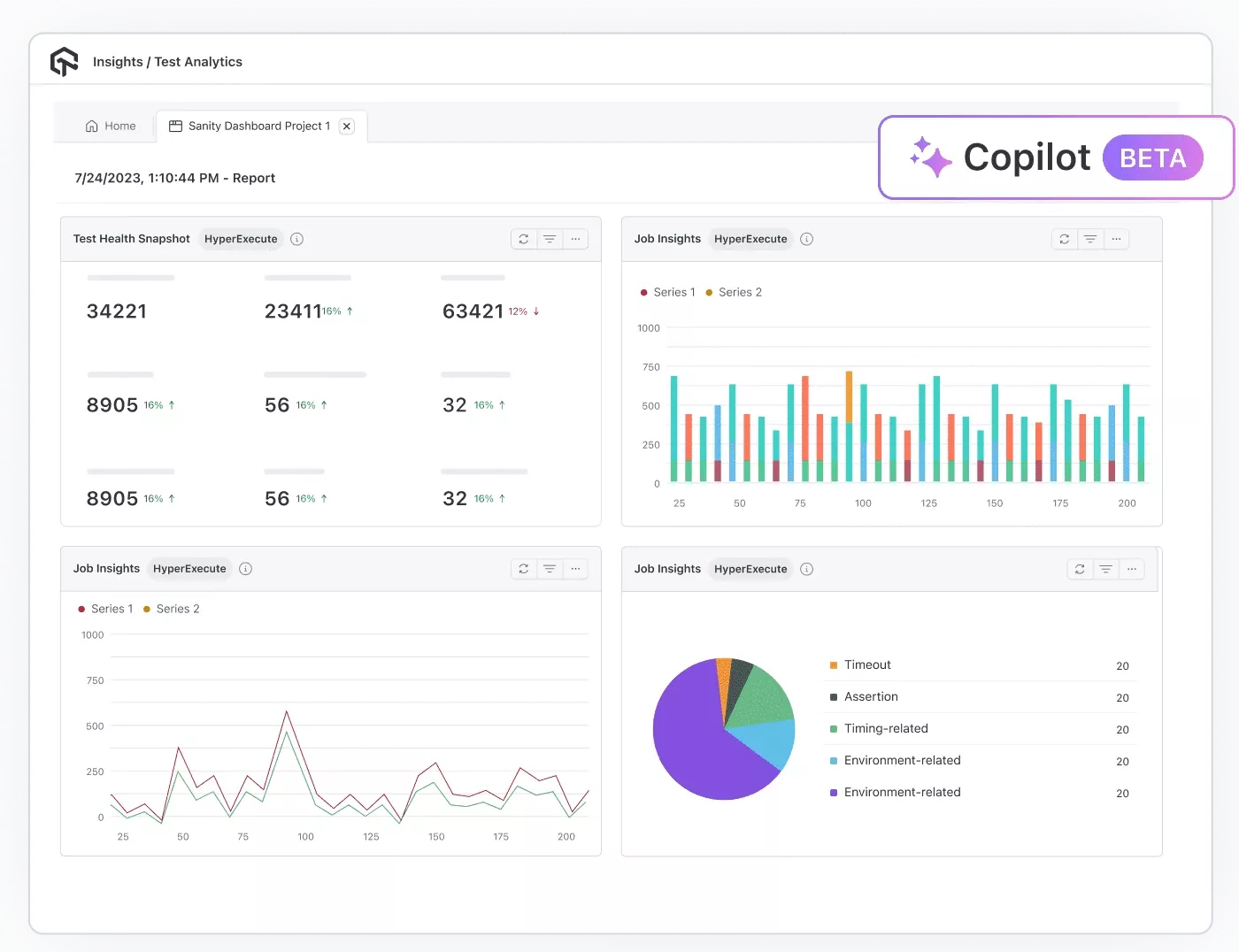

Test Analytics: Test Analytics provides data-driven visibility into testing activities through interactive dashboards and customizable metrics. It helps teams track trends, evaluate performance, optimize resources, and make informed decisions based on real-time and historical test data.

- Access Dashboards: Explore interactive dashboards with customizable widgets tailored to your workflow.

- Track Trends: Monitor historical test execution patterns and performance trends across builds.

- Optimize Parallelism: Track parallel test usage and optimize resource allocation for faster execution.

- Build Dashboards: Create personalized dashboards to follow key team metrics and securely share insights via expiring links or exportable reports.

- Use AI CoPilot: Leverage the AI CoPilot Dashboard for smart recommendations and data-driven decisions.

TestMu AI Test Intelligence vs. Test Analytics - What’s the Difference?

| Aspect | Test Intelligence | Test Analytics |

|---|---|---|

| Primary goal | Explain why tests fail; reduce noise and flakiness | Show what happened; summarize progress and quality |

| Lens | Diagnostic and predictive | Descriptive and observability |

| Best for | SDETs, developers, QE leads | PMs, QA managers, exec stakeholders |

| Key outputs | Failure clusters, flaky test list, probable root causes, anomaly signals | Execution summary, trends, coverage views, environment matrix, utilization |

| Time horizon | Near-term risk forecasting; immediate triage | Historical and real-time rollups for releases/sprints |

Challenges Faced in Creating Test Reports

- Quick Release Rhythms: Agile, DevOps, and CI/CD require rapid testing and reporting, often within days or hours instead of months. Delayed feedback can postpone releases or risk shipping low-quality products. Solution: Automate report generation, integrate testing tools into CI/CD pipelines, and use real-time dashboards for instant feedback.

- High-volume, Irrelevant Data: Test automation and device/browser proliferation generate massive data, much of which is “noise” from flaky tests, unstable environments, or false negatives. Solution: Implement test result filtering, maintain stable test environments, and prioritize high-value metrics for reporting.

- Data from Disparate Sources: Large organizations use multiple tools, frameworks, and teams (e.g., Selenium, Appium), making it hard to aggregate and standardize results. Solution: Use centralized reporting tools like TestMu AI's Test Analytics to unify results across frameworks through APIs and integration platforms.

Best Practices for Writing Effective Test Reports

- Automate report generation from CI/CD: Integrate your CI/CD pipeline to auto-generate reports after every build. Tools like TestMu AI, Allure, and ExtentReports can produce HTML reports triggered by Jenkins, GitHub Actions, or GitLab CI, eliminating manual data collection.

- Define 3-5 key metrics per audience: Executives need pass rate, critical open defects, and release risk. QA leads need test coverage gaps and flaky test counts. Developers need failure logs and defect distribution by module. One report cannot serve all three - create layered views or filtered dashboards.

- Include a "what changed" section: Every report after the first should highlight deltas: new defects since last cycle, tests added, coverage changes, and resolved blockers. This prevents the "same report, different date" problem.

- Publish within 24 hours of cycle completion: A test report published 3 days after test execution is stale. Close the cycle, generate the report, and share it via Slack/email the same day. Automated dashboards eliminate this lag entirely.

- Track defect escape rate: Measure how many production bugs were in modules marked "passed" in test reports. This is the single best metric for evaluating whether your test reports are actually predicting quality. With 88% of organizations using AI but only a third scaling enterprise-wide per McKinsey, automated test reporting is how QA teams prove AI is delivering measurable quality improvement.

- Version-control your report templates: Store templates in your repo (not in Google Docs or Confluence) so they evolve with the codebase. Include the template version in every published report for traceability.
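The defect escape rate described above can be computed from two inputs: the modules in which production bugs were found, and the modules the test report marked as passed. The sketch below follows the article's wording and expresses the result as a percentage of all production bugs. The function name, the input shapes, and the choice of denominator are assumptions, and teams often define this metric differently.

```python
def defect_escape_rate(production_bug_modules: list[str], passed_modules: set[str]) -> float:
    """Percentage of production bugs found in modules reported as 'passed'.

    production_bug_modules has one entry per production bug, naming the
    module where the bug was found.
    """
    if not production_bug_modules:
        return 0.0
    escaped = sum(1 for module in production_bug_modules if module in passed_modules)
    return 100.0 * escaped / len(production_bug_modules)
```

Tracking this number per release shows whether a "passed" status in the report is becoming a more or less reliable signal over time.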

Conclusion

Start with one change: after your next test cycle, generate a report that answers three questions for your stakeholders - what is the pass rate by module, how many critical defects are still open, and what was not tested. If your current tooling makes answering those three questions painful, it is time to switch to automated reporting.

TestMu AI's Test Analytics and Test Intelligence generate these insights automatically from your test runs, with AI-powered flaky test detection, root cause analysis, and customizable dashboards. Explore the analytics documentation to set up your first dashboard in minutes.
