Test Case Management: The Complete Guide

Learn how test case management works in practice: how to write effective test cases, structure repositories, build RTMs, eliminate test debt, and integrate with CI/CD.

Abhishek Mishra

April 22, 2026

Test case management is the discipline of authoring, versioning, executing, and retiring test cases across the SDLC, with every case traceable to a requirement and every execution linked to results.

In 2025, Forbes reported that 40% of organizations lose more than $1M every year to poor software quality. Most of that loss doesn't come from too few test cases; it comes from cases kept in spreadsheets nobody maintained, linked to requirements that changed two sprints ago.

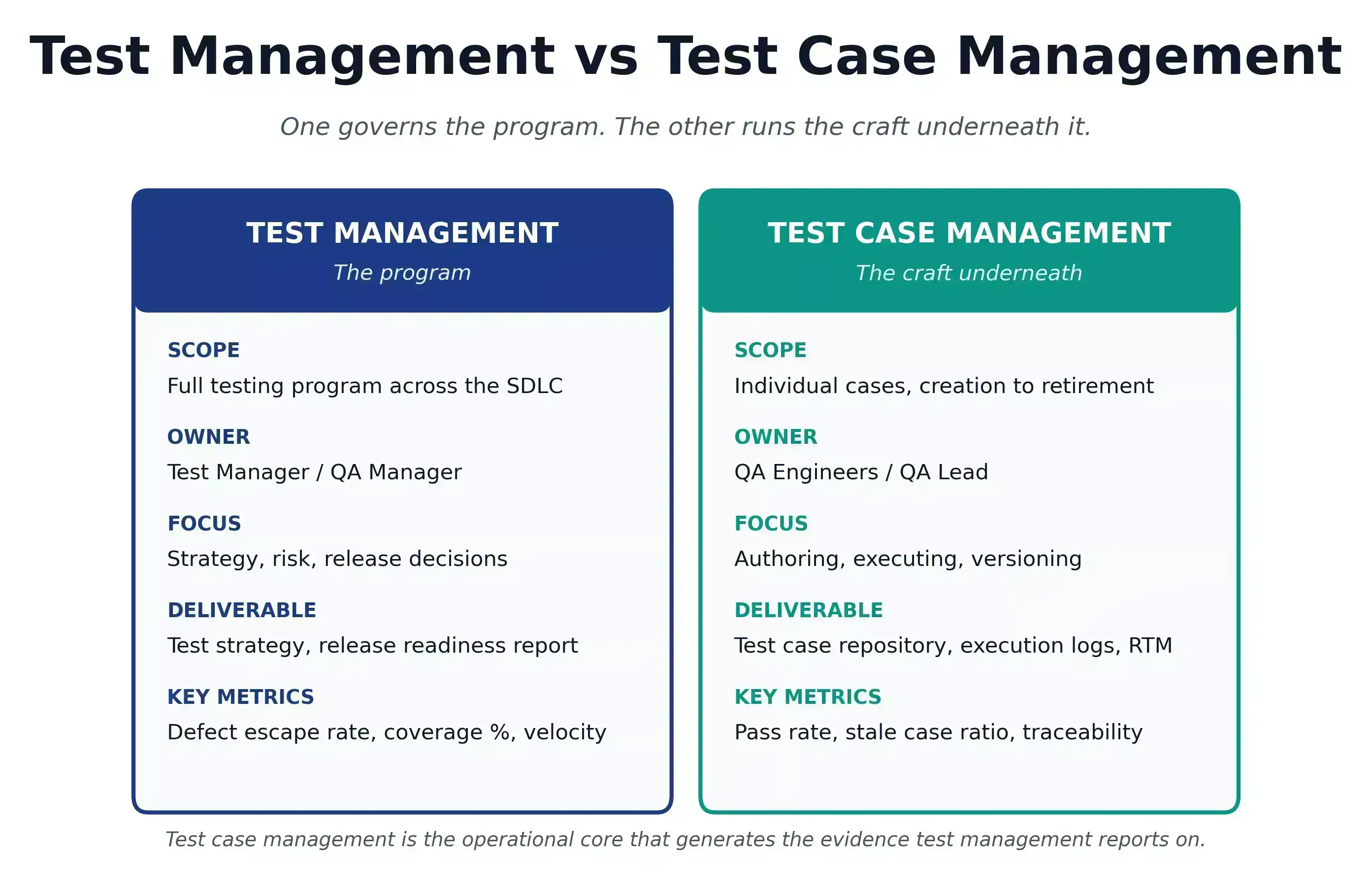

Test management is the program; test case management is the craft underneath it that produces the evidence the program reports on.

Overview

What is Test Case Management?

Test case management is the process of creating, organizing, executing, versioning, and retiring test cases with clear links to requirements and results.

How does test case management differ from test management?

- Test management: Governs overall testing strategy

- Test case management: Manages individual test cases

- Relationship: Cases generate evidence used in release decisions

What is the test case lifecycle?

- Draft: Case created

- Review: Peer validated

- Ready: Approved for execution

- Active: Used in test runs

- Retired: Feature removed or case superseded

How does test case management support Agile and CI/CD?

- Sprint-ready cases: Written alongside features

- Tag-based runs: Execute only required tests

- Pipeline integration: Automated execution feedback

How can TestMu AI support test case management?

- Unified workspace: Manage cases and runs in one place

- AI test creation: Generate cases from requirements

- Lifecycle tracking: Manage status from draft to retirement

What Is Test Case Management?

Test case management is the process of authoring, versioning, executing, and retiring test cases across the SDLC, with every case traceable to a requirement and every execution linked to results. It covers test case documentation, peer review, execution tracking, and retirement.

Test Management vs Test Case Management

| Dimension | Test Management | Test Case Management |

|---|---|---|

| Scope | Full testing program across all SDLC phases | Individual test cases from creation through retirement |

| Level | Program and release level | Test case and execution level |

| Primary focus | Strategy, planning, resourcing, risk, and reporting | Authoring, organizing, executing, versioning, and tracking test cases |

| Who leads it | Test Manager / QA Manager | QA Engineers / QA Lead |

| Key activities | Risk analysis, test estimation, team coordination, defect triage, metrics | Test case writing, peer review, versioning, execution recording, defect linking |

| Primary deliverable | Test strategy, test plan, release readiness report | Test case repository, execution records, requirement traceability matrix (RTM) |

| Key metrics | Defect escape rate, test coverage %, execution velocity, defect density | Pass rate per run, stale case ratio, defect traceability coverage |

| Relationship | Governs the full testing program and makes release decisions | Operational core of test management; generates the evidence test management reports on |

Strong test management with vague test cases still ships production bugs. A perfect test case repository with no release governance still misses defects.

Why Test Case Management Matters

Cases that have not been executed in 90+ days, have no linked requirement, or include vague steps like "verify it works" become test case debt. A stale case ratio above 30% means your coverage metrics are reporting on a product that no longer exists.

- Fewer production escapes: cases linked to requirements catch defects before ship.

- Lower fix cost: bugs caught in testing cost a fraction of what bugs caught in production cost.

- Case reusability: named preconditions and shared step libraries plug into new projects without rewrites.

- Provable release readiness: a live RTM answers "does every requirement have a passing test" before the release meeting, not during it.

- Faster sprint closes: with tag-driven execution, smoke runs finish in minutes and regression testing runs overnight.

- Audit-ready evidence: for finance, healthcare, and aviation, test case history is mandatory. Execution logs showing who ran which case, when, and whether it passed are exactly what auditors ask to see.

Who Owns the Test Case Management Process?

Four roles touch the test case management process, each at a different altitude:

- QA engineers write cases, execute runs, record results, and raise defects.

- QA leads and test managers run peer review, enforce the lifecycle, and own the RTM.

- Developers consume linked cases during code review; defects tell them exactly what broke.

- PMs and BAs verify every user story has linked cases before sprint close.

Most failures trace to role ambiguity: everyone writes cases, nobody reviews, no one owns retirement. Assign the lead explicitly.

The 12-Field Test Case Template

A test scenario is the situation: "checkout with an expired card." A test case is the execution specification: exact steps, inputs, and expected result. One scenario typically generates 3 to 8 cases.

Use this test case template as a standard. Twelve fields separate a repeatable case from one that produces different results depending on who runs it:

| Field | Example |

|---|---|

| Test Case ID | AUTH-TC-042 |

| Title | "Verify login fails when password field is empty" |

| Module / Feature | Authentication > Login |

| Preconditions | "Account [email protected] exists. App on /login." |

| Test Steps | "1. Enter [email protected]. 2. Leave Password empty. 3. Click Sign In." |

| Expected Results | "Error 'Password is required' appears. User remains on /login." |

| Test Data | Email: [email protected], Password: (empty) |

| Priority | Critical / High / Medium / Low |

| Tags | Smoke, Regression, Functional, API, Security |

| Status | Draft / Review / Ready / Active / Retired |

| Linked Requirements | US-123 |

| Linked Defects | BUG-456 |
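
As a rough illustration, the twelve fields above can be modeled as a small record with a completeness check. This is a minimal sketch, not a schema from any particular tool: the class name `CaseRecord`, the `problems` method, and the placeholder email address are assumptions made for the example, while the allowed priority and status values come from the table.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Allowed values copied from the Priority and Status rows of the template.
VALID_PRIORITIES = {"Critical", "High", "Medium", "Low"}
VALID_STATUSES = {"Draft", "Review", "Ready", "Active", "Retired"}


@dataclass
class CaseRecord:
    """Hypothetical in-memory record holding the twelve template fields."""
    case_id: str
    title: str
    module: str
    preconditions: str
    steps: List[str]
    expected_results: str
    test_data: Dict[str, str]
    priority: str
    tags: List[str]
    status: str = "Draft"
    linked_requirements: List[str] = field(default_factory=list)
    linked_defects: List[str] = field(default_factory=list)

    def problems(self) -> List[str]:
        """Return readable problems; an empty list means nothing is missing."""
        found = []
        if self.priority not in VALID_PRIORITIES:
            found.append(f"unknown priority: {self.priority}")
        if self.status not in VALID_STATUSES:
            found.append(f"unknown status: {self.status}")
        if not self.steps:
            found.append("no test steps")
        if not self.expected_results.strip():
            found.append("no expected results")
        if not self.linked_requirements:
            found.append("no linked requirement")
        return found


example = CaseRecord(
    case_id="AUTH-TC-042",
    title="Verify login fails when password field is empty",
    module="Authentication > Login",
    preconditions="Test account exists. App on /login.",
    steps=["Enter the account email", "Leave Password empty", "Click Sign In"],
    expected_results="Error 'Password is required' appears. User remains on /login.",
    test_data={"email": "user@example.com", "password": ""},  # placeholder address
    priority="Critical",
    tags=["Smoke", "Functional"],
    status="Ready",
    linked_requirements=["US-123"],
    linked_defects=["BUG-456"],
)
print(example.problems())  # prints [] because every checked field is filled in
```

A check like this could run when a case is submitted for review, so incomplete records are caught before a reviewer spends time on them.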

To learn more, read our detailed guide on the test case template with all essential fields included.

Best Practices for Writing Effective Test Cases

Five properties determine whether a case is worth running:

- Atomic scope. One case, one scenario. Combined cases produce ambiguous failures.

- Measurable expected results. "Should work" fails. "Error 'Password is required' appears below the field" passes.

- Named test data. Not "valid payment details." Specify the exact card number, amount, and billing address.

- Independent execution. If a case depends on a prior case's outcome, one failure breaks the whole chain.

- Negative and boundary coverage. For every happy path, write one invalid input and one boundary case.
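
The "measurable expected results" and "named test data" properties lend themselves to a simple automated check. The sketch below flags vague wording in a case's text. The phrase list is an assumption: it combines the examples quoted in this guide with one extra phrase ("works as expected") added for illustration, and a real team would tune it to its own habits.

```python
from typing import List

# Phrases treated as vague. The first three appear in this guide;
# "works as expected" is an extra, assumed example.
VAGUE_PHRASES = (
    "should work",
    "verify it works",
    "valid payment details",
    "works as expected",
)


def find_vague_phrases(text: str) -> List[str]:
    """Return every vague phrase found in the text, ignoring letter case."""
    lowered = text.lower()
    return [phrase for phrase in VAGUE_PHRASES if phrase in lowered]


print(find_vague_phrases("Login should work"))
print(find_vague_phrases("Error 'Password is required' appears below the field"))
```

The first call reports `should work`; the second finds nothing, because the expected result names an observable outcome.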

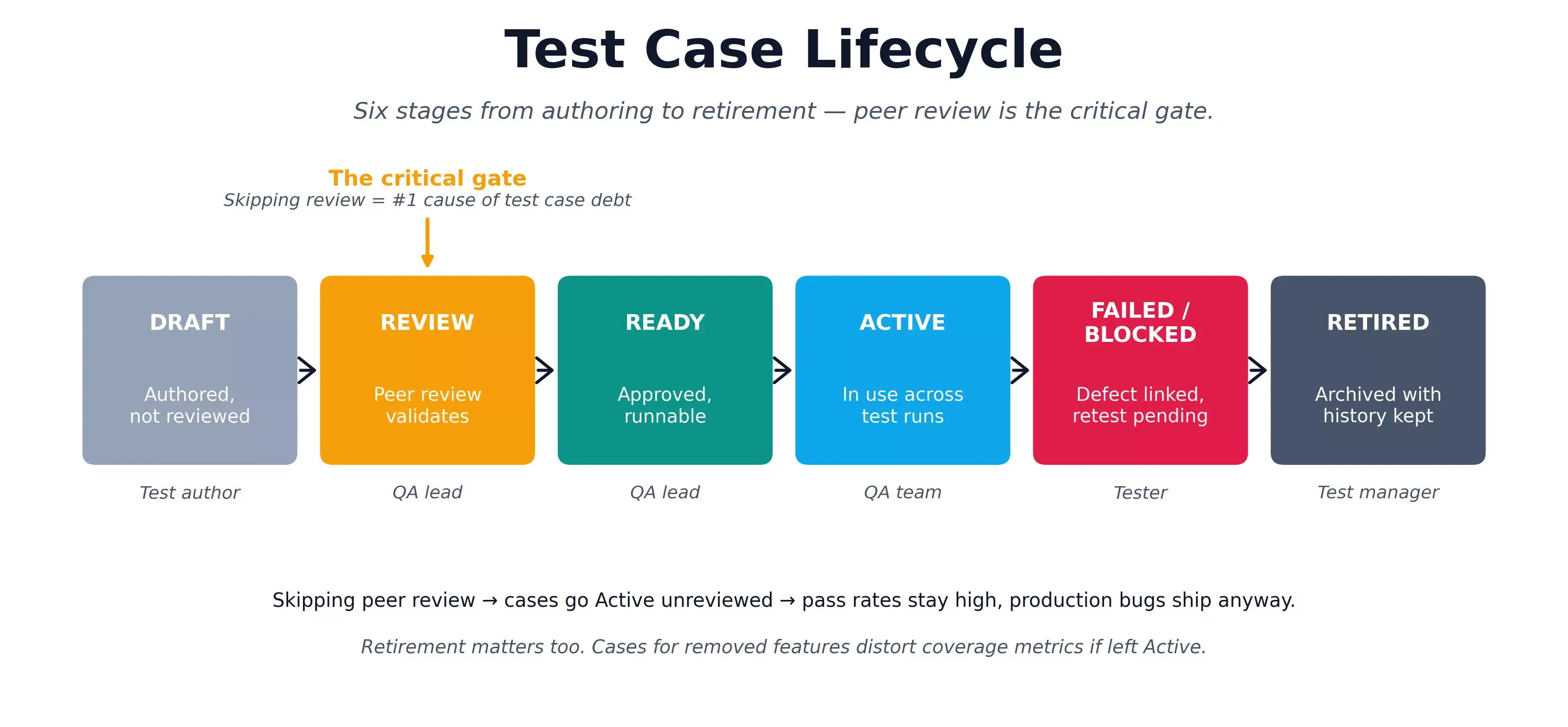

Test Case Lifecycle

| Stage | What Happens | Owner |

|---|---|---|

| Draft | Authored, not yet reviewed. May be incomplete. | Test author |

| Review | Peer review validates completeness and links. | QA lead |

| Ready | Approved and available for test runs. | QA lead |

| Active | In use across one or more test runs. | QA team |

| Failed / Blocked | Execution failed or blocked; defect linked. | Tester |

| Retired | Feature removed or case superseded. History preserved. | Test manager |

The Review stage is the key discipline. Teams under sprint pressure skip peer review and promote Draft cases straight to Active, which is the single most common cause of test case debt. Retirement matters equally: cases for removed features that stay Active distort coverage metrics.
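
One way to enforce the Review stage is to encode the lifecycle as a small state machine that rejects shortcuts such as Draft to Active. The stage names below come from the lifecycle table; the exact set of allowed transitions is an assumption made for this sketch, since the guide does not list them.

```python
from typing import Dict, Set

# Assumed transition map. Note there is no Draft -> Active edge,
# so peer review cannot be skipped.
ALLOWED_TRANSITIONS: Dict[str, Set[str]] = {
    "Draft": {"Review"},
    "Review": {"Draft", "Ready"},          # reviewer can send a case back
    "Ready": {"Active", "Retired"},
    "Active": {"Failed/Blocked", "Retired"},
    "Failed/Blocked": {"Active", "Retired"},
    "Retired": set(),                      # terminal; history is preserved
}


def transition(current: str, target: str) -> str:
    """Return the new status, or raise ValueError for a disallowed move."""
    if target not in ALLOWED_TRANSITIONS.get(current, set()):
        raise ValueError(f"cannot move a case from {current} to {target}")
    return target


status = transition("Draft", "Review")
status = transition(status, "Ready")
print(status)  # prints Ready

try:
    transition("Draft", "Active")
except ValueError as error:
    print(error)  # prints the rejection message for the skipped review
```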

Test Case Repository Structure

Flat repositories with hundreds of ungrouped cases are unusable at scale. Four levels - Project, Module, Feature, Test Type - mirror how the product is built.

E-Commerce Platform

├── Authentication

│   ├── Login - Functional, Negative, Boundary

│   └── Registration - Functional, Negative, Security

└── Checkout

    ├── Cart - Functional, Edge Cases

    └── Payment - Functional, Negative, Security

Naming: [Module]-[Feature]-[Scenario]-[Condition]. Example: AUTH-LOGIN-Negative-EmptyPassword.

Tag taxonomy:

- Smoke: build verification. Max 30 cases.

- Critical Path: login, checkout, data save.

- Regression: full suite. Nightly or pre-release.

- Functional: current sprint features.

- Edge Case: boundaries, invalid inputs, error paths.

No case enters a test run without a linked requirement and Ready status. Triage quarterly - retire anything not executed in 90 days.
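
The naming convention and the Smoke cap above are easy to check mechanically. The following sketch assumes uppercase module and feature codes and CamelCase scenario and condition parts, which matches the single example given (`AUTH-LOGIN-Negative-EmptyPassword`) but is otherwise a guess; the function names are hypothetical.

```python
import re
from typing import Dict, List

# Assumed shape: UPPERCASE-UPPERCASE-CamelCase-CamelCase.
NAME_PATTERN = re.compile(r"[A-Z]+-[A-Z]+-[A-Z][A-Za-z]*-[A-Z][A-Za-z0-9]*")

SMOKE_LIMIT = 30  # "Max 30 cases" from the tag taxonomy


def is_valid_name(name: str) -> bool:
    """True when the whole name follows Module-Feature-Scenario-Condition."""
    return NAME_PATTERN.fullmatch(name) is not None


def smoke_overflow(tags_by_case: Dict[str, List[str]]) -> int:
    """Return how many Smoke cases exceed the limit (0 when within it)."""
    smoke_count = sum(1 for tags in tags_by_case.values() if "Smoke" in tags)
    return max(0, smoke_count - SMOKE_LIMIT)


print(is_valid_name("AUTH-LOGIN-Negative-EmptyPassword"))  # prints True
print(is_valid_name("login empty password"))               # prints False
```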

Test Case Design Techniques

Design techniques are systematic methods for deriving a complete, non-redundant set of test cases from requirements. For a deep dive into each method, see test case design techniques.

| Technique | Best For |

|---|---|

| Equivalence Partitioning | Form inputs, numeric ranges, text fields; one case per valid/invalid partition. |

| Boundary Value Analysis | Age fields, quantity limits, date ranges; most off-by-one defects live at edges. |

| Decision Table Testing | Pricing logic, discounts, conditional access; covers every combination. |

| State Transition Testing | Order flows, session management; tests valid and invalid transitions. |

| Use Case Testing | Checkout, onboarding; covers real user paths including failures. |

| Pairwise Testing | Multi-config features; reduces N-way combinations to manageable coverage. |
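
To make one of these techniques concrete, here is a minimal sketch of boundary value analysis for an inclusive integer range. It uses the common variant that tests each boundary, its inside neighbor, and its outside neighbor; the function name and the 18 to 65 age range are illustrative assumptions.

```python
from typing import List


def boundary_values(minimum: int, maximum: int) -> List[int]:
    """Return the values just outside, on, and just inside each boundary."""
    if minimum > maximum:
        raise ValueError("minimum must not exceed maximum")
    candidates = {
        minimum - 1, minimum, minimum + 1,
        maximum - 1, maximum, maximum + 1,
    }
    return sorted(candidates)  # the set removes duplicates for narrow ranges


# Hypothetical age field that accepts 18 through 65 inclusive.
print(boundary_values(18, 65))  # prints [17, 18, 19, 64, 65, 66]
```

Here 17 and 66 are the invalid inputs expected to be rejected, and the other four values are expected to be accepted.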

Execution Planning and Test Case Execution Tracking

The repository is what you have. A test run is what you execute against a specific build. Assemble runs by risk, not module completeness:

- Smoke: run first. Fail here = return the build.

- Critical Path: login, primary operations, payment.

- Functional: new features from the current sprint.

- Regression: all approved cases from previous sprints. Nightly.

- Edge Cases: run last, always for payment and auth.

Result states: Passed, Failed, Blocked, Skipped. Blocked and Skipped need a documented reason. Avoid a "Partial" state; it produces reports nobody can act on.

Cases per user story: minimum 3 (happy path, invalid, boundary). Payment flows need 20+. An FAQ page needs 3.
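
The risk-based run order and the result-state rules described in this section can be sketched as two small helpers. The tag order follows the list above; treating a multi-tagged case by its highest-risk tag, and the function names themselves, are assumptions for illustration.

```python
from typing import List, Tuple

# Execution order from the run assembly list, highest risk first.
RUN_ORDER = ["Smoke", "Critical Path", "Functional", "Regression", "Edge Case"]
VALID_STATES = {"Passed", "Failed", "Blocked", "Skipped"}


def run_rank(tags: List[str]) -> int:
    """Rank a case by its earliest-running tag; untagged cases go last."""
    ranks = [RUN_ORDER.index(tag) for tag in tags if tag in RUN_ORDER]
    return min(ranks) if ranks else len(RUN_ORDER)


def order_run(cases: List[Tuple[str, List[str]]]) -> List[str]:
    """Return case IDs sorted into execution order (stable for ties)."""
    return [case_id for case_id, tags in sorted(cases, key=lambda c: run_rank(c[1]))]


def check_result(state: str, reason: str = "") -> None:
    """Reject unknown states and Blocked/Skipped results without a reason."""
    if state not in VALID_STATES:
        raise ValueError(f"unknown result state: {state}")
    if state in {"Blocked", "Skipped"} and not reason.strip():
        raise ValueError(f"{state} requires a documented reason")


cases = [
    ("TC-3", ["Edge Case"]),
    ("TC-1", ["Regression", "Smoke"]),
    ("TC-2", ["Functional"]),
]
print(order_run(cases))  # prints ['TC-1', 'TC-2', 'TC-3']
```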

Requirement Traceability Matrix (RTM)

The RTM answers the one question every release requires: does every requirement have a passing test case?

| Requirement | Test Cases | Execution | Coverage |

|---|---|---|---|

| US-101: Log in with valid credentials | TC-001, 002, 003 | 2 Passed, 1 Failed | At Risk |

| US-102: Fail with invalid password | TC-004 | Passed | Covered |

| US-103: Password reset via email | (none) | Not Executed | Gap |

- Gap: no test case. Unacceptable before release.

- At Risk: tests exist but at least one failed. Block release until fixed.

- Covered: all linked cases passed.

Forward traceability finds coverage gaps. Backward traceability finds orphaned cases. Run both. Keep the RTM live in Test Management - a requirement that changes mid-sprint should immediately surface which cases are now stale.
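
The coverage statuses and the two traceability directions can be expressed in a few lines. This is a sketch under stated assumptions: the data shapes and function names are hypothetical, and a linked case that has not yet passed (including one not executed) is treated as At Risk, which the guide does not specify.

```python
from typing import Dict, List


def coverage_status(results: List[str]) -> str:
    """Map the results of a requirement's linked cases to an RTM status."""
    if not results:
        return "Gap"        # forward traceability: no case at all
    if all(result == "Passed" for result in results):
        return "Covered"
    return "At Risk"        # assumption: anything short of all-passed


def orphaned_cases(requirements_by_case: Dict[str, List[str]]) -> List[str]:
    """Backward traceability: cases that link to no requirement."""
    return sorted(case for case, reqs in requirements_by_case.items() if not reqs)


# Execution results per requirement, mirroring the example table.
rtm = {
    "US-101": ["Passed", "Passed", "Failed"],
    "US-102": ["Passed"],
    "US-103": [],
}
for requirement, results in rtm.items():
    print(requirement, coverage_status(results))

print(orphaned_cases({"TC-001": ["US-101"], "TC-099": []}))  # prints ['TC-099']
```

The loop reports US-101 as At Risk, US-102 as Covered, and US-103 as Gap, matching the table.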

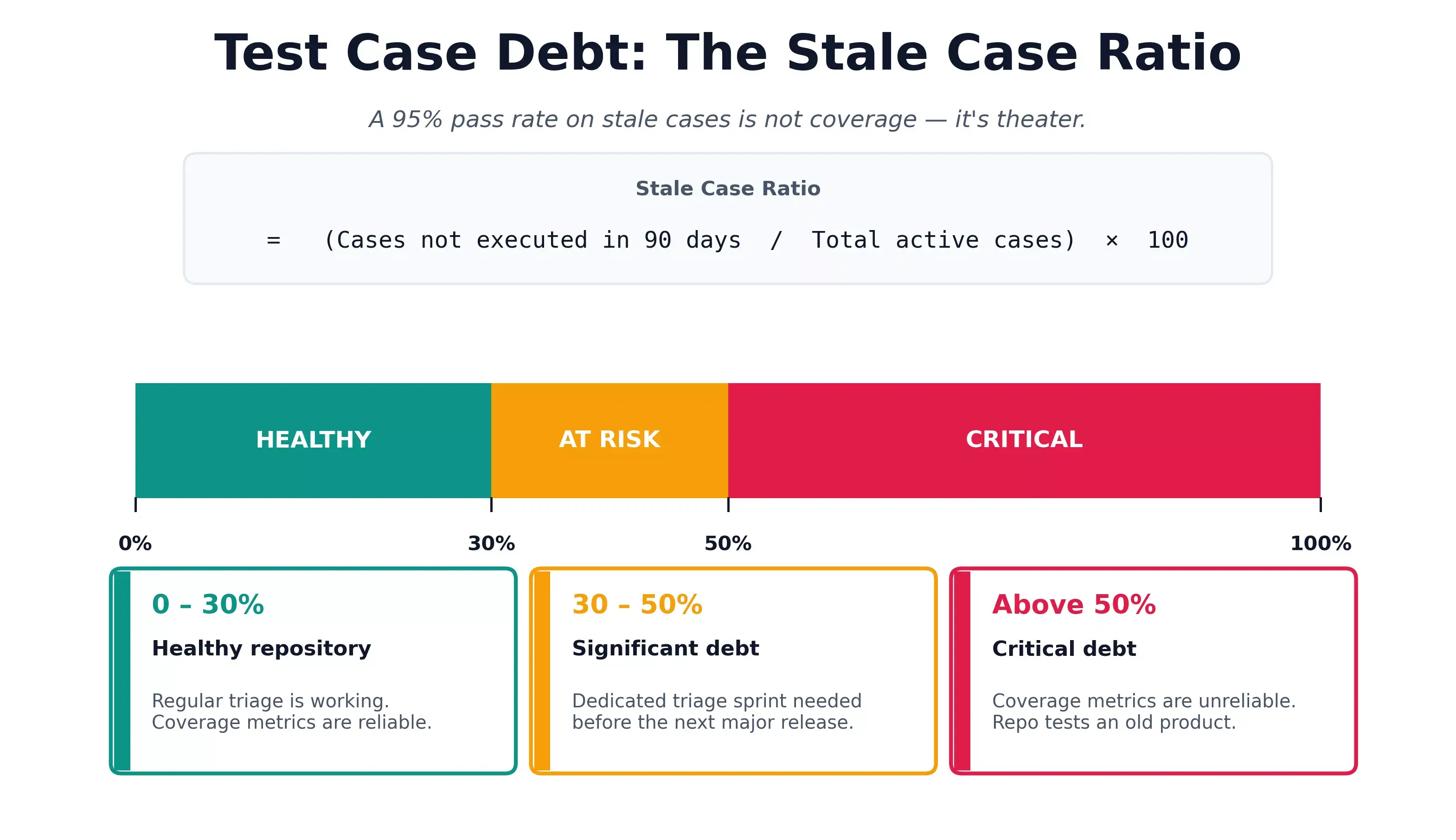

Test Case Debt

Test case debt is the accumulation of outdated, duplicate, or never-executed cases. A repository with 4,000 cases and a 95% pass rate looks healthy until production defects reveal the cases are passing on behavior from two releases ago.

Measure it with the stale case ratio:

- Stale Case Ratio = (Cases not executed in 90 days / Total active cases) x 100

- Above 30%: significant debt. Triage sprint needed.

- Above 50%: critical. Coverage metrics are unreliable.
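
The stale case ratio and its thresholds can be computed as follows. This is a minimal sketch: the input shape (a mapping from case ID to last execution date, with `None` for never-executed cases) and the "acceptable" label for ratios at or below 30% are assumptions, since the guide only names the two upper bands.

```python
from datetime import date, timedelta
from typing import Dict, Optional


def stale_case_ratio(
    last_executed: Dict[str, Optional[date]],
    today: date,
    window_days: int = 90,
) -> float:
    """Percentage of active cases not executed within the window."""
    if not last_executed:
        return 0.0
    cutoff = today - timedelta(days=window_days)
    stale = sum(1 for ran in last_executed.values() if ran is None or ran < cutoff)
    return 100.0 * stale / len(last_executed)


def debt_level(ratio: float) -> str:
    """Classify a ratio using the 30% and 50% thresholds."""
    if ratio > 50:
        return "critical"
    if ratio > 30:
        return "significant"
    return "acceptable"  # assumed label; not defined in the guide


active_cases = {
    "TC-001": date(2026, 4, 1),    # recent
    "TC-002": date(2025, 12, 1),   # older than 90 days
    "TC-003": None,                # never executed
    "TC-004": date(2026, 3, 1),    # recent
}
ratio = stale_case_ratio(active_cases, today=date(2026, 4, 22))
print(ratio, debt_level(ratio))  # prints 50.0 significant
```

Two of the four cases are stale, giving 50%, which is above the 30% threshold but not above 50%.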

Other signs: high pass rate with production escapes; testers skipping cases informally; review time over 30 minutes per case; duplicate cases across modules.

Reduction strategy:

- Quarterly triage: tag every case untouched in 90 days as a retirement candidate.

- Retirement policy: feature removed = cases retired immediately.

- Coverage gap analysis: forward and backward traceability, together.

- Reusable step libraries: login, data setup, teardown extracted once, referenced everywhere.

Test Case Management in Agile and CI/CD

Test case management in agile

In agile, cases are written during sprint planning, reviewed mid-sprint, and executed in the final days. The failure mode: each sprint adds cases and nothing retires. Teams that retire roughly one case for every three added are far less likely to hit the wall where the regression run outlasts the sprint.

Test case management in CI/CD

In CI/CD, tags become pipeline config - that's what prevents a 4,000-case suite from running on every pull request.

| Stage | Tags | Trigger | Gate |

|---|---|---|---|

| PR Check | Smoke, Critical Path | Every PR | 100% pass to merge |

| Merge to Main | Smoke, Critical Path, Functional | Every merge | 100% Smoke; 90%+ Functional |

| Nightly | Regression, Edge Case | Scheduled | Blockers addressed before standup |

| Pre-Release | All Active | RC promotion | No open Critical defects; 100% RTM coverage |

Automation results push back to the test management system so manual and automated results reconcile into one release picture. A test case version bump should trigger a review flag on the automated script implementing it.
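
As an illustration of tags acting as pipeline config, the first two rows of the table can be written as data plus a gate check. This is a sketch, not the configuration format of any CI system: the stage keys, the dictionary layout, and the rule that a tag with no results fails the gate are all assumptions.

```python
from typing import Dict, List

# Minimum pass rate (percent) per tag for each stage, from the table.
# Only thresholds the table states are listed.
STAGE_GATES: Dict[str, Dict[str, float]] = {
    "pr_check": {"Smoke": 100.0, "Critical Path": 100.0},
    "merge_to_main": {"Smoke": 100.0, "Functional": 90.0},
}


def pass_rate(results: List[bool]) -> float:
    """Percentage of passing results; an empty list counts as 0."""
    if not results:
        return 0.0
    return 100.0 * sum(results) / len(results)


def gate_passes(stage: str, results_by_tag: Dict[str, List[bool]]) -> bool:
    """True when every gated tag meets its minimum pass rate."""
    thresholds = STAGE_GATES[stage]
    return all(
        pass_rate(results_by_tag.get(tag, [])) >= minimum
        for tag, minimum in thresholds.items()
    )


merge_results = {
    "Smoke": [True, True, True],
    "Functional": [True] * 9 + [False],  # 9 of 10 passed
}
print(gate_passes("merge_to_main", merge_results))  # prints True
```

With nine of ten Functional cases passing, the rate is exactly 90%, which meets the "90%+" gate; one more failure would block the merge.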

Flaky test management

Treat flaky test cases as quality debt, not as an infrastructure nuisance. Set a two-sprint investigation SLA: quarantine cases that behave non-deterministically, and fix the genuinely unstable code behind them. Flaky cases left in the pipeline teach teams to override failures.
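
One simple heuristic for finding quarantine candidates is to look for cases that both passed and failed against the same build. The sketch below assumes run history is available as `(case_id, build, passed)` tuples; that shape, the function name, and the heuristic itself are illustrative assumptions rather than a method this guide prescribes.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple


def flaky_candidates(history: List[Tuple[str, str, bool]]) -> List[str]:
    """Return case IDs with mixed outcomes on at least one identical build."""
    outcomes: Dict[Tuple[str, str], Set[bool]] = defaultdict(set)
    for case_id, build, passed in history:
        outcomes[(case_id, build)].add(passed)
    flaky = {case_id for (case_id, _), seen in outcomes.items() if len(seen) == 2}
    return sorted(flaky)


history = [
    ("TC-010", "build-77", True),
    ("TC-010", "build-77", False),  # same build, different outcome
    ("TC-011", "build-77", False),
    ("TC-011", "build-78", True),   # changed between builds: not flagged
]
print(flaky_candidates(history))  # prints ['TC-010']
```

A case whose result changes between builds may simply reflect a code change, so only same-build disagreement is flagged here.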

TestMu AI for Test Case Management

TestMu AI is an AI test management platform covering authoring, lifecycle, live RTM, CI/CD integration, and two-way defect sync in one workspace, with no coordination required between a spreadsheet, a tracker, and a dashboard.

- AI-generated test cases: KaneAI produces structured cases from prompts, user stories, or requirement docs, each with preconditions, steps, expected results, priority, and tags.

- Lifecycle with status tracking: Unverified, Faulty, Ready, Live, Archived. Unverified cases cannot enter active runs.

- Test case deduplication: built-in capabilities identify similar cases and enable test case reuse to reduce duplication and keep coverage accurate.

- Execution on 10,000+ real browsers and devices: manual, automated, and exploratory testing results share one workspace.

- Two-way Jira and Azure DevOps sync: failed cases auto-create issues; defect closure updates both systems.

Conclusion

A test case repository doesn't win on volume. It wins on evidence: every requirement linked, every execution recorded, every stale or duplicate case retired.

Teams that treat test case management as a practice rather than a tool feature ship fewer production escapes and make release decisions from data instead of meetings.

Six habits compound over time: write atomic cases with measurable expected results, link every case to a requirement, enforce peer review before Active status, deduplicate quarterly by linked requirement, track the stale case ratio, and keep the RTM live. TestMu AI's Test Management handles all six in one workspace. See the test manager docs to set up your first test run and coverage view in under 20 minutes.
