AI Testing Services: What QA Teams Need to Know in 2026

Prince Dewani

March 23, 2026

AI testing services use artificial intelligence and machine learning to optimize the software testing lifecycle. They enhance speed, coverage, and efficiency through self-healing automation, intelligent test generation, and visual AI testing.

This guide covers what these services include, which capabilities matter, when they make practical sense, and how to evaluate them.

Overview

AI testing services apply artificial intelligence and machine learning to optimize the software testing lifecycle, improving speed, coverage, and efficiency across test creation, execution, and validation.

Key aspects of AI in software testing:

- Self-Healing Test Scripts: Automatically repair broken element locators when UI changes, reducing manual maintenance across releases.

- Intelligent Test Generation: Converts plain-English prompts and project documents into structured, executable test scenarios with assertions.

- Visual AI Testing: Compares UI screenshots across browsers and devices using AI image analysis to catch pixel-level defects.

- Predictive Defect Detection: Analyzes historical test data and code changes to flag high-risk areas before test execution begins.

- AI Agent Validation: Evaluates chatbot, LLM, and voice agent outputs for hallucination, bias, toxicity, and drift before production.

Top AI testing platforms in 2026:

- TestMu AI: Full-stack agentic AI quality engineering platform for AI-native testing, including KaneAI, HyperExecute, Test Manager, SmartUI, Test Intelligence, and more.

- Katalon: Unified automation platform for web, mobile, API, and desktop with AI self-healing.

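- Tricentis Tosca: Enterprise codeless test automation with model-based approach and 160+ technology support.

- Functionize: AI-powered testing platform for autonomous test creation, self-healing execution, and cloud-scale parallel runs across web applications.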

- ACCELQ: Codeless AI test automation for web, API, and enterprise applications.

What Are AI Testing Services?

AI testing services apply AI and ML across the software testing lifecycle to automate test creation, heal broken scripts, detect defects predictively, and validate AI system outputs for accuracy and bias.

These services apply artificial intelligence and machine learning to every stage of software testing: test case generation, script maintenance, test execution, defect detection, and AI system validation. The goal is to reduce manual effort, catch defects earlier, and deliver faster feedback in continuous delivery pipelines.

89% of organizations are piloting or deploying Gen AI in quality engineering workflows. Only 15% have scaled it to enterprise level. Top barriers: integration complexity (64%), data privacy risks (67%), and hallucination concerns (60%). [1]

AI testing services cover two distinct areas:

- AI Quality Engineering: Uses AI and ML to test traditional software. Generates test cases from requirements, self-heals broken locators, prioritizes regression runs by code change risk, and orchestrates parallel execution across browsers and devices. Targets deterministic web and mobile applications.

- Quality Engineering for AI Systems: Validates AI-powered features for correctness, fairness, and safety. Chatbots, recommendation engines, LLMs, and voice agents produce non-deterministic outputs. Testing evaluates accuracy, bias, hallucination rate, toxicity, and drift over time. Required for any organization shipping AI features to production.

A complete platform covers both. Addressing only one leaves quality gaps that compound with every release.
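
To make "prioritizes regression runs by code change risk" concrete, here is a minimal sketch of one way such a ranking could work. It is not how any particular platform does it: the `RegressionTest` structure, the coverage mapping, and the 0.7/0.3 weights are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegressionTest:
    name: str
    covered_files: frozenset[str]  # files this test exercises (assumed to be known)
    recent_failure_rate: float     # share of recent runs that failed, 0.0 to 1.0


def risk_score(test: RegressionTest, changed_files: set[str]) -> float:
    """Blend change overlap with failure history. The weights are illustrative."""
    if not test.covered_files:
        overlap = 0.0
    else:
        overlap = len(test.covered_files & changed_files) / len(test.covered_files)
    return 0.7 * overlap + 0.3 * test.recent_failure_rate


def prioritize(tests: list[RegressionTest], changed_files: set[str]) -> list[RegressionTest]:
    """Return tests ordered from highest to lowest risk score."""
    return sorted(tests, key=lambda t: risk_score(t, changed_files), reverse=True)


suite = [
    RegressionTest("test_checkout", frozenset({"cart.py", "payment.py"}), 0.10),
    RegressionTest("test_profile", frozenset({"profile.py"}), 0.02),
    RegressionTest("test_search", frozenset({"search.py", "index.py"}), 0.30),
]
ordered = prioritize(suite, changed_files={"payment.py"})
print([t.name for t in ordered])
# prints ['test_checkout', 'test_search', 'test_profile']
```

A commit that touches `payment.py` pushes the checkout test to the front, while failure history breaks the tie between tests the change does not touch. A production system would likely learn such weights from historical data rather than hard-code them.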

What Capabilities Should AI Testing Services Include?

AI testing services should include natural language test authoring, self-healing scripts, AI test orchestration, visual regression testing, real device coverage, and AI agent validation.

The most complete platforms span authoring, orchestration, visual validation, device coverage, and AI system testing. Teams comparing AI testing tools should evaluate each capability against the business outcome it delivers.

| Capability | What It Does | Business Outcome |
|---|---|---|
| Natural Language Test Authoring | Creates test cases from plain-English prompts instead of coded scripts | Non-technical members contribute to coverage; authoring drops from hours to minutes |
| Self-Healing Test Scripts | Updates element locators automatically when UI changes using ML-trained detection | Reduces maintenance overhead; fewer false failures in CI/CD pipelines |
| AI Test Orchestration | Distributes tests across infrastructure with smart retries and fail-fast logic | Reduces test execution time; feedback loops shorten from hours to minutes |
| Visual Regression Testing | Compares UI screenshots across browsers and devices using AI image analysis | Catches pixel-level bugs functional tests miss; filters rendering noise |
| Real Device and Cross-Browser Coverage | Runs tests on real physical devices and browser/OS combinations in the cloud | Validates real-world experience without in-house device labs |
| AI Agent and Model Validation | Tests AI agents, chatbots, and ML models for hallucination, bias, toxicity, and drift | Prevents reputational and regulatory risk before AI features reach production |
| Multi-Format Test Input | Generates scenarios from any format including PRDs, Jira tickets, PDFs, images, audio, and video | Eliminates manual requirement-to-test translation; maintains traceability |
| Framework-Agnostic Code Export | Exports automation code in Playwright, Selenium, Cypress, or Appium | Avoids vendor lock-in; teams own their test code |
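
The visual regression row mentions filtering rendering noise. The sketch below shows the simplest version of that idea: a plain per-pixel tolerance plus a changed-area threshold. This is a deliberate stand-in, not AI image analysis, and the function names and threshold values are assumptions for illustration only.

```python
Image = list[list[int]]  # grayscale pixels, 0 to 255, stored row by row


def diff_ratio(baseline: Image, candidate: Image, pixel_tolerance: int = 8) -> float:
    """Fraction of pixels whose brightness differs by more than pixel_tolerance."""
    if len(baseline) != len(candidate) or any(
        len(a) != len(b) for a, b in zip(baseline, candidate)
    ):
        raise ValueError("images must have identical dimensions")
    total = sum(len(row) for row in baseline)
    if total == 0:
        return 0.0
    changed = sum(
        1
        for row_a, row_b in zip(baseline, candidate)
        for a, b in zip(row_a, row_b)
        if abs(a - b) > pixel_tolerance
    )
    return changed / total


def is_regression(baseline: Image, candidate: Image, max_ratio: float = 0.01) -> bool:
    """Flag a regression when more than max_ratio of the pixels changed."""
    return diff_ratio(baseline, candidate) > max_ratio


baseline = [[200] * 10 for _ in range(10)]
noisy = [[203] * 10 for _ in range(10)]   # slight brightness shift everywhere
broken = [row[:] for row in baseline]
for y in range(3):
    for x in range(3):
        broken[y][x] = 0                  # a 3x3 block rendered black

print(is_regression(baseline, noisy))   # prints False
print(is_regression(baseline, broken))  # prints True
```

The uniform three-level brightness shift stays under the tolerance and is ignored, while the black block changes 9% of the pixels and is flagged. Model-based comparison aims to make the same distinction without hand-tuned thresholds.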

Here is what matters in each:

- Natural Language Test Authoring: Converts plain-English instructions into executable, multi-step test scenarios with built-in assertions. Context awareness across the full user journey is what separates production-grade NLP testing from single-step prompt converters.

- Self-Healing Test Scripts: Identifies UI components by semantic role using ML-trained detection, not by DOM position. Semantic understanding is what makes AI UI testing reliable when layouts change between releases.

- AI Agent and Model Validation: Evaluates chatbot, voice agent, and LLM responses for hallucination rate, bias, toxicity, tone consistency, and context retention across multi-turn conversations. Non-deterministic outputs require statistical evaluation, not binary pass/fail. This is what AI testing agents are built for.

- Framework-Agnostic Code Export: Exports test code to Playwright, Selenium, Cypress, or Appium. Portable code means your team owns the tests regardless of which platform generated them.
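
The self-healing point above, identifying elements by semantic role rather than DOM position, can be sketched with a rule-based fallback. This is an assumption-laden simplification: real platforms describe ML-trained detection, whereas this example only matches on role and accessible name, and the DOM is modeled as a hypothetical list of dictionaries.

```python
from typing import Optional

Element = dict[str, str]


def find_element(
    dom: list[Element], element_id: str, role: str, name: str
) -> tuple[Optional[Element], bool]:
    """Return (element, healed).

    healed is True when the id lookup failed and the role/name fallback matched.
    """
    # First choice: the brittle locator recorded when the test was written.
    for el in dom:
        if el.get("id") == element_id:
            return el, False
    # Fallback: match on what the element is (role) and what it says (name).
    for el in dom:
        if el.get("role") == role and el.get("name", "").strip().lower() == name.lower():
            return el, True
    return None, False


old_dom = [{"id": "submit-btn", "role": "button", "name": "Place order"}]
new_dom = [{"id": "checkout-cta", "role": "button", "name": "Place order"}]

element, healed = find_element(old_dom, "submit-btn", "button", "Place order")
print(element["id"], healed)  # prints submit-btn False

element, healed = find_element(new_dom, "submit-btn", "button", "Place order")
print(element["id"], healed)  # prints checkout-cta True
```

Returning the `healed` flag matters in practice: a healed locator should be reported so someone can confirm the match and update the stored locator, rather than silently passing.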

When Do AI Testing Services Make Sense?

AI testing services make sense when flaky tests slow releases, AI features ship to production, compliance requires continuous validation, or QA is the release bottleneck.

They solve specific bottlenecks. Here is when they pay off and when they do not.

You need them when:

- Flaky Tests Are Slowing Your Release Cycle: Your team spends more time debugging false failures than finding real bugs. Self-healing scripts and intelligent retry logic reduce false positives. Teams with large regression suites and frequent flaky tests get the fastest ROI.

- Your Product Ships AI-Driven Features: Your application includes chatbots, recommendation engines, AI-generated content, or voice agents. These need validation for hallucination, bias, drift, and output quality. Traditional test automation cannot evaluate non-deterministic AI outputs.

- Regulatory Compliance Requires Continuous Validation: Healthcare, fintech, and automotive organizations need auditable, repeatable test runs with standardized metrics. Structured reporting makes compliance documentation faster to produce.

- QA Is the Bottleneck, Not the Safeguard: Manual test creation cannot keep pace with sprint velocity. No-code test automation and AI-generated scenarios scale coverage without adding headcount.

You probably do not need them when:

- Stable Product with Infrequent Releases: Quarterly releases, small suite, basic automation covers the scope. Adding AI adds complexity without proportional return.

- Small Test Suite with Predictable UI: Fewer than 50 test cases, minimal UI changes. Setup overhead exceeds time saved. Start with deterministic automation and revisit when complexity grows.

How Should You Evaluate AI Testing Services?

Evaluate AI testing services on natural language authoring, framework export, real device infrastructure, test orchestration, AI agent validation, and pricing transparency.

Here is a structured evaluation framework for these platforms.

1. Natural Language Test Authoring

Allows non-technical team members to create tests in plain English. This determines how fast your test coverage grows and whether product managers, manual testers, and analysts can contribute. Evaluate whether the platform understands multi-step user journeys or only handles single-step prompts.

2. Framework-Agnostic Code Export

Generates test code in open-source frameworks like Playwright, Selenium, Cypress, or Appium. Without this, your test suite is locked inside the vendor's runtime. Evaluate whether exported code runs independently with zero platform dependency.

3. Real Device and Cross-Browser Infrastructure

Runs tests on actual physical devices and browsers in the cloud, not emulators. Emulators miss OS-specific and GPU-related bugs that only surface on real hardware. Evaluate device count, OS version coverage, geolocation support, and on-demand scalability.

4. AI-Native Test Orchestration

Distributes test execution intelligently across infrastructure with smart retries and fail-fast logic. This directly controls how fast your CI/CD pipeline gets feedback. Evaluate whether the platform learns from historical runs to optimize future execution.
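
As a rough illustration of "smart retries and fail-fast logic", the sketch below retries failing tests a bounded number of times and stops scheduling new tests once enough have failed for good. It runs sequentially and does not model distribution across infrastructure; the function name, status labels, and default limits are assumptions.

```python
from typing import Callable


def run_suite(
    tests: dict[str, Callable[[], bool]],
    max_retries: int = 2,
    fail_fast_after: int = 2,
) -> dict[str, str]:
    """Run tests in order, retrying failures and skipping the rest after repeated hard failures."""
    results: dict[str, str] = {}
    hard_failures = 0
    for name, test in tests.items():
        if hard_failures >= fail_fast_after:
            results[name] = "skipped"  # fail fast: stop spending time on a broken build
            continue
        attempts = 0
        passed = False
        while attempts <= max_retries and not passed:
            attempts += 1
            passed = test()
        if passed:
            # A pass that needed a retry is labeled flaky so it can be tracked.
            results[name] = "passed" if attempts == 1 else "flaky"
        else:
            results[name] = "failed"
            hard_failures += 1
    return results


def fails_once() -> Callable[[], bool]:
    """Build a stub test that fails on its first call and passes afterwards."""
    calls = {"n": 0}

    def test() -> bool:
        calls["n"] += 1
        return calls["n"] > 1

    return test


suite = {
    "login": lambda: True,
    "search": fails_once(),
    "cart": lambda: False,
    "checkout": lambda: False,
    "profile": lambda: True,
}
results = run_suite(suite)
print(results)
```

Here `search` is recorded as flaky, `cart` and `checkout` fail after exhausting their retries, and `profile` is skipped because the failure limit was reached. A platform that learns from historical runs could, for example, reorder the suite or adjust retry budgets per test.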

5. AI Agent and Model Validation

Tests chatbots, voice agents, LLMs, and recommendation engines for hallucination, bias, toxicity, and context retention. Without this, AI-powered features ship without proper quality validation. Evaluate multi-modal support and automated persona-based scenario generation.
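
Because AI outputs are non-deterministic, a single passing run says little. One hedged way to evaluate them is to sample the agent many times and accept it only if a conservative bound on the pass rate clears a threshold. The sketch below uses the Wilson score lower bound; the stub agent, the grader, and the 0.9 threshold are all hypothetical stand-ins.

```python
import itertools
import math
from typing import Callable


def wilson_lower_bound(successes: int, trials: int, z: float = 1.96) -> float:
    """Lower end of the Wilson score interval for a pass rate (z=1.96 is roughly 95%)."""
    if trials == 0:
        return 0.0
    p = successes / trials
    denom = 1 + z * z / trials
    centre = p + z * z / (2 * trials)
    margin = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials))
    return (centre - margin) / denom


def evaluate(
    agent: Callable[[str], str],
    grader: Callable[[str], bool],
    prompt: str,
    trials: int = 200,
    min_pass_rate: float = 0.9,
) -> dict[str, object]:
    """Sample the agent repeatedly and accept only if the lower bound clears the threshold."""
    successes = sum(1 for _ in range(trials) if grader(agent(prompt)))
    lower = wilson_lower_bound(successes, trials)
    return {
        "pass_rate": successes / trials,
        "lower_bound": lower,
        "accepted": lower >= min_pass_rate,
    }


def make_stub_agent(error_every: int = 20) -> Callable[[str], str]:
    """Hypothetical agent that gives a wrong answer on every error_every-th call."""
    counter = itertools.count(1)

    def agent(prompt: str) -> str:
        return "I am not sure." if next(counter) % error_every == 0 else "Paris"

    return agent


report = evaluate(
    make_stub_agent(),
    grader=lambda answer: "paris" in answer.lower(),
    prompt="What is the capital of France?",
)
print(report["pass_rate"], report["accepted"])  # prints 0.95 True
```

The bound penalizes small samples: ten passes out of ten would not clear the 0.9 threshold here, while 190 out of 200 does. The same pattern applies to graders that score toxicity, bias, or context retention instead of factual accuracy.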

6. Transparent and Scalable Pricing

Published per-agent or per-minute rates that align cost with actual usage. Test volume fluctuates with release cycles, so pricing should scale up and down without long-term lock-in or hidden fees.

Which Are the Top AI Testing Platforms/Services in 2026?

Here are the five top platforms covering the widest range of AI testing capabilities in 2026.

1. TestMu AI

TestMu AI positions itself as the world's first full-stack agentic AI quality engineering platform. It covers AI-native testing from planning and authoring through execution and analysis, across 3,000+ browser-OS combinations and 10,000+ real iOS and Android devices.

- Natural Language Test Authoring: KaneAI creates executable test scenarios from plain-English prompts across web, mobile, and API testing.

- AI-Native Test Orchestration: HyperExecute distributes tests with smart retries, fail-fast logic, and intelligent test prioritization.

- Visual UI Testing: SmartUI compares screenshots across browsers, devices, and resolutions to catch pixel-level regressions before they reach production.

2. Katalon

Unified automation platform for web, mobile, API, and desktop testing with AI self-healing, codeless recording, and Groovy/Java scripting in a single workspace.

- Multi-Platform Coverage: Automates across web, mobile, API, and desktop from one IDE built on Selenium and Appium.

- AI Self-Healing: Locators adapt automatically when UI elements change, with rule-based and LLM-backed healing.

- CI/CD Integration: Connects with Jenkins, GitHub Actions, GitLab CI, and Azure DevOps for continuous testing in pipelines.

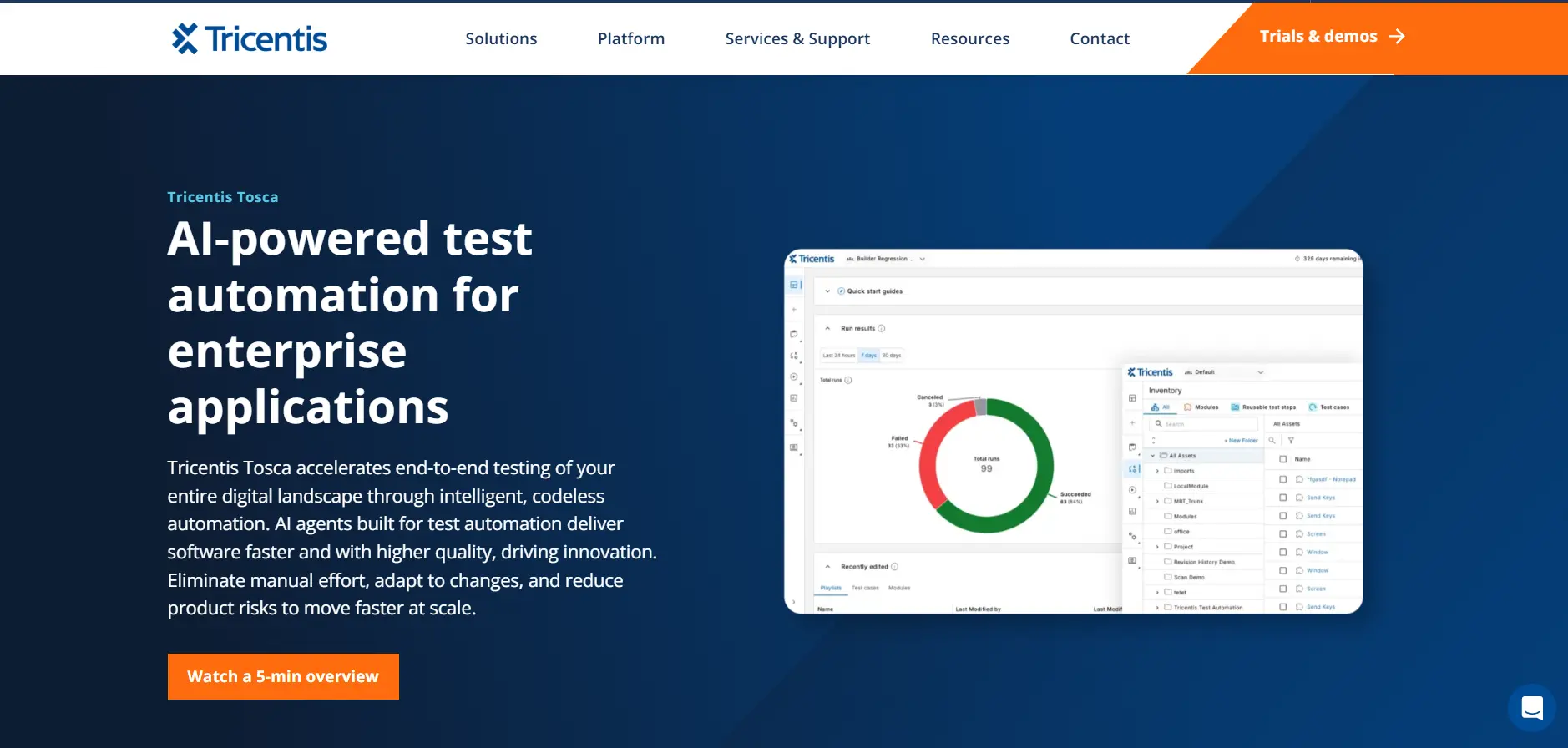

3. Tricentis Tosca

Enterprise-grade codeless test automation with a model-based approach and support for 160+ technologies including SAP, Salesforce, and web applications.

- Model-Based Automation: Reusable modules reduce test maintenance and scale across enterprise applications without scripting.

- Agentic AI Capabilities: AI agents generate and maintain test cases across SAP GUI, web, and Salesforce with minimal manual input.

- Risk-Based Test Optimization: Prioritizes test execution by business impact and code change analysis to focus on high-risk areas first.

4. Functionize

AI-powered testing platform for autonomous test creation, self-healing execution, and cloud-scale parallel runs across web applications.

- Autonomous Test Creation: ML-driven test generation from application monitoring, NLP prompts, and user flow recordings.

- Self-Healing Execution: Tests adapt to application changes using deep learning, reducing maintenance overhead significantly.

- Cloud-Scale Parallel Testing: Runs tests across multiple browsers, devices, and geographies simultaneously in the Functionize Test Cloud.

5. ACCELQ

Codeless AI test automation platform for web, API, mobile, desktop, and enterprise applications including Salesforce, ServiceNow, and SAP.

- Codeless Automation: Natural language test design with visual workflow modeling and unified lifecycle management.

- Full-Stack Coverage: Automates across web UI, API, database, mobile, desktop, and mainframe from one platform.

- Autonomics-Based Maintenance: Self-healing test scripts with adaptive change management reduce ongoing maintenance effort.

What Are the Common Challenges with AI Testing Services?

QA teams adopting these services run into a few recurring problems:

- Test Authoring Still Depends on Code: Most platforms require engineers who can write scripts. This limits how fast test coverage grows and excludes non-technical team members from contributing.

- Scripts Break on Every UI Change: UI updates break element locators. Fixing them eats into sprint capacity. Teams with large regression suites spend more time on maintenance than on writing new tests.

- No Support for AI System Validation: Most platforms test traditional software only. No way to evaluate chatbot responses, LLM outputs, or recommendation engine accuracy for hallucination, bias, or drift.

- Vendor Lock-In on Test Code: Tests built inside a proprietary runtime cannot be exported. Switching platforms means rewriting every test from scratch.

Platforms like TestMu AI address these challenges with a full-stack approach. TestMu AI includes KaneAI, which it describes as the world's first end-to-end testing agent. KaneAI plans, authors, and runs test cases from natural language prompts.

No coding required, no prior technical knowledge needed. Anyone on the team can describe what they want to test in plain English, and it generates structured, executable scenarios.

It comes with the following capabilities:

- Natural Language Test Creation: Generates and evolves complex test cases from plain-English instructions. Produces executable scenarios with smart assertions. The full list of supported instructions is in the KaneAI command guide.

- Framework Flexibility: Exports automation code in Playwright, Selenium, Cypress, and Appium. Your team owns the code. Runs outside the platform. No vendor lock-in.

- Multi-Format Test Input: Converts PRDs, Jira tickets, PDFs, images, audio, video, and spreadsheets into structured test cases. Integrates with Jira to turn ticket descriptions into executable cases. Generates scenarios from GitHub PRs.

- Reusable Test Modules: Converts common test steps (login flows, navigation patterns) into reusable blocks. Eliminates duplication across suites. Speeds up maintenance when shared workflows change.

Teams new to the platform can follow the getting started with KaneAI guide to run their first test in minutes.

What Is the Difference Between AI Testing Services and Traditional QA?

AI testing services differ from traditional QA in test creation speed, maintenance overhead, defect detection approach, scalability, and the skill level required to participate.

They do not replace traditional QA. They add an acceleration layer.

| Dimension | Traditional QA | AI Testing Services |
|---|---|---|
| Test Creation | Manual scripting; hours per test case | Natural language prompts; minutes per test case |
| Test Maintenance | Manual updates when UI changes break locators | Self-healing scripts adapt automatically using ML |
| Defect Detection | Reactive; finds bugs after execution | Predictive; flags high-risk areas before testing |
| Scalability | Limited by team size and local infrastructure | Parallel orchestration across cloud devices and browsers |
| Skill Requirement | Coding expertise required | Accessible to non-technical members via NLP |
| CI/CD Integration | Custom pipeline configuration | Native integration with smart triggers and fail-fast |
| AI System Validation | Not designed for AI/ML outputs | Built for bias, hallucination, and drift testing |

Manual exploratory testing, domain-specific judgment, and risk-based strategy still require human expertise. These services handle repetitive, data-heavy work. The role of AI in software testing continues to expand as platforms mature across industries.
