AI Chatbot Guide: How They Work & How to Use Them

Learn what AI chatbots are, how they work, the different types, how to build one, real-world use cases, best practices for integration, and why testing matters.

Salman Khan

March 4, 2026

AI chatbots have revolutionized the way we interact with technology by simulating conversations with human users through advanced Natural Language Processing (NLP).

The most advanced chatbots have evolved into agentic AI systems that do not just answer questions but autonomously execute multi-step tasks across tools, databases, and APIs using the Model Context Protocol (MCP).

Overview

What is an AI Chatbot?

An AI chatbot is a software application powered by Large Language Models and Natural Language Processing. It simulates human conversation through text or voice using transformer architectures and RAG.

What Are the Advantages of AI Chatbots?

The shift toward agentic chatbots has created a new standard for business performance. Here are some of the key advantages that AI chatbots deliver:

- 87% Conversation Resolution Rate: Modern AI chatbots successfully resolve 87% of customer inquiries entirely without human intervention.

- 92% Customer Satisfaction: Despite earlier bot fatigue, 92% of customers now report positive experiences with AI chatbots as their reasoning capabilities improve.

- 30-40% Reduction in Service Costs: AI chatbots slash customer service expenses by handling routine inquiries and qualifying leads autonomously.

- 11-Second First Response Time: AI agents deliver responses in an average of 11 seconds, a massive improvement over the 4+ hour industry average for email support.

What Are the Various Types of AI Chatbots?

AI chatbots come in several forms, each designed for different use cases and levels of autonomy. Here are the main types:

- Autonomous AI Agents: Create plans, execute them across tools, and verify outcomes using a Plan-Act-Verify reasoning loop without human input for every step.

- Generative & RAG Chatbots: Powered by LLMs and augmented by Retrieval-Augmented Generation, they combine general knowledge with real-time vector searches of private knowledge bases.

- Small Language Models (SLMs): Compact models with 1B to 7B parameters, fine-tuned on domain-specific datasets, 80% cheaper to operate than general-purpose LLMs.

- Hybrid (Orchestrated) Chatbots: Use an Orchestrator layer to select the right tool, combining rigid rule-based scripts for high-risk actions with generative AI for nuanced engagement.

What Are AI Chatbots?

An AI chatbot is software powered by LLMs and NLP that simulates human conversation. It uses transformer architectures and RAG to understand context, retain memory, and generate accurate responses.

Unlike earlier rule-based bots that followed rigid scripts, modern AI chatbots leverage deep learning and vast training data. They interpret user intent accurately, maintain multi-turn context, and deliver nuanced responses grounded in real-time data retrieval from private knowledge bases.

What Are the Advantages of Using Chatbots?

AI chatbots offer 30-40% cost reduction, 87% resolution without humans, and 11-second response times. They achieve 92% customer satisfaction, making them essential for modern business operations.

According to statistics from Hyperleap AI, the shift toward agentic chatbots has created a new standard for business performance. The improvements span financial returns, operational efficiency, customer satisfaction, and enterprise adoption across major industry sectors.

Financial Impact & ROI (The Business Case)

- 30-40% Reduction in Service Costs: AI chatbots cut customer service expenses by 30-40% by handling routine inquiries and qualifying leads autonomously.

- $2.5 Million Annual Savings: Large companies save an average of $2.5 million per year through increased efficiency and reduced manual labor.

- 15-35% Revenue Increase: Beyond cost savings, businesses report a significant boost in revenue driven by improved conversion rates and 24/7 upselling.

Performance & Accuracy Benchmarks (The Technical Edge)

- 87% Conversation Resolution Rate: Modern AI chatbots successfully resolve 87% of customer inquiries entirely without human intervention.

- 11-Second First Response Time: AI agents deliver responses in an average of 11 seconds, a massive improvement over the 4+ hour industry average for email support.

- 94% Response Accuracy Rate: Current LLM-powered bots achieve 94% accuracy in understanding and responding to user intent.

- 13% Escalation Rate: Only 13% of conversations now require a human handoff, representing a 60% reduction compared to traditional chat systems.

Customer Experience & Adoption (The User Perspective)

- 92% Customer Satisfaction (CSAT): Despite earlier "bot fatigue," 92% of customers now report positive experiences with AI chatbots as their reasoning capabilities improve.

- 23% Cart Abandonment Recovery: E-commerce businesses using AI chatbot follow-up sequences recover 23% more abandoned carts.

- 68% Preference Over Humans: In scenarios where speed is critical, 68% of customers prefer the convenience of an AI chatbot over waiting for a human agent.

2026 Market & Industry Trends

- 67% Fortune 500 Adoption: By 2026, two-thirds of Fortune 500 companies will have implemented advanced AI agents.

- 78% E-commerce Adoption: The retail sector leads the market, with 78% of businesses using bots for tracking, returns, and support.

- 15% Zero-Hallucination Achievement: Approximately 15% of top-tier platforms have achieved "zero-hallucination" rates using RAG (Retrieval-Augmented Generation) techniques.

What Are the Different Types of AI Chatbots?

The four main types are autonomous AI agents, generative and RAG chatbots, Small Language Models, and hybrid systems. Each is designed for different levels of autonomy, complexity, and business needs.

Autonomous AI Agents (The Action-Takers)

An autonomous AI agent doesn't just process a user's prompt; it creates a plan, executes that plan across different software tools, and verifies the outcome. This ability to perform multi-step workflows is the core differentiator.

They use a "Plan-Act-Verify" reasoning loop. They do not need human input for every step. They use the Model Context Protocol to securely connect to your email, CRM, and databases.
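The loop described above can be sketched in a few lines. This is a minimal illustration, not a production agent: the in-memory `CRM` dictionary, the `upgrade_plan` action, and the hard-coded planner are all hypothetical stand-ins for real tools and an LLM-generated plan.

```python
# Hypothetical in-memory "CRM"; a real agent would reach tools through a protocol such as MCP.
CRM = {"alice@example.com": {"plan": "free"}}

def plan(goal):
    """Break the goal into ordered steps (hard-coded here; an LLM would generate them)."""
    return [("upgrade_plan", goal["email"], goal["plan"])]

def act(step):
    """Execute one step against the tool it names."""
    action, email, new_plan = step
    if action == "upgrade_plan" and email in CRM:
        CRM[email]["plan"] = new_plan

def verify(step):
    """Check that the tool state now matches what the step intended."""
    _, email, new_plan = step
    return CRM.get(email, {}).get("plan") == new_plan

def run_agent(goal, max_retries=2):
    """Plan once, then act and verify each step, retrying failed steps."""
    for step in plan(goal):
        for _ in range(max_retries + 1):
            act(step)
            if verify(step):
                break
        else:
            return False  # step never verified; hand off to a human
    return True

print(run_agent({"email": "alice@example.com", "plan": "pro"}))  # True
```

The verify step is what separates this from a simple script: a step that cannot be confirmed is retried and then escalated rather than silently assumed to have worked.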

Best For: Fully automated customer onboarding, complex IT helpdesk resolution, and real-time inventory management.

Generative & RAG Chatbots

These chatbots are powered by Large Language Models like GPT-5 and Gemini 3, but they are critically augmented by Retrieval-Augmented Generation (RAG). While they focus on conversation rather than pure execution, they excel at nuanced, multi-turn reasoning and human-like empathy.

They combine general knowledge from pre-training with real-time vector searches of your private knowledge base. This grounds answers in your actual data rather than in the model's general training alone, which substantially reduces hallucinations but does not guarantee their elimination.
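As a rough sketch of the retrieval step, the example below ranks a few documents against a question and places the best match in the prompt. It assumes a bag-of-words similarity in place of learned embeddings and a Python list in place of a vector database; the `DOCUMENTS` content and the prompt wording are hypothetical.

```python
import math
from collections import Counter

# Hypothetical knowledge base; a real system would store embeddings in a vector database.
DOCUMENTS = [
    "Refunds are issued within 5 business days of receiving the returned item.",
    "Standard shipping takes 3 to 7 business days.",
    "Support is available by chat from 9am to 5pm on weekdays.",
]

def vectorize(text):
    """Bag-of-words counts, standing in for a learned embedding."""
    return Counter(text.lower().replace(".", "").replace("?", "").split())

def similarity(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm = math.sqrt(sum(c * c for c in a.values())) * math.sqrt(sum(c * c for c in b.values()))
    return dot / norm if norm else 0.0

def retrieve(question, top_k=1):
    """Return the top_k documents most similar to the question."""
    query = vectorize(question)
    ranked = sorted(DOCUMENTS, key=lambda doc: similarity(query, vectorize(doc)), reverse=True)
    return ranked[:top_k]

def build_prompt(question):
    """Place retrieved passages ahead of the question so the model can ground its answer."""
    context = "\n".join(retrieve(question))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"

print(build_prompt("How long does shipping take?"))
```

The resulting prompt would then be sent to the language model, which is instructed to answer from the retrieved passage rather than from memory.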

Best For: Deep technical support, creative content writing, analyzing thousand-page PDF libraries, and personalized virtual assistants.

Small Language Models (SLMs)

Small Language Models (SLMs) are compact, highly efficient models with 1B to 7B parameters. They are fine-tuned on massive, highly curated datasets specific to one topic for deep domain expertise.

SLMs such as Phi-4-mini or the Llama 3.2 1B and 3B models are 80% cheaper to operate than their general-purpose LLM counterparts and often run locally or on "Edge" devices, which helps keep data under the organization's own control. They trade broad, generic awareness for deep, precise, domain-specific expertise.

Best For: Drafting specialized legal contracts, reviewing medical charts for compliance, and analyzing proprietary financial data in private environments.

Hybrid (Orchestrated) Chatbots

The Hybrid approach is the default model for major financial institutions and government services where safety and rigid compliance are mandatory. These systems use an Orchestrator layer to select the right tool for the user's needs.

This model uses rigid rule-based scripts for high-risk actions (like processing a payment or deleting user data) and seamlessly switches to Generative AI for nuanced, low-risk customer engagement (like handling feedback or explaining a policy). This ensures the business has absolute control over critical operations while retaining the flexibility of modern AI.

Best For: Banking services (scripted balance checks; AI-powered financial advice) and healthcare (scripted appointment booking; AI-powered symptom triage).
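A minimal sketch of the orchestrator idea follows. The keyword list, the canned responses, and the function names are hypothetical; a real orchestrator would likely use an intent classifier instead of keyword matching and would call an actual model in the generative path.

```python
# Hypothetical keyword list marking high-risk intents that must follow a fixed script.
HIGH_RISK_KEYWORDS = {"payment", "pay", "delete", "transfer"}

def scripted_flow(message):
    """Deterministic path: no generated text, just a fixed confirmation step."""
    return "SCRIPTED: Please confirm this action with your one-time passcode."

def generative_flow(message):
    """Placeholder for an LLM call; returns a canned string in this sketch."""
    return f"GENERATIVE: Here is some help with: {message}"

def orchestrate(message):
    """Route high-risk requests to the script and everything else to the model."""
    words = set(message.lower().replace("?", "").replace(".", "").split())
    if words & HIGH_RISK_KEYWORDS:
        return scripted_flow(message)
    return generative_flow(message)

print(orchestrate("Please delete my account data"))
print(orchestrate("Can you explain the refund policy?"))
```

Keeping the routing decision in plain code means the high-risk path never depends on model output, which is the control the hybrid approach is meant to provide.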

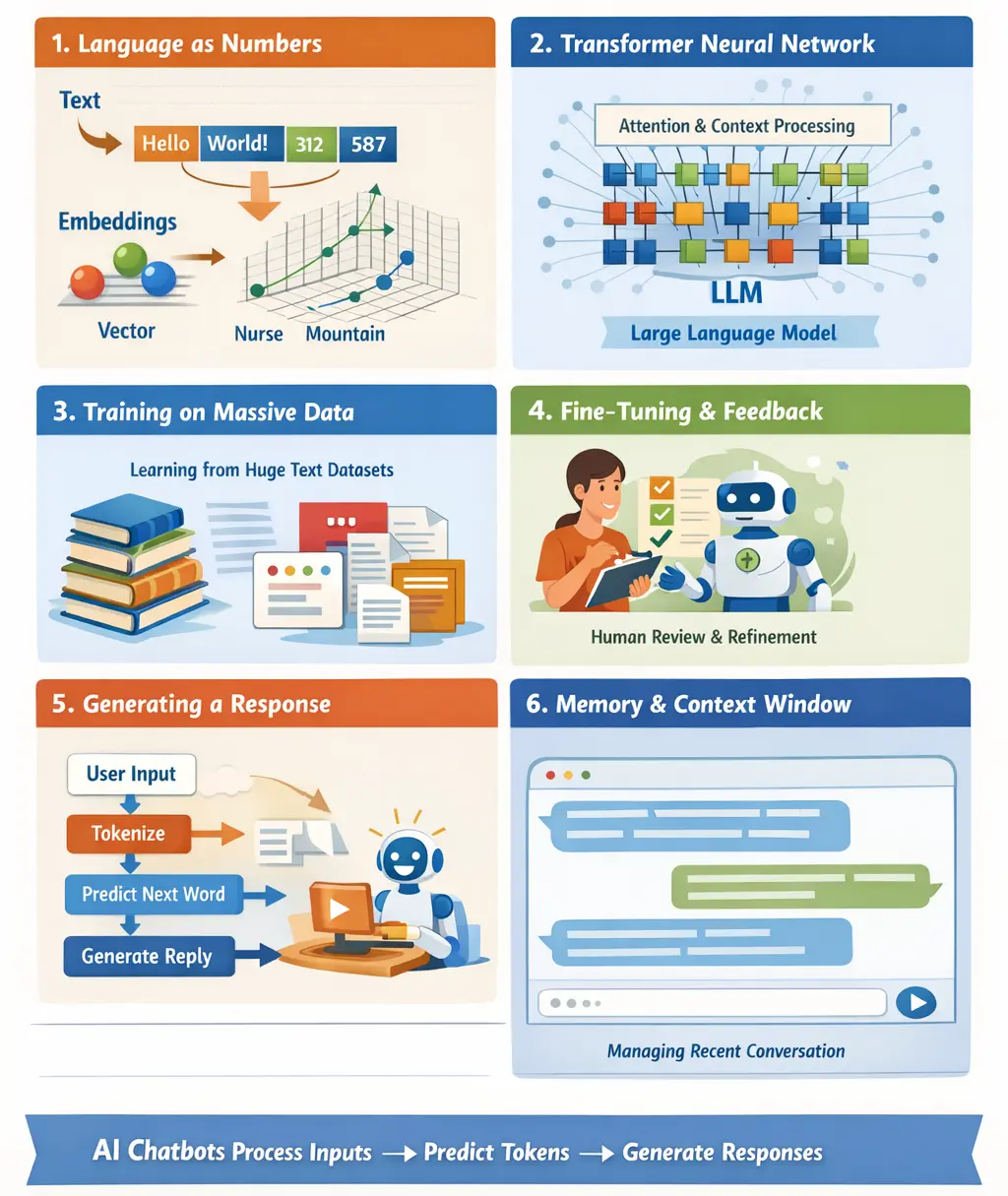

How Does an AI Chatbot Work?

An AI chatbot works by tokenizing input into numbers and processing them through transformer neural networks. It then predicts the most probable next tokens to generate natural language responses.

The process involves multiple stages working together. Here is a breakdown of the key stages:

Language as Numbers

Computers do not understand words the way humans do. Before anything else, text gets converted into numbers. This process is called tokenization. Words or parts of words are split into smaller units called tokens. Each token is mapped to a numerical representation.

Those numbers are then transformed into vectors using a method known as embeddings. Embeddings allow the system to represent meaning in a mathematical space. Words with similar meanings tend to have similar numerical patterns. For example, "doctor" and "nurse" would be positioned closer together than "doctor" and "mountain."
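The two steps can be illustrated with a toy example. The vocabulary and the three-dimensional vectors below are hand-picked assumptions chosen to mirror the doctor, nurse, and mountain example; real systems learn subword vocabularies and embeddings with hundreds or thousands of dimensions.

```python
import math

# Hypothetical toy vocabulary; real tokenizers learn subword units from data.
VOCAB = {"doctor": 0, "nurse": 1, "mountain": 2, "<unk>": 3}

# Hand-picked 3-dimensional vectors, chosen only so related words point the same way.
EMBEDDINGS = [
    [0.9, 0.8, 0.1],  # doctor
    [0.8, 0.9, 0.2],  # nurse
    [0.1, 0.2, 0.9],  # mountain
    [0.0, 0.0, 0.0],  # <unk>
]

def tokenize(text):
    """Split on whitespace and map each word to its token id."""
    return [VOCAB.get(word, VOCAB["<unk>"]) for word in text.lower().split()]

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

doctor, nurse, mountain = (EMBEDDINGS[i] for i in tokenize("doctor nurse mountain"))
print(cosine_similarity(doctor, nurse) > cosine_similarity(doctor, mountain))  # True
```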

Neural Networks and the Transformer Model

Most modern AI chatbots are built on a type of neural network architecture called a transformer. This approach was introduced in the research paper "Attention Is All You Need" by scientists at Google in 2017.

Transformers rely heavily on something called attention. Attention allows the model to weigh different parts of a sentence based on their relevance to one another. Instead of reading text strictly left to right, the model evaluates relationships between all words in a sentence at once. This makes it much better at understanding context.

Large language models, often called LLMs, are built by stacking many transformer layers together. The result is a powerful system capable of identifying patterns across massive amounts of text data.
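The attention computation itself is compact. The sketch below implements scaled dot-product attention in plain Python for a single head. It is simplified on purpose: the made-up token vectors are used directly as queries, keys, and values, whereas a real transformer first applies learned projection matrices and runs many heads in parallel.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    scale = math.sqrt(len(keys[0]))
    outputs = []
    for q in queries:
        # Score this token's query against every token's key.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        # Blend the value vectors according to the attention weights.
        outputs.append([
            sum(w * v[d] for w, v in zip(weights, values))
            for d in range(len(values[0]))
        ])
    return outputs

# Three tokens with made-up 2-dimensional vectors; used as Q, K, and V alike.
tokens = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
for row in attention(tokens, tokens, tokens):
    print([round(x, 3) for x in row])
```

Each output row is a weighted blend of all token vectors, so tokens that resemble one another end up contributing more to each other's representation.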

Training on Massive Datasets

Chatbots learn by analyzing huge collections of text. These datasets can include books, articles, websites, and other publicly available writing. During training, the model repeatedly tries to predict the next word in a sentence.

This training process involves adjusting billions of internal parameters. Each parameter helps the model refine how it predicts language. The more data and training cycles involved, the more accurate and nuanced the predictions become.
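To make the next-word objective concrete, here is a deliberately tiny stand-in: a bigram model that "trains" by counting which word follows which. This is an assumption-laden simplification, since real LLMs adjust parameters by gradient descent rather than counting, but the prediction target is the same idea.

```python
from collections import Counter, defaultdict

def train_bigram(corpus):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts

def next_word_probabilities(counts, word):
    """Convert follow-up counts for one word into probabilities."""
    followers = counts.get(word, Counter())
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()} if total else {}

corpus = [
    "the bot answers the question",
    "the bot answers quickly",
    "the user asks the bot",
]
model = train_bigram(corpus)
print(next_word_probabilities(model, "bot"))  # {'answers': 1.0}
```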

Fine-Tuning and Human Feedback

After initial training, many chatbots go through additional refinement. One common method is reinforcement learning from human feedback, often abbreviated as RLHF.

In this stage, human reviewers evaluate responses and rank them. The model learns from this feedback, improving qualities like helpfulness, clarity, and safety. This step helps align the chatbot's behavior with human expectations.
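One common way to turn rankings into a training signal is to expand each ranking into preferred/rejected pairs and score them with a pairwise logistic loss. The sketch below shows that step only; the example responses and reward scores are hypothetical, and a full RLHF pipeline would go on to train a reward model and optimize the chatbot against it.

```python
import math
from itertools import combinations

def ranking_to_pairs(ranked_responses):
    """Expand a best-to-worst ranking into (preferred, rejected) pairs."""
    return list(combinations(ranked_responses, 2))

def preference_loss(score_preferred, score_rejected):
    """Pairwise logistic loss: small when the preferred response scores higher."""
    return -math.log(1.0 / (1.0 + math.exp(-(score_preferred - score_rejected))))

ranking = ["clear and correct", "correct but vague", "confidently wrong"]
pairs = ranking_to_pairs(ranking)
print(pairs)

# Hypothetical reward-model scores for the same three responses.
scores = {"clear and correct": 2.0, "correct but vague": 0.5, "confidently wrong": -1.0}
total = sum(preference_loss(scores[a], scores[b]) for a, b in pairs)
print(round(total, 3))
```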

Organizations such as OpenAI have used this approach extensively. It has proven effective at improving conversational quality and significantly reducing harmful or misleading outputs.

Generating a Response in Real Time

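When you type a message to a chatbot, several things happen in quick succession. Each token is typically produced in milliseconds, although a full reply can take longer because tokens are generated one at a time: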

- Your input is tokenized and converted into numbers.

- The model processes the tokens using its trained neural network.

- It predicts the most probable next token.

- That token is added to the response.

- The process repeats until the reply is complete.

Importantly, the chatbot does not "think" or "know" facts in a human sense. It generates responses by calculating probabilities based on patterns learned during training.
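The generation steps listed above amount to a short loop. In this sketch a hypothetical lookup table stands in for the trained network, and the loop always picks the most probable token (greedy decoding). Real models condition on the whole conversation so far and usually sample from the probabilities instead of always taking the top choice.

```python
# Hypothetical lookup table standing in for a trained model:
# for each token, the probabilities of the token that follows it.
NEXT_TOKEN_PROBS = {
    "<start>": {"hello": 0.7, "hi": 0.3},
    "hello": {"there": 0.6, "<end>": 0.4},
    "hi": {"there": 0.9, "<end>": 0.1},
    "there": {"<end>": 1.0},
}

def generate(max_tokens=10):
    """Greedy decoding: always append the most probable next token."""
    response = []
    current = "<start>"
    for _ in range(max_tokens):
        candidates = NEXT_TOKEN_PROBS.get(current, {"<end>": 1.0})
        current = max(candidates, key=candidates.get)
        if current == "<end>":
            break
        response.append(current)
    return " ".join(response)

print(generate())  # hello there
```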

Memory and Context Windows

Chatbots operate within a context window. This is the amount of text they can consider at once. Everything within that window influences the response. If a conversation gets too long, earlier parts may fall outside the window and stop influencing replies.
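A simple way to picture this is a function that keeps only the most recent messages that fit a token budget. The word-based `count_tokens` helper is a crude assumption; production systems count tokens with the model's own tokenizer and often summarize older turns instead of dropping them.

```python
def count_tokens(text):
    """Crude stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(text.split())

def fit_to_context(messages, max_tokens):
    """Keep the most recent messages whose combined size fits the window."""
    kept = []
    used = 0
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break  # everything older than this falls outside the window
        kept.append(message)
        used += cost
    return list(reversed(kept))

history = [
    "My order number is 1234",         # 5 tokens
    "Thanks, looking that up now",     # 5 tokens
    "Can you also update my address",  # 6 tokens
]
print(fit_to_context(history, max_tokens=12))
```

With a budget of 12 tokens, the first message (and the order number it contains) no longer fits, which is exactly how a chatbot can "forget" details from early in a long conversation.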

Some systems also include external memory tools or retrieval mechanisms that allow them to reference documents or structured data. This extends their practical usefulness well beyond simple text prediction.

How to Build an AI Chatbot

Building an AI chatbot involves five key steps, from defining objectives to deployment. Together, the steps cover framework selection, conversation design, model training, and continuous performance monitoring.

Each step builds on the previous one to ensure a solid foundation. Below are the five key steps to build an effective AI chatbot from scratch:

- Define the Objective: Define a precise business objective, identify target users, and outline primary use cases. After that, set measurable success metrics and establish clear functional boundaries before development begins.

- Select the Framework: Choose a scalable framework aligned with integration requirements, such as Microsoft Bot Framework, Google Dialogflow, or APIs from OpenAI.

- Design Conversation Architecture: Map core user intents, define structured decision paths, and apply prompt engineering for precise prompts and fallback responses. Also, ensure context handling for consistent multi-turn interactions.

- Implement and Train: Prepare high-quality training data, configure NLP components, and train the model for accurate intent recognition. After that, refine performance through controlled iterative testing.

- Test and Deploy: Conduct functional, integration, and stress testing, and deploy within a secure, scalable infrastructure. Then, you can monitor performance metrics continuously and optimize based on real user feedback.

Real-World AI Chatbot Use Cases

AI chatbots are deployed across industries as a leading application of AI automation, streamlining interactions and operations at scale. They deliver measurable impact in customer support, ecommerce, banking, healthcare, and HR.

These use cases span multiple sectors and business functions. Here are some of the most impactful real-world applications of AI chatbots:

- Customer Support: Integrate with CRM systems to fetch order status and generate return labels automatically. Escalate complex cases with full conversation logs attached to reduce handling time.

- Ecommerce: Analyze browsing behavior to deliver personalized product recommendations in real time. Trigger targeted discounts during cart abandonment and guide users through optimized checkout flows.

- Banking and Finance: Authenticate users securely and retrieve live account balances instantly. Flag suspicious transactions proactively while maintaining detailed compliance-ready interaction records for audits.

- Healthcare: Sync with hospital systems to schedule appointments and send medication reminders automatically. Triage symptoms using structured protocols and document interactions securely for regulatory compliance.

- HR and Internal Ops: Calculate leave balances dynamically using HR system data. Generate policy-specific responses from internal documentation and automate IT or payroll ticket creation.

What Are the Best AI Chatbots in 2026?

The best AI chatbots in 2026 are ChatGPT, Google Gemini, Claude, Perplexity AI, and Grok. Each excels in distinct areas from versatile productivity to research and real-time social trend insights.

Each platform has unique strengths suited to different workflows. The table below compares them on best-fit use case, reasoning and integrations (each scored out of 10), multimodal support, and free-tier availability:

| Chatbot | Best For | Reasoning | Integrations | Multimodal | Free Tier |
|---|---|---|---|---|---|
| ChatGPT (OpenAI) | Versatile productivity, coding, writing, and research | 9/10 | 9/10 (plugins, API, agents) | Yes (text, image, voice) | Yes (GPT-4o mini) |
| Google Gemini | Google Workspace workflows, multimodal tasks | 8/10 | 9/10 (Gmail, Drive, Sheets, Android) | Yes (text, image, audio, video) | Yes |
| Claude (Anthropic) | Long-form writing, document analysis, and technical reasoning | 9/10 | 7/10 (API-first, MCP support) | Yes (text, image, PDF) | Yes |
| Perplexity AI | Research, fact-checking, cited answers | 7/10 | 6/10 | Partial (image input) | Yes |
| Grok (xAI) | Real-time social trends, X (formerly Twitter) data insights | 7/10 | 5/10 (X ecosystem-focused) | Yes (image) | Limited |

Notably, providers like OpenAI and Perplexity have extended their chatbot capabilities into dedicated AI browsers such as ChatGPT Atlas and Perplexity Comet that combine conversational AI with full web browsing functionality.

How to Troubleshoot Common AI Chatbot Issues

Troubleshoot AI chatbot issues by addressing user input errors and data-driven response errors. This involves training NLU for input variations, diversifying data, and using continuous feedback loops.

Most issues fall into two primary categories. Here is how to effectively diagnose and address each category systematically.

Handling User Input Issues

- Understand Variations: Train the NLU to recognize slang, abbreviations, and regional differences to interpret intent accurately.

- Validate Inputs: Pre-process inputs to catch typos, unsupported characters, or formats. Regular expressions can help automate this.

- Clarify Ambiguities: When inputs are unclear, prompt follow-up questions to refine understanding before responding.
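The three input-handling practices above can be combined into a small pre-processing step. This is a sketch under stated assumptions: the length limit, the allowed-character pattern, the abbreviation map, and the two-word threshold for asking a follow-up question are all hypothetical values to be tuned per product.

```python
import re

# Assumed limits for this sketch; tune them to the actual product.
MAX_LENGTH = 500
ALLOWED = re.compile(r"^[\w\s.,!?'@#$%&()\-:/]+$")
# Hypothetical abbreviation map illustrating slang normalization.
ABBREVIATIONS = {"pls": "please", "thx": "thanks", "acct": "account"}

def validate(message):
    """Return (ok, cleaned_or_reason) after basic checks and normalization."""
    text = re.sub(r"\s+", " ", message).strip()
    if not text:
        return False, "empty"
    if len(text) > MAX_LENGTH:
        return False, "too_long"
    if not ALLOWED.match(text):
        return False, "unsupported_characters"
    words = [ABBREVIATIONS.get(w.lower(), w) for w in text.split(" ")]
    return True, " ".join(words)

def respond(message):
    """Ask a follow-up question when the input is too short to act on."""
    ok, result = validate(message)
    if not ok:
        return f"Sorry, I could not read that ({result}). Could you rephrase?"
    if len(result.split()) < 2:
        return "Could you tell me a bit more about what you need?"
    return f"Understood: {result}"

print(respond("pls   reset my acct"))  # Understood: please reset my account
print(respond("help"))
```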

Fixing Data-Driven Response Errors

- Review and Diversify Data: Ensure training datasets are comprehensive and balanced to prevent bias and gaps.

- Audit Regularly: Benchmark responses periodically to detect performance drifts or inconsistencies.

- Leverage Feedback: Incorporate user feedback continuously to update data and fine-tune the chatbot's reasoning.
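Periodic audits are easier to sustain when they are automated. The sketch below scores a chatbot against a small golden set and flags drift when accuracy falls below a baseline. The prompts, expected phrases, `chatbot` stub, and 5-point tolerance are hypothetical; substring matching is also a simplification of real answer grading.

```python
# Hypothetical golden set: prompts paired with a phrase the answer must contain.
GOLDEN_SET = [
    ("What is your refund window?", "30 days"),
    ("Do you ship internationally?", "yes"),
    ("How do I reset my password?", "reset link"),
]

def chatbot(prompt):
    """Stand-in for the real chatbot call; replace with an actual client."""
    canned = {
        "What is your refund window?": "Refunds are accepted within 30 days.",
        "Do you ship internationally?": "Yes, we ship to most countries.",
        "How do I reset my password?": "Please contact support.",
    }
    return canned.get(prompt, "")

def audit(bot, golden_set, baseline_accuracy, tolerance=0.05):
    """Score the bot on the golden set and flag drift below the baseline."""
    passed = sum(expected.lower() in bot(prompt).lower() for prompt, expected in golden_set)
    accuracy = passed / len(golden_set)
    drifted = accuracy < baseline_accuracy - tolerance
    return accuracy, drifted

accuracy, drifted = audit(chatbot, GOLDEN_SET, baseline_accuracy=0.9)
print(f"accuracy={accuracy:.2f} drifted={drifted}")  # accuracy=0.67 drifted=True
```

Running a check like this on a schedule, and after every model or prompt change, turns "audit regularly" into a measurable gate.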

Why Is It Important to Test AI Chatbots?

Testing AI chatbots is critical for catching hallucinations, validating workflows, and ensuring security. Even top-tier models have significant error rates that impact compliance and reliability.

According to the latest data from Hyperleap AI, 92% of customers are satisfied with AI chatbots. However, 13% of interactions still require human escalation due to logic failures, making rigorous AI agent testing essential to bridge the gap between potential and performance.

- Eliminating the Hallucination Gap: Even the most advanced models still hallucinate. A hallucination report reveals that even top-tier models can have a >15% error rate when analyzing complex statements. Testing ensures your bot doesn't provide "silky-smooth fiction" as fact, which is critical for legal and medical compliance.

- Validating Agentic Workflows: Agentic chatbots use a "Plan-Act-Verify" loop. Testing is required to ensure the "Act" phase doesn't go off the rails, for example when an agent accidentally deletes a database record instead of updating it. You must test the trajectory (how it got to the answer), not just the outcome (the final answer).

- Stress-Testing Multi-Agent Systems (MAS): Modern architectures often involve multiple AI agents coordinating to solve a task, creating a "black box" of communication where subtle errors cascade when one agent misinterprets another's output. This is where Agent to Agent Testing by TestMu AI helps, using intelligent testing agents to evaluate other AI agents across diverse scenarios and spot hallucinations, bias, and performance issues before they reach production.

- Ensuring Security & Data Privacy: With agents having "ambient" access to internal tools via MCP, testing must verify that an agent doesn't overstep its permissions. Testing confirms that a support bot can't accidentally "reason" its way into accessing payroll data.
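Two of the points above, trajectory testing and permission checks, can be illustrated with a small harness. Everything here is a hypothetical sketch: the `RecordingTools` wrapper, the tool names such as `crm.update`, and the toy agent are invented for illustration and do not represent any specific testing product or the MCP API.

```python
# Hypothetical permission table: which tools each agent role may call.
PERMISSIONS = {"support_bot": {"crm.read", "crm.update"}}

class RecordingTools:
    """Wraps tool calls so a test can inspect the agent's trajectory."""

    def __init__(self, role):
        self.role = role
        self.trajectory = []

    def call(self, tool, **kwargs):
        if tool not in PERMISSIONS.get(self.role, set()):
            raise PermissionError(f"{self.role} may not call {tool}")
        self.trajectory.append((tool, kwargs))
        return {"ok": True}

def update_email_agent(tools, customer_id, new_email):
    """Toy agent under test: read the record, then update it."""
    tools.call("crm.read", customer_id=customer_id)
    tools.call("crm.update", customer_id=customer_id, email=new_email)
    return "Email updated."

def test_trajectory():
    tools = RecordingTools("support_bot")
    answer = update_email_agent(tools, 42, "new@example.com")
    called = [tool for tool, _ in tools.trajectory]
    assert answer == "Email updated."            # outcome
    assert called == ["crm.read", "crm.update"]  # trajectory
    assert "crm.delete" not in called            # no destructive step

def test_permissions():
    tools = RecordingTools("support_bot")
    try:
        tools.call("payroll.read", employee_id=7)
    except PermissionError:
        return
    raise AssertionError("payroll access should have been denied")

test_trajectory()
test_permissions()
print("all checks passed")
```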

To get started, check out this TestMu AI Agent to Agent Testing guide.

Best Practices for Effective Chatbot Integration

Effective chatbot integration requires personalized experiences, robust security, and continuous monitoring. These practices ensure reliable performance and regulatory compliance across all interactions.

- Enhancing User Experience: Implement sentiment analysis to detect users' emotions and adjust responses dynamically. This creates personalized interactions, improves satisfaction, and builds stronger engagement over time.

- Personalized Responses: Use emotional insights to tailor replies, such as offering apologies or solutions to frustrated users. Consistent personalization encourages positive experiences and repeat interactions.

- Feedback Loops: Capture user reactions to refine AI understanding continuously. Incorporate these insights into training data, improving the chatbot's ability to handle complex or nuanced scenarios effectively.

- End-to-End Encryption: Secure all communications between the chatbot and users to prevent unauthorized access. Encryption ensures privacy, maintaining trust while sensitive data is exchanged in real time.

- Data Anonymization: Remove personally identifiable information from stored or processed data. Anonymization minimizes risk exposure, ensuring compliance with privacy laws while protecting sensitive user information.

- Access Controls and Audits: Implement role-based access to sensitive data and conduct regular security audits. These measures prevent misuse, detect vulnerabilities, and strengthen overall system security continuously.

- Data Retention and Transparency: Define clear retention periods and deletion policies for user data. Communicate privacy practices openly to build trust and ensure users understand data usage.

- AI Model Monitoring: Implement AI observability to continuously evaluate model performance and data handling practices. Monitoring ensures accurate responses, prevents misuse of private data, and supports ongoing optimization of chatbot functionality.
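As one concrete illustration of the data anonymization practice above, the sketch below masks email addresses and phone-like numbers before text is logged or stored. The two regular expressions are simplified assumptions; production systems need broader coverage (names, addresses, account numbers) and usually a dedicated PII detection tool.

```python
import re

# Simplified patterns for this sketch; production systems need broader PII coverage.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

def anonymize(text):
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)

log_line = "Contact jane.doe@example.com or call +1 (555) 010-9999 about order 1234."
print(anonymize(log_line))
```

Short numbers such as the order ID are left untouched because the phone pattern requires at least nine digits and separators in a row.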

Conclusion

Successfully scaling and maintaining an AI chatbot requires a strategic combination of infrastructure management, proactive monitoring, and continuous improvement. Implement scalable servers, load balancing, and optimized databases to handle increasing user interactions efficiently.

Complement these measures with caching mechanisms, scheduled software updates, version control, and regular backups to ensure reliability and security. Continuously analyze user feedback, conduct performance audits, and refine responses to keep the chatbot relevant and effective.
