AI in Test Automation: A Detailed Guide

Explore how to use AI in test automation, from its importance to best practices. Boost efficiency and accuracy in your testing processes.

Salman Khan

February 28, 2026

Organizations today strive to deliver software at high speed, and traditional test automation methods can become less effective under that pressure. By incorporating AI into testing workflows, organizations can reshape their entire approach to test automation.

AI in test automation applies artificial intelligence techniques to enhance, optimize, and automate parts of the test automation process. It extends the capabilities of testing tools and frameworks so that they become more efficient and can handle complex test scenarios.

In this blog, we look at how to use AI in test automation, along with its key use cases and tools.

Overview

What Is AI in Test Automation?

AI in test automation uses machine learning, NLP, and computer vision to enhance traditional test automation. It makes testing more efficient, adaptive, and capable of handling complex scenarios.

Why Use AI in Test Automation?

AI prioritizes critical test cases, converts requirements into scripts, detects UI discrepancies, and enables self-healing tests. It integrates with CI/CD pipelines for intelligent execution and actionable insights.

- Prioritized Test Execution: Focuses on high-risk and critical test cases.

- Requirement-to-Script Conversion: Automatically transforms requirements into executable tests.

- UI Discrepancy Detection: Identifies visual and functional inconsistencies.

- Self-Healing Capabilities: Adjusts to UI or locator changes without manual updates.

- CI/CD Integration: Enables intelligent execution with actionable insights.

What Are the Key Components of AI in Test Automation?

AI in test automation is built on several core components that work together to enhance testing efficiency and accuracy.

- Machine Learning: Analyzes historical data and recognizes patterns to predict potential failure points. It accelerates defect detection while optimizing resource usage.

- Natural Language Processing: Enables writing test steps in plain language without coding knowledge. It improves collaboration between technical and non-technical stakeholders.

- Data Analytics: Identifies anomalies, trends, and root causes from large volumes of test data. It helps teams detect recurring issues and performance bottlenecks early.

- Robotic Process Automation: Automates repetitive tasks like data population, environment setup, and reporting. It reduces human error and frees testers to focus on complex scenarios.

What Are Some Leading AI Test Automation Tools?

Several AI-powered tools have emerged to streamline and enhance test automation workflows.

- TestMu AI KaneAI: AI test automation agent enabling test creation and debugging using natural language. Supports multi-language code export and intelligent test planning.

- Functionize: AI-powered platform with NLP-based test creation and self-healing tests. Supports visual testing and ML-driven test maintenance.

- Tricentis Tosca: Enterprise AI-powered platform with vision AI and model-based testing. Works best for SAP, Oracle, and Salesforce environments.

- TestCraft: Browser extension offering accessibility suggestions and auto test generation. Generates scenarios and ideas to enhance overall test coverage.

What Is AI in Test Automation?

AI in test automation leverages artificial intelligence techniques, such as machine learning, deep learning, natural language processing, and computer vision, to enhance traditional test automation approaches. It makes them more efficient, effective, and adaptive across the test automation process.

Creating test scripts from natural language is the simplest example of AI test automation. You write prompts in plain language, such as English, and the AI generates test scripts from them. Beyond generating scripts, AI also helps run tests, predict likely bugs, and retrieve data to improve the testing life cycle.

To learn more about how AI is transforming testing, consider attending AI conferences, which offer valuable insights from industry leaders.

Why Use AI in Test Automation?

AI in test automation enhances the testing life cycle by combining artificial intelligence technologies to address the complex challenges testers face in their daily workflows. It improves testing efficiency by analyzing historical test data and code changes to prioritize critical test cases and optimize regression testing.

Beyond just machine learning, AI incorporates natural language processing to convert requirements into test cases or test scripts, visual AI (computer vision) to detect UI discrepancies, and self-healing capabilities to adapt test scripts to software updates. These features minimize manual effort, reduce downtime, and ensure stability in test automation.

AI can also be integrated with CI/CD pipelines, which offer intelligent test execution and deliver actionable insights through advanced analytics. By detecting anomalies, predicting defects, and addressing flaky tests, the AI test automation approach ensures reliable and high-quality software releases.
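The prioritization idea described above, combining historical test data with code changes, can be sketched in a few lines. This is a minimal illustration and not how any particular tool works: the `CaseHistory` record, the `risk_score` formula, and the 50/50 weighting are assumptions made for the example, and a real system would learn such weights from data.

```python
from dataclasses import dataclass


@dataclass
class CaseHistory:
    """Hypothetical record of one test's history (illustrative only)."""
    name: str
    runs: int             # total historical executions
    failures: int         # executions that failed
    covered_files: set    # source files the test exercises (assumed known)


def risk_score(case: CaseHistory, changed_files: set, change_weight: float = 0.5) -> float:
    """Blend historical failure rate with overlap against the current change set."""
    failure_rate = case.failures / case.runs if case.runs else 0.0
    if case.covered_files:
        overlap = len(case.covered_files & changed_files) / len(case.covered_files)
    else:
        overlap = 0.0
    return (1 - change_weight) * failure_rate + change_weight * overlap


def prioritize(cases, changed_files):
    """Return test cases ordered from highest to lowest estimated risk."""
    return sorted(cases, key=lambda c: risk_score(c, changed_files), reverse=True)


history = [
    CaseHistory("test_login", runs=100, failures=2, covered_files={"auth.py"}),
    CaseHistory("test_checkout", runs=100, failures=20,
                covered_files={"cart.py", "payment.py"}),
    CaseHistory("test_search", runs=100, failures=5, covered_files={"search.py"}),
]

# Suppose the latest commit touched only payment.py.
ordered = prioritize(history, changed_files={"payment.py"})
print([c.name for c in ordered])
```

Here `test_checkout` runs first because it both fails most often and covers the changed file. The remaining tests are ordered by failure rate alone.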

Components of AI in Test Automation

Here are the different components of AI in automation testing:

- Machine Learning: It is the backbone of AI test automation that enables models to recognize patterns, analyze historical data, and make appropriate predictions. ML-powered tools can analyze all past test cases and results to prioritize defect-prone areas and predict potential points of failure in test scripts.

- Natural Language Processing: With NLP, testers can write test steps or scenarios in plain natural language, eliminating the need to code them by hand.

- Data Analytics: It helps teams sift through enormous chunks of test data to recognize anomalies and identify trends and patterns. AI-powered tools help testers identify any underlying root cause or detect recurring issues that are bound to go unnoticed. They can also monitor performance trends, which helps them identify any bottlenecks beforehand.

- Robotic Process Automation: It handles repetitive, rule-based tasks by working alongside AI to reduce human error and overall manual effort. In the testing life cycle, robotic process automation can help automate tasks like data population and environment configuration. It also facilitates the generation of detailed test reports and their distribution after test execution.

For instance, certain components of a software application tend to fail after code updates. ML can identify these components and suggest where fixes are needed, which accelerates defect detection while reducing resource usage.

NLP also improves collaboration between non-technical and technical stakeholders by translating complex business requirements into simple and actionable test cases or test scripts.
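The data analytics component described above, which spots anomalies and performance bottlenecks in test data, can be illustrated with a simple statistical check. This sketch assumes that test durations are available as plain lists of seconds. The function name `find_slow_runs` and the three-standard-deviation threshold are illustrative choices, not part of any specific tool.

```python
from statistics import mean, stdev


def find_slow_runs(baseline, recent, threshold=3.0):
    """Return (index, duration) pairs from `recent` that lie more than
    `threshold` standard deviations above the baseline mean."""
    mu = mean(baseline)
    sigma = stdev(baseline)  # sample standard deviation; needs >= 2 points
    if sigma == 0:
        # Baseline is perfectly flat: anything slower counts as anomalous.
        return [(i, d) for i, d in enumerate(recent) if d > mu]
    return [(i, d) for i, d in enumerate(recent) if (d - mu) / sigma > threshold]


# Durations (in seconds) of one test across earlier builds, then recent builds.
baseline_seconds = [1.9, 2.1, 2.0, 2.2, 1.8, 2.0, 2.1, 1.9]
recent_seconds = [2.0, 2.3, 4.8, 2.1]

anomalies = find_slow_runs(baseline_seconds, recent_seconds)
print(anomalies)
```

Only the third recent run (4.8 seconds) is flagged. The 2.3 second run is slower than usual but stays within normal variation for this baseline.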

How Machine Learning Generates Automated Tests?

Machine learning generates tests in the following stages:

- Training: During the training phase, a machine learning model is trained using an organization’s dataset, which may include the codebase, application interface, logs, test cases, and specification documents. A small dataset can limit the model’s effectiveness.

- Output Generation: Based on the use case, the model can create test cases and evaluate existing ones for coverage, completeness, and accuracy. However, testers must review the outputs to validate and ensure their usability.

- Continuous Improvement: As the model is used more frequently, the amount of training data grows, leading to better accuracy and performance over time. The AI model essentially learns and gets smarter with ongoing use.

Because a small dataset limits a model’s effectiveness, some tools come with pre-trained models designed for specific tasks, such as UI testing. These models improve over time through continuous learning, making them adaptable to the organization’s needs.
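The train, predict, and keep-learning loop described above can be shown with a deliberately tiny model. It does not generate tests. It only estimates how likely a change to a component is to break something, so that the three stages are visible. The class `FailureRateModel`, its methods, and the component names are hypothetical and chosen for illustration.

```python
from collections import defaultdict


class FailureRateModel:
    """Toy model: estimates the chance that a change to a component causes a failure."""

    def __init__(self):
        self.failures = defaultdict(int)
        self.total = defaultdict(int)

    def update(self, component: str, failed: bool) -> None:
        """Learn from one observed test outcome."""
        self.total[component] += 1
        if failed:
            self.failures[component] += 1

    def predict(self, component: str) -> float:
        """Laplace-smoothed failure rate; 0.5 when nothing is known yet."""
        return (self.failures[component] + 1) / (self.total[component] + 2)


model = FailureRateModel()

# Training: feed in historical outcomes (component, did the run fail?).
for component, failed in [("cart", True), ("cart", True),
                          ("cart", False), ("search", False)]:
    model.update(component, failed)

# Output generation: the model's current estimate for the cart component.
before = model.predict("cart")

# Continuous improvement: five more passing runs arrive and the estimate shifts.
for _ in range(5):
    model.update("cart", False)
after = model.predict("cart")

print(before, after, model.predict("payments"))
```

With only three observations, the estimate for `cart` stays close to the uninformed prior of 0.5, which mirrors the point that a small dataset limits the model. As more results arrive, the estimate moves toward the observed failure rate.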

Use Cases of AI in Test Automation

It’s hard to ignore the profound impact that AI has had on automation testing. However, uses of AI in software testing for automation extend beyond just user interface testing.

Key applications range from test case generation and self-healing mechanisms to defect prediction and anomaly detection. These testing-specific applications are part of a broader set of AI agent use cases transforming industries from healthcare to finance.

Let’s take a look at some of the most popular use cases:

- Test Case Generation: AI analyzes user stories, requirements, code, and design documents to generate comprehensive test cases, ensuring thorough coverage and identifying potential edge cases that manual testing might overlook.

- Test Script Generation: AI dynamically creates test scripts, keeping automation aligned with evolving software and reducing manual maintenance efforts.

- Test Data Generation: AI generates realistic and diverse test data, covering various scenarios to ensure software applications behave correctly across different inputs, enhancing the robustness of test automation processes.

- Test Optimization: AI evaluates historical test data and code changes to prioritize and optimize test cases and test scripts, focusing on high-risk areas to improve test automation efficiency.

- Visual Testing: AI-powered visual testing tools detect UI inconsistencies across different environments, ensuring a consistent user experience by comparing visual elements against expected outcomes.

- Self-Healing Mechanism: AI-driven self-healing mechanisms automatically adjust test scripts in response to changes in the UI or underlying code, reducing maintenance efforts and minimizing test failures due to code updates.

- Defect Prediction: AI analyzes code changes and historical defect data to predict potential areas of failure. It enables proactive testing and early issue resolution to maintain software quality.

- Anomaly Detection: AI identifies unexpected patterns or behaviors during test automation, detecting anomalies that may indicate defects or performance issues. This enhances the reliability of software applications.

- Test Reporting and Analysis: AI generates detailed test reports and analytics, providing actionable insights into test results, code quality, and potential areas of improvement, facilitating informed decision-making.
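Of the use cases above, the self-healing mechanism is the easiest to misread, so here is a minimal sketch of one way it could work. It assumes page elements are represented as plain dictionaries of attributes and that each test stores a "fingerprint" of the element it expects. The names `similarity` and `find_element` and the 0.5 cutoff are assumptions for this example. Real tools use richer signals and their own matching logic.

```python
def similarity(expected: dict, candidate: dict) -> float:
    """Fraction of the expected attributes that the candidate matches exactly."""
    if not expected:
        return 0.0
    matches = sum(1 for key, value in expected.items() if candidate.get(key) == value)
    return matches / len(expected)


def find_element(page: list, fingerprint: dict, min_score: float = 0.5):
    """Locate an element by id first; if that fails, fall back to the
    closest-matching element. Returns (element, healed_flag)."""
    target_id = fingerprint.get("id")
    for element in page:
        if target_id is not None and element.get("id") == target_id:
            return element, False  # primary locator still works
    best = max(page, key=lambda el: similarity(fingerprint, el), default=None)
    if best is not None and similarity(fingerprint, best) >= min_score:
        return best, True  # locator was "healed"
    return None, False


# What the test recorded when it was written.
fingerprint = {"id": "submit-btn", "tag": "button", "text": "Sign in", "class": "primary"}

# The page after a release that renamed the button's id.
page = [
    {"id": "nav-home", "tag": "a", "text": "Home", "class": "link"},
    {"id": "login-submit", "tag": "button", "text": "Sign in", "class": "primary"},
]

element, healed = find_element(page, fingerprint)
print(element["id"], healed)
```

The renamed button still matches three of the four recorded attributes, so the lookup succeeds and reports that it healed. A test framework could log such events so that testers can confirm the new locator.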

Best AI Test Automation Tools

QA teams might want to use an AI automation testing tool that works for a wide array of domains. But most importantly, you have to choose according to what fits your project’s unique requirements.

Here is a list of the best AI testing tools for test automation:

TestMu AI KaneAI

KaneAI by TestMu AI is an AI test automation agent and a smart test assistant for high-speed quality engineering teams, enabling the creation, debugging, and evolution of tests using natural language. It significantly reduces the expertise and time required to start test automation.

Features:

- Test Creation: Creates and evolves tests using natural language instructions, making test automation accessible to all skill levels.

- Intelligent Test Planner: Generates and automates test steps automatically based on high-level objectives, simplifying the test creation process.

- Multi-Language Code Export: Converts your tests into all major programming languages and frameworks for flexible automation.

- Sophisticated Testing: Express complex conditions and assertions in natural language.

- API Testing Support: Seamlessly test backends while enhancing coverage by integrating with your existing UI tests.

- Leverage Datasets and Parameters: Supports datasets and parameters for easy configuration, reusable values, and flexible parameterized testing.

- JIRA Integration: Seamlessly integrate and achieve continuous testing by tagging KaneAI on JIRA and triggering test automation directly.

- Smart Versioning Support: Tracks changes with version control, ensuring organized test management.

With the rise of AI in testing, it’s crucial to stay competitive by upskilling. The KaneAI Certification proves your hands-on AI testing skills and positions you as a future-ready, high-value QA professional.

Functionize

Functionize is an AI-powered test automation platform that uses machine learning and NLP to simplify test creation, execution, and maintenance for web and mobile applications.

Features:

- Uses NLP to let testers write test cases in plain English that are automatically converted into executable scripts.

- Employs ML-based self-healing to adapt tests when the UI changes, reducing manual maintenance.

- Performs visual regression testing with pixel-level screenshot comparisons across releases.

Tricentis Tosca

Tricentis Tosca is an AI-powered test automation platform that works best for enterprise testing needs, including SAP, Oracle, and Salesforce.

Features:

- Automatically creates test cases from recorded actions.

- Includes Vision AI, which uses computer vision to identify UI elements and include them in test cases.

- Divides the view of the software application into smaller units known as models and connects the actions testers perform to these units.

TestCraft

TestCraft is a browser extension that is a versatile option for various QA teams. The best thing about this tool is that it serves in various ways depending on unique requirements.

Features:

- Suggests fixes for accessibility problems in existing test cases and generates new test cases.

- Generates different scenarios and ideas for the testing phase which helps in enhancing test coverage.

- Offers automatic generation of test cases.

How Does KaneAI Help With AI Test Automation?

KaneAI leverages modern Large Language Models (LLMs), offering the flexibility to create, debug, and evolve end-to-end tests using natural language. Its multi-language code export converts automated tests into different frameworks and languages.

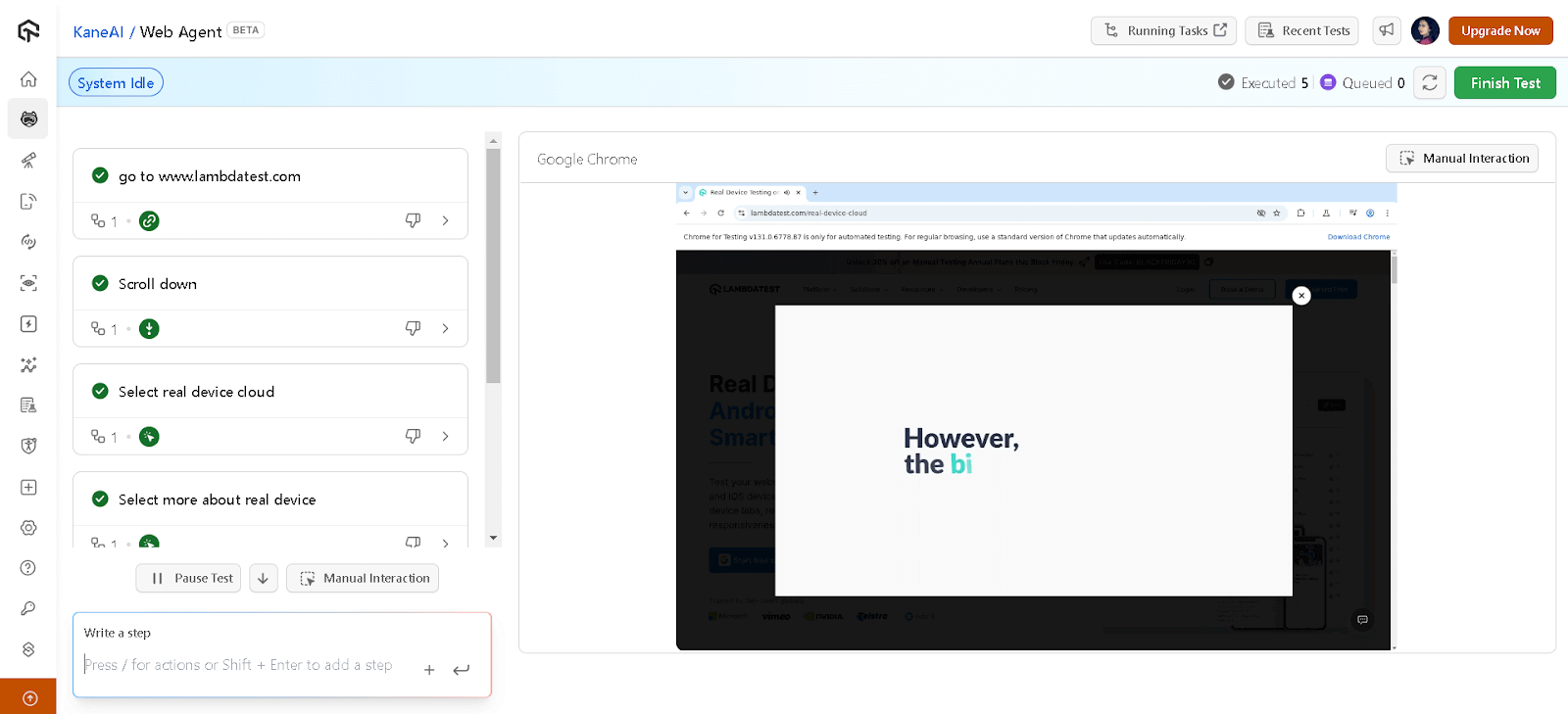

Shown below are steps to perform AI test automation using KaneAI:

Note: Make sure you have access to KaneAI. To get access, please contact sales.

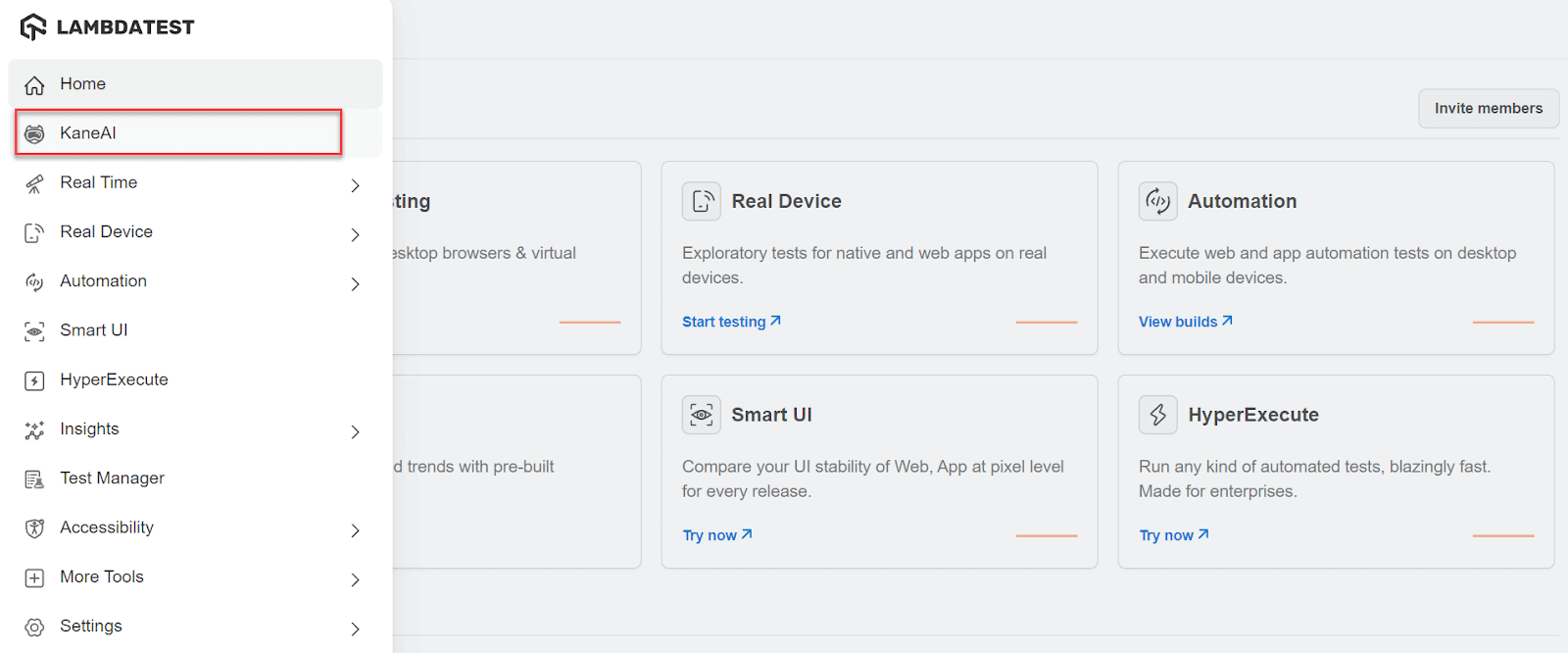

- From the TestMu AI dashboard, click the KaneAI option.

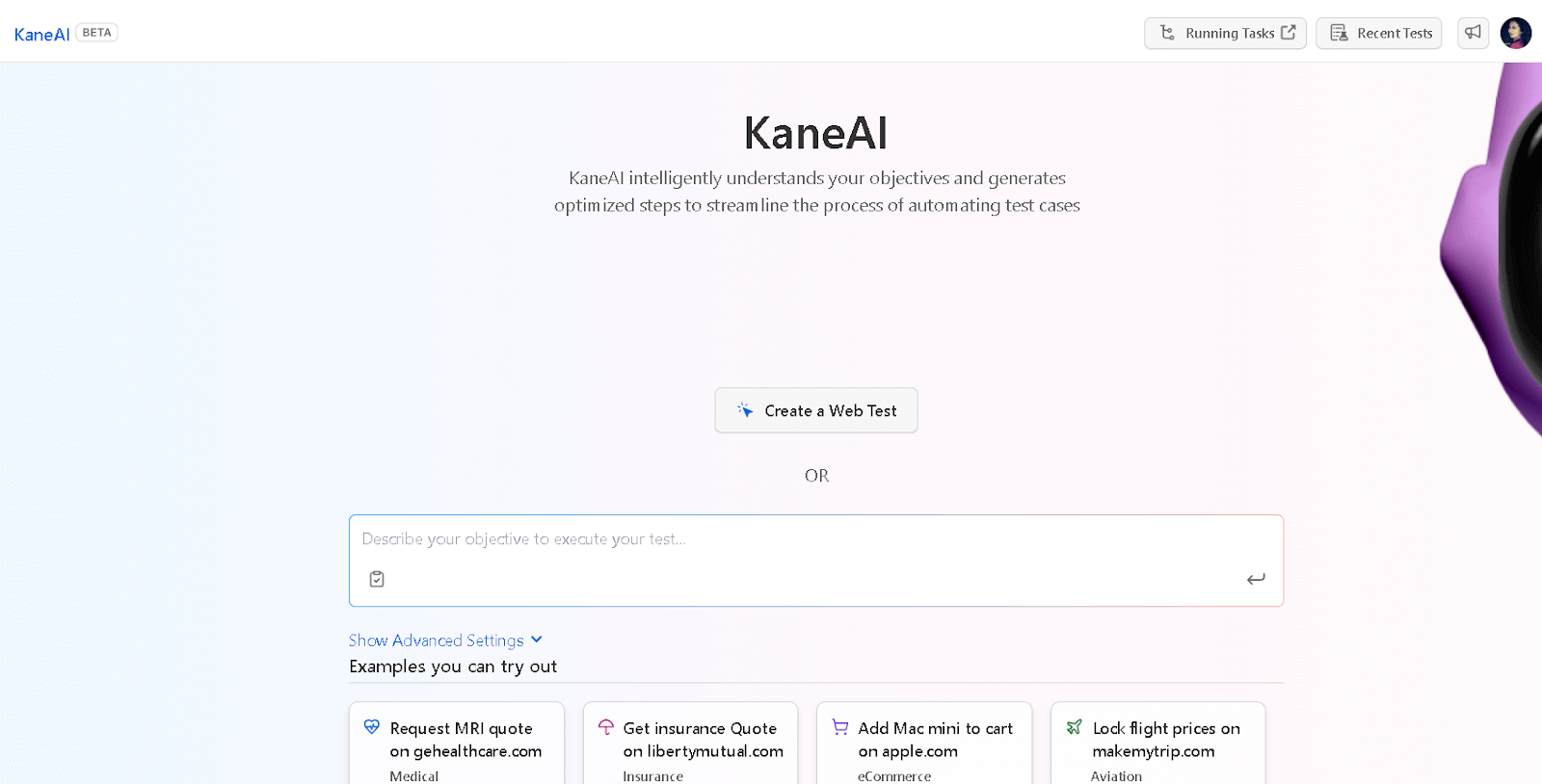

- Select the Create a Web Test button, which opens the browser and a side panel for writing test cases.

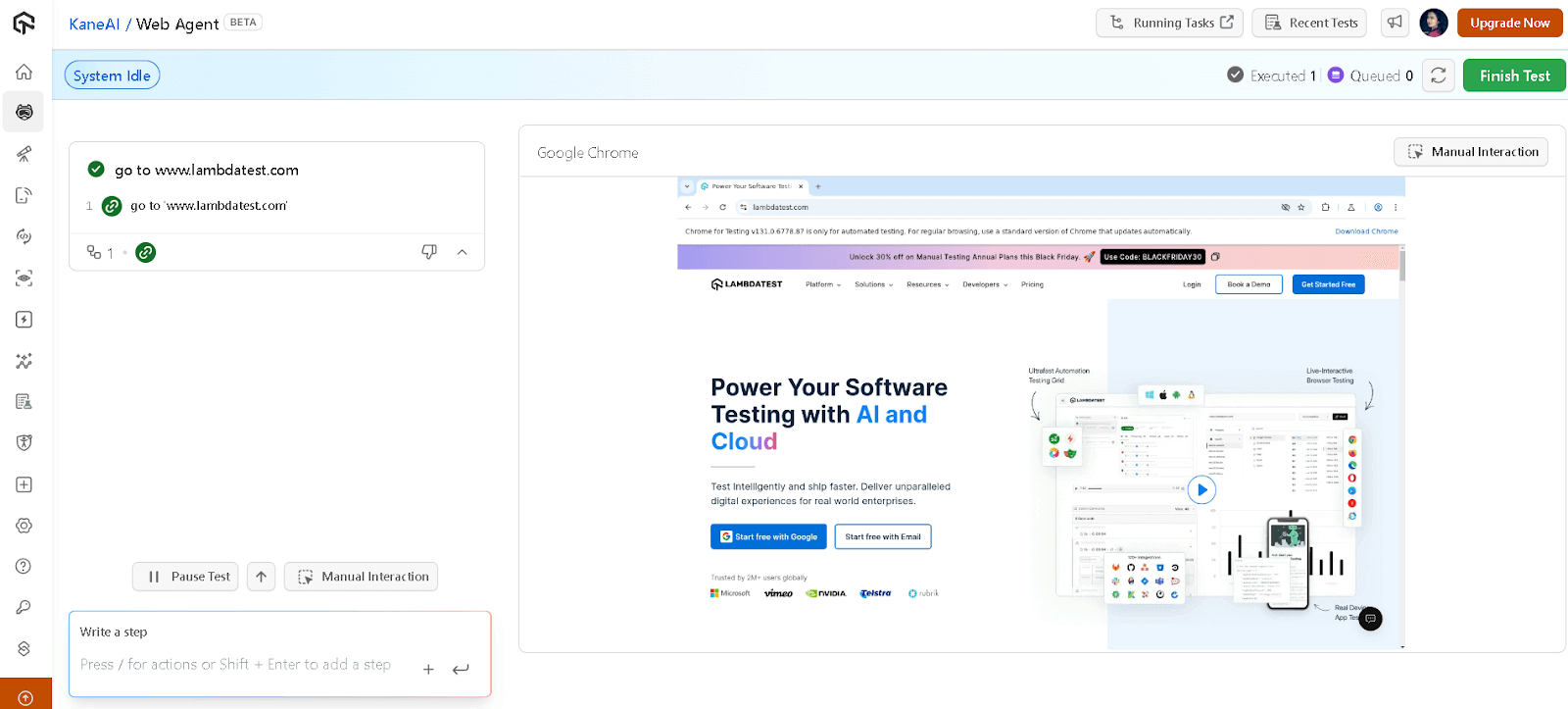

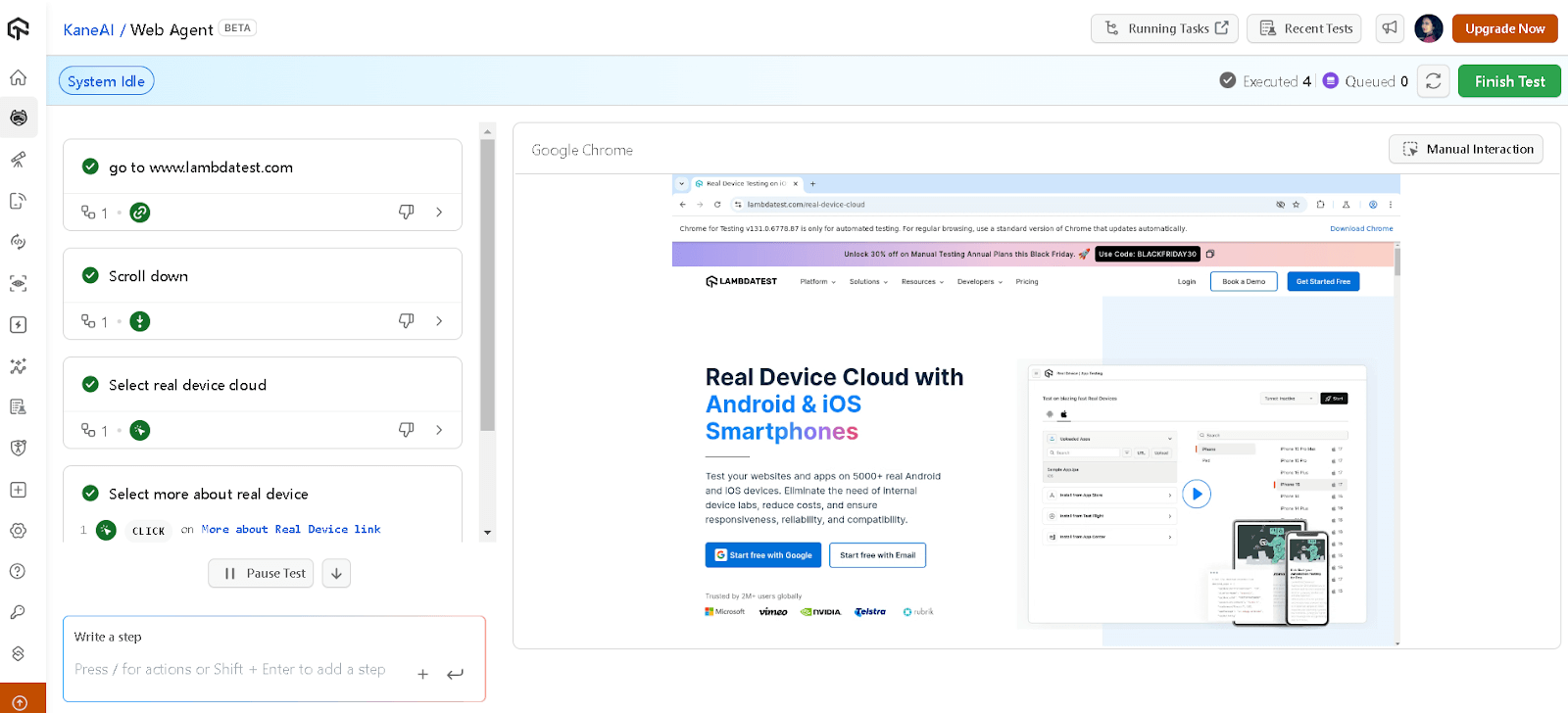

- Write the steps for the test in the Write a step text area.

- Click the Finish Test button at the top right to end the testing session.

As you write test steps, KaneAI records each step when you press Enter, and you can see the website open in the browser. The steps are executed on KaneAI, and you can update or reuse them as needed.

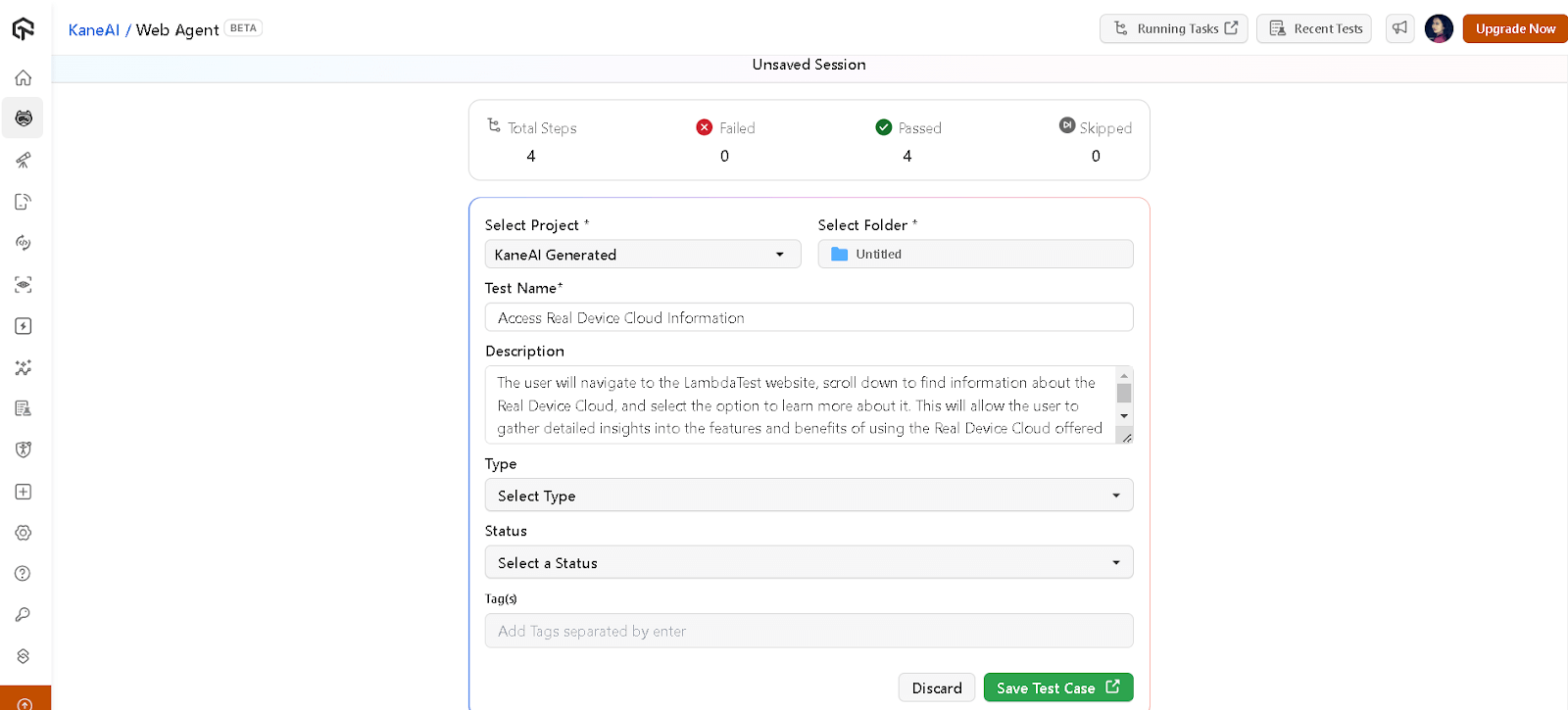

Now, select the Folder where you want to save tests and choose Type and Status. You can also change other details if needed. Then, click on the Save Test Case button.

To get started with AI test automation, refer to this KaneAI documentation.

Shortcomings of AI Test Automation

Let’s take a look at some shortcomings of incorporating AI testing in automation:

- Integration Bottlenecks: Integrating AI-based testing with third-party tools can be challenging, both because of the architecture of the AI tools and because of compatibility limits in the third-party tools.

- Training Dataset: An AI tool may have been trained on a poor-quality or biased data set. Such data sets can distort test results, for example by producing false positives or false negatives.

- Unpredictability: AI algorithms can be unpredictable, for example, in the case of reinforcement learning or neural networks. These are trained with stochastic methods and, in some scenarios, may produce different outputs for nearly identical inputs.

- AI Algorithm Verification: AI algorithms are easy to incorporate because they ship as functions in packages and predefined libraries. However, determining their accuracy can be challenging. QA teams often cannot simply compare AI outputs to a single expected result. Instead, evaluation requires carefully chosen metrics such as precision, recall, or F1 score, depending on the task.
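The metrics named in the last point have standard definitions: precision is TP / (TP + FP), recall is TP / (TP + FN), and F1 is the harmonic mean of the two. The sketch below computes them for a hypothetical defect-prediction tool. The function name `classification_metrics` and the sample data are made up for illustration.

```python
def classification_metrics(predicted, actual):
    """Compute precision, recall, and F1 for boolean predictions.

    predicted[i] is True if the tool flagged item i as defective;
    actual[i] is True if item i really was defective.
    """
    pairs = list(zip(predicted, actual))
    tp = sum(1 for p, a in pairs if p and a)        # correctly flagged
    fp = sum(1 for p, a in pairs if p and not a)    # flagged, but fine
    fn = sum(1 for p, a in pairs if not p and a)    # missed defects

    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return precision, recall, f1


# Six modules: what the tool predicted versus what testing later confirmed.
predicted = [True, True, False, True, False, False]
actual = [True, False, False, True, True, False]

precision, recall, f1 = classification_metrics(predicted, actual)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

In this sample the tool raised one false alarm and missed one real defect, so precision and recall are both two thirds. Which metric matters more depends on the task: missed defects hurt recall, while noisy alerts hurt precision.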

Best Practices for AI Test Automation

To perform AI test automation, you need to stick to some specific best practices to mitigate any potential challenges and ensure optimal results:

- Train and Update AI Models Regularly: QA teams should periodically retrain AI models with the latest feedback and data sets. For instance, if an AI model predicts defects, its training data should include newly identified defects and data from recent development cycles. This lets the model evolve alongside the software application and retain its ability to accurately predict potential issues.

- Evaluate and Monitor Test Results: It’s also crucial to validate AI-generated insights against real-world results to ensure accuracy. For instance, if an AI tool flags a potential performance issue, QA teams should confirm the finding before taking corrective action. This approach supports informed decision-making based on reliable data and reduces unnecessary rework.

- Ensure High-Quality Data Sets: It’s important to verify the accuracy and precision of the algorithm that generates and processes the data, which you can do through rigorous testing and validation. This helps ensure the data is free from errors and biases and reflects real-world scenarios. For instance, an algorithm generating performance testing data should accurately replicate the load conditions of the software and expected user behavior.

- Test the Algorithm: Before you integrate an algorithm or a tool into a software application, thoroughly testing its compatibility and behavior with unique project requirements is essential. While there are plenty of resources for validating the efficiency of an algorithm and recommending ideal environments, it’s a risky move to rely solely on external validations.

- Prevent Security Loopholes: It’s more crucial than ever to make security a priority, which includes following security protocols and allowing only secure data transmission. You can add a further layer of protection by engaging security engineers and cybersecurity experts.

Future of AI in Test Automation

Artificial intelligence, along with its use cases in test automation, is rapidly evolving. With every passing year, it opens up new possibilities as AI algorithms become more advanced.

Most AI-powered testing technologies are still at an early stage, but AI has huge potential to revolutionize the software testing industry.

Automating tasks is the bare minimum that AI-powered testing tools are doing. The best part is that they’re learning and adapting to the most complex behaviors of software, which further makes the possibilities limitless.

The future of AI in test automation points toward greater autonomy and smarter orchestration. To understand how this orchestration works in practice, explore how MCP and AI agents enable seamless communication between AI systems and diverse testing tools.

Conclusion

Testers often face ongoing challenges due to the fast-changing market demands. There was a time when this constant innovation felt overwhelming. However, AI has entered the scenario to ease the pain of QA teams across the globe.

By making the best out of artificial intelligence technologies, developers and testers can save time, effort, and resources. They can also leverage AI-powered tools that can conduct an in-depth analysis of test results, simultaneously identifying potential issues. Such features can prove indispensable for development and QA teams that strive to retain their competitive edge in the current digital landscape.

Testers looking to systematically build AI capabilities can follow this AI roadmap for software testers, which outlines a phase-by-phase path from automation to AI-driven testing.

Citations

- AI-based Test Automation: A Grey Literature Analysis: https://ieeexplore.ieee.org/document/9440153
