Acceptance Criteria vs Acceptance Tests: What's the Difference?

Learn the difference between acceptance criteria and acceptance tests, who writes them, when they're written, and how to avoid common mistakes in agile teams.

Akarshi Aggarwal

April 28, 2026

If you've ever sat in a sprint planning meeting where someone used acceptance criteria and acceptance tests as if they meant the same thing, you're not alone. Most teams do it. Even experienced QA engineers and product owners blur the line between the two without realizing it.

When the two get confused, acceptance testing breaks down at the handoff. Stories get marked done before they are verified, tests pass on paper but miss what the feature was supposed to do, and defects reach production before anyone can identify where the process failed. This post explains the difference between acceptance criteria and acceptance tests and how one leads to the other.

Overview

What Is the Difference Between Acceptance Criteria and Acceptance Tests?

Acceptance criteria define the conditions a feature must meet to be considered complete. The product owner writes them before development begins. Acceptance tests verify that those conditions are actually met in working software. QA engineers or developers write them during or after development.

Think of acceptance criteria as the target and acceptance tests as the proof you hit it.

Who Writes Acceptance Criteria vs Acceptance Tests?

- Acceptance criteria are written by the product owner or business analyst, ideally in collaboration with developers and QA during backlog refinement.

- Acceptance tests are written by QA engineers or developers, usually during the sprint after acceptance criteria are finalized.

- In ATDD teams, acceptance tests are written alongside the criteria, before development begins.

What Is the Given/When/Then Format?

Given/When/Then is a pattern expressed using the Gherkin syntax. It structures acceptance criteria so non-technical stakeholders can read them while the format maps almost directly to automated test code.

- Given: The initial context or precondition (e.g., the user is on the login page).

- When: The action the user takes (e.g., they enter a valid email and password).

- Then: The expected outcome (e.g., they are redirected to their dashboard).

How Many Acceptance Tests Should One Criterion Have?

At minimum, two: one for the happy path and one for a failure or edge case. Criteria involving conditional logic, multiple user states, or performance thresholds may need five or more to cover fully.
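The two-test minimum can be checked mechanically if a team tracks which tests belong to which criterion. The sketch below is one possible way to do that, not an established tool: the `Criterion` and `AcceptanceTest` classes, the `"happy"` and `"edge"` labels, and the `under_tested` helper are all hypothetical names invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class AcceptanceTest:
    name: str
    kind: str  # "happy" or "edge"; these labels are assumptions for this sketch


@dataclass
class Criterion:
    text: str
    tests: list = field(default_factory=list)


def under_tested(criteria: list) -> list:
    """Return the text of each criterion missing a happy-path or an edge-case test."""
    gaps = []
    for criterion in criteria:
        kinds = {test.kind for test in criterion.tests}
        # A criterion is covered only when both kinds of test are present.
        if not {"happy", "edge"} <= kinds:
            gaps.append(criterion.text)
    return gaps


criteria = [
    Criterion(
        "Valid credentials redirect to the dashboard",
        [
            AcceptanceTest("valid login", "happy"),
            AcceptanceTest("wrong password", "edge"),
        ],
    ),
    Criterion(
        "Account locks after five failed logins",
        [AcceptanceTest("sixth attempt is locked", "happy")],
    ),
]

print(under_tested(criteria))  # ['Account locks after five failed logins']
```

A check like this only counts tests. It says nothing about whether the tests are meaningful, so it complements review rather than replacing it.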

What Are the Most Common Mistakes Teams Make?

- Writing acceptance criteria after development has already started.

- Using criteria that are too vague to test (e.g., "the page should load fast").

- Confusing acceptance tests with UAT (User Acceptance Testing).

- Skipping negative scenarios and edge cases.

- Treating acceptance tests as a QA-only responsibility.

What Are Acceptance Criteria?

Acceptance criteria are the conditions a feature must meet to be considered complete and acceptable. They define what "done" looks like for a specific user story, from the perspective of the user or stakeholder.

Think of acceptance criteria as a set of statements that answer one question: what must be true for this feature to work as expected? They do not describe how to build it or how to test it. They just describe the expected outcome.

Good acceptance criteria share a few consistent traits:

- Written before development begins

- Expressed in simple language that non-technical stakeholders can read and agree on

- Pass/fail by nature (there is no partial acceptance)

- Scoped to a single feature or user story

Example: For a user story like "As a user, I want to reset my password so I can regain access to my account," a set of acceptance criteria might look like this:

- The user can request a password reset using their registered email address

- A reset link is sent within 60 seconds

- The reset link expires after 24 hours

- The user cannot reuse their last three passwords

What Are Acceptance Tests?

Acceptance tests are the actual verification that the acceptance criteria have been met. Where acceptance criteria describe the expected behavior, acceptance tests check whether that behavior exists in the working software.

They are executable and can be run manually by a QA engineer or automated using a test framework. Either way, they produce a result: pass or fail.

Acceptance tests are typically written after the acceptance criteria are agreed upon, and they operate at the system level, meaning they test the software as a whole, not individual units or components.

Using the same password reset example, an acceptance test for the first criterion might look like this:

- Navigate to the login page and click "Forgot password"

- Enter the registered email address and submit

- Verify that the system triggers the reset email within 60 seconds (check via email service logs or API response)

- Confirm the email contains a valid reset link

One acceptance criterion can generate multiple acceptance tests, especially when edge cases and negative scenarios are included.
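The manual steps above could also be automated. The following is a minimal sketch under stated assumptions: `FakeEmailService` and `PasswordResetFlow` are hypothetical in-memory stand-ins, not a real email API or the real application, and the rule that an unregistered address receives no email is an assumed negative scenario that the criteria above do not spell out.

```python
from dataclasses import dataclass, field


@dataclass
class FakeEmailService:
    """Hypothetical in-memory stand-in for an email service log."""
    sent: list = field(default_factory=list)

    def send(self, to: str, body: str, sent_at: float) -> None:
        self.sent.append({"to": to, "body": body, "sent_at": sent_at})


@dataclass
class PasswordResetFlow:
    """Hypothetical system under test; a real suite would drive the deployed app."""
    registered: set
    mailer: FakeEmailService

    def request_reset(self, email: str, requested_at: float) -> None:
        # Assumption: only registered addresses receive a reset email.
        if email in self.registered:
            link = f"https://example.com/reset?token=demo-{len(self.mailer.sent)}"
            # The fake "delivers" 5 seconds after the request.
            self.mailer.send(email, f"Reset your password: {link}", requested_at + 5)


def test_registered_email_gets_reset_link_within_60_seconds() -> None:
    mailer = FakeEmailService()
    flow = PasswordResetFlow(registered={"ada@example.com"}, mailer=mailer)

    flow.request_reset("ada@example.com", requested_at=0.0)

    assert len(mailer.sent) == 1
    message = mailer.sent[0]
    assert message["to"] == "ada@example.com"
    assert message["sent_at"] - 0.0 <= 60  # criterion: sent within 60 seconds
    assert "https://example.com/reset?token=" in message["body"]


def test_unregistered_email_gets_no_reset_link() -> None:
    mailer = FakeEmailService()
    flow = PasswordResetFlow(registered={"ada@example.com"}, mailer=mailer)

    flow.request_reset("nobody@example.com", requested_at=0.0)

    assert mailer.sent == []  # negative scenario: nothing is sent


test_registered_email_gets_reset_link_within_60_seconds()
test_unregistered_email_gets_no_reset_link()
```

Timestamps are passed in rather than read from the clock, which keeps the 60-second check deterministic instead of dependent on how fast the test machine runs.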

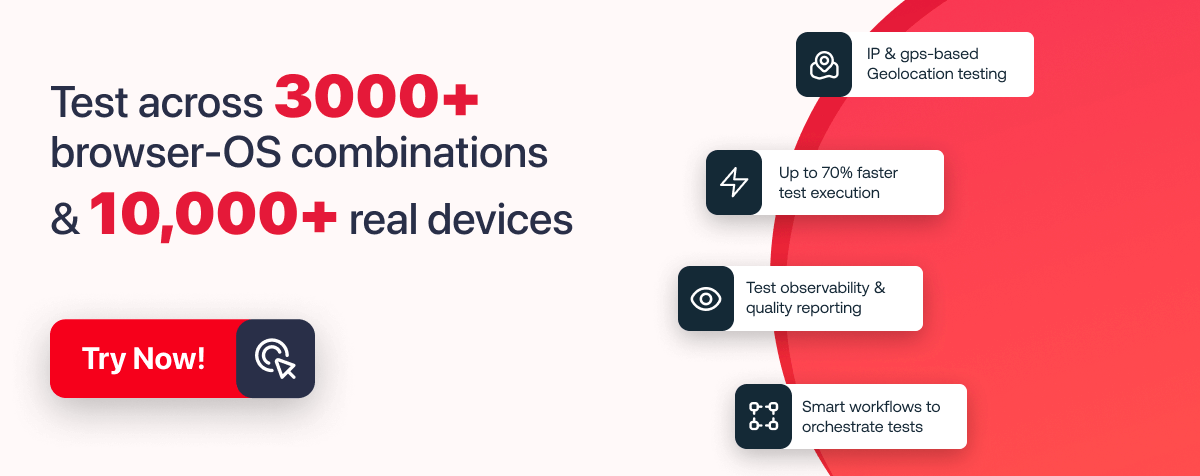


Acceptance Criteria vs Acceptance Tests: Key Differences

The simplest way to think about it: acceptance criteria define the target, and acceptance tests verify you hit it. They work together, but they are distinct artifacts created at different stages by different people.

| Aspect | Acceptance Criteria | Acceptance Tests |
|---|---|---|
| What it is | Conditions a feature must meet to be accepted | Verification that those conditions are actually met |
| Who writes it | Product owner or business analyst | QA engineers or developers |
| When it is written | Before development begins | During or after development |
| Scope | Feature-level | System-level |
| Format | Written statements, Given/When/Then, checklists | Test cases, automated scripts, manual test steps |
| Output | Defines pass/fail conditions | Produces actual pass/fail results |
| Audience | Developers, designers, stakeholders | QA team, developers, CI/CD pipelines |

How Acceptance Criteria Become Acceptance Tests

Acceptance criteria and acceptance tests are not independent artifacts. One leads directly to the other. Here is what that looks like with a real example.

Start with a user story: "As a registered user, I want to log in with my email and password so I can access my account."

The product owner writes acceptance criteria:

- Given the user is on the login page, when they enter a valid email and correct password, then they are redirected to their dashboard

- Given the user enters an incorrect password, when they submit the form, then an error message appears and the password field clears

- Given the user has failed login five times, when they attempt again, then their account is temporarily locked

This format is called Given/When/Then, a pattern from Behavior Driven Development (BDD) written in a syntax called Gherkin. It reads naturally for non-technical stakeholders but maps almost directly to automated test code.

A QA engineer then takes each of those Given/When/Then statements and turns them into acceptance tests. Each scenario becomes a test case. The test either confirms the described behavior works, or it flags that it does not.
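To make the mapping concrete, here is a rough sketch of the three login criteria written as tests. `LoginForm` is a hypothetical in-memory model, not a real framework or the actual application, and the error message strings are assumptions. In practice the same Given/When/Then comments would sit on top of a browser or API driver.

```python
from dataclasses import dataclass, field

MAX_FAILED_ATTEMPTS = 5  # taken from the third criterion


@dataclass
class LoginForm:
    """Hypothetical in-memory login form used only for this sketch."""
    accounts: dict  # email -> correct password
    failures: dict = field(default_factory=dict)
    current_url: str = "/login"
    error: str = ""
    password_field: str = ""

    def submit(self, email: str, password: str) -> None:
        self.password_field = password
        if self.failures.get(email, 0) >= MAX_FAILED_ATTEMPTS:
            self.error = "Account temporarily locked"
            self.password_field = ""
        elif self.accounts.get(email) == password:
            self.failures[email] = 0
            self.error = ""
            self.current_url = "/dashboard"
        else:
            self.failures[email] = self.failures.get(email, 0) + 1
            self.error = "Incorrect email or password"
            self.password_field = ""


def new_form() -> LoginForm:
    return LoginForm(accounts={"ada@example.com": "s3cret!"})


def test_valid_login_redirects_to_dashboard() -> None:
    form = new_form()                          # Given: user is on the login page
    form.submit("ada@example.com", "s3cret!")  # When: valid email and password
    assert form.current_url == "/dashboard"    # Then: redirected to dashboard


def test_wrong_password_shows_error_and_clears_field() -> None:
    form = new_form()                          # Given: an incorrect password
    form.submit("ada@example.com", "wrong")    # When: the form is submitted
    assert form.error != ""                    # Then: an error message appears
    assert form.password_field == ""           # and the password field clears
    assert form.current_url == "/login"


def test_account_locks_after_five_failures() -> None:
    form = new_form()
    for _ in range(MAX_FAILED_ATTEMPTS):       # Given: five failed logins
        form.submit("ada@example.com", "wrong")
    form.submit("ada@example.com", "s3cret!")  # When: they attempt again
    assert form.error == "Account temporarily locked"  # Then: account is locked
    assert form.current_url == "/login"


test_valid_login_redirects_to_dashboard()
test_wrong_password_shows_error_and_clears_field()
test_account_locks_after_five_failures()
```

Each test carries the wording of its criterion in the comments, so a failing test points straight back to the statement the product owner agreed to.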

Writing those test cases manually for every scenario can slow teams down, especially as the feature set grows. KaneAI solves this by letting you author and evolve acceptance tests directly from natural language instructions, so the chain from acceptance criteria to executable test stays tight without the scripting bottleneck.

The chain runs from user story to acceptance criteria to acceptance tests. Each one is a more concrete version of the one before it.

Who Writes What and When?

In most agile teams, acceptance criteria are written by the product owner or business analyst, ideally in collaboration with developers and QA during backlog refinement. Writing them together means fewer surprises later, and QA can flag criteria that are vague or hard to test early.

Acceptance tests are written by QA engineers or developers, usually during the sprint after acceptance criteria are finalized. In teams practicing ATDD (Acceptance Test-Driven Development), acceptance tests are written before development begins, alongside the criteria, and serve as the definition of done.

A rough sprint-level sequence:

- Acceptance criteria are ready before a story enters development

- Acceptance tests are written during development and run before the story is marked done

- Both are reviewed together during sprint review

Acceptance criteria written in isolation by a product owner often end up vague. Acceptance tests written without input from the product owner often miss the business intent. The best teams treat both as shared artifacts.

Common Mistakes Teams Make

Both acceptance criteria and acceptance tests are straightforward in theory but easy to get wrong in practice. These are the mistakes that show up most often and tend to cause the most rework.

- Writing acceptance criteria after development starts: This is the most common one. When criteria are written after the code is partially done, they end up describing what was built rather than what was needed. The whole point of writing them upfront is to align before anyone writes a line of code.

- Using criteria that are too vague to test: Statements like "the page should load fast" or "the form should be user-friendly" are not acceptance criteria. They are opinions. Good criteria have a clear, objective pass/fail outcome: "the page loads in under 2 seconds on a 4G connection."

- Confusing acceptance tests with UAT: User Acceptance Testing (UAT) is one type of acceptance testing, usually performed by end users or stakeholders in a staging environment before release. Acceptance tests as a whole are broader and happen throughout the development cycle, not just at the end.

- Skipping negative scenarios: Most acceptance criteria cover the happy path. But what happens when the input is invalid? When the network drops mid-request? When a field is left blank? These scenarios need their own criteria and their own tests.

- Treating acceptance tests as a QA-only responsibility: In agile teams, developers often write and run acceptance tests. Treating acceptance tests as something QA handles after development creates handoff delays and late-stage defect discovery.
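Negative scenarios are often cheapest to cover with a small table of inputs and expected outcomes. The sketch below illustrates that idea for blank and invalid fields on a login form. The `validate_login_form` function, its error messages, and the deliberately simple email pattern are assumptions made for this example, not rules from any particular product.

```python
import re

# Deliberately simple pattern for illustration; not a full email validator.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def validate_login_form(email: str, password: str) -> list[str]:
    """Hypothetical validator returning one error message per problem found."""
    errors = []
    if not email.strip():
        errors.append("Email is required")
    elif not EMAIL_PATTERN.match(email):
        errors.append("Email is not valid")
    if not password:
        errors.append("Password is required")
    return errors


# Each row: scenario name, email, password, expected error messages.
NEGATIVE_SCENARIOS = [
    ("blank email", "", "s3cret!", ["Email is required"]),
    ("malformed email", "ada-at-example", "s3cret!", ["Email is not valid"]),
    ("blank password", "ada@example.com", "", ["Password is required"]),
    ("both blank", "", "", ["Email is required", "Password is required"]),
]


def run_negative_scenarios() -> list[str]:
    """Run every scenario and return a description of each mismatch."""
    failures = []
    for name, email, password, expected in NEGATIVE_SCENARIOS:
        actual = validate_login_form(email, password)
        if actual != expected:
            failures.append(f"{name}: expected {expected}, got {actual}")
    return failures


# The happy path still needs its own check alongside the negative table.
assert validate_login_form("ada@example.com", "s3cret!") == []
assert run_negative_scenarios() == []
```

Adding a scenario means adding one row, which lowers the cost of covering the cases that tend to get skipped.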

Conclusion

Acceptance criteria and acceptance tests are not interchangeable, but they are inseparable. One defines what the software needs to do. The other proves it does. When both are written well, reviewed together, and treated as shared team artifacts rather than handoff documents, the gap between what was built and what was needed gets a lot smaller.

TestMu AI helps QA teams run acceptance tests at scale across 3,000+ real browsers and OS combinations and 10,000+ real devices, so every criterion gets validated before release. Get started with test automation on TestMu AI.
