Test authoring is a fundamental component of software development, involving the creation and design of test cases that validate various aspects of a software application, from functionality to performance and reliability.

The practice has moved from manual spreadsheets to AI-agentic workflows that generate end-to-end tests automatically, closing coverage gaps human authors often miss.

Overview

What is Test Authoring?

Test authoring involves translating requirements and user stories into structured, repeatable test cases. Each test includes steps, data, expected results, and pre/postconditions to verify software behaves as intended.

What are the types of test authoring?

- Unit Tests: Validate individual functions or components in isolation.

- Integration Tests: Verify interactions between services, APIs, or modules.

- System Tests: Cover end-to-end workflows across the application stack.

- Acceptance Tests: Confirm the system meets business requirements.

- Functional, Performance, Security, Accessibility, Visual Regression: Address specific validation needs using targeted approaches.

What are the best practices for test authoring?

- Write atomic, independent tests to ensure reliability and enable parallel execution.

- Reuse modular components like page objects to simplify test maintenance.

- Separate test logic from data to expand coverage without duplicating scripts.

- Prioritize tests based on business impact and risk to optimize QA effort.

- Maintain living documentation that stays aligned with evolving requirements.
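
One common way to apply the risk-based prioritization practice above is a simple score: business impact multiplied by failure likelihood. The sketch below is illustrative only. The 1 to 5 rating scale, the `TestItem` type and the example test names are hypothetical assumptions, not a standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TestItem:
    """A test candidate with hypothetical 1-5 ratings for impact and likelihood."""
    name: str
    business_impact: int      # 1 = cosmetic, 5 = revenue- or safety-critical
    failure_likelihood: int   # 1 = stable area, 5 = frequently changed or defect-prone

    @property
    def risk_score(self) -> int:
        # Common risk heuristic: impact multiplied by likelihood (range 1-25).
        return self.business_impact * self.failure_likelihood


def prioritize(tests: list[TestItem]) -> list[TestItem]:
    """Return tests ordered from highest to lowest risk; ties keep input order."""
    return sorted(tests, key=lambda t: t.risk_score, reverse=True)


if __name__ == "__main__":
    suite = [
        TestItem("footer_links_render", business_impact=1, failure_likelihood=2),
        TestItem("checkout_applies_discount", business_impact=5, failure_likelihood=4),
        TestItem("search_returns_results", business_impact=4, failure_likelihood=3),
    ]
    for t in prioritize(suite):
        print(f"{t.risk_score:>2}  {t.name}")
```

Teams usually calibrate the ratings with product owners. The ranked list then decides what runs first in a time-boxed regression cycle.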

How is AI used in test authoring?

AI agents automate test generation, suggest edge cases, and update tests as applications evolve. Human-in-the-loop validation ensures tests reflect real user workflows.

What are the future trends in test authoring?

- Agentic AI Testing: Autonomous agents generate and execute tests end-to-end.

- Self-Healing Tests: Adapt automatically to UI and workflow changes.

- Shift-Right Testing: Use production user behavior to prioritize scenarios.

- Testing in Production: Run tests safely for specific user segments with feature flags.

- Living Documentation: Maintain test cases aligned with evolving requirements.

What is Test Authoring?

Test authoring is the process of designing, creating, and documenting test cases that validate the functionality, performance, and reliability of a software application.

It goes beyond simply writing test steps; effective test authoring connects product requirements with structured, repeatable verification that ensures software behaves as intended.

At its core, test case authoring bridges the gap between what the software should do (requirements and user stories) and how QA teams verify it actually works (executable test scenarios).

Effective tests simulate real-world usage, providing a clear picture of how the system should function under user interaction.

Key components of well-authored tests:

- Test Case ID & Title: Unique ID with a clear, descriptive title.

- Test Scenario: High-level flow or feature being tested.

- Preconditions: Required system state before execution.

- Test Steps: Sequential, atomic actions to perform.

- Test Data: Specific input values or data sources.

- Expected Results: Verifiable outcomes if the test passes.

- Actual Results: Observed outcome during execution.

- Postconditions: System state after the test completes.
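
The components above map naturally onto a structured record. The minimal sketch below assumes a Python dataclass. The `TestCaseRecord` name, its field names, the exact-match pass rule and the example values are all hypothetical. Real test management tools define their own schemas.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class TestCaseRecord:
    """One structured test case; fields mirror the components listed above."""
    case_id: str                       # Test Case ID
    title: str                         # Clear, descriptive title
    scenario: str                      # High-level flow or feature under test
    preconditions: list[str]           # Required system state before execution
    steps: list[str]                   # Sequential, atomic actions
    test_data: dict[str, str]          # Input values for this run
    expected_result: str               # Verifiable outcome that defines a pass
    postconditions: list[str] = field(default_factory=list)
    actual_result: Optional[str] = None  # Filled in only after execution

    def record_run(self, observed: str) -> bool:
        """Store the observed outcome; report whether it exactly matches the expectation."""
        self.actual_result = observed
        return observed == self.expected_result


if __name__ == "__main__":
    login = TestCaseRecord(
        case_id="TC-LOGIN-001",
        title="Valid user can log in",
        scenario="User authentication",
        preconditions=["User account 'demo' exists and is active"],
        steps=["Open the login page", "Enter username and password", "Click 'Sign in'"],
        test_data={"username": "demo", "password": "correct-horse"},
        expected_result="Dashboard is displayed",
        postconditions=["User session is active"],
    )
    print(login.record_run("Dashboard is displayed"))
```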

Why Test Authoring Matters

Poorly authored test cases are among the most common reasons automation projects fail. Flaky tests, incomplete coverage, and ambiguous steps lead to unreliable results that slow down release cycles rather than accelerating them.

Investing in structured test authoring directly impacts several critical outcomes.

- Early Defect Detection: The most immediate benefit. Teams that follow disciplined test case design catch bugs during development sprints rather than in production, where fixes are widely estimated to cost 10–100x more.

- Consistent Execution: Well-authored tests reduce variability and deliver reliable results across manual and automated runs, which is critical for regression testing after code changes.

- Faster Onboarding: Clear, documented test cases help new team members start contributing quickly, reducing knowledge transfer delays.

- Efficient Test Management: Structured test organization improves prioritization, coverage analysis, and resource allocation decisions.

Test Authoring vs. Test Case Writing vs. Test Design

These three terms are frequently used interchangeably, but they describe different activities within the testing process.

- Test case writing is the act of documenting individual test steps, expected results, and pass/fail criteria. It is a mechanical activity focused on recording what to do.

- Test design is the analytical process of determining what needs to be tested: choosing the right testing techniques, such as equivalence partitioning and decision tables, and defining the scenarios that should be covered.

- Test authoring encompasses both. It is the end-to-end process of analyzing requirements, designing test scenarios, writing detailed test cases, and maintaining them as the application evolves.
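
The sketch below illustrates design output rather than written steps. It applies equivalence partitioning and a decision table to two hypothetical rules: an age field that accepts 18 to 65, and a discount that depends on membership and order size. It derives one check per partition and one per rule. The rules, values and function names are illustrative assumptions.

```python
# Hypothetical requirement: an "age" field accepts integers from 18 to 65 inclusive.
MIN_AGE, MAX_AGE = 18, 65


def is_valid_age(age: int) -> bool:
    """Stand-in for the system under test."""
    return MIN_AGE <= age <= MAX_AGE


# Equivalence partitioning: values in one partition should behave the same,
# so a single representative per partition is enough.
PARTITIONS = {
    "below_range (invalid)": (10, False),
    "in_range (valid)": (40, True),
    "above_range (invalid)": (70, False),
}

# Decision table for a hypothetical discount rule:
# (is_member, order_over_100) -> expected discount percent; all 4 combinations listed.
DECISION_TABLE = {
    (True, True): 15,
    (True, False): 5,
    (False, True): 10,
    (False, False): 0,
}


def discount_percent(is_member: bool, order_over_100: bool) -> int:
    """Stand-in for the system under test implementing the discount rule."""
    if is_member:
        return 15 if order_over_100 else 5
    return 10 if order_over_100 else 0


def run_design_checks() -> list[str]:
    """Run one derived check per partition and per table rule; return failures."""
    failures = []
    for name, (value, expected) in PARTITIONS.items():
        if is_valid_age(value) != expected:
            failures.append(f"partition {name}: age={value}")
    for conditions, expected in DECISION_TABLE.items():
        if discount_percent(*conditions) != expected:
            failures.append(f"rule {conditions}: expected {expected}")
    return failures


if __name__ == "__main__":
    print(run_design_checks() or "all derived checks passed")
```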

Test authoring is the umbrella that includes design decisions, documentation, data preparation, and ongoing maintenance.

In practice, a test author identifies what needs to be tested (design), creates structured test cases (writing), prepares test data, links tests to requirements for traceability, and updates tests when the application changes.

The distinction matters because teams that only write tests without designing them end up with high volumes of low-value test cases that provide coverage in quantity but not in quality.

Types of Test Authoring

Test authoring can be categorized by the level of testing, the technique applied, or the authoring approach used. Understanding these categories helps teams select the right strategy for each testing need.

By Testing Level

- Unit Test Authoring: Tests individual functions or components in isolation. Written by developers using frameworks like JUnit, pytest, or Jest. These tests are fast, independent, and highly specific.

- Integration Test Authoring: Validates interactions between services or modules, focusing on APIs, data flow, and dependencies. These tests are more complex to author because of environment configuration and timing issues.

- System Test Authoring: Covers end-to-end user workflows across the entire application stack, requiring realistic environments and test data.

- Acceptance Test Authoring: Ensures the system meets business requirements. Derived from user stories and often written collaboratively using BDD testing tools like Cucumber or SpecFlow.
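
Here is a minimal unit-test sketch written in the style pytest discovers: plain `test_*` functions with bare `assert`. The `convert` function, the `RateProvider` protocol and the `FakeRates` test double are hypothetical. The point is isolation. The unit's only dependency is replaced by an in-memory fake, so each test is fast and independent.

```python
from typing import Protocol


class RateProvider(Protocol):
    """Dependency boundary: anything that can return an exchange rate."""
    def rate(self, src: str, dst: str) -> float: ...


def convert(amount: float, src: str, dst: str, provider: RateProvider) -> float:
    """Unit under test: converts an amount and rounds to 2 decimal places."""
    if amount < 0:
        raise ValueError("amount must be non-negative")
    if src == dst:
        return round(amount, 2)
    return round(amount * provider.rate(src, dst), 2)


class FakeRates:
    """Test double with fixed rates, so the unit runs without network or database."""
    def __init__(self, rates: dict[tuple[str, str], float]) -> None:
        self.rates = rates
        self.calls: list[tuple[str, str]] = []

    def rate(self, src: str, dst: str) -> float:
        self.calls.append((src, dst))
        return self.rates[(src, dst)]


# Each test builds its own fake, so tests share no state and can run in parallel.
def test_converts_using_provider_rate():
    fake = FakeRates({("USD", "EUR"): 0.5})
    assert convert(10.0, "USD", "EUR", fake) == 5.0


def test_same_currency_skips_provider():
    fake = FakeRates({})
    assert convert(3.456, "USD", "USD", fake) == 3.46
    assert fake.calls == []


def test_negative_amount_is_rejected():
    fake = FakeRates({})
    try:
        convert(-1.0, "USD", "EUR", fake)
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError for a negative amount")
```

Running `pytest` on a file containing this code would collect the three `test_*` functions. With pytest available, `pytest.raises(ValueError)` is the more idiomatic way to write the last check.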

By Testing Technique

- Functional Test Authoring: Verifies features work as specified by validating inputs and expected outputs.

- Performance Test Authoring: Defines load, concurrency, and response time scenarios using tools like k6, JMeter, or Gatling.

- Security Test Authoring: Identifies vulnerabilities such as SQL injection, XSS, and auth bypass, often guided by OWASP frameworks.

- Accessibility Test Authoring: Ensures compliance with WCAG and regulations by testing screen readers, keyboard navigation, and color contrast.

- Visual Regression Test Authoring: Compares UI screenshots across runs to detect unintended layout or design changes.
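
Color contrast is one accessibility check that can be written as a precise, automatable assertion. The minimal sketch below implements the WCAG 2.x relative-luminance and contrast-ratio formulas and checks the AA threshold of 4.5:1 for normal-size text. Colors are plain RGB tuples here. A real suite would read the computed foreground and background colors from the rendered page, which is not shown.

```python
def _linearize(channel_8bit: int) -> float:
    """Convert an sRGB channel (0-255) to linear light, per the WCAG 2.x definition."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: tuple[int, int, int]) -> float:
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(fg: tuple[int, int, int], bg: tuple[int, int, int]) -> float:
    """WCAG contrast ratio (L1 + 0.05) / (L2 + 0.05), where L1 is the lighter color."""
    l1, l2 = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (l1 + 0.05) / (l2 + 0.05)


def passes_aa_normal_text(fg: tuple[int, int, int], bg: tuple[int, int, int]) -> bool:
    # WCAG 2.x success criterion 1.4.3 (AA): at least 4.5:1 for normal-size text.
    return contrast_ratio(fg, bg) >= 4.5
```

For example, gray #777777 on white comes out at roughly 4.48:1 and fails AA. The slightly darker #767676 passes at roughly 4.54:1, a difference that is easy to miss by eye.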

By Authoring Approach

- Scenario-Based Authoring: Focuses on real user journeys (login, search, purchase, account management) and structures tests around complete workflows rather than individual features.

- Data-Driven Authoring: Separates test logic from data to run the same tests across multiple inputs, increasing coverage with less effort.

- Risk-Based Authoring: Prioritizes tests based on business impact and failure risk to optimize QA resources.
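
The data-driven approach can be sketched as one piece of test logic executed once per data row. In this sketch the data is an inline CSV string read with the standard `csv` module. The `login` function, its result codes and the rows are hypothetical stand-ins for a real system under test and a real data file.

```python
import csv
import io

# Test data kept separate from test logic; in practice this could be a CSV file,
# a spreadsheet export, or a database table.
LOGIN_CASES_CSV = """username,password,expected
demo,correct-horse,success
demo,wrong,invalid_credentials
,correct-horse,missing_username
locked_user,correct-horse,account_locked
"""


def login(username: str, password: str) -> str:
    """Hypothetical system under test (stand-in for a real login call)."""
    if not username:
        return "missing_username"
    if username == "locked_user":
        return "account_locked"
    if password != "correct-horse":
        return "invalid_credentials"
    return "success"


def load_cases(raw: str) -> list[dict[str, str]]:
    """Parse CSV text into one dict per row, keyed by the header names."""
    return list(csv.DictReader(io.StringIO(raw)))


def run_data_driven(cases: list[dict[str, str]]) -> list[str]:
    """Execute the same check for every row; return a message per failing row."""
    failures = []
    for i, row in enumerate(cases, start=1):
        actual = login(row["username"], row["password"])
        if actual != row["expected"]:
            failures.append(f"row {i}: expected {row['expected']!r}, got {actual!r}")
    return failures


if __name__ == "__main__":
    print(run_data_driven(load_cases(LOGIN_CASES_CSV)) or "all rows passed")
```

Adding a scenario means adding a row, not a new script. With pytest, `@pytest.mark.parametrize` over the loaded rows achieves the same separation and reports each row as its own test.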

The AI Agentic Test Authoring Process: Step by Step

A structured approach to test authoring prevents gaps in coverage and ensures consistency across the team. Modern teams increasingly use AI agents to automate test generation, identify edge cases, and maintain test suites at scale.

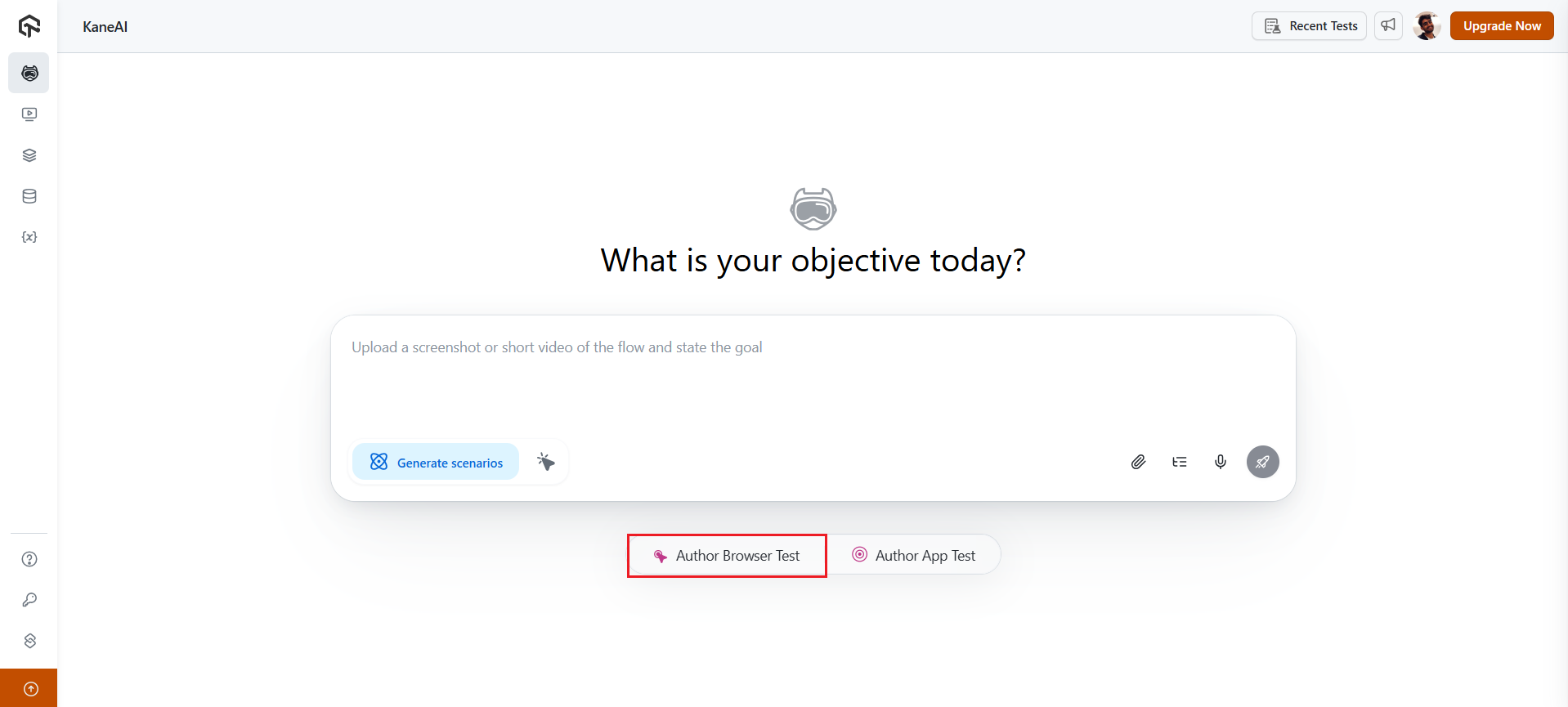

Follow these five phases to see how an AI agent like KaneAI can streamline test authoring end-to-end:

1. Requirement Analysis

Begin by reviewing requirements documents, user stories, acceptance criteria, and design specifications. AI tools can assist by automatically extracting key testing aspects and identifying ambiguities.

AI can also suggest additional test scenarios to ensure comprehensive coverage from the start.

With an AI agent, this step is accelerated further. AI interprets requirements from PRDs, PDFs, audio, and Jira, translating them into clear, actionable test flows in natural language, making it easier to convert complex requirements into executable test cases.

2. Test Scenario Identification

Next, break down the requirements into testable scenarios. AI agents analyze the application’s codebase, user behavior patterns, and requirement documents to automatically suggest test scenarios, including edge cases and risk areas that might be overlooked by human testers.

This ensures comprehensive coverage across various user scenarios.

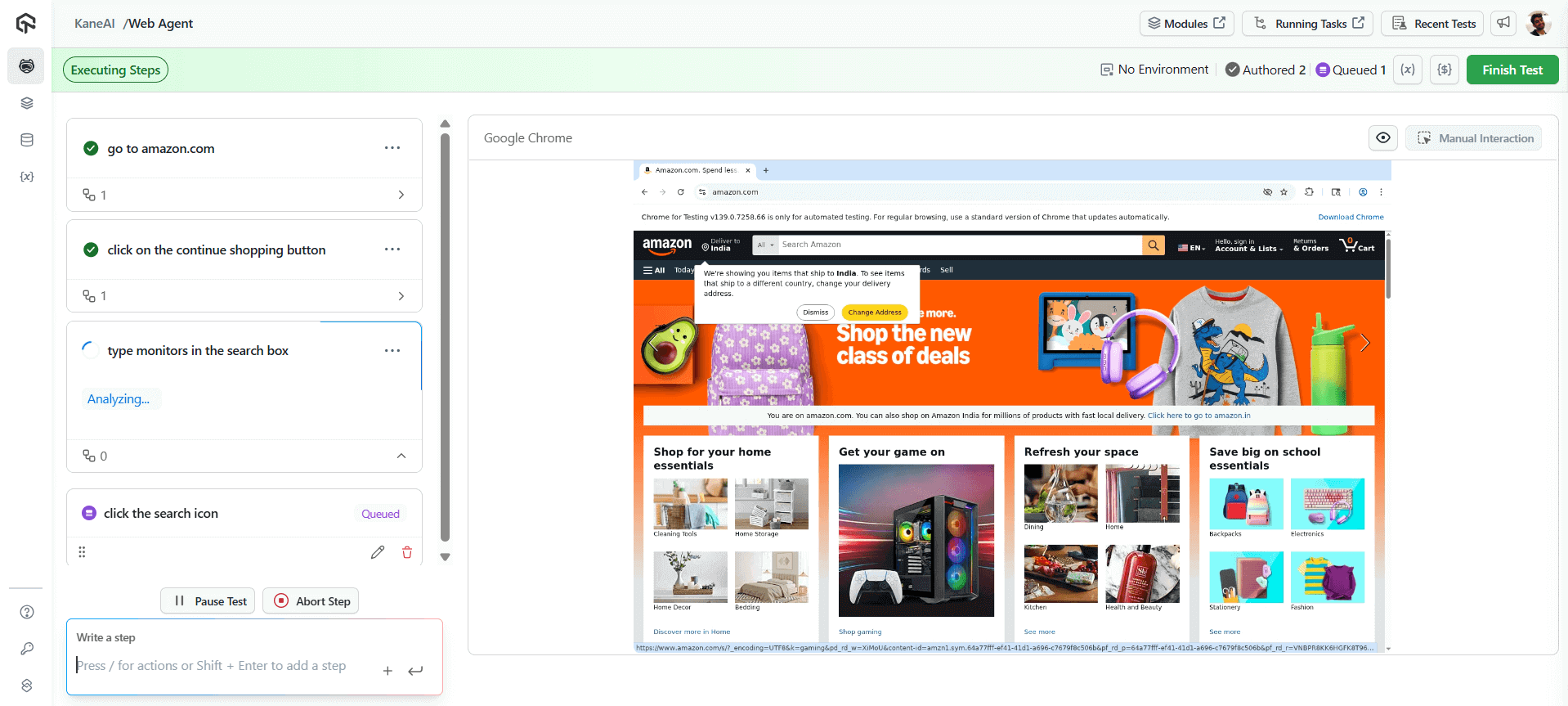

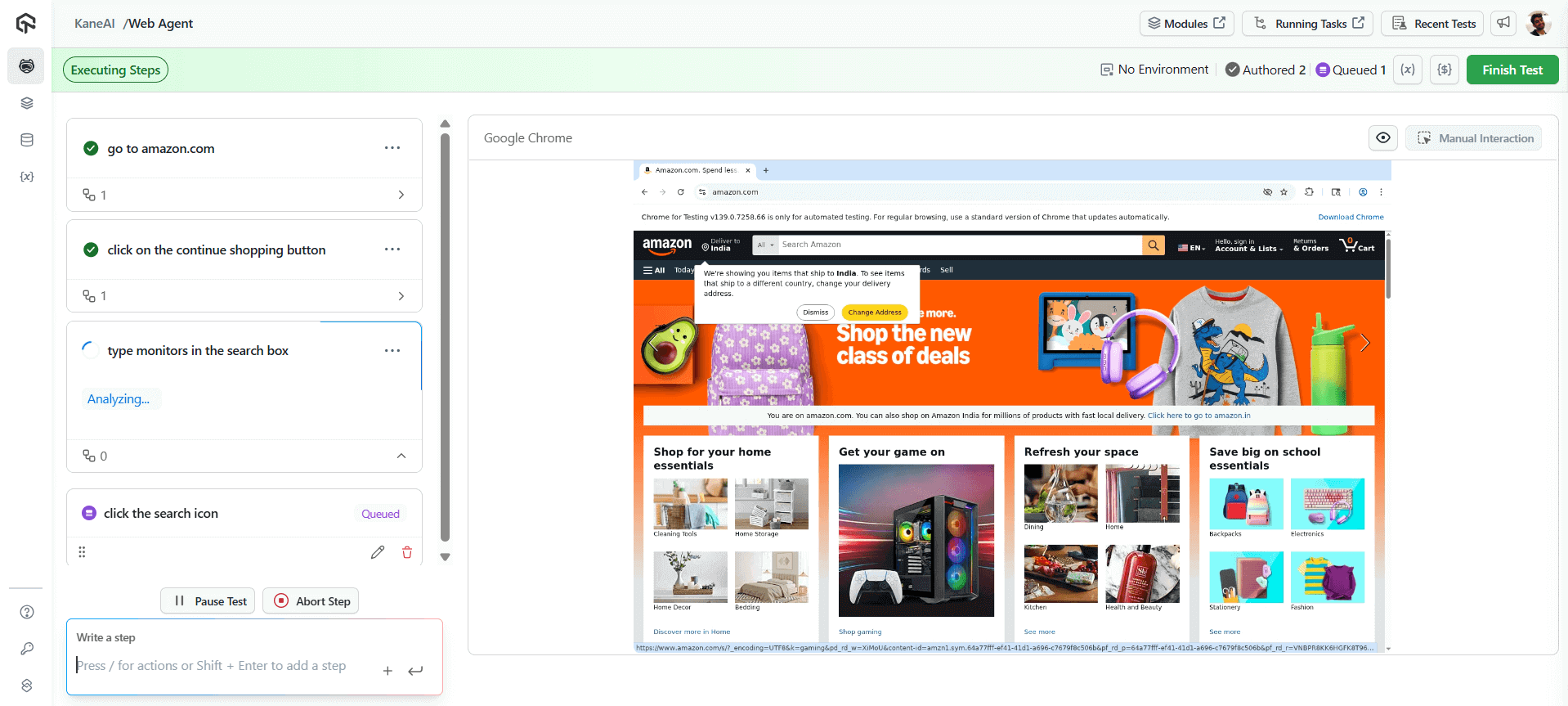

For example, a product search flow can be broken into steps such as navigating to amazon.com, clicking “Continue Shopping,” typing “monitors” in the search box, and selecting the search icon, all generated and executed by the AI agent with minimal input.

3. Test Case Design

Once scenarios are identified, define the test case ID, title, preconditions, steps, test data, and expected results to ensure clarity and consistency.

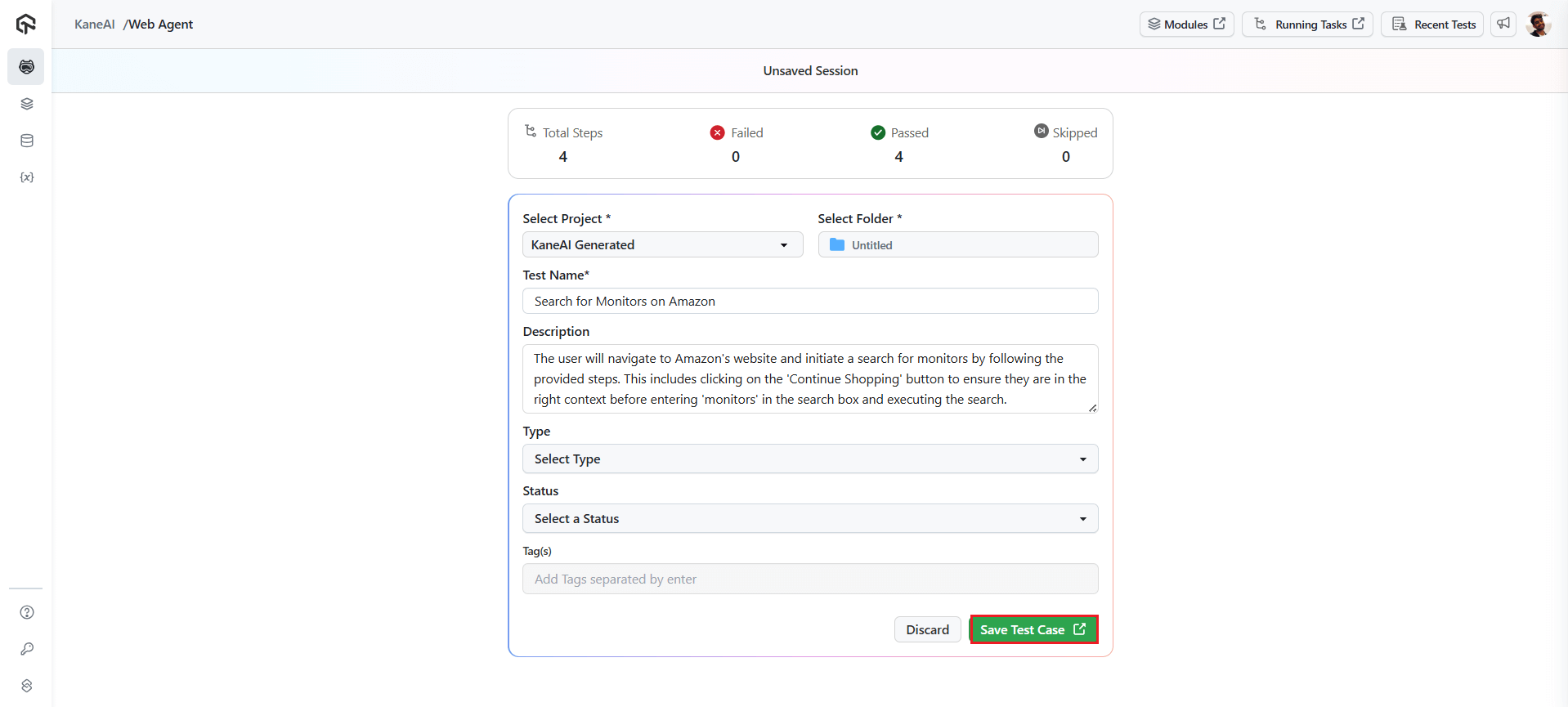

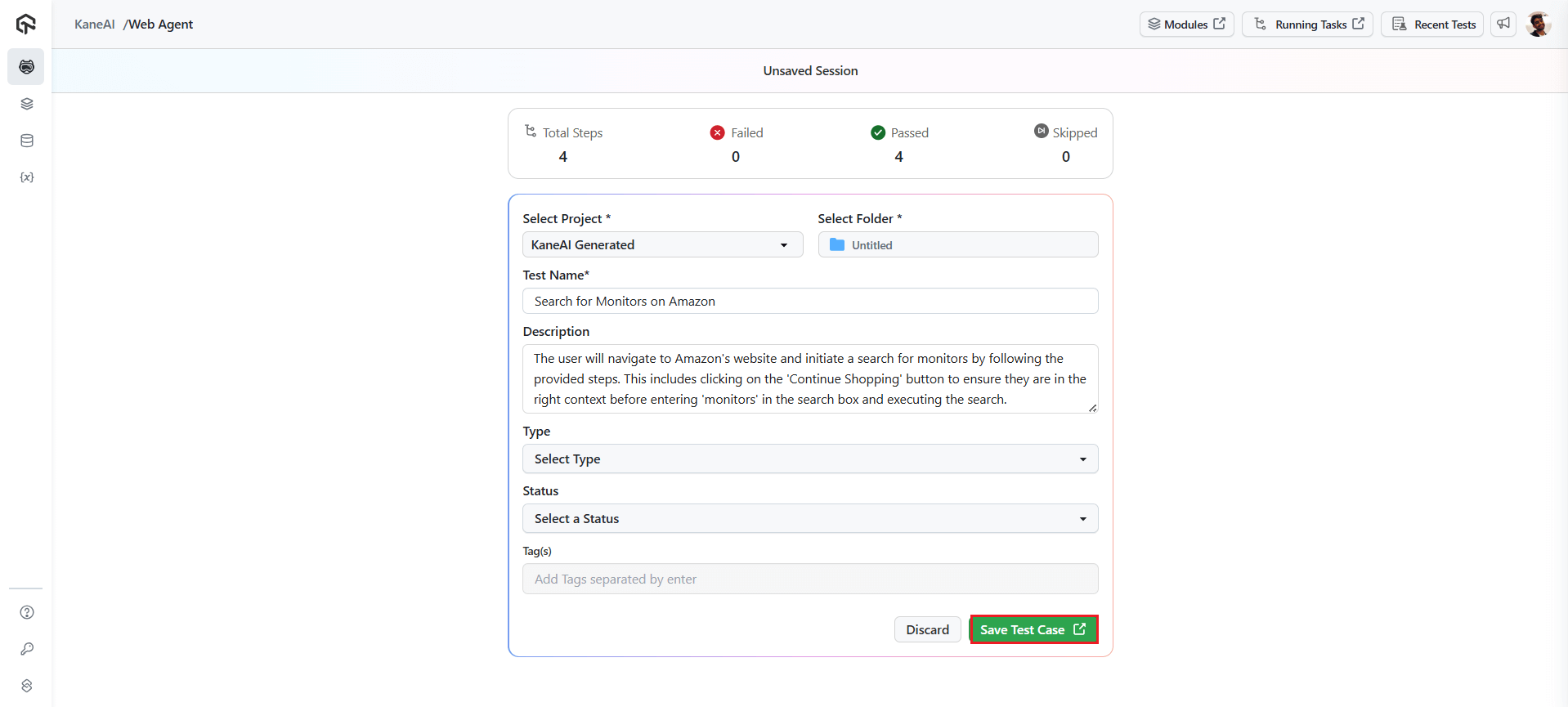

With an AI agent, you can describe a scenario like “Search for monitors on Amazon and verify results,” and it will automatically generate the complete test flow, including navigation, search actions, and result validation.

4. Review and Validation

This phase acts as a human-in-the-loop checkpoint, where teams review and refine test cases to ensure completeness, accuracy, and alignment with real user behavior and business requirements.

Stakeholders validate that scenarios reflect actual workflows and expectations. Address feedback before moving to execution or automation.

5. Maintenance and Evolution

As software evolves, test cases must be updated to stay relevant. UI changes, code refactors, and new features can break existing tests, making maintenance an ongoing effort.

Self-healing mechanisms help keep tests stable by adapting to UI and locator changes. AI automatically adjusts test scripts when minor UI changes occur, ensuring tests remain functional and accurate with minimal manual effort.

This reduces maintenance overhead and keeps test coverage aligned with the evolving application.
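
The core idea behind self-healing locators can be shown with a fallback-and-record sketch. Each element keeps a primary selector plus alternates captured at authoring time. When the primary stops matching, the first working alternate is used and the change is logged for human review. This is a simplified illustration under stated assumptions. A dict stands in for the page, and the `Locator` and `HealingFinder` names and the selectors are hypothetical. Commercial tools such as KaneAI use their own, richer matching logic, which is not represented here.

```python
from dataclasses import dataclass, field


@dataclass
class Locator:
    """A primary selector plus fallbacks recorded when the test was authored."""
    name: str
    primary: str
    fallbacks: list[str] = field(default_factory=list)


@dataclass
class HealingFinder:
    """Resolves locators against a page modeled as a selector -> element dict."""
    page: dict[str, str]
    healed: dict[str, str] = field(default_factory=dict)  # locator name -> new selector

    def find(self, loc: Locator) -> str:
        if loc.primary in self.page:
            return self.page[loc.primary]
        for candidate in loc.fallbacks:
            if candidate in self.page:
                # Record the heal so a reviewer can confirm it, then promote the selector.
                self.healed[loc.name] = candidate
                loc.primary = candidate
                return self.page[candidate]
        raise LookupError(f"{loc.name}: no selector matched {[loc.primary, *loc.fallbacks]}")


if __name__ == "__main__":
    search_button = Locator("search_button", "#search-btn", ["button[type=submit]"])
    # Page after a UI change: the id was removed, but the submit button remains.
    finder = HealingFinder({"button[type=submit]": "<button>", "#search-box": "<input>"})
    finder.find(search_button)
    print(finder.healed)  # {'search_button': 'button[type=submit]'}
```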

Accelerate Test Authoring with KaneAI

KaneAI is TestMu’s AI-native test agent that lets QA teams describe test goals in natural language and automatically generates complete, executable test flows.

What sets it apart:

- Natural language to test conversion: Describe a scenario like “Add product, apply SAVE20, verify discount,” and the AI agent generates complete steps, assertions, and test data automatically.

- Multi-step planning: It understands complex workflows and plans the complete test path, including navigation, data entry, validations, and cleanup steps.

- Cross-platform execution: Run generated tests across 10,000+ real devices and browser combinations on a high-performance agentic test cloud for complete cross-browser coverage.

- Self-healing locators: Tests automatically adapt when UI elements change, dramatically reducing maintenance overhead.

- CI/CD integration: Plug generated tests directly into your Jenkins, GitHub Actions, or Azure DevOps pipeline for continuous testing.

Teams using the AI agent for test authoring report up to a 60% reduction in test creation time and a 50% reduction in test maintenance effort, enabling QA to keep pace with accelerating release cycles.

Roles and Responsibilities of a Test Author

A test author is responsible for defining, writing, validating, and maintaining test cases. In practice, this role spans several disciplines:

- Requirement analysis: Reviewing requirement documents, user stories, and acceptance criteria. Clarifying open questions with product owners and developers.

- Test planning: Defining test scope, estimating effort, and identifying which scenarios need manual versus automated coverage.

- Test case creation: Writing detailed, structured test cases with clear steps, data, and expected results. Ensuring each test is atomic, repeatable, and traceable.

- Review and collaboration: Conducting peer reviews, incorporating feedback from stakeholders, and collaborating with developers on testability concerns.

- Traceability management: Linking test cases to requirements and maintaining coverage metrics.

- Maintenance: Updating test cases as requirements change, removing obsolete tests, and refactoring test suites for efficiency.

- Automation contribution: Identifying which test cases are candidates for automation and collaborating with automation engineers on script development.
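
The traceability and maintenance duties above can be supported by a small requirement-coverage check. It builds a traceability matrix and flags two things: uncovered requirements and orphaned tests (tests linked only to requirements that no longer exist). This is a minimal sketch. The requirement IDs, test IDs and link structure are hypothetical examples, not the format of any particular test management tool.

```python
# Hypothetical requirement catalog and test-to-requirement links.
REQUIREMENTS = {
    "REQ-101": "User can log in",
    "REQ-102": "User can reset password",
    "REQ-103": "User can log out",
}
TEST_LINKS = {
    "TC-LOGIN-001": ["REQ-101"],
    "TC-LOGIN-002": ["REQ-101"],
    "TC-RESET-001": ["REQ-102"],
    "TC-LEGACY-009": ["REQ-090"],  # links to a requirement that no longer exists
}


def traceability_matrix(reqs: dict[str, str], links: dict[str, list[str]]) -> dict[str, list[str]]:
    """Map each requirement to the tests that cover it (empty list = uncovered)."""
    matrix: dict[str, list[str]] = {req_id: [] for req_id in reqs}
    for test_id, req_ids in links.items():
        for req_id in req_ids:
            if req_id in matrix:
                matrix[req_id].append(test_id)
    return matrix


def coverage_report(reqs: dict[str, str], links: dict[str, list[str]]) -> dict:
    """Summarize coverage, uncovered requirements, and candidate obsolete tests."""
    matrix = traceability_matrix(reqs, links)
    uncovered = [req_id for req_id, tests in matrix.items() if not tests]
    orphaned = [t for t, req_ids in links.items() if not any(r in reqs for r in req_ids)]
    pct = 100 * (len(reqs) - len(uncovered)) / len(reqs) if reqs else 0.0
    return {"coverage_pct": round(pct, 1), "uncovered": uncovered, "orphaned_tests": orphaned}


if __name__ == "__main__":
    print(coverage_report(REQUIREMENTS, TEST_LINKS))
```

With the sample data, REQ-103 is reported as uncovered and TC-LEGACY-009 as an orphaned test, a candidate for the "removing obsolete tests" part of maintenance.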

In smaller teams, the test author may also execute tests and manage defect reporting.

In larger organizations, the role is more specialized, focusing purely on test design and documentation while execution is handled by dedicated QA engineers or automation frameworks.

Conclusion

Test authoring is the foundation that determines whether your entire QA strategy succeeds or fails. Every automated test suite, every regression cycle, and every release gate depends on the quality of the test cases underneath.

Embrace AI as an accelerator: tools like KaneAI are not replacing QA engineers but empowering them to focus on test strategy while AI handles the repetitive mechanics of test creation, maintenance, and prioritization.