JBehave Testing: A Detailed Guide [2026]

Learn JBehave testing with this guide. Set up BDD in Java, write Given-When-Then stories, run tests on a cloud Selenium Grid, and debug common pitfalls.

Ravi Kumar

March 15, 2026

JBehave testing binds behavior scenarios to Java test scripts. It turns acceptance criteria into executable tests inside your build pipeline.

When a JBehave scenario fails, it maps to a real business flow breaking, not a CSS selector changing.

Overview

What Is JBehave Framework

JBehave is a Java-based BDD framework that uses Given-When-Then syntax to create executable test stories, bridging the gap between business requirements and test automation.

How to Get Started With JBehave Testing?

Setting up JBehave involves creating a Maven project, writing story files, and configuring a runner class. Follow these steps to get started:

- Set Up Maven Project: Create a Maven project with JBehave core, Selenium WebDriver, and JUnit dependencies in your pom.xml file.

- Write Story Files: Create .story files using Given-When-Then format to describe user scenarios in plain language that maps to business requirements.

- Create Step Definitions: Write Java classes with @Given, @When, and @Then annotations that map story steps to executable code logic.

- Configure the Runner: Set up a JUnitStories runner class that loads stories, connects step definitions, and configures reporting formats.

- Execute and Report: Run tests with Maven, generate HTML reports, and review results on the cloud dashboard for cross-browser validation.

What Is JBehave?

JBehave is a Behavior-Driven Development (BDD) framework built for Java. It expresses tests as plain-language user stories that double as living documentation.

It uses its own plain-text story format that employs Given-When-Then keywords to structure each scenario. Each keyword carries a distinct responsibility in the test flow.

- Given sets preconditions. The user is on the login page, the database has seed data, the API token is valid.

- When triggers the action under test. Entering credentials, submitting a form, calling an endpoint.

- Then asserts the expected outcome. The dashboard loads, the response code is 200, the order appears in the queue.

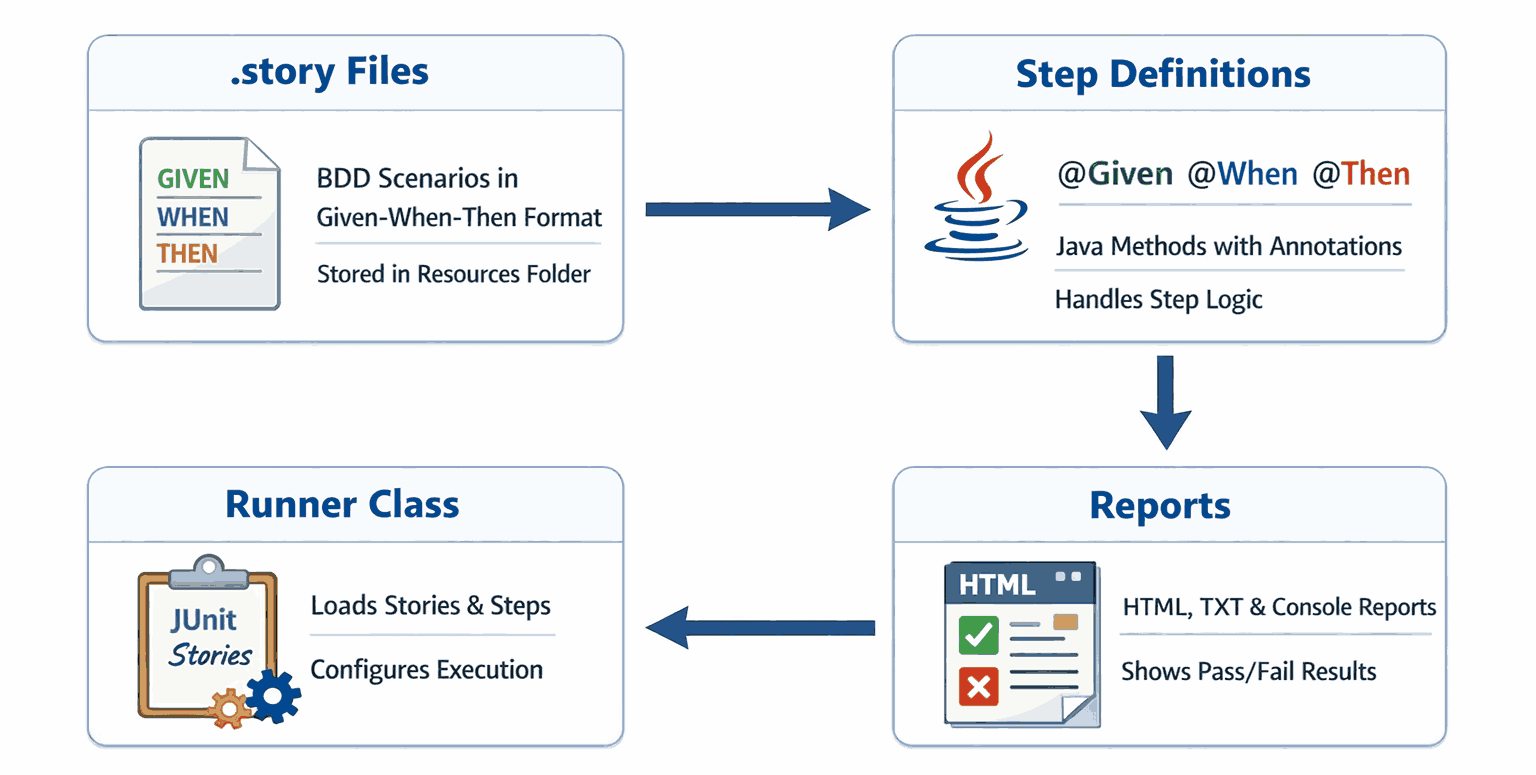

JBehave Architecture Overview

Here is the component breakdown of JBehave:

- .story File: Plain-text BDD scenarios in Given-When-Then format. Store them in a dedicated resources folder, version-controlled but separate from Java code.

- Step Definitions: Java classes with @Given, @When, @Then annotations containing execution logic. Story text that does not match any annotation pattern is the most common reason steps show as PENDING.

- Runner Class: Loads stories, connects step definitions, and configures output. Most teams extend JUnitStories and override configuration() and stepsFactory().

- Reports: JBehave generates HTML, TXT, and console reports. The HTML report shows every story, scenario, and step with pass/fail status.
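To make the link between a .story line and a step definition concrete, here is a minimal sketch of pattern matching with $placeholders. It is a simplified illustration written for this guide, not JBehave's actual parser; the class name StepPatternSketch and its match method are hypothetical. A story line that matches no pattern is the situation JBehave reports as PENDING.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Optional;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Simplified illustration of step matching. Hypothetical helper, not JBehave code. */
final class StepPatternSketch {

    // A placeholder is a dollar sign followed by a name, e.g. $username.
    private static final Pattern PLACEHOLDER = Pattern.compile("\\$(\\w+)");

    /** Returns captured parameters when the story line matches the pattern, otherwise empty. */
    static Optional<Map<String, String>> match(String stepPattern, String storyLine) {
        StringBuilder regex = new StringBuilder();
        List<String> names = new ArrayList<>();
        Matcher placeholder = PLACEHOLDER.matcher(stepPattern);
        int last = 0;
        while (placeholder.find()) {
            // Literal text is quoted so it must match character for character.
            regex.append(Pattern.quote(stepPattern.substring(last, placeholder.start())));
            regex.append("(.+)");
            names.add(placeholder.group(1));
            last = placeholder.end();
        }
        regex.append(Pattern.quote(stepPattern.substring(last)));

        Matcher line = Pattern.compile(regex.toString()).matcher(storyLine);
        if (!line.matches()) {
            return Optional.empty();
        }
        Map<String, String> params = new LinkedHashMap<>();
        for (int i = 0; i < names.size(); i++) {
            params.put(names.get(i), line.group(i + 1));
        }
        return Optional.of(params);
    }

    public static void main(String[] args) {
        String pattern = "the user logs in as $username";
        System.out.println(match(pattern, "the user logs in as alice"));  // Optional[{username=alice}]
        System.out.println(match(pattern, "the user signs in as alice")); // Optional.empty
    }
}
```

In this sketch a single changed word is enough to produce no match, which is why keeping story wording and annotation text in sync matters.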

Why Use JBehave for BDD in Java?

JBehave integrates natively with Java, uses plain-language Given-When-Then stories, and aligns developers, QA, and business teams around shared executable specifications.

- Human-Readable Stories. JBehave scenarios use Given-When-Then syntax that non-technical stakeholders can review without reading Java code.

- Integration with Java. Integrates directly with Maven, Gradle, JUnit, and TestNG. Step definitions use @Given, @When, @Then annotations on standard Java methods.

- Separation of Concerns. Story files (.story) hold the specification. Java classes hold the execution logic. Product owners review scenarios without touching code.

- Modular and Configurable. Group stories by feature, inject parameters from external tables, customize reports, and run stories in parallel for large suites.

- Build and CI Integration. Plugs into Maven and Gradle build lifecycles, supports Spring dependency injection, and runs in CI/CD tools such as Jenkins.

- Team Collaboration. Stories are plain-text files in version control. Product owners, QA, and developers edit and review them through pull requests.

- Community and Ecosystem. Maintained since 2003 with a stable API and thorough documentation. Teams needing long-term BDD stability choose JBehave for Java.

If you are exploring other BDD frameworks for Java, you can also refer to this guide on Cucumber testing for comparison.

How to Set Up JBehave

Clone the sample project, run mvn clean install to download JBehave Core and Selenium dependencies, and optionally set cloud credentials to run tests on a remote grid.

You need Java 17 or later and Maven 3.8 or later installed on your machine.

An IDE such as IntelliJ IDEA or Eclipse with the JBehave Support plugin is strongly recommended for step navigation and syntax validation.

For this guide, I'll run tests on a cloud Selenium Grid offered by TestMu AI (formerly LambdaTest).

TestMu AI is a full-stack agentic AI quality engineering platform that helps teams test smarter and deliver faster. It runs Selenium testing with JBehave on real browsers, with no local infrastructure setup needed.

Step 1: Clone the Sample Project

Clone the sample project to get a working JBehave structure:

git clone https://github.com/Ravikumar7210/LambdaTest_JBehave_Testing.git
cd jbehave-login-test

This project includes:

- .story files for BDD scenarios.

- Java step definitions using JBehave annotations.

- Selenium WebDriver configuration for local and cloud execution.

Step 2: Install Dependencies

Ensure Maven is installed, then run:

mvn clean install

This downloads all required libraries, including:

- JBehave Core for BDD story execution.

- Selenium with Java for browser automation.

- JUnit for test orchestration.

Step 3: Configure Your TestMu AI Credentials (Optional)

Skip this step if you are running tests locally. To run on a cloud Selenium Grid like TestMu AI, set your credentials as environment variables. On Windows Command Prompt:

set LT_USERNAME=your_user_name
set LT_ACCESS_KEY=your_access_key

On macOS or Linux, use export instead of set. To keep the values across sessions on Windows, add them via System Properties → Environment Variables. Never hardcode credentials in source files.
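On the Java side, the credentials can be read from the environment when the remote grid URL is built. The sketch below shows one way to do that with the standard library only. The class name GridEndpoint is hypothetical, and the hub host hub.lambdatest.com with the /wd/hub path is an assumption for illustration, so confirm the endpoint in your provider's documentation.

```java
import java.net.URI;
import java.util.Map;

/** Hypothetical helper that builds a remote grid URL from environment variables. */
final class GridEndpoint {

    // Assumption: hub host and path used for illustration; check your provider's docs.
    static final String HUB_HOST = "hub.lambdatest.com";

    /** Builds the grid URL, failing fast with a clear message when a variable is missing. */
    static URI fromEnvironment(Map<String, String> env) {
        String user = require(env, "LT_USERNAME");
        String key = require(env, "LT_ACCESS_KEY");
        return URI.create("https://" + user + ":" + key + "@" + HUB_HOST + "/wd/hub");
    }

    private static String require(Map<String, String> env, String name) {
        String value = env.get(name);
        if (value == null || value.isBlank()) {
            throw new IllegalStateException(name + " is not set");
        }
        return value;
    }

    public static void main(String[] args) {
        try {
            URI hub = fromEnvironment(System.getenv());
            // Print only the host so the access key never reaches the logs.
            System.out.println("Remote grid host: " + hub.getHost());
        } catch (IllegalStateException e) {
            System.out.println("No cloud credentials, running locally: " + e.getMessage());
        }
    }
}
```

Passing the environment in as a Map keeps the method easy to test, and the resulting URI can be converted with toURL() wherever a remote WebDriver is created.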

How to Write and Run Your First JBehave Test

Write a .story file with Given-When-Then steps, implement Java step definitions, configure a runner class (this walkthrough drives JBehave's Embedder from a Spring Boot test), and execute with mvn test locally or on a cloud grid.

1. Create a Maven Project

Use Spring Initializr or your IDE to create a new Maven project. Add the following dependencies in pom.xml:

<properties>
    <java.version>17</java.version>
    <spring-boot.version>3.3.4</spring-boot.version>
    <jbehave.version>5.2.0</jbehave.version>
    <selenium.version>4.26.0</selenium.version>
    <webdrivermanager.version>5.9.1</webdrivermanager.version>
    <maven.compiler.source>${java.version}</maven.compiler.source>
    <maven.compiler.target>${java.version}</maven.compiler.target>
</properties>

<dependencyManagement>
    <dependencies>
        <dependency>
            <groupId>org.springframework.boot</groupId>
            <artifactId>spring-boot-dependencies</artifactId>
            <version>${spring-boot.version}</version>
            <type>pom</type>
            <scope>import</scope>
        </dependency>
    </dependencies>
</dependencyManagement>

<dependencies>
    <!-- Spring Boot core -->
    <dependency>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-starter</artifactId>
    </dependency>

    <!-- JBehave core + Spring integration -->
    <dependency>
        <groupId>org.jbehave</groupId>
        <artifactId>jbehave-core</artifactId>
        <version>${jbehave.version}</version>
    </dependency>
    <dependency>
        <groupId>org.jbehave</groupId>
        <artifactId>jbehave-spring</artifactId>
        <version>${jbehave.version}</version>
    </dependency>

    <!-- Selenium WebDriver -->
    <dependency>
        <groupId>org.seleniumhq.selenium</groupId>
        <artifactId>selenium-java</artifactId>
        <version>${selenium.version}</version>
    </dependency>

    <!-- WebDriverManager (optional for local runs) -->
    <dependency>
        <groupId>io.github.bonigarcia</groupId>
        <artifactId>webdrivermanager</artifactId>
        <version>${webdrivermanager.version}</version>
    </dependency>

    <!-- Testing stack -->
    <dependency>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-starter-test</artifactId>
        <scope>test</scope>
    </dependency>
    <dependency>
        <groupId>org.junit.jupiter</groupId>
        <artifactId>junit-jupiter</artifactId>
        <scope>test</scope>
    </dependency>
</dependencies>

2. Create Your Story File

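Create a file at src/test/resources/stories/login.story. Keeping it under src/test/resources puts it on the test classpath, which is where the runner in step 4 searches for stories: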

Narrative:
In order to access my account
As a registered user
I want to log in and see my dashboard

Scenario: Valid login
Given the user is on the login page
When the user enters valid credentials
Then the user should see the dashboard

This story describes a user journey in plain language. I keep steps free of selectors, waits, and browser configuration. That boundary is what makes stories readable to non-engineers.

3. Write Step Definitions

Create a class at: src/test/java/steps/LoginSteps.java

This class maps each .story step to a Java method using JBehave annotations. The annotation text must match the story wording exactly.

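import java.time.Duration;

import org.jbehave.core.annotations.AfterScenario;
import org.jbehave.core.annotations.BeforeScenario;
import org.jbehave.core.annotations.Given;
import org.jbehave.core.annotations.Then;
import org.jbehave.core.annotations.When;
import org.openqa.selenium.By;
import org.openqa.selenium.JavascriptExecutor;
import org.openqa.selenium.TimeoutException;
import org.openqa.selenium.WebDriver;
import org.openqa.selenium.support.ui.ExpectedConditions;
import org.openqa.selenium.support.ui.WebDriverWait;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.stereotype.Component;

@Component
public class LoginSteps {

    // Expects a WebDriver bean to be available in the Spring context.
    @Autowired
    private WebDriver driver;

    private WebDriverWait wait;

    @BeforeScenario
    public void beforeScenario() {
        driver.manage().window().maximize();
        wait = new WebDriverWait(driver, Duration.ofSeconds(20));
        ltLog("Starting scenario: Valid login");
    }

    @AfterScenario
    public void tearDown() {
        if (driver != null) {
            ltLog("Closing WebDriver after scenario");
            driver.quit();
        }
    }

    @Given("the user is on the login page")
    public void openLoginPage() {
        driver.get("https://ecommerce-playground.lambdatest.io/index.php?route=account/login");
        // The explicit wait is sufficient; a fixed Thread.sleep would only slow the run.
        wait.until(ExpectedConditions.visibilityOfElementLocated(By.id("input-email")));
        ltLog("Navigated to login page and email field visible");
    }

    @When("the user enters valid credentials")
    public void enterCredentials() {
        // Demo account for the sample site; load real credentials from the environment.
        driver.findElement(By.id("input-email")).sendKeys("[email protected]");
        driver.findElement(By.id("input-password")).sendKeys("Ravil@1234");
        driver.findElement(By.cssSelector("input[type='submit']")).click();
        ltLog("Entered credentials and submitted login form");
    }

    @Then("the user should see the dashboard")
    public void verifyDashboard() {
        try {
            By heading = By.cssSelector("h2");
            By breadcrumbLast = By.cssSelector("ul.breadcrumb li:last-child");
            // Either the page heading or the last breadcrumb confirms the account page.
            wait.until(ExpectedConditions.or(
                ExpectedConditions.textToBePresentInElementLocated(heading, "My Account"),
                ExpectedConditions.textToBePresentInElementLocated(breadcrumbLast, "Account")
            ));
            ltLog("Dashboard verified (heading/breadcrumb matched)");
            ltMarkStatus("passed", "Login scenario completed successfully");
        } catch (TimeoutException e) {
            ltLog("Dashboard verification timed out");
            ltMarkStatus("failed", "Dashboard not visible after login");
            // Rethrow so JBehave still records the step as failed.
            throw e;
        }
    }

    // Best-effort cloud log hook; failures (for example on a local browser) are ignored
    // on purpose so that logging never breaks a step.
    private void ltLog(String message) {
        try {
            ((JavascriptExecutor) driver).executeScript("lambda-comment=" + message);
        } catch (Exception ignored) { }
    }

    // Best-effort cloud status hook; same reasoning as ltLog.
    private void ltMarkStatus(String status, String reason) {
        try {
            ((JavascriptExecutor) driver).executeScript("lambda-status=" + status);
            ((JavascriptExecutor) driver).executeScript("lambda-comment=" + reason);
        } catch (Exception ignored) { }
    }
}

4. Configure the Runner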

Create a runner class at: src/test/java/runners/JBehaveRunnerTest.java

This class tells JBehave where to find stories, which step classes to use, and what report formats to produce.

@SpringBootTest

public class JBehaveRunnerTest {

@Autowired

private ApplicationContext context;

@Test

void runStories() {

Configuration configuration = new MostUsefulConfiguration()

.useStoryLoader(new LoadFromClasspath(this.getClass()))

.useStoryReporterBuilder(new StoryReporterBuilder()

.withDefaultFormats()

.withFormats(Format.CONSOLE, Format.TXT, Format.HTML)

.withPathResolver(new FilePrintStreamFactory.ResolveToSimpleName())

);

InjectableStepsFactory stepsFactory = new SpringStepsFactory(configuration, context);

StoryFinder finder = new StoryFinder();

// Pick up all .story files under /stories/

List<String> stories = finder.findPaths(

codeLocationFromClass(this.getClass()),

"stories/*.story",

""

);

Embedder embedder = new Embedder();

embedder.useConfiguration(configuration);

embedder.useStepsFactory(stepsFactory);

embedder.runStoriesAsPaths(stories);

// Quit driver after all stories

WebDriver driver = context.getBean(WebDriver.class);

if (driver != null) {

System.out.println("Closing WebDriver session at end of run");

driver.quit();

}

}

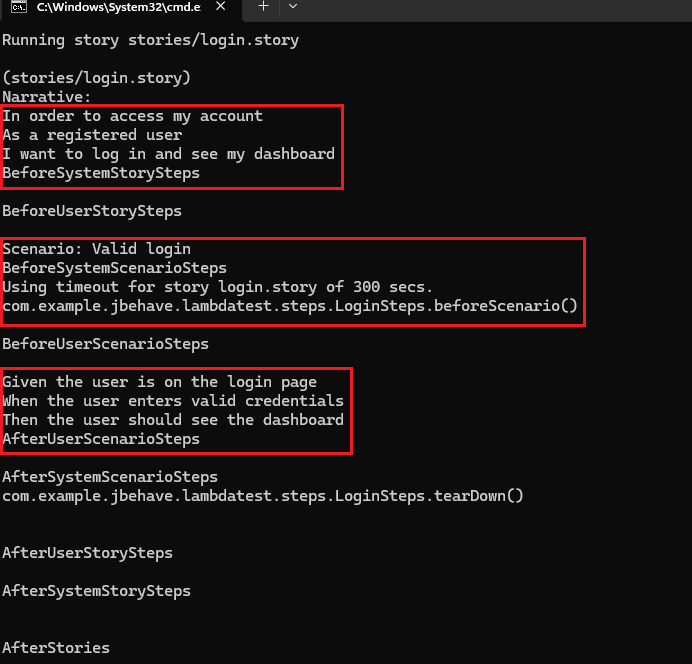

}5. Run the Test

Run the below command to execute the test:

mvn test

This will:

- Launch a browser session locally or on the cloud grid if credentials are configured.

- Execute the login scenario.

- Generate a report at target/jbehave/view/index.html.

- Print the run logs to the console.

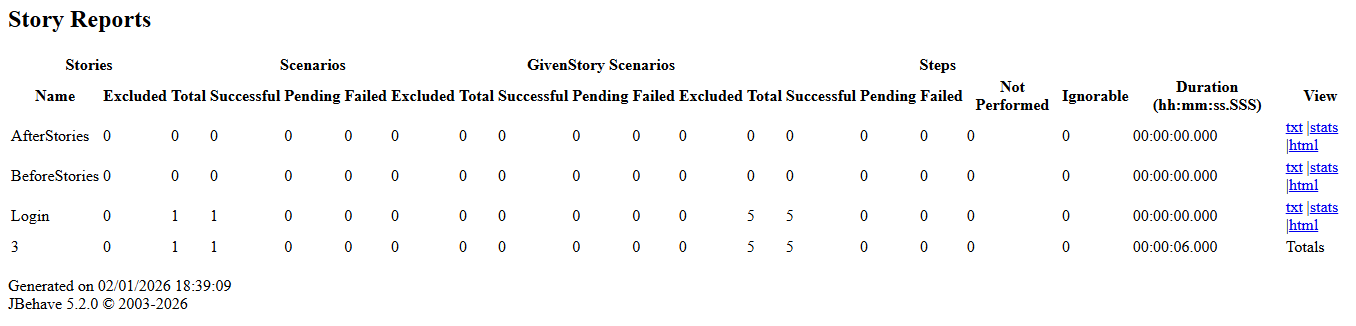

6. View Test Results

Open target/jbehave/view/index.html in your browser. It groups failures by story and scenario so you can trace which flow broke.

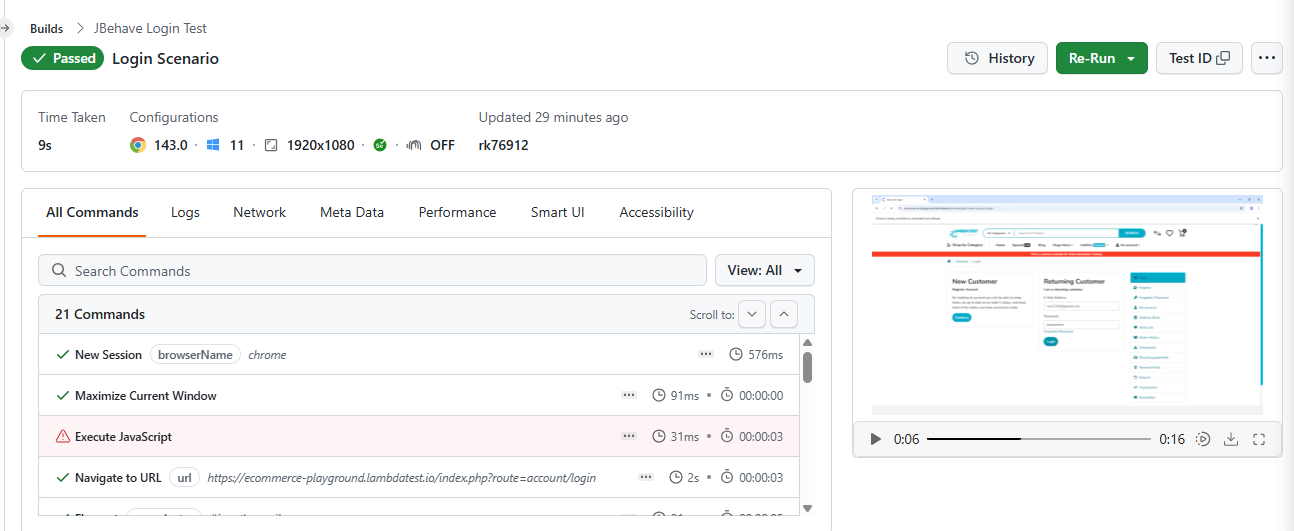

The TestMu AI Web Automation dashboard shows session details, execution logs, video recordings, and screenshots for each JBehave test run.

Common Pitfalls in JBehave Testing

Most JBehave failures trace back to the same recurring mistakes. I've seen these patterns across projects of different sizes, and knowing them before you hit them saves significant debugging time.

- Not Following BDD Principles. Teams often write JBehave stories in isolation without involving product owners or QA, resulting in tests that reflect technical assumptions rather than actual business requirements or user behavior.

- Overly Complex or Ambiguous Stories. Scenarios that cover multiple behaviors in one block become difficult to read, maintain, and debug. Failures are ambiguous when a single scenario tests several independent conditions at once.

- Vague and Overloaded Steps. Step methods that perform multiple actions at once make it impossible to isolate failures. Overloaded steps also break reusability and inflate the number of distinct step definitions needed across the suite.

- Unmatched Step Definitions. Steps without a matching Java method are marked PENDING. By default, JBehave does not fail the build for pending steps, which can create a false sense of coverage if reports are not reviewed carefully.

- Poor Story Organization. Scenarios that depend on a specific execution order or shared mutable state become flaky and unpredictable. Tests fail intermittently when run in parallel or executed in a different sequence.

- Misconfigured Project. Incorrect classpath setup, missing step registrations, or wrong runner configuration cause JBehave to silently skip stories entirely, producing no output and making the root cause very difficult to diagnose.

- Neglecting Test Data Management. Hardcoded values in story files make scenarios fragile and difficult to scale. Changing a single value requires editing multiple story files rather than updating one centralized, reusable data source.

- Poor Error Handling in Step Definitions. Catching broad exceptions in step definitions hides the actual cause of failure. Generic error messages make it hard to identify which step failed and what application state it was in.

- Not Leveraging IDE or Tooling Support. Without IDE support, unmatched story steps are invisible until runtime. Developers waste time hunting for typos in step text that a JBehave plugin would flag instantly while editing.

- Poor Reporting Setup. Without configured reporters, JBehave outputs raw text that is hard to parse after failures. Teams lose visibility into which specific steps failed and under what scenario conditions they occurred.
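The error-handling pitfall above is easy to see side by side. The following is a minimal sketch in plain Java, with no Selenium or JBehave on the classpath: the class and method names are hypothetical, and IllegalStateException stands in for a driver timeout. The first method swallows the cause behind a generic message, while the second names the step, records the application state, and keeps the original exception attached.

```java
/** Hypothetical illustration of step error handling; not taken from the sample project. */
final class StepFailureSketch {

    /** Anti-pattern: a broad catch replaces the real cause with a generic message. */
    static void verifyDashboardSwallowing(Runnable check) {
        try {
            check.run();
        } catch (Exception e) {
            throw new AssertionError("Step failed");
        }
    }

    /** Better: catch narrowly, name the step and the state, and keep the cause. */
    static void verifyDashboard(Runnable check, String currentUrl) {
        try {
            check.run();
        } catch (IllegalStateException e) { // stand-in for a driver timeout
            throw new AssertionError(
                "Then the user should see the dashboard: not visible at " + currentUrl, e);
        }
    }

    public static void main(String[] args) {
        Runnable failingCheck = () -> {
            throw new IllegalStateException("timed out waiting for h2");
        };
        try {
            verifyDashboard(failingCheck, "/index.php?route=account/login");
        } catch (AssertionError e) {
            System.out.println(e.getMessage());
            System.out.println("cause: " + e.getCause().getMessage());
        }
    }
}
```

With the second form, the report shows which step failed and where the browser was, and the stack trace still leads back to the original timeout.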

Debugging Tips for JBehave Testing

When a test fails, the root cause is rarely obvious. In my experience, these tips help you pinpoint the problem quickly without guesswork.

- Use Your IDE Debugger. Place a breakpoint inside the failing step method and run your runner class (a JUnitStories subclass or the test that drives the Embedder) in debug mode to pause execution and inspect variable state at the exact point of failure.

- Use JBehave Reports Efficiently. After a test run, open target/jbehave/view/index.html in your browser. It provides step-level failure traces, full stack details, and scenario status across all executed story files.

- Enable Verbose Logging. Add PrintStreamStepMonitor to your configuration to log each step as it executes. This reveals which steps matched, were skipped, or had parameters injected during the test run.

- Validate Story Syntax and Paths. If no stories execute, start with a single minimal story to isolate the issue. Override storyPaths() explicitly in a JUnitStories runner, or pass an explicit list of paths to runStoriesAsPaths() when driving the Embedder directly, to confirm path resolution is correct.

- Use Dry-Run Methods. Call .doDryRun(true) in your configuration to validate that all story steps are correctly wired to Java methods without actually executing any test logic or browser interactions.

- Check Parameter Injection. When steps fail with unexpected values, verify that $variable placeholders in story text match the @Named annotations in your step method signatures for correct parameter binding.
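Several of these tips can be combined in one throwaway runner. The sketch below is a configuration fragment that assumes jbehave-core is on the classpath and uses a JUnitStories subclass rather than the Spring Boot test from the walkthrough. The class names DebugStories and LoginAsSteps, the step text, and the story path stories/login_as.story are hypothetical. It wires in a PrintStreamStepMonitor, switches on dry-run, sets a pending-step strategy intended to fail instead of pass on unmatched steps, pins the story path explicitly, and shows a $username placeholder bound through @Named.

```java
import java.util.List;

import org.jbehave.core.annotations.Named;
import org.jbehave.core.annotations.When;
import org.jbehave.core.configuration.Configuration;
import org.jbehave.core.configuration.MostUsefulConfiguration;
import org.jbehave.core.failures.FailingUponPendingStep;
import org.jbehave.core.io.LoadFromClasspath;
import org.jbehave.core.junit.JUnitStories;
import org.jbehave.core.reporters.Format;
import org.jbehave.core.reporters.StoryReporterBuilder;
import org.jbehave.core.steps.InjectableStepsFactory;
import org.jbehave.core.steps.InstanceStepsFactory;
import org.jbehave.core.steps.PrintStreamStepMonitor;

// Hypothetical debugging runner; remove doDryRun(true) once the wiring is confirmed.
public class DebugStories extends JUnitStories {

    @Override
    public Configuration configuration() {
        return new MostUsefulConfiguration()
            .useStoryLoader(new LoadFromClasspath(getClass()))
            // Log step matching and parameter conversion as the run proceeds.
            .useStepMonitor(new PrintStreamStepMonitor())
            // Treat unmatched (PENDING) steps as failures instead of passing silently.
            .usePendingStepStrategy(new FailingUponPendingStep())
            // Check the wiring without executing step bodies.
            .doDryRun(true)
            .useStoryReporterBuilder(new StoryReporterBuilder().withFormats(Format.CONSOLE));
    }

    @Override
    public InjectableStepsFactory stepsFactory() {
        return new InstanceStepsFactory(configuration(), new LoginAsSteps());
    }

    @Override
    public List<String> storyPaths() {
        // An explicit path removes any doubt about story discovery.
        return List.of("stories/login_as.story");
    }

    // Hypothetical steps class: $username in the step text binds to @Named("username").
    public static class LoginAsSteps {
        @When("the user logs in as $username")
        public void logInAs(@Named("username") String username) {
            System.out.println("Would log in as " + username);
        }
    }
}
```

If the placeholder name in the story text and the @Named value drift apart, the parameter no longer binds by name, which is usually the first thing to check when a step receives an unexpected value.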

Conclusion

This guide covered JBehave from the ground up, including its architecture, the reasons Java teams choose it for BDD, and how its Given-When-Then model maps business requirements to executable tests.

The walkthrough covered setting up a Maven project, writing story files, mapping steps to Java methods, configuring a runner class, and executing tests with HTML reports.

Common pitfalls like misconfigured runners, unmatched step definitions, and poor story organization were also addressed alongside practical debugging techniques for tracing failures quickly.

Teams scaling JBehave beyond local execution often rely on cloud platforms for Java automation testing online, where TestMu AI provides access to real browsers and OS combinations.

Citations

- JBehave Official Documentation: https://jbehave.org/
