Ensuring Quality in Data & AI: A Comprehensive Approach to Quality in the Age of Data & AI [Testμ 2023]

Learn how to ensure quality in AI and data systems with a detailed five-pillar framework by industry expert Bharath Hemachandran. Explore its impact on roles and prepare for the evolving tech landscape.

TestMu AI

March 23, 2026

As AI and ML continue to reshape our technological landscape, ensuring the quality of AI systems has become a critical aspect of software development. AI introduces a different set of challenges that traditional QA methods fail to address.

In this session, our speaker, Bharath Hemachandran, presented a comprehensive framework for testing and validation in Data & AI projects.

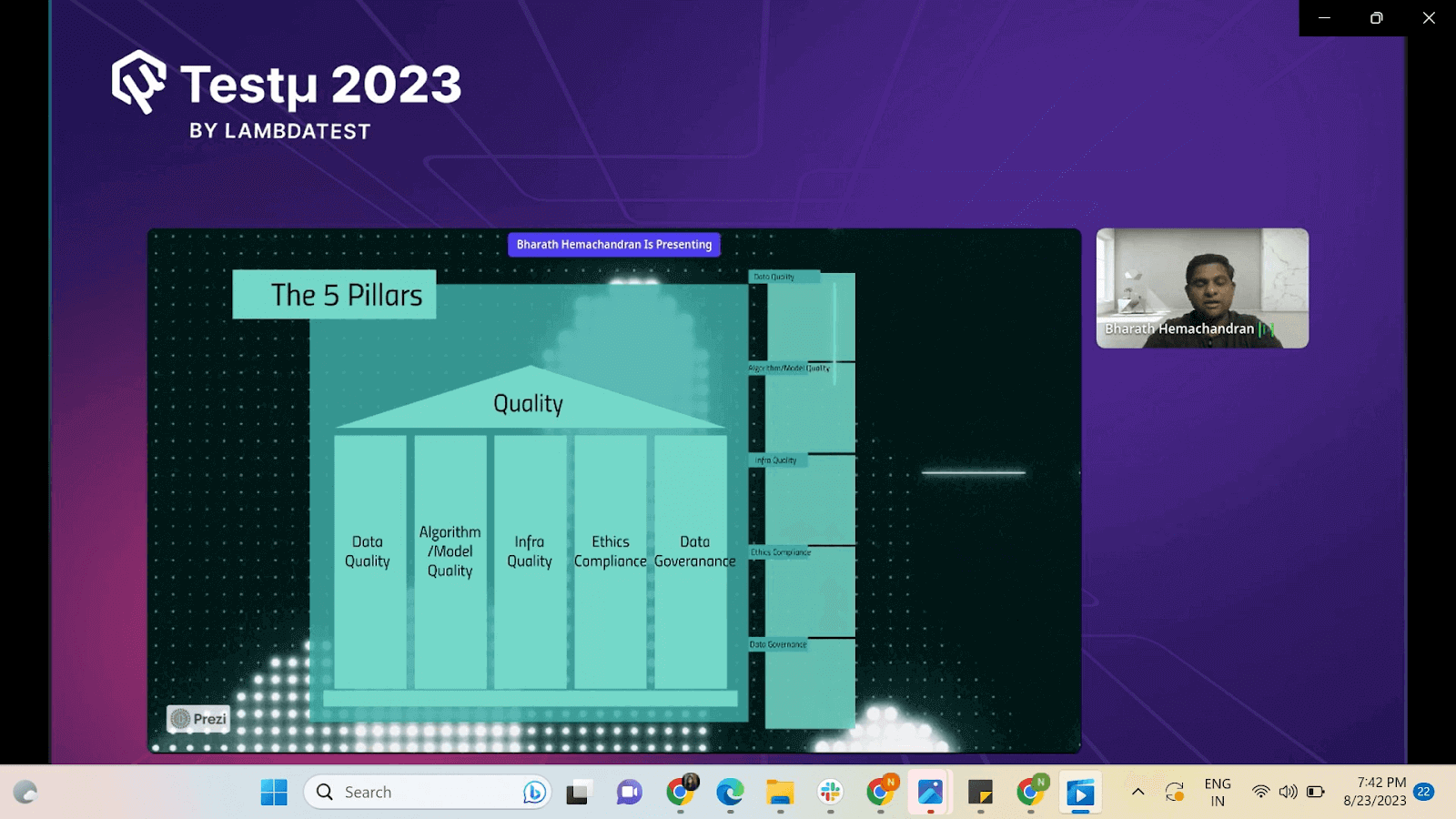

The framework covers five key pillars: data quality, model quality, infrastructure quality, compliance and ethics, and data governance. While discussing the significance of each pillar, he provided real-life examples and practical strategies for implementing effective quality measures in AI projects.

About the Speaker

Bharath Hemachandran currently works at Thoughtworks as a Quality Analyst and Principal Consultant. He has 16 years of experience in the software industry in roles ranging from developer to IT Head of a real estate company. He loves to look at technology with a business mindset and use it to solve real-world problems. His passions include researching the use of Generative AI in software development and blogging.

If you couldn’t catch all the sessions live, don’t worry! You can access the recordings at your convenience by visiting the TestMu AI YouTube Channel.

What Does Quality Look Like in Data & AI?

Getting started with the session, Bharath talked about what quality looks like and introduced a five-pillar framework to explain it. He highlighted the challenge of determining what constitutes a high-quality system in data and AI, where traditional criteria like performance and security might not be sufficient.

Bharath suggested that simply meeting functional and cross-functional requirements is not enough to assess quality in these systems. The goal is to find a way to distinguish well-defined systems from poorly defined ones from the perspective of AI and data. To support his view, he shared five use cases in which systems met their cross-functional requirements but were still considered failures:

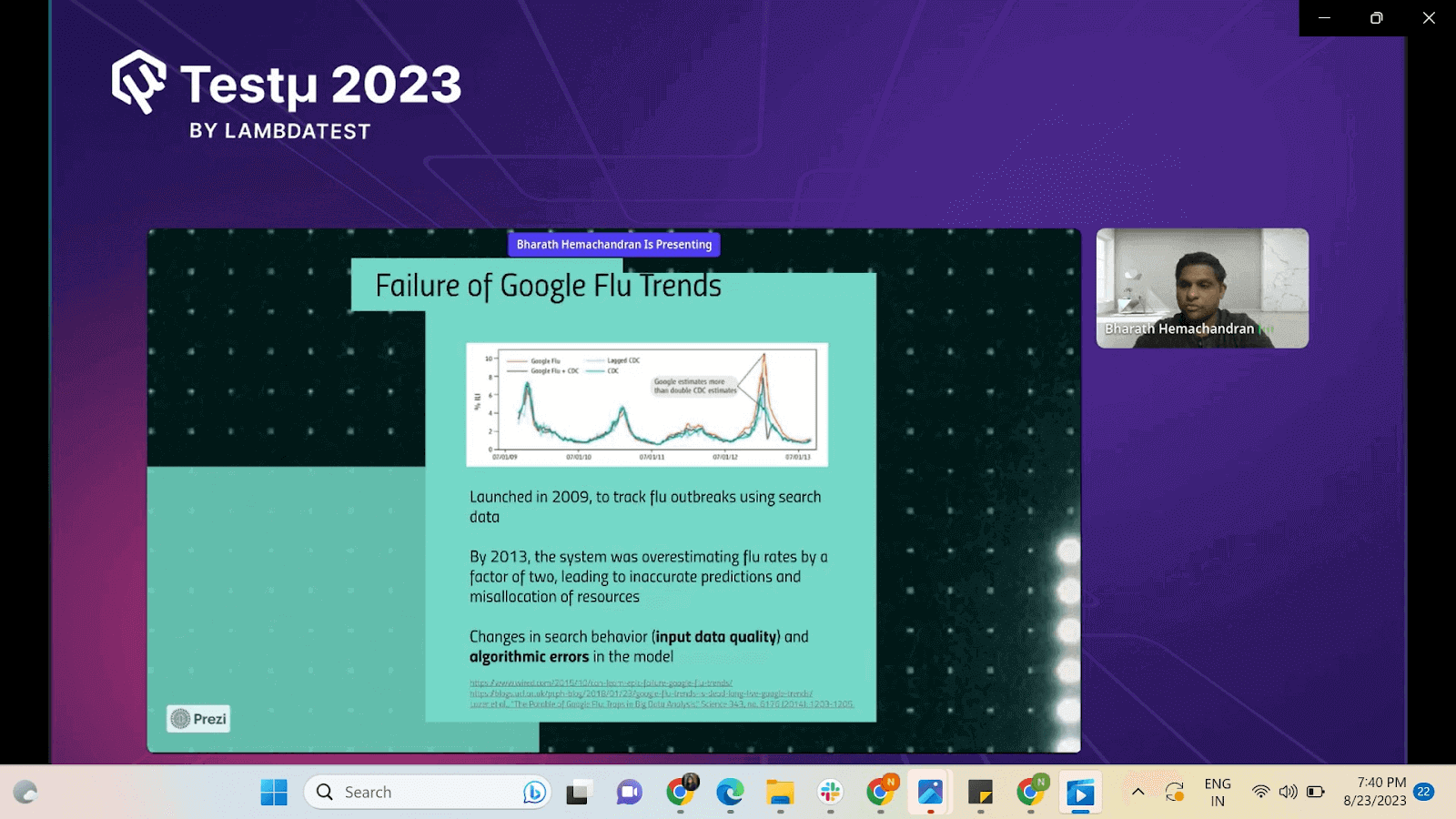

- Google Flu Trends: A data quality issue. Bad or incorrect data caused the system to fail and produce incorrect predictions.

- Apple Card: Algorithmic flaws caused gender bias, even though gender was not an input to its credit decisions.

- Equifax Data Breach: A single unpatched vulnerability caused a massive breach, showing that the quality of the infrastructure supporting a system is just as important as data quality.

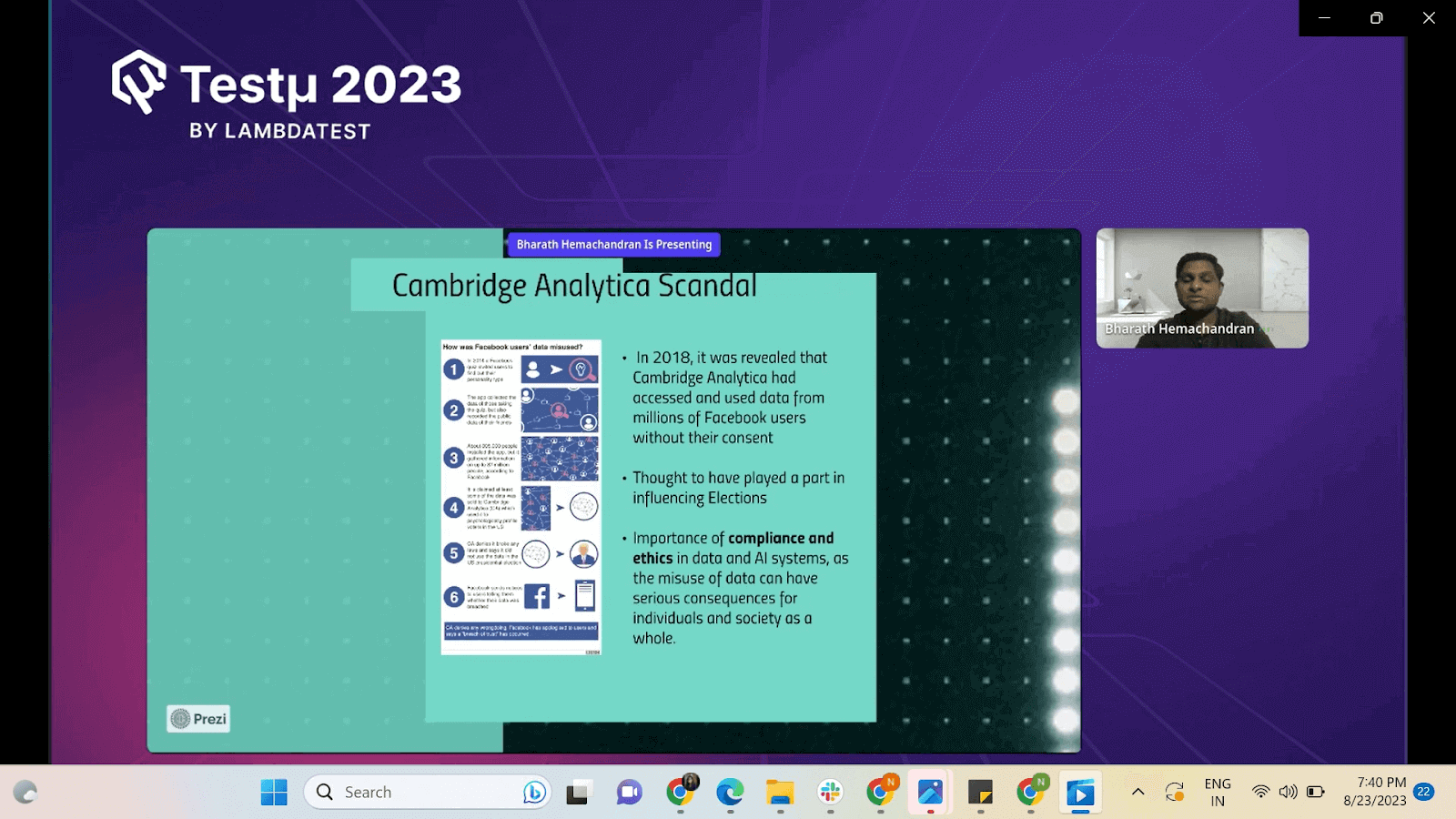

- Cambridge Analytica Scandal: Misuse of data caused the failure, highlighting the importance of compliance and ethics in data and AI systems.

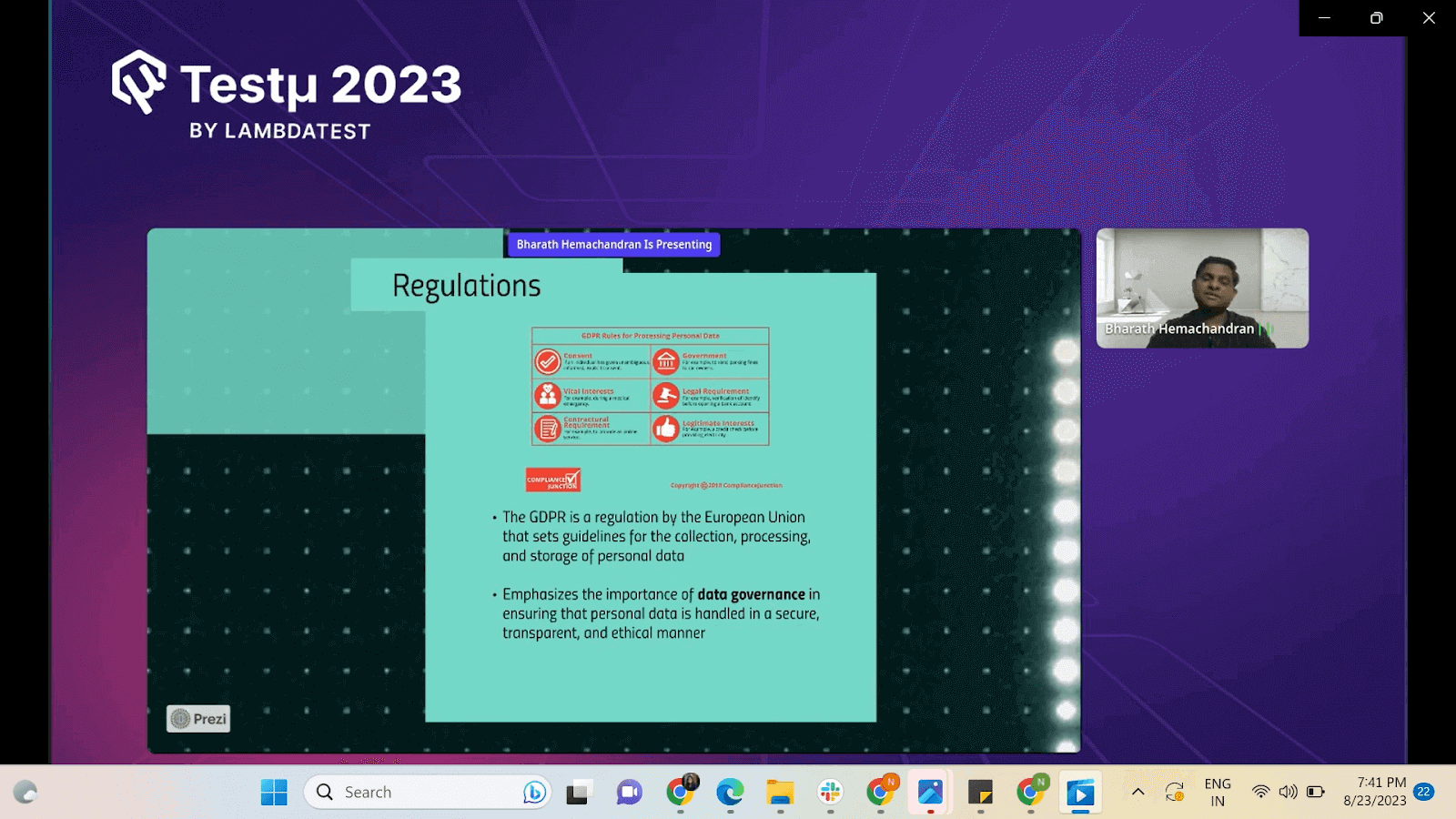

- Regulations: External factors such as regulations imposed by governments and regulatory bodies on different industries, like GDPR and HIPAA, highlight the importance of data governance for a system.

So, in addition to functional and cross-functional requirements, you need to have:

- Good data quality

- Guarding against data shifts or user behavior changes

- Explainable and functional data models

- Secure and well-governed infrastructure

- Compliance with ethical standards

- Conformance to data governance and industry regulations
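Guarding against data shifts can be partly automated by comparing the distribution of a feature in production with its distribution in the training data. The sketch below uses the Population Stability Index (PSI), a widely used drift score; the choice of PSI, the bin edges, the sample values, and the 0.2 alert threshold are all illustrative assumptions rather than anything prescribed in the session.

```python
import math
from bisect import bisect_right

def bin_shares(values, edges):
    """Return the share of values falling into each bin defined by sorted edges."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    total = len(values)
    # A small floor avoids log(0) when a bin is empty in one of the samples.
    return [max(c / total, 1e-6) for c in counts]

def population_stability_index(expected, actual, edges):
    """PSI = sum((a - e) * ln(a / e)) over bins; larger values mean more drift."""
    e_shares = bin_shares(expected, edges)
    a_shares = bin_shares(actual, edges)
    return sum((a - e) * math.log(a / e) for e, a in zip(e_shares, a_shares))

# Hypothetical feature values: training data vs. recent production traffic.
training = [20, 22, 25, 30, 31, 35, 40, 42, 45, 50]
production = [45, 48, 50, 52, 55, 58, 60, 61, 63, 65]
edges = [30, 40, 50]  # bins: <30, [30, 40), [40, 50), >=50

psi = population_stability_index(training, production, edges)
if psi > 0.2:  # assumed alert threshold; tune it per feature
    print(f"Drift detected: PSI={psi:.2f}")
```

Identical distributions score exactly zero, so the check can run on a schedule and alert only when a feature moves meaningfully away from what the model was trained on.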

Master the art of creating value within commercial interests through data regulation and governance. Join Bharath Hemachandran to delve into the significance of GDPR in ensuring robust data security, access, and authentication.

— LambdaTest (@testmuai) August 22, 2023

5-Pillar Model for the Quality of Data and AI Systems

Drawing on his experience in software testing and quality assurance, Bharath developed a five-pillar model to define quality for AI systems. He explained each pillar in depth as follows:

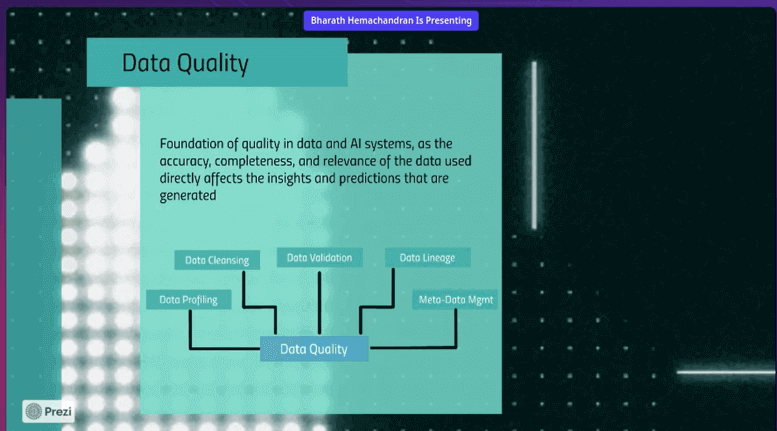

Pillar 1: Data Quality

Data quality is essential for any system to work well. This means the data should be fresh, complete, accurate, and consistent. Bharath walked us through various aspects of data quality:

- Data Profiling: Profiling data involves analyzing its characteristics, which assists in measuring data quality. Tools like DQ and Great Expectations are recommended.

- Data Cleansing: Anonymizing data, avoiding exposure of PII (Personally Identifiable Information), and ensuring data validity are crucial for maintaining quality.

- Tracking Data Lineage: Understanding data sources, transformations, and usage is vital. He highlighted metadata management tools for maintaining data lineage.

- Tool Frameworks: Tools from the Apache ecosystem and other data quality frameworks are available for addressing these aspects. Deequ, a data quality library built on Apache Spark, is one good option.
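Tools such as Great Expectations and Deequ let you declare checks like completeness, uniqueness, and value ranges against real datasets. The dependency-free sketch below only illustrates the underlying idea of profiling columns and asserting expectations on the profile; it is not the API of either tool, and the column names (`customer_id`, `age`) and rules are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class ColumnProfile:
    column: str
    completeness: float  # share of non-null values
    distinct: int        # number of distinct non-null values
    minimum: object
    maximum: object

def profile_column(rows, column):
    """Compute a basic profile for one column of a list-of-dicts dataset."""
    values = [r.get(column) for r in rows]
    present = [v for v in values if v is not None]
    return ColumnProfile(
        column=column,
        completeness=len(present) / len(values) if values else 0.0,
        distinct=len(set(present)),
        minimum=min(present) if present else None,
        maximum=max(present) if present else None,
    )

def check_expectations(rows):
    """Hypothetical quality rules for this example dataset; returns a list of failures."""
    failures = []
    ids = profile_column(rows, "customer_id")
    if ids.completeness < 1.0:
        failures.append("customer_id has missing values")
    if ids.distinct != len(rows):
        failures.append("customer_id is not unique")
    age = profile_column(rows, "age")
    if age.minimum is not None and (age.minimum < 0 or age.maximum > 120):
        failures.append("age outside expected range 0-120")
    return failures

rows = [
    {"customer_id": 1, "age": 34},
    {"customer_id": 2, "age": None},
    {"customer_id": 2, "age": 150},
]
for problem in check_expectations(rows):
    print("FAILED:", problem)
```

Keeping the profile separate from the rules means the same profile can feed dashboards for monitoring while the rules act as a gate in a pipeline.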

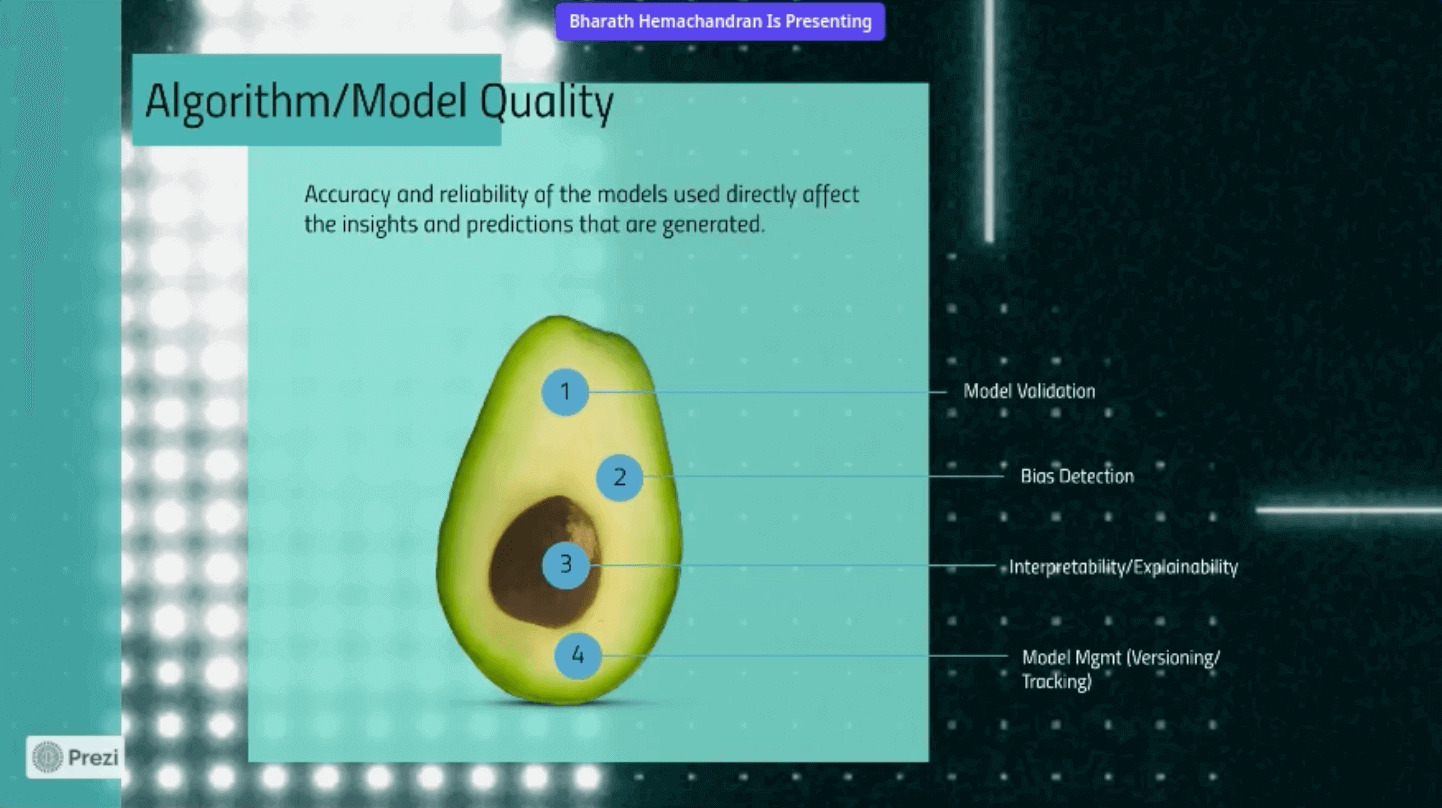

Pillar 2: Algorithm/Model Quality

Bharath explained that algorithms play a major role in determining the accuracy of these models, which in turn affects the predictions they generate.

- Model Validation: Ensuring the quality of AI algorithms or models involves collaboration with data scientists, ML engineers, and others.

- Bias Testing: Detecting and mitigating bias in AI models is important. Tools like IBM AI Fairness 360 help with bias testing to ensure fairness.

- Interpretability and Explainability: Understanding how the model works, how it changes over time, and how it affects results is crucial for success.

- Data Quality Impact: The quality of training and testing data directly influences model performance. Poor data quality can lead to unexpected results.

Each of these aspects (model validation, bias detection, explainability, and data quality) maps directly to practical testing strategies. Our guide on testing AI applications covers how to implement these checks across the AI development lifecycle.
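Bias testing often starts with simple group-fairness metrics. AI Fairness 360 includes a disparate impact metric: the favorable-outcome rate of an unprivileged group divided by that of a privileged group. The sketch below computes that ratio by hand rather than through the AIF360 API; the group labels, the decisions, and the 0.8 cutoff (the commonly cited "four-fifths rule") are assumptions for illustration.

```python
def favorable_rate(decisions, group):
    """Share of applicants in `group` who received the favorable outcome."""
    outcomes = [approved for g, approved in decisions if g == group]
    return sum(outcomes) / len(outcomes)

def disparate_impact(decisions, unprivileged, privileged):
    """Ratio of favorable rates; 1.0 means parity, below 1.0 disadvantages `unprivileged`."""
    return favorable_rate(decisions, unprivileged) / favorable_rate(decisions, privileged)

# Hypothetical (group, approved) pairs produced by a credit model under test.
decisions = [
    ("A", True), ("A", True), ("A", True), ("A", False),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

ratio = disparate_impact(decisions, unprivileged="B", privileged="A")
print(f"Disparate impact: {ratio:.2f}")
if ratio < 0.8:  # assumed threshold based on the four-fifths rule
    print("Potential bias: investigate features correlated with the group")
```

Because the metric only looks at outcomes, it can flag bias even when the sensitive attribute is never a model input, which is the situation described in the Apple Card example.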

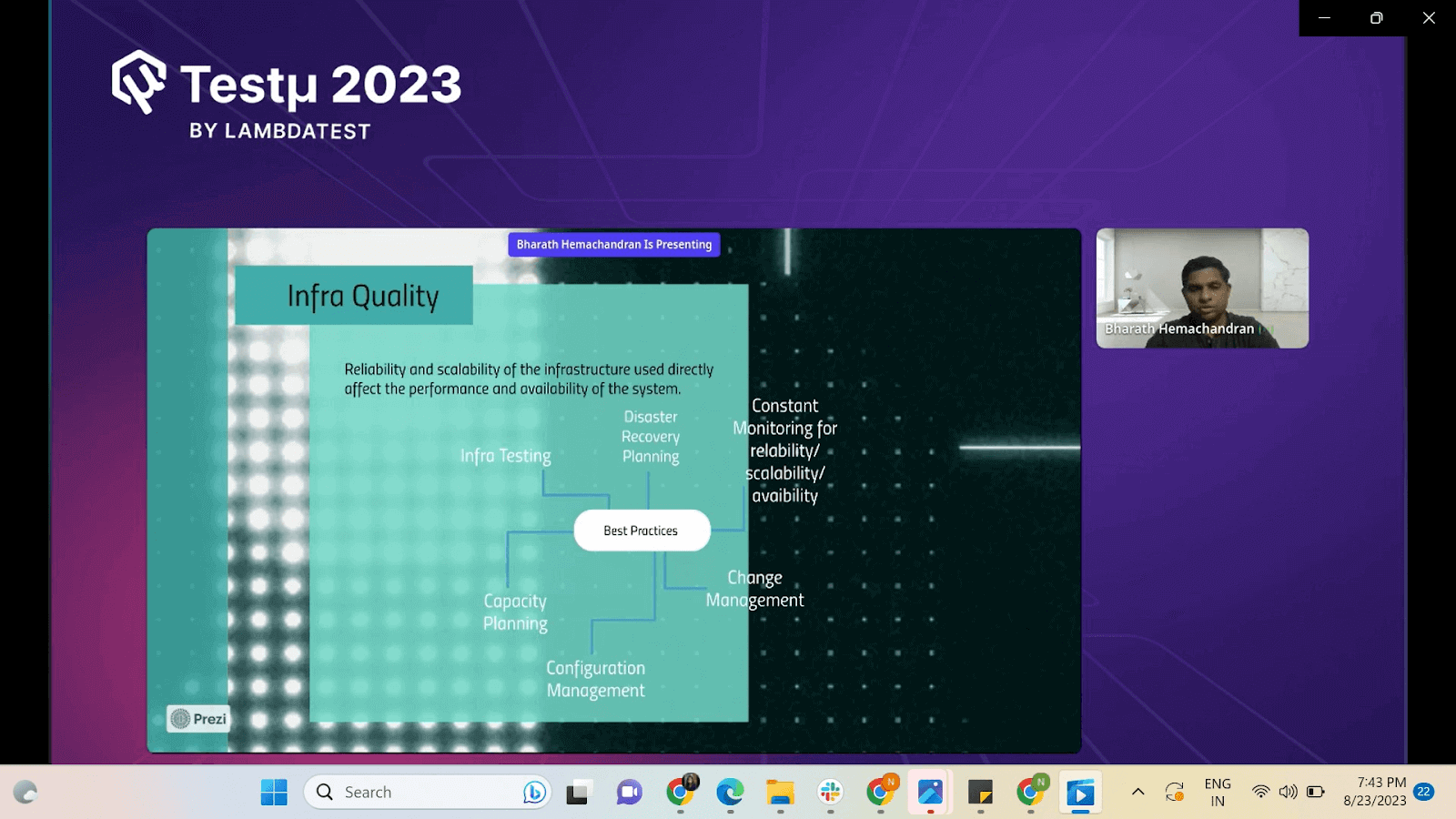

Pillar 3: Infrastructure Quality

Infrastructure quality directly affects the performance and availability of the system. Bharath broke it down into the following parts:

- Infrastructure Testing: Maintaining infrastructure quality involves thorough testing to ensure optimal performance of systems and servers.

- Configuration Testing: Ensuring configuration consistency to avoid dependency issues and unpredictable performance.

- Continuous Monitoring: Regularly tracking the quality of infrastructure over time and adapting to changes is essential.
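Configuration testing can start as simply as diffing the settings an environment actually runs with against a reviewed baseline. The sketch assumes configurations are available as flat dictionaries; the keys and values shown are hypothetical.

```python
def config_drift(baseline, actual):
    """Return {key: (expected, found)} for every setting that differs from the baseline."""
    drift = {}
    for key in baseline.keys() | actual.keys():
        expected, found = baseline.get(key), actual.get(key)
        if expected != found:
            drift[key] = (expected, found)
    return drift

# Hypothetical settings for a model-serving environment.
baseline = {"python": "3.11", "numpy": "1.26.4", "workers": 4, "gpu": True}
staging = {"python": "3.11", "numpy": "2.0.0", "workers": 4}

for key, (expected, found) in sorted(config_drift(baseline, staging).items()):
    print(f"{key}: expected {expected!r}, found {found!r}")
```

Running the same check in CI and on a schedule against production also serves the continuous-monitoring point. Treating a missing key as `None` keeps the sketch short, but it cannot tell an absent setting from one explicitly set to `None`.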

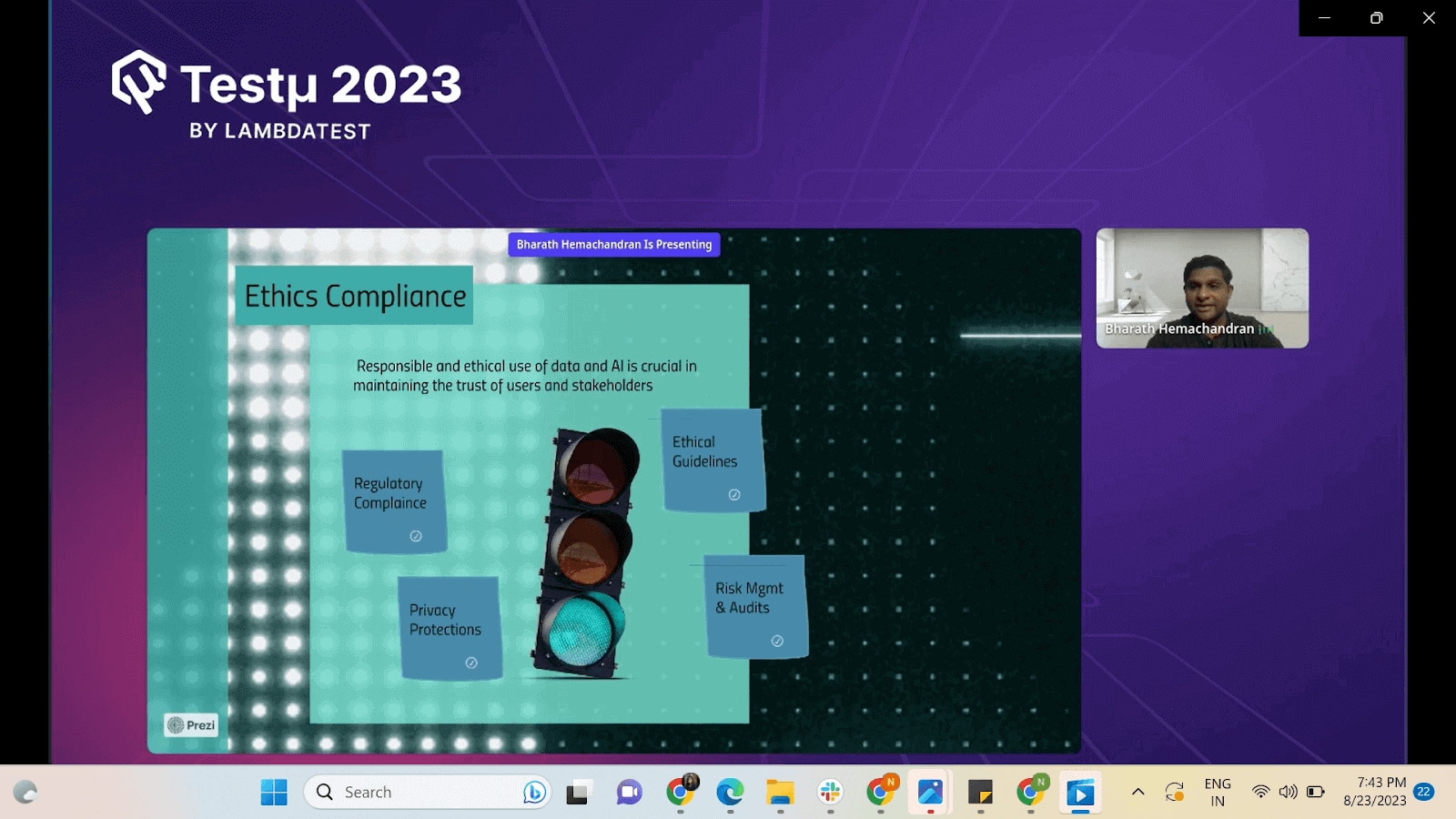

Pillar 4: Ethical Compliance

While many people assume that ethical compliance doesn’t play a major role in determining quality, Bharath explained its importance in maintaining the trust of customers and stakeholders.

- Ethical Considerations: Addressing ethical concerns is crucial, considering the impact of systems on users, values, and potential biases.

- Guidelines and Guardrails: Setting ethical guidelines and guardrails, such as respecting privacy, controlling how client data is used, and defining acceptable uses of AI models.

- Risk Management and Audits: Employing third-party audits to assess compliance and risk management, ensuring adherence to ethical guidelines.

- Regulatory Compliance: Ensuring data security, privacy, and regulatory compliance to avoid exposing sensitive data or violating regulations.
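One basic technical guardrail against exposing sensitive data is to redact obvious PII before text leaves a system, for example before it is logged or sent to a model. The regular expressions below are simplified assumptions that only catch common email formats and 10-digit phone numbers; production systems generally need broader, locale-aware detection.

```python
import re

# Simplified patterns (assumptions): emails and 10-digit phone numbers like 555-123-4567.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]?\d{3}[-.\s]?\d{4}\b"),
}

def redact(text):
    """Replace each PII match with a placeholder such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text

print(redact("Contact jane.doe@example.com or 555-123-4567 about the claim."))
```

Applying redaction at a single choke point (a logging filter or an API gateway) is easier to audit than scattering it across services.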

With the rise of AI in testing, it’s crucial to stay competitive by upskilling or polishing your skill sets. The KaneAI Certification proves your hands-on AI testing skills and positions you as a future-ready, high-value QA professional.

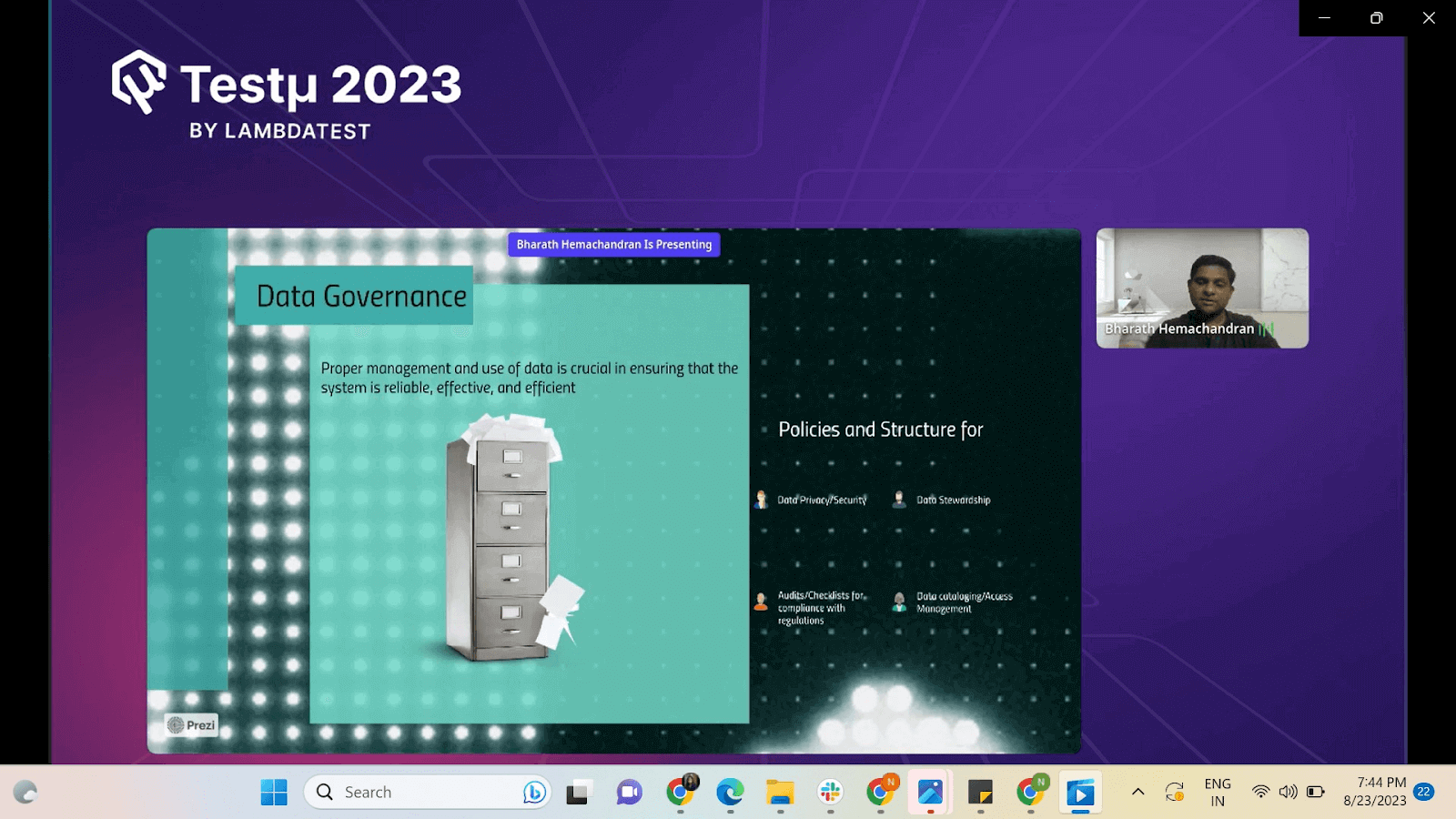

Pillar 5: Data Governance

Last but not least, Bharath highlighted the significance of Data Governance when considering the quality of AI systems. He further explained the aspects as follows:

- Data Governance Importance: Data governance combines technical and functional aspects to ensure proper data management and usage.

- Service Level Objectives: Defining service level objectives and policies related to data privacy, security, and authorization.

- Stewardship: Appointing stewards for data sources to ensure accountability and proper usage guidance.

- Audits and Checklists: Employing audits and checklists to validate compliance with established policies.

- Data Cataloging and Access Management: Proper cataloging and access management tools enforce policy adherence and control data usage.
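Cataloging and access management become enforceable when each dataset carries a classification and a steward, and every access decision is checked against policy and logged for later audits. The sketch below is a minimal illustration under those assumptions; the catalog entries, role names, and clearance levels are hypothetical.

```python
from dataclasses import dataclass, field

LEVELS = {"public": 0, "internal": 1, "confidential": 2, "restricted": 3}

@dataclass
class Dataset:
    name: str
    classification: str
    steward: str  # person accountable for the dataset

@dataclass
class AccessPolicy:
    clearance: dict  # role -> highest classification the role may read
    audit_log: list = field(default_factory=list)

    def can_read(self, role, dataset):
        """Allow access only if the role's clearance covers the dataset's classification."""
        # Unknown roles default to public-only access.
        allowed = LEVELS[self.clearance.get(role, "public")] >= LEVELS[dataset.classification]
        # Record every decision so audits can check policy adherence later.
        self.audit_log.append((role, dataset.name, "ALLOW" if allowed else "DENY"))
        return allowed

catalog = [
    Dataset("web_traffic", "internal", steward="analytics-lead"),
    Dataset("patient_records", "restricted", steward="privacy-officer"),
]
policy = AccessPolicy(clearance={"data_scientist": "confidential", "dpo": "restricted"})

for ds in catalog:
    print(ds.name, policy.can_read("data_scientist", ds))
```

The audit log gives the audits-and-checklists step concrete evidence to review, and the steward field tells reviewers whom to ask when a denial or grant looks wrong.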

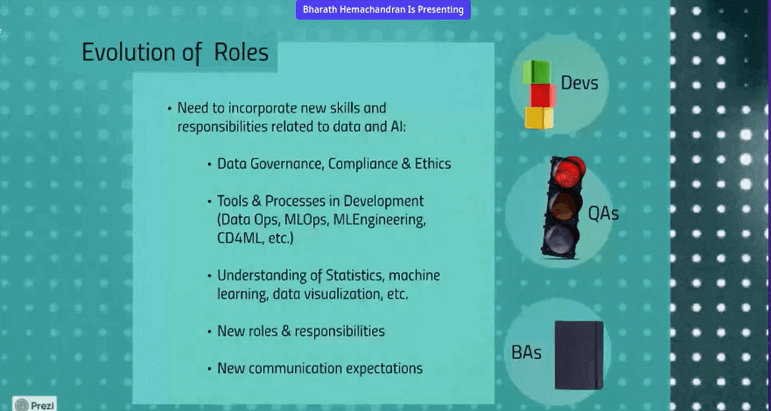

Impact of Quality on Roles

While discussing quality, Bharath highlighted a significant change in roles within the tech landscape, particularly for developers, QAs, and BAs. He emphasized that roles are no longer limited to traditional developers, QAs, and project managers. With the rise of data and AI, new roles have emerged, including data professionals like data scientists, data engineers, and data product managers.

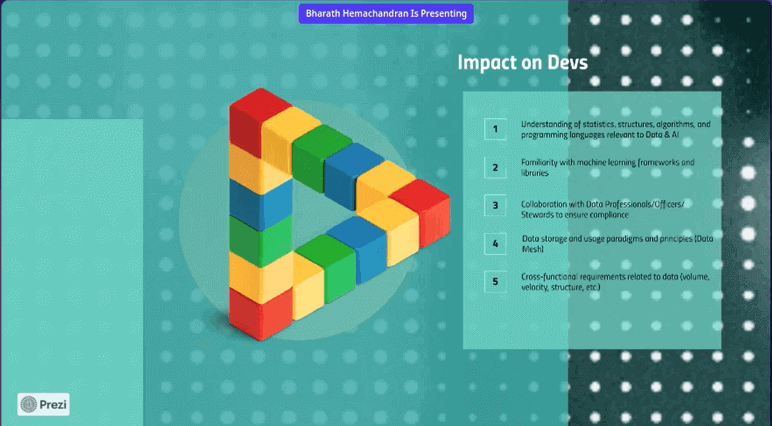

Impact on Developer Roles

Bharath explained that developers today go beyond traditional coding and work with data specialists to understand modern storage and processing techniques. They run cross-functional tests, explain the rationale behind data decisions, and ensure data validity to build systems that align with the five quality pillars.

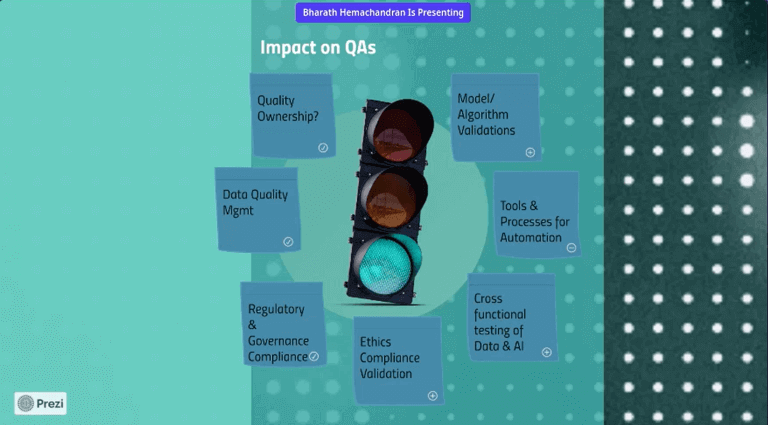

Impact on QA Roles

He discussed how QA roles have expanded beyond functional testing to include cross-functional and data platform evaluations. Today, QA engineers detect bias, verify ethical usage, and validate data quality to ensure compliance. By bridging technical and non-technical teams, they advocate for a holistic approach to quality.

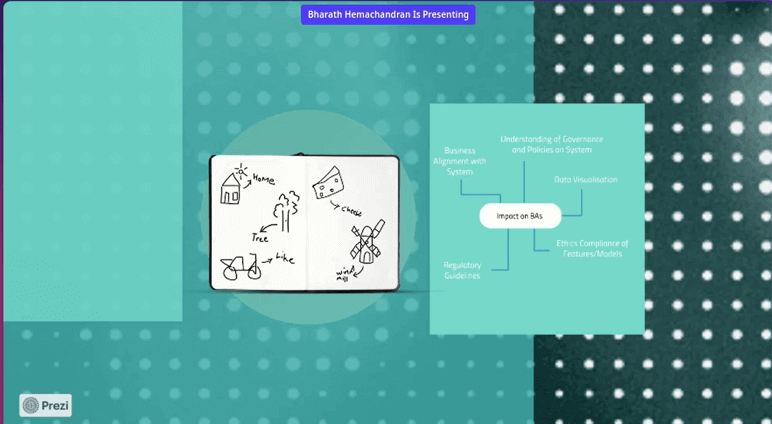

Impact on Business Analyst Roles

While discussing the impact of quality on BA roles, Bharath mentioned that BAs play a pivotal role in understanding ethical data usage and meeting regulatory demands. They communicate user needs, collaborate with QAs on compliance, and help shape systems that adhere to the five pillars of quality.

As the session approached its end, Bharath concluded by discussing the future of AI and data. He suggested that everyone concentrate on their areas of expertise, remain open to shifts in their skill sets, and build a comprehensive understanding of compliance, ethics, security, and privacy to stay relevant in the digital landscape. He recommended adapting to these changes and staying well-equipped for this new era of technology.

Time for Some Q&A

- How can we achieve the required traceability between testing and requirements?

Bharath provided a concise approach to ensuring traceability between testing and requirements. He outlined five key considerations: regulatory compliance, ethical implications, infrastructure suitability, explainability of models, and comprehensive definitions of ‘done’. Addressing these considerations helps ensure that testing matches what is actually required.
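One lightweight way to keep this traceability is to tag each requirement with the quality concerns it must satisfy, link every test to a requirement and concern, and flag any pair that no test covers. The requirement IDs, concern names, and test names below are hypothetical.

```python
# Hypothetical requirements, each tagged with the quality concerns it must satisfy.
requirements = {
    "REQ-1": {"regulatory", "explainability"},
    "REQ-2": {"ethics"},
    "REQ-3": {"infrastructure"},
}

# Hypothetical tests, each declaring which requirement and concern it verifies.
tests = [
    ("test_gdpr_erasure", "REQ-1", "regulatory"),
    ("test_feature_attributions", "REQ-1", "explainability"),
    ("test_latency_budget", "REQ-3", "infrastructure"),
]

def coverage_gaps(requirements, tests):
    """Return (requirement, concern) pairs that no test covers, sorted for stable output."""
    covered = {(req, concern) for _, req, concern in tests}
    return sorted(
        (req, concern)
        for req, concerns in requirements.items()
        for concern in concerns
        if (req, concern) not in covered
    )

for req, concern in coverage_gaps(requirements, tests):
    print(f"{req} is missing a test for: {concern}")
```

Treating an empty gap list as part of the definition of done is one way to make these considerations checkable rather than implicit.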

- What are some of the most common challenges that arise when applying traditional software quality methodologies to data and AI systems?

Bharath described several challenges in applying traditional software quality methodologies to data and AI systems. Shifting from a tester-centric approach to one where all stakeholders share ownership of quality is crucial. Addressing governance and ethical concerns upfront is essential, as these can’t be changed later. Choosing the right approach without overcomplicating solutions is important. Data quality must be a priority, even for seemingly simple problems. Effective communication with new roles like data scientists and analysts is key.

- How do you see the interplay between model quality and overall system quality, and what strategies can be employed to ensure both are maintained?

Bharath answered this question by discussing how model quality affects the overall system. He mentioned three aspects of model quality: the algorithms used, the quality of the training data, and the system’s explainability. To maintain both model and system quality, Bharath recommended using oracles to test and track model versions over time. This helps keep models reliable and makes any changes understandable.

Bharath defines model quality using these essential steps: quality of algorithms, the kind of data to train the model, the explainability of your system, creating deterministic aspects, and using oracles to test and track the model version you use.

— LambdaTest (@testmuai) August 22, 2023
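In software testing, an oracle is the mechanism used to decide whether an output is correct. For ML models, one simple form is a curated golden set of inputs with expected outputs that every new model version must pass, with results recorded per version so changes can be traced. The two model functions, the golden set, and the 95% pass threshold below are hypothetical stand-ins for a real classifier and its acceptance criteria.

```python
from typing import Callable, Dict, List, Tuple

GoldenCase = Tuple[str, str]  # (input text, expected label)

GOLDEN_SET: List[GoldenCase] = [
    ("refund not received", "billing"),
    ("app crashes on login", "bug"),
    ("how do I export data", "how-to"),
]

def evaluate(model: Callable[[str], str], golden: List[GoldenCase]) -> float:
    """Fraction of golden cases where the model output matches the expected label."""
    hits = sum(model(text) == expected for text, expected in golden)
    return hits / len(golden)

def model_v1(text: str) -> str:
    # Hypothetical keyword model standing in for a real classifier.
    if "refund" in text:
        return "billing"
    return "bug" if "crash" in text else "how-to"

def model_v2(text: str) -> str:
    # Hypothetical retrained model that has regressed on billing questions.
    return "bug" if "crash" in text else "how-to"

history: Dict[str, float] = {}
for version, model in {"v1": model_v1, "v2": model_v2}.items():
    history[version] = evaluate(model, GOLDEN_SET)
    status = "PASS" if history[version] >= 0.95 else "FAIL"
    print(f"{version}: pass rate={history[version]:.2f} {status}")
```

Because this oracle compares exact labels, it works best where the model's behavior has been made deterministic (for example, with fixed random seeds); the per-version history then shows exactly when a regression appeared.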

Got more questions? Drop them on the TestMu AI Community.
